A Beginner's Guide to Network Meta-Analysis - Dr Chris Noone

Based on Evidence Synthesis Ireland's video on YouTube.

Network meta-analysis supports decision-making by estimating effects across many interventions, combining direct and indirect evidence to identify which option is best for a given outcome.

Briefing

Network meta-analysis is positioned as a decision-making tool that can answer not only whether interventions work, but which specific option works best—by combining direct head-to-head trial evidence with indirect comparisons across a whole “network” of treatments. In psychology and other health fields where trials often compare different therapies against different control conditions, traditional pairwise meta-analysis tends to lump heterogeneous interventions together, inflating uncertainty and obscuring practical choices. Network meta-analysis instead keeps interventions and control groups distinct, estimates effects across many pairings, and can infer comparisons that never appeared in a single trial.

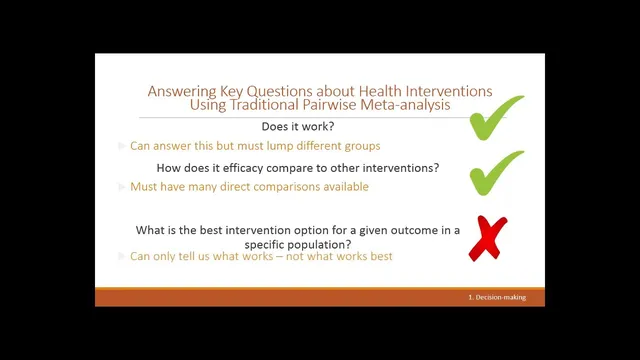

The talk starts with the limits of pairwise meta-analysis for complex behavioral interventions. When social anxiety treatments include multiple therapy types (e.g., cognitive behavioral therapy, cognitive therapy, social skills training) and trials use varied control groups, pooling them into a single “psychological intervention” effect violates the assumption that the trials estimate a common effect. Different types of control group likewise produce different effect sizes, which increases heterogeneity and makes the pooled result less useful for clinicians and policymakers. Pairwise meta-analysis can indicate whether treatments generally outperform controls, and can sometimes compare two interventions directly when enough head-to-head trials exist, but it cannot reliably identify the best option among many alternatives.

Network meta-analysis addresses these gaps by building a network where each treatment (and often each control) is a node, and the thickness of connections reflects how many trials compare those nodes directly. With this structure, analysts can compute direct effects from head-to-head studies, derive indirect effects when two treatments share a common comparator, and then integrate both sources into a single network estimate. The method also supports ranking interventions using multiple ranking approaches, producing a practical output for funding and guideline decisions.
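The direct, indirect, and combined estimates described above can be illustrated with a minimal Python sketch for a three-treatment loop (A, B, and a common comparator C). This is a simplified fixed-effect illustration, not the method used in the talk: a full NMA fits all comparisons in the network simultaneously, usually with random effects. The function names and all effect sizes below are hypothetical.

```python
import math

def indirect_estimate(d_ac, se_ac, d_bc, se_bc):
    """Indirect A-vs-B effect obtained through the common comparator C.

    The point estimate is the difference of the two direct effects;
    the variances add because the two sets of trials are independent.
    """
    d_ab = d_ac - d_bc
    se_ab = math.sqrt(se_ac ** 2 + se_bc ** 2)
    return d_ab, se_ab

def combine_inverse_variance(d_direct, se_direct, d_indirect, se_indirect):
    """Pool direct and indirect evidence with inverse-variance weights."""
    w_direct = 1.0 / se_direct ** 2
    w_indirect = 1.0 / se_indirect ** 2
    pooled = (w_direct * d_direct + w_indirect * d_indirect) / (w_direct + w_indirect)
    pooled_se = math.sqrt(1.0 / (w_direct + w_indirect))
    return pooled, pooled_se

# Hypothetical standardized mean differences (negative = fewer symptoms).
d_ind, se_ind = indirect_estimate(d_ac=-0.80, se_ac=0.15, d_bc=-0.50, se_bc=0.20)
d_net, se_net = combine_inverse_variance(-0.20, 0.25, d_ind, se_ind)

print(f"indirect A vs B: {d_ind:.2f} (SE {se_ind:.2f})")
print(f"network  A vs B: {d_net:.2f} (SE {se_net:.2f})")
```

The pooled standard error is smaller than either input, which is the sense in which indirect evidence adds precision when the transitivity and consistency assumptions hold.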

Key validity assumptions receive special attention. Homogeneity in network meta-analysis is reframed through transitivity and consistency: transitivity requires that effect modifiers are similarly distributed across comparisons (for example, participant characteristics or delivery contexts should not systematically differ between parts of the network), while consistency checks whether indirect and direct evidence agree for the same comparison. Disconnected networks are another practical risk: if some interventions are only compared within a subgraph that never links back to the rest of the network (such links usually run through a shared control), there is no path of evidence between the two parts, so the network becomes harder to analyze and may require complex handling.
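One simple way to examine consistency for a single comparison is to test whether the direct and indirect estimates differ by more than chance would explain. The sketch below assumes the two estimates are independent and approximately normal; it is one common approach rather than the specific check shown in the talk, and the function name and numbers are hypothetical.

```python
import math

def inconsistency_test(d_direct, se_direct, d_indirect, se_indirect):
    """Compare direct and indirect estimates of the same contrast.

    Returns the difference, its z statistic, and a two-sided p-value
    from the standard normal distribution.
    """
    difference = d_direct - d_indirect
    se_difference = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = difference / se_difference
    # Two-sided normal p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return difference, z, p_value

# Hypothetical estimates for the same A-vs-B comparison.
difference, z, p_value = inconsistency_test(-0.20, 0.25, -0.30, 0.25)
print(f"difference = {difference:.2f}, z = {z:.2f}, p = {p_value:.2f}")
```

A large p-value does not prove consistency, since such tests often have low power; it only means the data show no clear disagreement. Transitivity, by contrast, cannot be tested this way and has to be judged from the characteristics of the included trials.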

Because many health interventions are “complex,” the talk emphasizes additional considerations. Control conditions such as “usual care” may not be truly comparable across trials, and bias in complex interventions can propagate through the network, affecting estimates beyond where it originates. The ideal scenario includes both direct and indirect evidence, reliable and consistent outcome measurement (often supported by standard outcome sets), transparent reporting, and—where possible—individual participant data sharing.

To deal with complexity, the presentation highlights component-based network meta-analysis. Instead of treating each intervention as a single package, interventions can be decomposed into clinically meaningful components (or dismantled into essential techniques), coded using behavior change technique frameworks and related taxonomies, and then modeled so that component combinations become nodes. This approach can identify which components are effective and which appear inert, including potential interactions between components.
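The idea of estimating component effects can be sketched with an additive model, in which the effect of an intervention package against control is assumed to equal the sum of the effects of its components. The code below fits that model by weighted least squares in plain Python. It is a simplified illustration under stated assumptions (every trial compares a package with the same control, fixed effects, no interaction terms), and the component labels, effects, and standard errors are hypothetical.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gauss-Jordan elimination with pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("component effects are not identifiable from these trials")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]

def fit_additive_components(design, effects, std_errors):
    """Weighted least squares estimate of one effect per component.

    design[i][j] is 1 if package i contains component j, else 0.
    """
    k = len(design[0])
    weights = [1.0 / se ** 2 for se in std_errors]
    xtwx = [[sum(w * row[i] * row[j] for w, row in zip(weights, design))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * row[i] * y for w, row, y in zip(weights, design, effects))
            for i in range(k)]
    return solve_linear_system(xtwx, xtwy)

components = ["component_a", "component_b", "component_c"]  # hypothetical labels
design = [
    [1, 0, 0],  # package containing a only
    [1, 1, 0],  # a + b
    [1, 0, 1],  # a + c
    [1, 1, 1],  # a + b + c
]
effects = [-0.20, -0.50, -0.20, -0.50]  # hypothetical effects vs control
std_errors = [0.20, 0.20, 0.20, 0.20]

estimates = fit_additive_components(design, effects, std_errors)
for name, value in zip(components, estimates):
    print(f"{name}: {value:+.2f}")
```

In this made-up data set, adding component_c never changes the effect, so its estimate is approximately zero, which is how a component would show up as apparently inert. An interaction between two components could be explored by adding a column that is 1 only when both are present, provided the network contains enough distinct packages to identify it.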

Finally, a step-by-step workflow is illustrated using a hypertension example comparing physical activity interventions with first-line anti-hypertensive pharmacological therapies. The process adapts systematic review planning via a PRISMA extension for network meta-analysis, broadens the intervention/comparator criteria, screens and selects studies to build a connected network, checks consistency, and reports both pairwise results and network estimates (including ranking outputs). The session closes with resources and training options and a forward look at methodological development, especially automation for component extraction and AI-assisted evidence updating. Statistical expertise remains necessary, even though user-friendly tools can support teaching and learning.
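The "connected network" step in this workflow can be checked mechanically once the included comparisons are listed. The sketch below treats each treatment or control as a node and each direct comparison as an edge, then uses a breadth-first search to find groups of nodes that are linked to one another. The treatment labels are hypothetical placeholders, not the studies from the hypertension example, and a multi-arm trial would need to be entered as one pair per contrast.

```python
from collections import defaultdict, deque

def connected_components(comparisons):
    """Group treatments into sets that are linked by direct comparisons.

    comparisons is an iterable of (treatment, treatment) pairs, one per
    direct comparison found in the included trials.
    """
    graph = defaultdict(set)
    for a, b in comparisons:
        graph[a].add(b)
        graph[b].add(a)

    seen = set()
    components = []
    for start in graph:
        if start in seen:
            continue
        # Breadth-first search from an unvisited node.
        component = {start}
        seen.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for neighbour in graph[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    component.add(neighbour)
                    queue.append(neighbour)
        components.append(component)
    return components

# Hypothetical comparisons: exercise trials and drug trials share no comparator.
trials = [("exercise", "usual care"), ("drug A", "placebo"), ("drug B", "placebo")]
before = connected_components(trials)
print(len(before), "separate sub-networks:", before)

# A single trial linking the two parts makes the network connected.
after = connected_components(trials + [("exercise", "placebo")])
print(len(after), "sub-network after adding a linking trial")
```

If more than one group is returned, treatments in different groups have no path of evidence between them, so broadening the eligibility criteria to capture a linking comparator is one option to consider.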

Cornell Notes

Network meta-analysis (NMA) is presented as a way to compare many interventions at once, using both direct head-to-head trials and indirect comparisons through shared comparators. It helps answer practical questions that pairwise meta-analysis often cannot—especially which intervention is best among multiple options—while also separating effects from different control groups. Validity depends on assumptions such as transitivity (effect modifiers are similarly distributed across comparisons) and consistency (direct and indirect evidence agree), and disconnected networks can complicate inference. For complex interventions, the talk highlights component-based NMA, which breaks interventions into coded components and models effective parts and interactions. A hypertension case study illustrates the workflow: broaden the search, build a connected network, check consistency, then report network estimates and rankings.

Why does pairwise meta-analysis struggle with behavioral interventions like social anxiety treatments?

How does NMA estimate comparisons that never appear in a single head-to-head trial?

What are transitivity and consistency, and why do they matter?

What does a “disconnected network” mean in NMA, and what causes it?

How can NMA handle complex interventions beyond treating each as a single package?

What changes in the workflow when moving from a standard systematic review to NMA?

Review Questions

- What specific assumptions must hold for indirect comparisons in NMA to be credible, and how do transitivity and consistency differ?

- In what ways can control groups undermine NMA if they are treated as interchangeable, and how does component-based NMA help address intervention complexity?

- Using the hypertension example, what steps would you follow to build a connected NMA network and decide what to report (pairwise vs network estimates vs rankings)?

Key Points

1. Network meta-analysis supports decision-making by estimating effects across many interventions, combining direct and indirect evidence to identify which option is best for a given outcome.

2. Pairwise meta-analysis can mislead when trials lump heterogeneous interventions together or use non-comparable control groups, increasing heterogeneity and obscuring practical choices.

3. NMA relies on transitivity (similar distribution of effect modifiers across comparisons) and consistency (agreement between direct and indirect evidence) for valid inference.

4. Disconnected networks arise when some interventions never connect to the rest of the network through shared comparators, making analysis more complex.

5. For complex interventions, control-group content and bias can meaningfully affect network results, and component-based NMA can identify effective intervention parts and interactions.

6. A practical NMA workflow includes broad PICO planning, connected network diagram evaluation, consistency checks, and reporting both pairwise and network estimates plus rankings.