A bigger brain for the Unitree G1- Dev w/ G1 Humanoid P.4

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Natural-language object grounding uses Moonream 2 to localize arbitrary targets, then converts image-space points into XY plus a depth-based Z delta for arm movement.

Briefing

A natural-language vision system paired with a depth-to-robot mapping pipeline is making the Unitree G1 more capable of seeking arbitrary objects—without relying on a fixed list of classes. The setup uses a vision-language model (VLM) to identify objects described in plain language, mark their image locations, and then convert those locations into XY positions and a depth-based Z estimate. Those 3D-ish coordinates feed an arm policy to move the robot toward targets like a “black robotic hand” and a “graphics card,” with the interface showing tracked points in real time.

The key practical takeaway is that object tracking is no longer limited to a small set of pre-trained categories. Instead, the VLM can interpret flexible descriptions (“red bottle of water,” “microwave,” or even abstract queries like “device to heat food”) and return either captions, bounding-style detections, or precise point annotations. In tests, point-based queries often outperform object detection for matching the intended target—for example, “red bottle of water” can correctly localize a red bottle even when object detection confuses it with a yellow one. This matters because the downstream arm control depends on accurate target localization; small perception errors can translate directly into wrong reach behavior.

Performance is still a proof-of-concept bottleneck rather than a fundamental limit. The arm motion is intentionally slow, but the perception loop is also constrained by low update rates—around 1 to 0.5 frames per second for point predictions—despite the Moonream 2 model running in roughly 150 milliseconds per inference. The next steps are therefore framed as optimization work to increase throughput, plus accuracy improvements for ambiguous detections (e.g., the system sometimes mistakes the intended hand for a tripod). A simple mitigation is proposed: add visual cues like colored tape around the hand so the VLM can disambiguate reliably.

Beyond reaching, the work also tackles a separate “side quest”: keeping SLAM and the occupancy grid usable when the head-mounted depth camera is tilted. With the camera aimed outward (to see countertops), the occupancy grid becomes saturated and the robot’s orientation in the map goes wrong. The fix is operational rather than magical: export an environment variable for the head tilt angle (set to 25°) and adjust the SLAM/occupancy calculations accordingly. The result is a noticeably improved occupancy grid, though remaining errors likely come from slight angle mismatch and hard-coded floor-height assumptions.

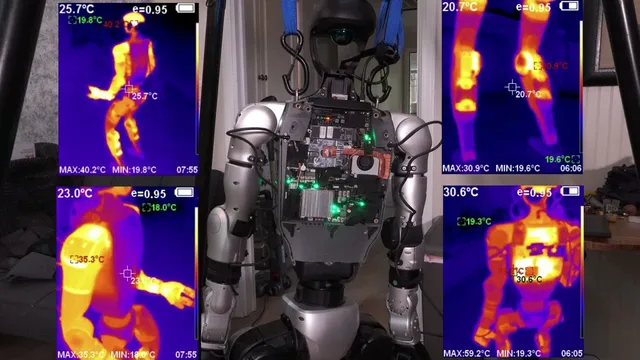

Hardware reliability and sensing geometry remain major constraints. A debugging detour revealed the right hand is effectively dead—thermals show it is cold, and swapping ports and testing configurations did not restore power. Attempts to use alternative “Inspire” hands are blocked by missing adapter hardware. Meanwhile, the camera’s head position creates a fundamental depth mismatch: the system estimates depth from the head camera, but the arm needs depth relative to the hand. That drives the camera dilemma—either add additional cameras closer to hand level (e.g., chest-mounted) or compensate algorithmically.

Looking forward, the plan is to improve arm control and likely incorporate inverse kinematics (or IK as a stepping stone) while acknowledging that reaching is not the same as navigating around obstacles. Path planning will be required to avoid “going through” barriers, and the discussion weighs real-world data versus simulation. The immediate goal is a better-looking, more reliable arm policy using the existing perception-to-coordinate pipeline, then iterating on camera strategy so the robot can both see objects and know where its hands are in space.

Cornell Notes

The system pairs a vision-language model with depth sensing to let a Unitree G1 search for objects described in natural language, then convert those detections into coordinates for arm movement. Moonream 2 supports captions, object detection, and point annotations; point queries often localize the intended target more accurately than bounding-style detection. The current setup is a proof of concept: arm motion is slow by design and perception updates run at roughly 0.5–1 FPS, leaving clear room for optimization. A separate effort shows SLAM/occupancy mapping can be repaired when the head tilt changes by exporting a tilt angle (25°) and adjusting calculations, though small angle errors still cause map drift. Hardware issues (a dead right hand) and camera geometry (head-based depth vs hand-relative depth) remain the biggest blockers.

How does natural-language object seeking work without a fixed class list?

Why do point annotations often beat object detection in this setup?

What’s the SLAM/occupancy-grid problem when the head camera tilts back, and how is it fixed?

What makes hand-relative depth hard when the depth camera sits on the head?

What hardware failure was discovered, and why did it matter?

Why is path planning separate from inverse kinematics for reaching?

Review Questions

- What outputs from Moonream 2 are used to derive coordinates for arm control, and how do point grounding and object detection differ in accuracy?

- Why does correcting the head tilt angle improve the occupancy grid, and what kinds of remaining errors might still appear even after setting the tilt to 25°?

- What sensing limitation arises from a head-mounted depth camera, and what two broad strategies are proposed to address it?

Key Points

- 1

Natural-language object grounding uses Moonream 2 to localize arbitrary targets, then converts image-space points into XY plus a depth-based Z delta for arm movement.

- 2

Point-based VLM queries can outperform object detection for disambiguating similar objects (e.g., red vs yellow bottles), which is crucial for accurate reaching.

- 3

Current system speed is limited by low perception update rates (about 0.5–1 FPS for point predictions) even though Moonream 2 inference is around 150 ms, so optimization is a clear next step.

- 4

SLAM/occupancy mapping can be repaired under head tilt changes by exporting a tilt-angle environment variable (set to 25°), though small angle errors and floor-height assumptions can still distort the map.

- 5

Camera placement creates a depth mismatch: head-based depth estimates don’t translate cleanly into hand-relative depth needed for grasping.

- 6

A dead right hand was confirmed via thermals (cold under thermal imaging) and port testing, preventing immediate right-arm grasping until power/hardware is fixed.

- 7

Reaching requires both arm control (potentially IK) and separate path planning to avoid moving through obstacles.