A pathway to more efficient generative models | Will Grathwohl | 2018 Summer Intern Open House

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Invertible generative modeling becomes more flexible when continuous-time dynamics replace discrete flow steps.

Briefing

Invertible generative models can become more expressive and potentially more efficient by switching from discrete “flow steps” to a continuous-time formulation that replaces Jacobian log-determinants with an integral of divergence. In standard normalizing flows, each transformation must be invertible and the model must compute the log determinant of the Jacobian at every step—requirements that push architectures toward simpler, computationally friendly transformations. That simplicity forces models to stack many steps (as in Glow) to gain expressiveness, which can increase parameter count and compute.

A continuous normalizing flow reframes the model as parameterizing a dynamical system. Instead of applying a sequence of discrete transformations from time 0 to a final time, the model treats the data as evolving under a learned vector field. The likelihood then follows from the change-of-variables formula: in the continuous limit, the sum of log determinants becomes an integral over time of the divergence of the vector field. This shift matters because computing log determinants of arbitrary Jacobians is expensive—typically scaling like n cubed after forming the Jacobian—and there is no efficient unbiased estimator in general. Divergence, by contrast, admits an efficient unbiased estimator using automatic differentiation: sample a Gaussian “probe” vector, use it to probe the Jacobian via automatic differentiation (effectively estimating the trace of the Jacobian), and the expectation recovers the divergence. That estimator can be plugged into the continuous likelihood calculation to yield an unbiased estimate of log-likelihood, enabling training with standard backpropagation tools.

The tradeoff is computational. Discrete flows apply a known sequence of transformations; continuous flows require numerically integrating an ordinary differential equation (ODE) to transform samples and to evaluate likelihood terms. Training becomes harder because gradients must backpropagate through the ODE solution. Work from the University of Toronto provides a method to compute the needed gradients by solving an augmented ODE system, leveraging decades of numerical methods for ODEs to make the approach tractable.

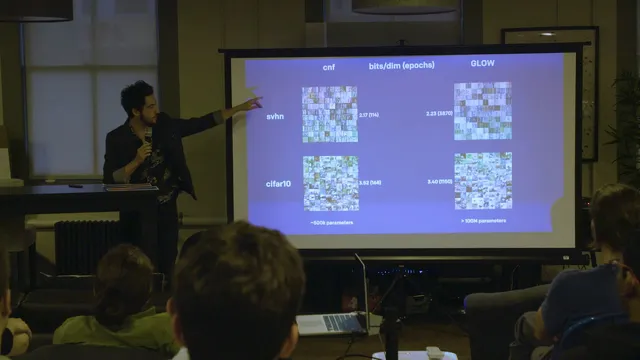

Empirically, continuous normalizing flows have been compared against Glow and RealNVP. The results reported are competitive with RealNVP, and in some datasets continuous normalizing flows even outperform Glow, though they have not fully beaten Glow yet. The main bottleneck is training time: continuous models currently take too long to train, limiting how large they can be made. A visualization example shows a learned gradient field warping digit images through time toward a Gaussian-like distribution; after integrating forward, the model can integrate backward to generate samples. The architecture described uses a single neural network that, at each time step, takes in the current image and the time value to parameterize the vector field, with the ODE integration performing the actual invertible transformation.

Overall, the approach offers a clear path to more flexible invertible generative models: use divergence-based likelihood estimation to avoid Jacobian log-determinant bottlenecks, then rely on ODE solvers and augmented-system gradient methods to keep training feasible. Remaining issues center on making training fast enough to scale model capacity and close the gap with the strongest discrete-flow baselines.

Cornell Notes

Continuous normalizing flows recast invertible generative modeling as a continuous-time dynamical system. The key technical change replaces the discrete sum of Jacobian log-determinants with an integral over time of the vector field’s divergence. Divergence can be estimated efficiently and unbiasedly using Gaussian probe vectors with automatic differentiation, avoiding the expensive Jacobian log-determinant computation. The remaining challenge is training: it requires numerically integrating an ODE and backpropagating through the ODE solution. An augmented ODE method from the University of Toronto enables gradient computation, making standard backprop feasible. Reported experiments show competitive results with RealNVP and sometimes better-than-Glow performance, with training speed as the main limiter.

Why do standard normalizing flows often rely on simpler transformations?

What changes when moving from discrete flows to continuous-time dynamics?

Why is divergence estimation computationally easier than Jacobian log-determinants?

How does training work when the model requires solving an ODE?

What empirical results were reported against Glow and RealNVP?

Review Questions

- How does the continuous-time change-of-variables formula transform the likelihood computation compared with discrete normalizing flows?

- What role do Gaussian probe vectors play in estimating divergence, and why does this avoid the Jacobian log-determinant bottleneck?

- What makes gradient-based training harder for continuous normalizing flows, and how does the augmented ODE method address it?

Key Points

- 1

Invertible generative modeling becomes more flexible when continuous-time dynamics replace discrete flow steps.

- 2

Continuous normalizing flows replace the sum of Jacobian log-determinants with an integral of the vector field’s divergence.

- 3

Divergence admits an efficient unbiased estimator using Gaussian probe vectors and automatic differentiation, unlike Jacobian log-determinants.

- 4

Training requires numerical ODE integration and backpropagation through the ODE solution, which is computationally demanding.

- 5

An augmented ODE gradient method from the University of Toronto enables tractable gradient computation for continuous flows.

- 6

Reported experiments show competitive performance with RealNVP and sometimes better-than-Glow results, with training speed as the main remaining limitation.