Abstract Linear Algebra 16 | Gramian Matrix

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Orthogonal projection P onto a finite-dimensional subspace U is found by expressing P as a linear combination of a basis of U and enforcing ⟨Bj, X − P⟩ = 0 for each basis vector Bj.

Briefing

Orthogonal projection onto a finite-dimensional subspace can be computed by turning the “find the right coefficients” problem into a linear system built from inner products, specifically one governed by the Gramian (Gram) matrix. Given a subspace U of dimension K inside an inner product space V, any vector X decomposes as X = P + N, where P lies in U and N lies in the orthogonal complement U⊥. Writing P as a linear combination of a basis B = {B1, …, BK}, the coefficients are determined by enforcing orthogonality: X − P must be orthogonal to every basis vector Bj. That condition produces K linear equations in the K unknown coefficients, and once the matrix of inner products is assembled, the system can be solved with standard linear algebra.
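As a minimal sketch of the procedure just described, the Python below assumes V = R^n with the standard dot product as the inner product and uses NumPy. The function name `gram_projection` and the sample basis are illustrative choices, not taken from the source; the rows of `basis` play the role of B1, …, BK.

```python
import numpy as np

def gram_projection(basis, x):
    """Project x onto span(basis) by solving G(B) lambda = b.

    Assumes the standard dot product on R^n and that the rows of `basis`
    are linearly independent, so G(B) is invertible.
    """
    B = np.asarray(basis, dtype=float)  # shape (K, n); row i is B_i
    x = np.asarray(x, dtype=float)
    G = B @ B.T                         # Gramian: G[j, i] = <B_j, B_i>
    b = B @ x                           # right-hand side: b[j] = <B_j, X>
    lam = np.linalg.solve(G, b)         # coefficients lambda_1..lambda_K
    return lam @ B, lam                 # P = sum_i lambda_i B_i

# Illustrative, non-orthogonal basis of a plane in R^3.
basis = [[1.0, 1.0, 0.0], [0.0, 1.0, 1.0]]
x = np.array([1.0, 2.0, 3.0])

p, lam = gram_projection(basis, x)
residual = x - p
print(np.allclose(np.asarray(basis) @ residual, 0.0))  # True: X - P is orthogonal to every B_j
```

The final check confirms the defining property: the residual X − P is orthogonal to every basis vector, even though the chosen basis is not orthogonal.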

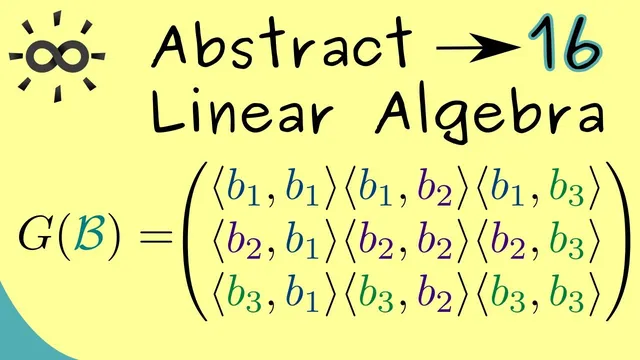

Concretely, the orthogonality requirement ⟨Bj, X − P⟩ = 0 for each j = 1,…,K, combined with the linearity of the inner product in its second argument, gives ⟨Bj, X⟩ = Σi=1..K λi ⟨Bj, Bi⟩. This system has the matrix form G(B)λ = b, where λ is the column vector of coefficients (λ1,…,λK), b has entries bj = ⟨Bj, X⟩, and the Gramian matrix G(B) is the K×K matrix whose (j,i)-entry is ⟨Bj, Bi⟩. The Gramian matrix is thus the inner-product “signature” of the chosen basis, and it is what turns the geometric orthogonality conditions into a solvable linear system.
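Written out in full, under the same convention that the inner product is linear in its second argument, the K orthogonality conditions and their matrix form read:

```latex
\begin{aligned}
0 = \langle B_j, X - P\rangle
  &= \langle B_j, X\rangle - \sum_{i=1}^{K} \lambda_i \,\langle B_j, B_i\rangle,
  \qquad j = 1,\dots,K, \\[4pt]
\underbrace{\begin{pmatrix}
\langle B_1, B_1\rangle & \cdots & \langle B_1, B_K\rangle \\
\vdots & \ddots & \vdots \\
\langle B_K, B_1\rangle & \cdots & \langle B_K, B_K\rangle
\end{pmatrix}}_{G(B)}
\begin{pmatrix} \lambda_1 \\ \vdots \\ \lambda_K \end{pmatrix}
&= \underbrace{\begin{pmatrix} \langle B_1, X\rangle \\ \vdots \\ \langle B_K, X\rangle \end{pmatrix}}_{b}.
\end{aligned}
```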

A key question is whether the coefficients are uniquely determined. They are exactly when the Gramian matrix is invertible, and since G(B) is square, invertibility is equivalent to having a trivial kernel. The transcript proves invertibility by taking a coefficient vector β in the kernel, so G(B)β = 0, and translating that back into geometry. Set Y = Σi βi Bi; then the j-th row of G(B)β = 0 reads Σi βi ⟨Bj, Bi⟩ = ⟨Bj, Y⟩ = 0. So Y lies in U and is orthogonal to every basis vector Bj, hence orthogonal to all of U. That forces Y into U ∩ U⊥, which contains only the zero vector, so Y = 0, and because the basis vectors Bi are linearly independent, all βi must be zero. Therefore the kernel is trivial, G(B) is invertible, and the linear system has exactly one solution. In other words, the orthogonal projection P onto any K-dimensional subspace is uniquely determined.
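The role of linear independence in the kernel argument can be seen numerically. This sketch again assumes the standard dot product on R^3 and uses NumPy; `gram_matrix` is a hypothetical helper. For a genuine basis the Gramian has full rank, while for a linearly dependent spanning set it is singular, and a kernel vector β then gives Y = Σi βi Bi = 0 without β itself being zero.

```python
import numpy as np

def gram_matrix(vectors):
    """Hypothetical helper: G[j, i] = <v_j, v_i> for the standard dot product."""
    V = np.asarray(vectors, dtype=float)  # row i is v_i
    return V @ V.T

independent = [[1.0, 1.0, 0.0], [0.0, 1.0, 1.0]]  # a basis of a plane
dependent = [[1.0, 1.0, 0.0], [2.0, 2.0, 0.0]]    # second vector = 2 * first

G_ind = gram_matrix(independent)
G_dep = gram_matrix(dependent)

print(np.linalg.matrix_rank(G_ind))  # 2: trivial kernel, so G is invertible
print(np.linalg.matrix_rank(G_dep))  # 1: nontrivial kernel, G is singular

# beta = (2, -1) lies in the kernel of G_dep, and the corresponding
# Y = 2*v1 - v2 is the zero vector. Y = 0 forces beta = 0 only when the
# vectors are linearly independent, which is exactly where the proof uses it.
beta = np.array([2.0, -1.0])
Y = beta @ np.asarray(dependent)
print(np.allclose(G_dep @ beta, 0.0), np.allclose(Y, 0.0))  # True True
```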

An example in R^3 makes the method concrete. Let U be the yz-plane, that is, the plane where x = 0, spanned by two basis vectors that lie in that plane (so (1,0,0), which is normal to the plane, is not one of them). With a 2-dimensional basis, the Gramian matrix is a 2×2 matrix of inner products, and the right-hand side consists of the inner products of the basis vectors with X. Solving the resulting system gives coefficients that reconstruct P as a linear combination of the basis vectors, and the result matches the geometric expectation: the x-component of X, which is perpendicular to the yz-plane, is removed, leaving the orthogonal projection onto the plane. The takeaway is that Gramian matrices provide a general, basis-driven route to orthogonal projection in any finite-dimensional inner product space.
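A numerical version of this example follows. The source's exact basis vectors are not reproduced here, so the basis B1 = (0,1,0), B2 = (0,1,1) and the vector X = (3,−1,2) are hypothetical choices, and the standard dot product on R^3 is assumed. The basis is deliberately non-orthogonal so that the Gramian is not diagonal and the linear solve actually matters.

```python
import numpy as np

# Hypothetical basis of the yz-plane (x = 0); not orthogonal on purpose.
B = np.array([[0.0, 1.0, 0.0],
              [0.0, 1.0, 1.0]])
X = np.array([3.0, -1.0, 2.0])  # arbitrary vector to project

G = B @ B.T                  # 2x2 Gramian: [[1, 1], [1, 2]]
b = B @ X                    # right-hand side: [<B1, X>, <B2, X>] = [-1, 1]
lam = np.linalg.solve(G, b)  # coefficients: [-3, 2]
P = lam @ B                  # projection: -3*B1 + 2*B2

# The projection should equal X with its x-component set to zero.
print(np.allclose(P, [0.0, X[1], X[2]]))  # True
```

By hand: G(B) = [[1, 1], [1, 2]], b = (−1, 1), λ = (−3, 2), and P = −3·B1 + 2·B2 = (0, −1, 2), which is X with its x-component removed.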

Cornell Notes

Orthogonal projection onto a K-dimensional subspace U can be computed by solving a K×K linear system built from inner products. For a basis B = {B1,…,BK} of U, write the projection P as P = Σi=1..K λi Bi. Enforcing orthogonality of the residual X − P to every basis vector Bj gives the equations ⟨Bj, X⟩ = Σi λi ⟨Bj, Bi⟩. These equations assemble into G(B)λ = b, where G(B) is the Gramian matrix with entries ⟨Bj, Bi⟩ and b has entries ⟨Bj, X⟩. The Gramian is invertible because any nonzero vector in its kernel would produce a nonzero element of U ∩ U⊥, which is impossible; thus the projection is unique.
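The same recipe works outside R^n. As an illustration that is not from the source, take polynomials with the assumed inner product ⟨f, g⟩ = ∫₀¹ f(t) g(t) dt, let U = span{1, t}, and project X = t². Monomials give ⟨t^i, t^j⟩ = 1/(i + j + 1), so the Gramian can be built exactly with Python's `fractions` module; `inner_monomials` is a hypothetical helper.

```python
from fractions import Fraction

def inner_monomials(i, j):
    """Hypothetical helper: <t^i, t^j> = integral_0^1 t^(i+j) dt = 1/(i+j+1)."""
    return Fraction(1, i + j + 1)

basis_degrees = [0, 1]  # U = span{1, t}
x_degree = 2            # X = t^2

# G[j][i] = <B_j, B_i> and b[j] = <B_j, X>, computed exactly.
G = [[inner_monomials(j, i) for i in basis_degrees] for j in basis_degrees]
b = [inner_monomials(j, x_degree) for j in basis_degrees]

# Solve the 2x2 system G @ lam = b exactly with Cramer's rule.
det = G[0][0] * G[1][1] - G[0][1] * G[1][0]
lam0 = (b[0] * G[1][1] - G[0][1] * b[1]) / det
lam1 = (G[0][0] * b[1] - b[0] * G[1][0]) / det

print(lam0, lam1)  # -1/6 1
```

The coefficients give P(t) = t − 1/6, the closest linear polynomial to t² with respect to this inner product.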

How does orthogonality determine the coefficients of the projection P onto U?

What exactly is the Gramian matrix G(B), and where does it appear in the projection formula?

Why does invertibility of G(B) guarantee a unique orthogonal projection?

How does the kernel argument connect algebra to geometry?

In the R^3 example projecting onto the yz-plane, what does the computation achieve?

Review Questions

- Given a basis B = {B1,…,BK} for U, write the linear system whose solution gives the orthogonal projection of X onto U.

- What does it mean geometrically if a nonzero vector lies in U ∩ U⊥, and how does that relate to the kernel of the Gramian matrix?

- How would you form the right-hand side vector b in the equation G(B)λ = b, and why does it depend on X?

Key Points

1. Orthogonal projection P onto a finite-dimensional subspace U is found by expressing P as a linear combination of a basis of U and enforcing ⟨Bj, X − P⟩ = 0 for each basis vector Bj.

2. The Gramian matrix G(B) is the K×K matrix of inner products ⟨Bj, Bi⟩ for a basis B = {B1,…,BK} of U.

3. The projection coefficients λ solve the linear system G(B)λ = b, where bj = ⟨Bj, X⟩.

4. The Gramian matrix is invertible because any nonzero vector in its kernel would yield a nonzero element of U ∩ U⊥, which must be {0}.

5. Invertibility of G(B) guarantees that the orthogonal projection onto any K-dimensional subspace of an inner product space is unique.

6. In the yz-plane example inside R^3, solving the 2×2 Gramian system produces a projection that removes the component orthogonal to the plane (the x-component).