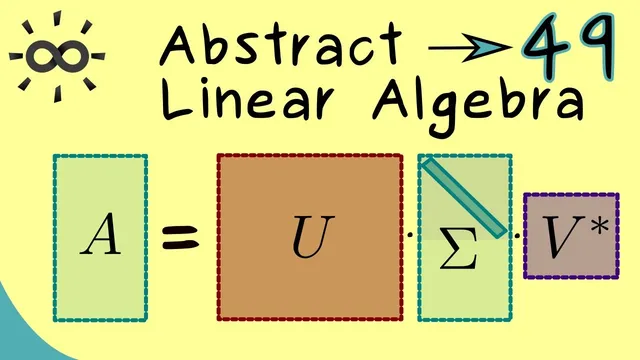

Abstract Linear Algebra 49 | Singular Value Decomposition (Overview)

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Singular value decomposition expresses any m×n matrix A as A = U Σ V*, with U and V unitary and Σ diagonal in the rectangular sense.

Briefing

Singular value decomposition (SVD) turns any matrix, square or rectangular, into a “diagonal” core using unitary changes of basis, making the matrix’s action easy to interpret and compute with. The key move is to relax the strict relationship used in earlier decompositions: instead of requiring the left and right factors to be inverses of each other (as in diagonalization, Jordan form, or Schur decomposition), SVD keeps them unitary but allows them to be unrelated. That tradeoff is what makes it possible to force the middle matrix to be diagonal (in the rectangular sense) for every matrix, not only for diagonalizable or normal ones.

Earlier matrix decompositions split a matrix A into three parts: a left factor U, a middle factor D (diagonal or Jordan form), and a right factor V, with strong constraints tying U and V together. In the unitary-friendly Schur decomposition, U and V are unitary and inverses of each other, but the middle matrix is only upper triangular, not diagonal. SVD targets the missing ingredient: a diagonal middle matrix. Achieving that requires weakening the connection between the unitary factors: U and V no longer have to be inverses of each other, and for a rectangular A they do not even have the same size. As a result, A and the diagonal core are in general not similar. Still, they remain equivalent: the diagonal core represents the same linear map under different choices of bases.
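The difference between "similar" and "equivalent" can be checked numerically. The sketch below uses NumPy and an illustrative 2×2 Jordan block chosen for this example (it is not taken from the video). Similar matrices share eigenvalues, so if the singular values differ from the eigenvalues of A, then A cannot be similar to Σ; equivalence only requires matching rank.

```python
import numpy as np

# Illustrative matrix (an assumption for this sketch): a non-diagonalizable Jordan block.
A = np.array([[1.0, 1.0],
              [0.0, 1.0]])

# Eigenvalues of A: both equal to 1.
eigenvalues = np.linalg.eigvals(A)

# Singular values of A: the diagonal entries of Sigma, which are also Sigma's eigenvalues.
singular_values = np.linalg.svd(A, compute_uv=False)

# Similar matrices would have identical eigenvalues; here they differ.
same_spectrum = np.allclose(np.sort(eigenvalues.real), np.sort(singular_values))

# Equivalent matrices share the same rank.
rank_A = np.linalg.matrix_rank(A)
rank_Sigma = int(np.count_nonzero(singular_values > 1e-12))

print("eigenvalues of A:     ", eigenvalues)
print("singular values of A: ", singular_values)
print("same spectrum:", same_spectrum, "| ranks:", rank_A, rank_Sigma)
```

The matrix A here cannot be diagonalized by any similarity transformation, yet it still has a diagonal Σ once the two unitary factors are allowed to differ.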

Concretely, for an m×n matrix A, SVD takes the form A = U Σ V*, where U is an m×m unitary matrix, V* is the conjugate transpose of an n×n unitary matrix V (so V* = V^{-1}), and Σ is an m×n diagonal-like matrix. “Diagonal” here means that if the rectangular matrix is cut down to its leading square block of size min(m, n), the result is a standard diagonal matrix: the entries on that diagonal are the singular values, which are real and nonnegative, while the leftover rows below (when m > n) or leftover columns to the right (when n > m) are entirely zero. This definition is what lets SVD handle rectangular matrices without special cases.
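To make the shapes concrete, here is a minimal NumPy sketch for a 3×5 complex matrix. The random matrix, the seed, and the variable names are assumptions made for illustration. `np.linalg.svd` returns the singular values as a 1-D array, so the rectangular Σ has to be assembled by hand.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 3, 5  # more columns than rows, so Sigma has zero columns on the right

# Illustrative random complex matrix (not from the source).
A = rng.standard_normal((m, n)) + 1j * rng.standard_normal((m, n))

# full_matrices=True gives square unitary factors: U is m x m, Vh (= V*) is n x n.
U, s, Vh = np.linalg.svd(A, full_matrices=True)

# Build the rectangular "diagonal" matrix: singular values on the leading
# min(m, n) x min(m, n) block, zeros everywhere else.
k = min(m, n)
Sigma = np.zeros((m, n))
Sigma[:k, :k] = np.diag(s)

# Reassemble A = U Sigma V*.
A_rebuilt = U @ Sigma @ Vh

print("U:", U.shape, "Sigma:", Sigma.shape, "V*:", Vh.shape)
print("reconstruction error:", np.linalg.norm(A - A_rebuilt))
```

NumPy returns the singular values sorted in descending order, which is the usual convention for the diagonal of Σ.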

Because U and V are unitary, they preserve lengths and angles, so the change of basis is geometrically well-behaved. The decomposition also has a clean linear-map interpretation. View A as the matrix representation of a linear map L: C^n → C^m with respect to the standard bases. Then Σ is the matrix representation of the same map L but with respect to new orthonormal bases in the input and output spaces. Those new bases are encoded in the columns of V (for the input side) and U (for the output side). The central goal becomes: choose orthonormal bases so that the representation of L becomes diagonal.
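The claims in this paragraph can be verified numerically. The following NumPy sketch (random test data and names are assumptions for illustration) checks that a unitary factor preserves norms and inner products, that Σ = U* A V is the representation of the map in the new bases, and that A sends each new input basis vector to a scaled new output basis vector.

```python
import numpy as np

rng = np.random.default_rng(1)

# Illustrative 4 x 3 complex matrix, viewed as a map from C^3 to C^4.
A = rng.standard_normal((4, 3)) + 1j * rng.standard_normal((4, 3))
U, s, Vh = np.linalg.svd(A)  # U is 4 x 4, Vh is 3 x 3
V = Vh.conj().T

# Unitary matrices preserve lengths and inner products (hence angles).
x = rng.standard_normal(3) + 1j * rng.standard_normal(3)
y = rng.standard_normal(3) + 1j * rng.standard_normal(3)
length_preserved = np.isclose(np.linalg.norm(V @ x), np.linalg.norm(x))
inner_preserved = np.isclose(np.vdot(V @ x, V @ y), np.vdot(x, y))

# Change of basis: the map expressed in the bases given by the columns of V and U.
Sigma = U.conj().T @ A @ V

# Column j of V is mapped to s[j] times column j of U.
maps_basis_to_basis = all(
    np.allclose(A @ V[:, j], s[j] * U[:, j]) for j in range(len(s))
)

print(length_preserved, inner_preserved, maps_basis_to_basis)
print(np.round(np.abs(Sigma), 6))
```

The printed matrix has the singular values on its diagonal and zeros elsewhere, up to floating-point rounding.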

The transcript frames the remaining task as constructing the specific unitary matrices U and V that achieve this diagonal form. It points to the spectral theorem for normal matrices as the tool that guarantees such unitary choices, setting up the explicit calculations for the next step in the series.
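The source only names the spectral theorem here and defers the details. As a hedged preview, the sketch below assumes the standard route of applying the spectral theorem to the Hermitian (hence normal) matrix A*A; the series may present the construction differently. It also assumes A has full column rank so that every singular value is nonzero, and it builds only the first n columns of U rather than the full m×m unitary matrix.

```python
import numpy as np

rng = np.random.default_rng(2)

# Illustrative 4 x 3 complex matrix; random, so it has full column rank.
A = rng.standard_normal((4, 3)) + 1j * rng.standard_normal((4, 3))

# A*A is Hermitian and positive semidefinite, so the spectral theorem applies.
G = A.conj().T @ A

# eigh returns real eigenvalues in ascending order and orthonormal eigenvectors.
eigvals, V = np.linalg.eigh(G)

# Reorder so the largest eigenvalue comes first (the usual SVD convention).
order = np.argsort(eigvals)[::-1]
eigvals, V = eigvals[order], V[:, order]

# Singular values are the square roots of the eigenvalues of A*A.
s = np.sqrt(np.clip(eigvals, 0.0, None))

# Left singular vectors: u_j = A v_j / s_j (valid because every s_j > 0 here).
U_thin = (A @ V) / s

# Check the factorization A = U_thin diag(s) V*.
A_rebuilt = U_thin @ np.diag(s) @ V.conj().T
print("singular values:", s)
print("reconstruction error:", np.linalg.norm(A - A_rebuilt))
```

To obtain the full square U, the columns of `U_thin` would still have to be extended to an orthonormal basis of C^m. In practice, library routines such as `np.linalg.svd` are preferable to forming A*A explicitly, since squaring the matrix can lose numerical accuracy.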

Cornell Notes

Singular value decomposition writes any m×n matrix A as A = U Σ V*, where U and V are unitary and Σ is diagonal in the rectangular sense. The diagonal form is achieved by relaxing the strict inverse relationship between the unitary factors used in earlier decompositions: A and Σ are in general not similar, but they are equivalent, meaning they represent the same linear map under different bases. Unitarity matters because unitary matrices preserve lengths and angles, so the new bases are orthonormal. Interpreting A as a linear map L: C^n → C^m, the columns of V give the new input basis and the columns of U give the new output basis, making Σ the representation of L in those bases. The next step is computing U and V explicitly, with the spectral theorem for normal matrices providing the construction route.

Why does SVD relax the relationship between the unitary factors compared with Schur or diagonal/Jordan forms?

What does “diagonal” mean for a rectangular matrix in the SVD formula A = U Σ V*?

How does unitarity (U and V unitary) connect to geometry and computation?

In what sense are A and Σ “equivalent” even though they are not similar?

How do the columns of U and V relate to the new bases for the linear map L: C^n → C^m?

What role does the spectral theorem for normal matrices play in obtaining U and V?

Review Questions

- How does SVD achieve a diagonal middle matrix when Schur decomposition only guarantees an upper triangular one?

- Explain why A and Σ are equivalent but not necessarily similar in the SVD framework.

- For an m×n matrix, describe where the nonzero entries of Σ can appear and how this matches the “cut to a square” definition of diagonal.

Key Points

1. Singular value decomposition expresses any m×n matrix A as A = U Σ V*, with U and V unitary and Σ diagonal in the rectangular sense.

2. SVD forces a diagonal middle matrix by relaxing the strict inverse relationship between the unitary factors used in earlier decompositions, so A and Σ are in general not similar.

3. Despite not being similar, A and Σ are equivalent because they represent the same linear map under different orthonormal bases on the domain and codomain.

4. Unitarity preserves lengths and angles, making the basis changes geometrically meaningful and numerically well-behaved.

5. For rectangular matrices, “diagonal” means the singular values lie on the diagonal of the leading square block, with zeros filling the remaining rectangular block (below or to the right depending on dimensions).

6. The columns of V define the new orthonormal basis in C^n (the input space), while the columns of U define the new orthonormal basis in C^m (the output space).

7. Constructing U and V explicitly is tied to the spectral theorem for normal matrices, which provides the needed unitary structure.