Abstract Linear Algebra 51 | Singular Value Decomposition (Algorithm and Example)

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Singular value decomposition (SVD) can be built systematically from eigenvectors of either A* A or A A*, without any “magic.” The core insight is that A* A and A A* share the same nonzero eigenvalues, and the square roots of these eigenvalues are the singular values of A. This order-independence matters because the algorithm can diagonalize whichever product is more convenient (for an m×n matrix A, A* A is n×n and A A* is m×m) and then assemble the full SVD from unitary matrices on both sides.

For any complex m×n matrix A, the SVD writes A = U Σ V*, where U (m×m) and V (n×n) are unitary and Σ is an m×n rectangular diagonal matrix with the singular values s1 ≥ s2 ≥ … ≥ 0 on its main diagonal. The singular values are s_i = sqrt(λ_i), where λ_i are the eigenvalues of A* A (for the nonzero ones, equivalently of A A*). These eigenvalues are real and nonnegative because A* A is self-adjoint and ⟨A* A v, v⟩ = ||A v||² ≥ 0. The nonzero eigenvalues of the two products match: if A* A v = λ v with v ≠ 0 and λ ≠ 0, then A v ≠ 0 (otherwise λ v = A* A v = 0), and multiplying by A gives A A* (A v) = λ (A v). So λ is also an eigenvalue of A A*, with eigenvector A v. The reverse direction works the same way with the roles of A and A* exchanged. The two spectra therefore differ only in how many zero eigenvalues they contain, since A* A has n eigenvalues and A A* has m.
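The eigenvalue argument above can be checked numerically. The sketch below assumes NumPy. The helper name `nonzero_eigs`, the tolerance `1e-10` and the random 3×5 complex test matrix are illustrative choices, not part of the source. It compares the nonzero eigenvalues of A* A (5×5) and A A* (3×3) and confirms that A v is an eigenvector of A A* for an eigenpair (λ, v) of A* A.

```python
import numpy as np

def nonzero_eigs(M, tol=1e-10):
    """Eigenvalues of the Hermitian matrix M that exceed tol, sorted in decreasing order."""
    w = np.linalg.eigvalsh(M)             # real eigenvalues in ascending order
    return np.sort(w[w > tol])[::-1]

# Hypothetical test matrix: a random complex 3 x 5 matrix (m = 3, n = 5).
rng = np.random.default_rng(0)
A = rng.standard_normal((3, 5)) + 1j * rng.standard_normal((3, 5))
AhA = A.conj().T @ A                      # A* A, size 5 x 5
AAh = A @ A.conj().T                      # A A*, size 3 x 3

print(nonzero_eigs(AhA))                  # three nonzero eigenvalues
print(nonzero_eigs(AAh))                  # the same three values for A A*
print(np.linalg.eigvalsh(AhA))            # A* A also has two eigenvalues that are zero up to round-off

# Eigenvector transfer: if A* A v = lam v with lam != 0, then A A* (A v) = lam (A v).
lam, V = np.linalg.eigh(AhA)
l, v = lam[-1], V[:, -1]                  # largest eigenpair of A* A
w = A @ v
print(np.allclose(AAh @ w, l * w))        # True
```

Only the count of zero eigenvalues differs between the two products, which is why either one can be used to obtain the singular values.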

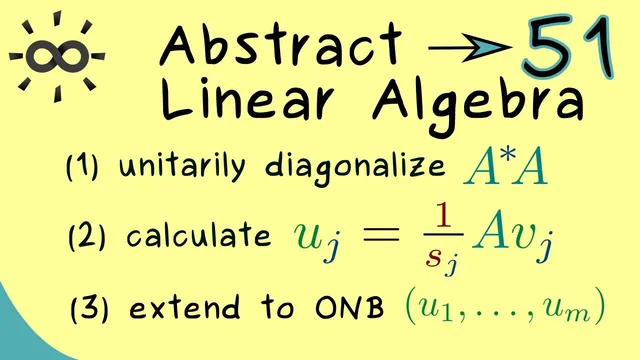

The algorithm proceeds in clear steps. First compute A* A and find its eigenvalues λ1 ≥ λ2 ≥ … ≥ λn, repeated according to multiplicity. Next, since A* A is self-adjoint, the spectral theorem provides an orthonormal eigenbasis v1, …, vn; these vectors form the columns of the unitary matrix V. Let r be the rank of A* A, which equals the rank of A and the number of nonzero eigenvalues. Define the singular values s_j = sqrt(λ_j) for j = 1, …, r and place them on the diagonal of Σ; all other entries of Σ are 0. The remaining task is constructing U. For each j ≤ r, set u_j = (1/s_j) A v_j. These vectors are automatically orthonormal, because ⟨A v_i, A v_j⟩ = ⟨v_i, A* A v_j⟩ = λ_j ⟨v_i, v_j⟩, which is 0 for i ≠ j and gives ||A v_j||² = λ_j = s_j² for i = j. The same identity shows A v_j = 0 for j > r, since then λ_j = 0. If r < m, extend u1, …, u_r to an orthonormal basis u1, …, u_m of C^m (for example with Gram–Schmidt) so that U is unitary. Then A v_j = s_j u_j for j ≤ r and A v_j = 0 for j > r, which is exactly A V = U Σ, that is, A = U Σ V*.
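A minimal sketch of these steps in Python, assuming NumPy. Here `np.linalg.eigh` plays the role of the spectral theorem, and the basis completion uses a QR factorization of [u1 … ur | I] in place of explicit Gram–Schmidt. The function names `svd_via_gram` and `complete_basis`, the rank tolerance and the random rank-2 test matrix are hypothetical choices for illustration. The sketch mirrors the textbook construction, not the way production SVD routines are implemented.

```python
import numpy as np

def complete_basis(U_r, m):
    """Extend orthonormal columns U_r (m x r) to an m x m unitary matrix."""
    # QR of [U_r | I_m]: the first r columns of Q span the same space as U_r,
    # so the remaining m - r columns of Q are orthonormal and orthogonal to U_r.
    Q, _ = np.linalg.qr(np.hstack([U_r, np.eye(m)]), mode="complete")
    return np.hstack([U_r, Q[:, U_r.shape[1]:]])

def svd_via_gram(A, tol=1e-12):
    """Sketch: build A = U @ Sigma @ V^* from an eigendecomposition of A^* A."""
    A = np.asarray(A, dtype=complex)
    m, n = A.shape
    # Steps 1-2: eigenpairs of the self-adjoint matrix A^* A (eigh returns ascending order).
    lam, V = np.linalg.eigh(A.conj().T @ A)
    order = np.argsort(lam)[::-1]             # lambda_1 >= ... >= lambda_n
    lam = np.clip(lam[order], 0.0, None)      # drop tiny negative round-off
    V = V[:, order]
    # Step 3: numerical rank r = eigenvalues above a threshold relative to lambda_1.
    r = int(np.sum(lam > tol * lam[0]))
    s = np.sqrt(lam[:r])                      # s_1 >= ... >= s_r > 0
    # Step 4: u_j = A v_j / s_j for j <= r (division broadcasts over columns).
    U_r = (A @ V[:, :r]) / s
    # Step 5: complete u_1, ..., u_r to an orthonormal basis of C^m.
    U = complete_basis(U_r, m)
    Sigma = np.zeros((m, n))
    Sigma[:r, :r] = np.diag(s)                # all other entries of Sigma stay 0
    return U, Sigma, V

# Hypothetical test matrix: 4 x 3 with rank 2, so U needs two completion vectors.
rng = np.random.default_rng(1)
A = rng.standard_normal((4, 2)) @ rng.standard_normal((2, 3))
U, Sigma, V = svd_via_gram(A)
print(np.allclose(U @ Sigma @ V.conj().T, A))     # check A = U Sigma V*
print(np.allclose(U.conj().T @ U, np.eye(4)))     # check U is unitary
print(np.allclose(V.conj().T @ V, np.eye(3)))     # check V is unitary
print(np.diag(Sigma))                             # s_1, s_2, then 0
```

Because the test matrix has rank 2 with four rows and three columns, two columns of U come from u_j = (1/s_j) A v_j and the other two from the basis completion, and the last diagonal entry of Σ is 0.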

An explicit 2×2 example makes the procedure concrete. Take A = [[1, √3],[2, 1]]. Since A is real, A* = A^T, and A* A = [[5, 2 + √3],[2 + √3, 4]], with trace 9 and determinant 13 − 4√3 = (det A)². Its eigenvalues are therefore λ1,2 = (9 ± sqrt(29 + 16√3))/2, approximately 8.265 and 0.735, so the singular values are s1 ≈ 2.875 and s2 ≈ 0.857 (as a check, s1 s2 = |det A| = 2√3 − 1). The corresponding eigenvectors of A* A are normalized to form v1 and v2. Then u1 and u2 are obtained by multiplying A by v1 and v2 and dividing by the corresponding singular values, yielding orthonormal columns for U. With U, Σ = diag(s1, s2), and V* assembled, the SVD is complete. The result is not an ordinary diagonalization A = P D P⁻¹: the SVD uses two generally different unitary matrices, U on the left and V on the right, and Σ has nonnegative real diagonal entries.
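The steps of the 2×2 example can be replayed numerically as a sketch, assuming NumPy and taking A exactly as printed above, so the computed eigenvalues and singular values can be compared directly with the ones quoted in the text. Because A is real, A* is simply the transpose.

```python
import numpy as np

# Example matrix exactly as printed in the text; it is real, so A* = A^T.
A = np.array([[1.0, np.sqrt(3.0)],
              [2.0, 1.0]])

AhA = A.T @ A                       # = [[5, 2 + sqrt(3)], [2 + sqrt(3), 4]]
lam, V = np.linalg.eigh(AhA)        # ascending eigenvalues, orthonormal eigenvectors
lam, V = lam[::-1], V[:, ::-1]      # reorder so that lambda_1 >= lambda_2

s = np.sqrt(lam)                    # singular values s_j = sqrt(lambda_j)
U = (A @ V) / s                     # u_j = A v_j / s_j; both s_j > 0 because det A != 0
Sigma = np.diag(s)

print("eigenvalues of A*A:", lam)
print("singular values:   ", s)
print("U orthonormal:     ", np.allclose(U.T @ U, np.eye(2)))
print("A = U Sigma V*:    ", np.allclose(U @ Sigma @ V.T, A))
```

The reconstruction holds whichever sign `eigh` picks for each eigenvector, because flipping the sign of v_j flips u_j = A v_j / s_j as well.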

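Overall, the SVD algorithm reduces to diagonalizing a self-adjoint, positive semidefinite matrix (A* A or A A*), taking square roots of its eigenvalues, and using A to transfer eigenvectors into the left singular vectors. Because the construction needs only the spectral theorem and a basis extension, it works for every complex matrix, which is one reason the SVD is so broadly useful in applications. In floating-point practice, library routines usually avoid forming A* A explicitly, since doing so squares the condition number and can cost accuracy in the small singular values.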

Cornell Notes

Singular value decomposition expresses any complex matrix A as A = U Σ V*, where U and V are unitary and Σ is rectangular diagonal with nonnegative entries. The singular values are the square roots of the eigenvalues of A* A, and the nonzero eigenvalues of A* A and A A* coincide, so either product can be used. The algorithm starts by diagonalizing A* A: compute its eigenvalues λ1 ≥ … ≥ λn and build an orthonormal eigenbasis to form V. If r is the rank of A* A, the values s_j = sqrt(λ_j) for j ≤ r fill the first r diagonal entries of Σ, and all other entries are 0. The left singular vectors come from u_j = (1/s_j) A v_j for j ≤ r, and any remaining columns of U are filled in to complete an orthonormal basis.

Why do A* A and A A* produce the same nonzero eigenvalues, and why does that matter for SVD?

How does the algorithm determine the singular values and where do they go in Σ?

How are the left singular vectors u_j constructed from the right singular vectors v_j?

What role does the rank r play in completing U and Σ?

In the 2×2 example, how do the eigenvalues of A* A translate into the final SVD?

Review Questions

- Given an eigenpair A* A v = λ v with λ ≠ 0, what vector becomes an eigenvector of A A*, and what eigenvalue does it share?

- Why is it valid to define singular values as square roots of eigenvalues of A* A, and what guarantees they are nonnegative?

- If rank(A* A) = r, what must Σ look like, and how many columns of U are computed directly from u_j = (1/s_j) A v_j?

Key Points

1. Nonzero eigenvalues of A* A and A A* coincide, so singular values can be obtained from either product.

2. Singular values are defined as s_j = sqrt(λ_j), where λ_j are the eigenvalues of A* A; they are nonnegative real numbers because A* A is positive semidefinite.

3. The SVD has the form A = U Σ V*, with Σ rectangular diagonal and the singular values ordered decreasingly on its main diagonal.

4. Right singular vectors come from an orthonormal eigenbasis of A* A, forming the unitary matrix V.

5. Left singular vectors for nonzero singular values are computed via u_j = (1/s_j) A v_j, and these vectors are automatically orthonormal.

6. The rank r determines how many singular values are nonzero; the remaining diagonal entries of Σ are 0.

7. When r is smaller than the number of rows m, U is completed with additional orthonormal vectors so that it becomes a square unitary matrix.