Academic Writing with ChatGPT (Part 1 - Beginner)

Based on E-Research Skills's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

ChatGPT can support academic writing by generating ideas, outlines, and drafts, but students must rewrite and edit to avoid generic copy and to maintain ownership.

Briefing

Academic writing with ChatGPT is less about copying finished text and more about steering the model through careful prompting, structured workflows, and controlled “memory” so that outputs match a specific thesis or literature review plan. The core message: use ChatGPT to generate ideas, outlines, and drafts as starting material, then edit heavily, verify facts, and rewrite the result in the student’s own voice. Wholesale copy-paste invites plagiarism and AI-detection flags and produces generic, hard-to-defend writing.

The session begins with practical guidance on which ChatGPT versions matter for academic work. ChatGPT 3.5 is positioned as a beginner-friendly option with a knowledge cutoff around September 2021, while ChatGPT 4 extends coverage to roughly March 2023, and GPT-4o (transcribed as “4 40”) reaches about October 2023. Multimodal capability is highlighted as a major upgrade: text plus image, audio, and video-related features (with “Sora AI” mentioned as upcoming). The instructor also warns that free tiers may be slower during peak usage and that message limits can change over time.

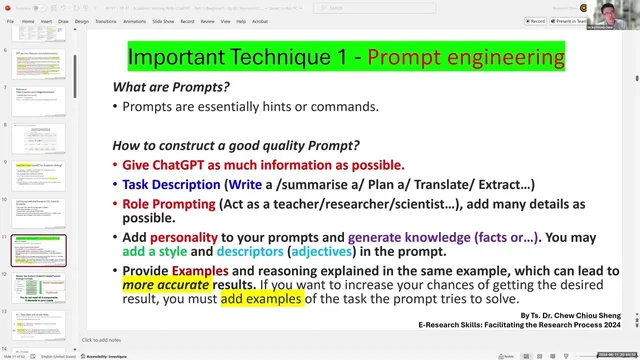

A second pillar is prompt engineering—turning a vague request into a detailed task. The guidance emphasizes giving ChatGPT enough context (goal, audience, role/persona, tone, format, and examples) so it can produce more accurate and usable academic output. Role-play is encouraged (e.g., “act as a professor” or “act as a reviewer”), along with specifying output structure such as tables, bullet points, or email formats. The session also recommends batching tasks efficiently: instead of one request per message, combine multiple sub-tasks in a single prompt (e.g., analyze, summarize, categorize, then draft), while noting that splitting into multiple prompts can help if results degrade.
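The context elements listed above (goal, audience, role, tone, format, examples) can be assembled into one detailed prompt. The following is a minimal sketch, not the instructor's own method: `PromptSpec` and `build_prompt` are hypothetical names introduced for illustration, and the field values are made up around the session's example topic.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the context elements the session lists."""
    task: str
    goal: str
    audience: str
    role: str
    tone: str
    output_format: str
    examples: list[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec) -> str:
    """Join the separate context fields into one detailed prompt."""
    lines = [
        f"Act as {spec.role}.",
        f"Task: {spec.task}",
        f"Goal: {spec.goal}",
        f"Audience: {spec.audience}",
        f"Tone: {spec.tone}",
        f"Output format: {spec.output_format}",
    ]
    # Examples are optional; include them only when the user supplies some.
    if spec.examples:
        lines.append("Examples of the style I want:")
        lines.extend(f"- {example}" for example in spec.examples)
    return "\n".join(lines)


# Illustrative values only; the topic echoes the session's example.
spec = PromptSpec(
    task="Propose an outline for a literature review on virtual reality "
         "in elementary mathematics education.",
    goal="Get a starting structure that I will rewrite and verify myself.",
    audience="Thesis supervisor and examiners",
    role="a professor of mathematics education",
    tone="formal academic",
    output_format="bullet points",
)
prompt = build_prompt(spec)
print(prompt)
```

Keeping each element in its own field makes it easy to see which piece of context is missing before the prompt is sent, which is the gap a vague request usually has.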

To keep outputs consistent across a long writing process, the session introduces “memory” as a way to store reusable preferences—like a thesis structure template. A demonstration shows saving a thesis outline into memory (e.g., title page, abstract, acknowledgements, table of contents, introduction, literature review, methodology, results, discussion, conclusion) and then reusing it later so ChatGPT can generate section-by-section drafts aligned to that plan. The instructor cautions that saving incorrect or overly broad instructions can “corrupt” future outputs, so memory should be set deliberately and updated when needed.
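The idea of reusing a saved outline can be approximated outside ChatGPT by keeping the template in a script and restating it in every section request. This is only an analogy under stated assumptions: it does not call or reproduce ChatGPT's memory feature, and `section_prompts` is a hypothetical helper. The section names are the ones from the demonstration.

```python
# Section list taken from the session's demonstration.
THESIS_OUTLINE = [
    "Title page", "Abstract", "Acknowledgements", "Table of contents",
    "Introduction", "Literature review", "Methodology", "Results",
    "Discussion", "Conclusion",
]


def section_prompts(outline: list[str], topic: str) -> list[str]:
    """Build one drafting prompt per section, each restating the full outline."""
    plan = ", ".join(outline)
    total = len(outline)
    return [
        f"Using this thesis structure: {plan}. "
        f"Draft section {number} of {total}, '{section}', for a thesis on {topic}."
        for number, section in enumerate(outline, start=1)
    ]


prompts = section_prompts(
    THESIS_OUTLINE,
    "virtual reality in elementary mathematics education",
)
print(prompts[5])  # the request for the literature review section
```

Because every prompt carries the same outline, a mistake in the template propagates to all sections, which mirrors the instructor's caution about saving incorrect instructions.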

For literature reviews, the session outlines an 11-step approach built around keywords and synthesis rather than copying: brainstorming keywords, forming a research problem statement, creating an outline, optionally defining key terms, then writing a critical review that is coherent, well-structured, and properly referenced. “Coherence” is treated as a skill: paragraphs must link logically, not just summarize separate papers. Critical review is framed as identifying both supporting and contradicting findings and using citations to prove claims. The session repeatedly stresses verification: rely on multiple sources/tools, use citation tools or plugins for accurate referencing, and never trust AI-generated citations blindly.

Finally, the session provides a hands-on workflow for building a research proposal and literature review around an example topic (virtual reality and mathematics education for elementary learners). It demonstrates generating keywords, proposing research gaps, drafting research objectives and research questions, and sketching hypotheses and study design elements (e.g., experimental vs traditional groups, pre-test/post-test, and statistical comparisons). The overarching takeaway is that ChatGPT becomes useful when treated like a research assistant that needs direction, constraints, and human editing—not a copy-and-paste author.
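The proposal workflow is sequential, so each stage builds on the one before it. A minimal sketch of that ordering follows; the stage names come from the session's summary, while `PROPOSAL_STAGES` and `next_stage` are hypothetical names used only for illustration.

```python
from typing import Optional

# Stage order as summarized in the session's key points.
PROPOSAL_STAGES = [
    "keywords",
    "topic",
    "problem statement (gap)",
    "objectives and questions",
    "hypotheses",
    "study design and analysis plan",
]


def next_stage(completed: set[str]) -> Optional[str]:
    """Return the earliest stage not yet completed, or None when all are done."""
    for stage in PROPOSAL_STAGES:
        if stage not in completed:
            return stage
    return None


# Hypotheses drafted early do not skip the gap: the earliest open stage wins.
print(next_stage({"keywords", "topic", "hypotheses"}))
```

Returning the earliest open stage, rather than the one after the latest finished stage, keeps the user from drafting hypotheses before the research gap has been stated.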

Cornell Notes

The session frames academic writing with ChatGPT as a controlled process: generate ideas and structure, then edit, verify, and cite properly. It distinguishes ChatGPT 3.5, 4, and GPT-4o by knowledge cutoffs and emphasizes multimodal capabilities for richer academic assistance. Prompt engineering is presented as the main lever—requests should include goal, role/persona, tone, format, and examples to produce more accurate outputs. “Memory” is introduced to store reusable thesis structure and writing preferences so later drafts stay consistent. For literature reviews, an 11-step workflow is recommended, with special focus on coherence and critical review (supporting and contradicting evidence) backed by citations.

Why does the session discourage copy-pasting AI-generated academic text, even if it’s paraphrased?

How should a user design prompts to get better academic outputs?

What is “memory” in this workflow, and how is it used for thesis writing?

What does the session mean by “coherence” and “critical review” in a literature review?

How does the session suggest building a research proposal from scratch using ChatGPT?

What verification steps are recommended when using AI for academic writing?

Review Questions

- What specific elements should be included in a prompt (task, goal, role, tone, format, constraints) to improve academic writing outputs?

- How would you use “memory” to keep a thesis outline consistent across multiple ChatGPT sessions, and what risks come from saving incorrect memory instructions?

- In an 11-step literature review workflow, where do coherence and critical review fit, and what evidence should support each?

Key Points

1. ChatGPT can support academic writing by generating ideas, outlines, and drafts, but students must rewrite and edit to avoid generic copy and to maintain ownership.

2. Use ChatGPT 3.5, 4, and GPT-4o with awareness of knowledge cutoffs (around Sep 2021, Mar 2023, and Oct 2023 respectively) and expect different capabilities.

3. Prompt engineering improves results when requests specify goal, background, role/persona, tone, output format, and constraints like word limits.

4. Store reusable thesis preferences (like section structure) using ChatGPT “memory,” but set it carefully because incorrect memory can distort later outputs.

5. Literature reviews should prioritize coherence (logical paragraph links) and critical review (supporting and contradicting evidence) backed by verified citations.

6. Never trust AI-generated citations or facts blindly; verify with original sources and cross-check across multiple tools.

7. When building a research proposal, move systematically from keywords → topic → problem statement (gap) → objectives/questions → hypotheses → study design and analysis plan.