Agent2Agent Protocol (A2A), clearly explained (why it matters)

Based on David Ondrej's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

A2A is a standardized agent-to-agent protocol from Google that aims to eliminate custom, framework-specific integrations between AI agents.

Briefing

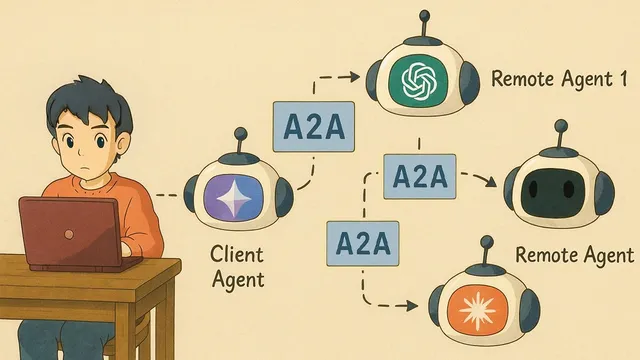

The Agent-to-Agent (A2A) protocol, released by Google, is positioned as a common “language” that lets AI agents from different companies and frameworks communicate through a standardized interface. The core promise is practical: instead of stitching together bespoke integrations for every tool or vendor, an agent can discover and call any other agent that supports A2A, which makes interoperability faster to build, easier to scale, and less likely to break as vendors change.

A2A’s pitch comes from a real pain point in today’s agent ecosystem: fragmentation. Agents are built across different frameworks, APIs, and toolchains, so connecting them often means custom glue code and constant rework as vendors change. A2A aims to remove that chaos by requiring each compatible agent to expose a single HTTP endpoint plus a small JSON “agent card.” With that in place, a client agent can send tasks to remote servers and receive results (or follow-up questions) in a consistent way.
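As a minimal sketch of the discovery idea described above, the snippet below builds a small JSON agent card and reads it back the way a client might. The field names (`name`, `description`, `url`, `skills`), the port, and the helper `parse_agent_card` are illustrative assumptions for this example, not the official A2A schema.

```python
import json

# Hypothetical agent card. Field names and the port are assumptions
# made for illustration, not taken from the A2A specification.
AGENT_CARD = {
    "name": "image-generator",
    "description": "Generates images from text prompts.",
    "url": "http://localhost:10001/",  # the agent's single HTTP endpoint
    "skills": ["generate_image"],
}


def parse_agent_card(raw: str) -> dict:
    """Parse a JSON agent card and check the fields this sketch relies on."""
    card = json.loads(raw)
    missing = [key for key in ("name", "description", "url") if key not in card]
    if missing:
        raise ValueError(f"agent card is missing fields: {missing}")
    return card


raw_card = json.dumps(AGENT_CARD)   # what a server would publish
card = parse_agent_card(raw_card)   # what a client would read before sending tasks
print(card["name"], "->", card["url"])
```

The point of the card is that a client needs nothing framework-specific to find the agent: a small JSON document tells it what the agent does and where to send requests.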

The protocol is contrasted with Model Context Protocol (MCP). MCP focuses on connecting tools and data to an agent—think databases, APIs, and other resources—while A2A focuses on connecting agents to other agents. Rather than competing, the two are framed as complementary: an agent speaking A2A can call other agents, and an MCP server can supply the tools, prompts, and resources those agents need. In the combined architecture described, A2A handles agent-to-agent communication, while MCP handles tool/data access.
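The division of labor can be sketched in plain Python, with no real A2A or MCP library involved. In this hypothetical example, the `tools` dictionary stands in for what an MCP server would supply, and the `peers` dictionary stands in for agents reachable over A2A; the `Agent` class, the handler functions, and the policy value are all made up for illustration.

```python
from typing import Callable, Dict


class Agent:
    """Toy agent: `tools` mimics MCP-style tool access, `peers` mimics A2A-style delegation."""

    def __init__(self, name: str, handler: Callable[["Agent", str], str]):
        self.name = name
        self.handler = handler
        self.tools: Dict[str, Callable[[str], str]] = {}  # stand-in for MCP tools
        self.peers: Dict[str, "Agent"] = {}               # stand-in for A2A agents

    def use_tool(self, tool: str, arg: str) -> str:
        return self.tools[tool](arg)

    def delegate(self, peer: str, request: str) -> str:
        return self.peers[peer].handle(request)

    def handle(self, request: str) -> str:
        return self.handler(self, request)


def reimbursement_handler(agent: Agent, request: str) -> str:
    # Tool/data access: the part MCP is meant to cover.
    limit = agent.use_tool("policy_lookup", "meals")
    return f"{request}: checked against limit {limit}"


def assistant_handler(agent: Agent, request: str) -> str:
    # Agent-to-agent call: the part A2A is meant to cover.
    return agent.delegate("reimbursement", request)


reimbursement = Agent("reimbursement", reimbursement_handler)
reimbursement.tools["policy_lookup"] = lambda category: {"meals": "50 USD"}.get(category, "unknown")

assistant = Agent("assistant", assistant_handler)
assistant.peers["reimbursement"] = reimbursement

result = assistant.handle("lunch receipt")
print(result)
```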

To make the concept concrete, the walkthrough breaks A2A into four building blocks: the agent card (a JSON “business card” describing what an agent does and how to reach it), the A2A server (the running service that receives requests and returns outputs), the A2A client (the requester that reads agent cards, packages tasks, and collects responses), and the A2A task (a single unit of work with a lifecycle that runs from submitted to finished). The task concept is emphasized as the uniform way to track prompts and jobs across systems.
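The task lifecycle can be illustrated with a small in-memory sketch. Only "submitted" and a finished state come from the description above; the intermediate state names (`working`, `input-required`), the `Task` and `EchoServer` classes, and the `send` helper are assumptions made for this example, and no network or real A2A library is involved.

```python
import uuid
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"                # assumed intermediate state
    INPUT_REQUIRED = "input-required"  # assumed state for follow-up questions
    COMPLETED = "completed"


@dataclass
class Task:
    """A single unit of work that records every state it passes through."""
    prompt: str
    id: str = field(default_factory=lambda: uuid.uuid4().hex)
    state: TaskState = TaskState.SUBMITTED
    result: Optional[str] = None
    history: List[TaskState] = field(default_factory=lambda: [TaskState.SUBMITTED])

    def move_to(self, state: TaskState) -> None:
        self.state = state
        self.history.append(state)


class EchoServer:
    """Toy server: upper-cases the prompt, or asks a follow-up if it is empty."""

    def handle(self, task: Task) -> Task:
        task.move_to(TaskState.WORKING)
        if not task.prompt.strip():
            task.result = "What should I work on?"
            task.move_to(TaskState.INPUT_REQUIRED)
            return task
        task.result = task.prompt.upper()
        task.move_to(TaskState.COMPLETED)
        return task


def send(server: EchoServer, prompt: str) -> Task:
    """Toy client: packages a prompt as a task and collects the response."""
    return server.handle(Task(prompt))


done = send(EchoServer(), "draw a cat")
print(done.id, [state.value for state in done.history], done.result)
```

Because every task carries its own identifier and state history, an orchestrator or a developer debugging a workflow can tell whether a job is still running, waiting on input, or finished without knowing anything about the remote agent's internals.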

The practical demo uses Google’s open-source A2A repository and shows local setup steps: cloning the repo, creating a Python environment with Conda, installing dependencies with uv, and running sample agents. Two example agents are brought up—one described as an image generator and another as a reimbursement handler—each exposed on local ports. A lightweight UI then registers both agents by their addresses, lists available agents, and routes user requests to the right remote agent via A2A.
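The register, list, and route flow of the demo UI can be approximated as follows. This is a sketch under stated assumptions: the `AgentRegistry` class, the localhost ports, and the keyword-matching rule are all hypothetical stand-ins, and the actual demo may choose the target agent in a different way.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class RemoteAgent:
    name: str
    address: str                 # where the agent is exposed (made-up ports below)
    keywords: Tuple[str, ...]    # toy routing hints, not part of A2A


class AgentRegistry:
    """Keeps track of registered agents and picks one for each request."""

    def __init__(self) -> None:
        self._agents: Dict[str, RemoteAgent] = {}

    def register(self, agent: RemoteAgent) -> None:
        self._agents[agent.address] = agent

    def list_agents(self) -> List[str]:
        return sorted(agent.name for agent in self._agents.values())

    def route(self, request: str) -> Optional[RemoteAgent]:
        # Naive keyword match, used here only as a stand-in for real routing logic.
        text = request.lower()
        for agent in self._agents.values():
            if any(keyword in text for keyword in agent.keywords):
                return agent
        return None


registry = AgentRegistry()
registry.register(RemoteAgent("image-generator", "http://localhost:10001", ("image", "draw")))
registry.register(RemoteAgent("reimbursement", "http://localhost:10002", ("reimburse", "expense")))

print(registry.list_agents())
target = registry.route("Please reimburse my taxi expense")
print(target.name if target else "no matching agent")
```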

The takeaway is less about the demo’s specific agents and more about the interoperability mechanism. The setup is presented as “day zero” for a standard that should reduce integration friction as the ecosystem grows. The walkthrough also highlights that early tooling may be rough (UI quirks and request frequency tuning), but the underlying protocol—standardized endpoints, discovery via agent cards, and task-based communication—is treated as the foundational step that could make multi-agent workflows across frameworks routine.

Cornell Notes

Google’s Agent-to-Agent (A2A) protocol is designed to standardize how AI agents communicate across different frameworks and vendors. Compatible agents expose a single HTTP endpoint and an “agent card” in JSON, letting an A2A client discover them and send “A2A tasks” for execution. The protocol is organized around four concepts: agent card, A2A server, A2A client, and A2A task, which tracks work from submission through completion. A2A is positioned as complementary to MCP: MCP connects tools and data to an agent, while A2A connects agents to other agents. This matters because it reduces the integration chaos that currently forces custom, framework-specific glue code.

What problem does A2A try to solve in the AI agent ecosystem?

How does an A2A-compatible agent advertise itself and become discoverable?

What roles do the A2A server and A2A client play?

Why is the A2A task concept important?

How does A2A differ from MCP, and how can they work together?

What does the demo setup demonstrate about A2A in practice?

Review Questions

- If you wanted to integrate a third-party agent into your system using A2A, what two things must that agent expose?

- Explain how A2A and MCP would be used together in a workflow where one agent needs external tools and also needs to delegate work to another agent.

- What lifecycle states does an A2A task move through, and why would that matter for debugging or orchestration?

Key Points

1. A2A is a standardized agent-to-agent protocol from Google that aims to eliminate custom, framework-specific integrations between AI agents.

2. Compatible agents expose a single HTTP endpoint plus a JSON agent card for discovery and routing.

3. A2A communication is organized around four concepts: agent card, A2A server, A2A client, and A2A task.

4. A2A focuses on agent-to-agent interoperability, while MCP focuses on tool/data access; the two are meant to complement each other.

5. In a combined architecture, an agent can use A2A to delegate to other agents and MCP to fetch tools, prompts, and resources those agents rely on.

6. The demo emphasizes that the specific agents are less important than the standardized mechanism for discovery, task submission, and result retrieval.