Agent HQ by GitHub

Based on Sam Witteveen's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Agent HQ is positioned as an operations-and-governance layer where AI agents can be assigned tasks, supervised, and restricted by access controls.

Briefing

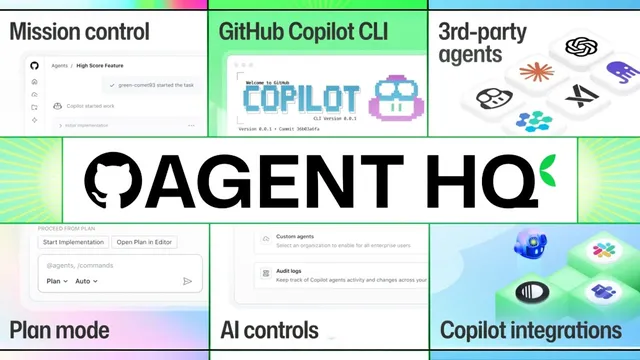

GitHub’s Universe pitch centers on a shift from “AI that helps write code” to “AI that runs software work under governance.” The centerpiece is Agent HQ: a control-and-monitoring layer where multiple AI coding agents can be assigned tasks, supervised, and granted—or denied—access to code and other resources. Rather than treating agents as isolated tools, GitHub positions Agent HQ as the operational “home” for an agent workforce, complete with human-in-the-loop oversight to prevent runaway behavior.

Agent HQ is framed as multi-agent and multi-vendor from day one. GitHub signals that organizations won’t be locked into a single model provider by enabling integration with agents from companies such as Anthropic and Google, and even startups like Cognition. Anthropic’s leadership appears in the messaging as well, with an emphasis on how Claude Code’s agent capabilities can be used and deployed through Agent HQ. The control surface isn’t just task assignment; it also includes “house rules” and compliance mechanisms, described in terms of an agents markdown doc (apparently an AGENTS.md-style file) and other configuration options, so that different agents follow consistent standards across an organization’s agent suite, not just within one repository.

Security and administration are treated as first-class requirements. For organizations, Agent HQ is presented as an admin-controlled system for enforcing security compliance and controlling which repositories agents can use. The underlying concept extends beyond code: repos can contain any kind of documents, enabling examples like giving an agent access to brand-standard guidelines so updates remain consistent with brand requirements. In practice, this suggests a workflow where agents operate on structured organizational knowledge while staying within permission boundaries.
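The permission-boundary idea above can be illustrated with a minimal sketch. This is not Agent HQ's API or data model; `AgentPolicy`, `can_access`, and the repository names are hypothetical and only show what a deny-by-default repository allowlist might look like.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy; not an Agent HQ data structure."""
    agent: str
    allowed_repos: frozenset


def can_access(policy: AgentPolicy, repo: str) -> bool:
    """Deny by default: an agent may only use repos on its allowlist."""
    return repo in policy.allowed_repos


# Illustrative names only: a docs agent limited to brand and website repos.
docs_agent = AgentPolicy(
    agent="docs-agent",
    allowed_repos=frozenset({"acme/brand-guidelines", "acme/website"}),
)

print(can_access(docs_agent, "acme/brand-guidelines"))  # True
print(can_access(docs_agent, "acme/payments-service"))  # False
```

The allowlist is deny-by-default, so a repository an admin has not explicitly granted is out of reach for the agent.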

Beyond Agent HQ, the broader theme is that AI is becoming embedded across the software development life cycle. GitHub’s messaging moves from the earlier “AI as a typing assistant” framing toward AI participating in planning, implementation, refactoring, and review, more like a junior engineer managed by humans than a standalone code generator. GitHub also points to planning tools such as GitHub Spec Kit, positioning AI as part of how work is specified before code is written.

A third pillar targets quality and security hardening. GitHub acknowledges a growing concern that many agent-generated outputs amount to “slop code”: even when much of the code is usable, it still needs review for quality improvements and—critically—security vulnerabilities. The governance angle is that organizations can’t scale agent usage without auditability and measurable ROI. Agent HQ’s dashboarding and metrics are positioned to answer executive questions: who is using agents, which pull requests get accepted, how much leverage teams gain, and which usage patterns correlate with production-ready outcomes. GitHub’s strategic bet is that it doesn’t need to win every single-agent race; it aims to become the central operations layer that runs, monitors, and governs agents from many providers—turning AI coding into something directors can audit, measure, and trust.
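As a rough illustration of the kind of metric mentioned above, pull request acceptance per agent could be computed as follows. The record format, agent names, and the `acceptance_rates` helper are assumptions made for this sketch; the source does not describe how Agent HQ calculates or exposes its metrics.

```python
from collections import defaultdict

# Hypothetical records: (agent name, whether the pull request was merged).
pull_requests = [
    ("agent-a", True),
    ("agent-a", False),
    ("agent-a", True),
    ("agent-b", True),
    ("agent-b", True),
]


def acceptance_rates(records):
    """Return {agent: merged PRs / total PRs} for each agent."""
    totals = defaultdict(int)
    merged = defaultdict(int)
    for agent, was_merged in records:
        totals[agent] += 1
        if was_merged:
            merged[agent] += 1
    return {agent: merged[agent] / totals[agent] for agent in totals}


rates = acceptance_rates(pull_requests)
for agent, rate in sorted(rates.items()):
    print(f"{agent}: {rate:.0%}")
```

A real dashboard would presumably draw on richer signals (review comments, reverts, security findings), but the acceptance ratio is the simplest of the measures the briefing names.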

Cornell Notes

GitHub is pushing Agent HQ as an “operations layer” for an organization’s AI agent workforce, not just a smarter coding assistant. Agent HQ assigns work to multiple agents, supervises them, and controls access to code and resources, with human-in-the-loop oversight to reduce the risk of agents going off the rails. The platform is designed to be multi-agent and multi-vendor, integrating agents from providers like Anthropic and Google and even startups such as Cognition. GitHub also ties the system to governance: centralized policies, audit trails, code quality scoring, and dashboards that track adoption and outcomes like pull request acceptance. The goal is to make AI participation across planning, implementation, refactoring, and review auditable and measurable for leadership.

What is Agent HQ, and why does it matter compared with “AI coding assistants” that only generate code?

How does GitHub try to avoid vendor lock-in with Agent HQ?

What mechanisms are described for enforcing “house rules” and compliance across agents?

How does Agent HQ handle security and organizational permissions?

Why does GitHub focus so heavily on code quality and security review?

What metrics and governance capabilities are meant to satisfy leadership concerns about ROI and auditability?

Review Questions

- How does Agent HQ’s permissioning and human-in-the-loop supervision change the risk profile compared with using agents ad hoc?

- What does “multi-vendor” mean in the context of Agent HQ, and which examples of providers are mentioned?

- Why does GitHub emphasize code quality scoring and audit trails when discussing AI agents in production workflows?

Key Points

1. Agent HQ is positioned as an operations-and-governance layer where AI agents can be assigned tasks, supervised, and restricted by access controls.

2. GitHub frames Agent HQ as multi-agent and multi-vendor, integrating agents from providers including Anthropic, Google, and startups like Cognition.

3. “House rules” and compliance settings are meant to enforce consistent behavior across an organization’s agent suite, not just within a single project.

4. Admin controls extend to security compliance and repository-level permissions, with examples that include agents working from brand-standard documentation.

5. GitHub argues AI should be embedded across the software development life cycle (planning, implementation, refactoring, and review) rather than acting only as a code generator.

6. A major governance focus is preventing “slop code” from reaching production by combining review, security checks, and code quality scoring.

7. Dashboards and metrics are designed to make agent usage auditable and measurable for leadership, including pull request acceptance and ROI-style leverage tracking.