AI Detector Bypass - Learn to Manually Humanize AI Content with Me!

Based on a YouTube video by qualitative researcher Dr Kriukow. If you find this useful, support the original creator by watching, liking, and subscribing to their channel.


Briefing

AI detectors don’t primarily flag writing for containing particular “AI words.” Instead, they look for statistical and structural patterns, especially low variation in how sentences are built, how ideas are connected, and how confidently claims are phrased. The practical takeaway is blunt: swapping out a few “favorite phrases” won’t work. To reduce detection risk, writers need to deliberately change sentence structure, add academic-style uncertainty, and break up the uniform rhythm that language models tend to produce.

The most frequently cited signals are perplexity and burstiness. Perplexity measures how “surprised” a language model is by a text: the lower the perplexity, the more predictable the wording. Human writing tends to be less predictable, while AI output often follows statistically expected paths. Burstiness tracks variation: human sentences typically vary in length, structure, and word choice, whereas AI text can feel uniform. Detectors also pay attention to grammatical polish and stylistic consistency; AI-generated prose is often grammatically flawless and stylistically steady, which can read as suspiciously even.
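Neither signal has a single standard formula shared by all detectors, so the sketch below is only illustrative. Perplexity is the exponentiated average negative log-probability of the tokens, PPL = exp(-(1/N) Σ log p(w_i)); here p comes from a toy add-one-smoothed unigram model fitted on a reference string, whereas real detectors use large neural language models. For burstiness, the sketch assumes one simple proxy: the coefficient of variation of sentence lengths (standard deviation divided by mean). The function names and the naive regex sentence splitter are hypothetical choices, not taken from the video or from any detection tool.

```python
import math
import re
import statistics
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    PPL = exp(-(1/N) * sum(log p(w_i))). This toy model ignores word order;
    it only illustrates the formula, not how any real detector scores text.
    """
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no word tokens")
    counts = Counter(tokenize(reference))
    vocab_size = len(set(counts) | set(tokens))  # include unseen words for smoothing
    total = sum(counts.values())
    log_prob = sum(math.log((counts[w] + 1) / (total + vocab_size)) for w in tokens)
    return math.exp(-log_prob / len(tokens))


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split on ., ! or ? plus whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population std dev / mean).

    0.0 means every sentence has the same length; larger values mean more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    reference = "the data is clean the data is useful the model is accurate"
    print(unigram_perplexity("the data is clean", reference))
    print(unigram_perplexity("zebras juggle quietly", reference))

    uniform = "The model is accurate. The data is clean. The method is robust."
    varied = ("The model appears accurate. Yet the data, collected over two noisy "
              "years, may not be clean. Why?")
    print(burstiness(uniform), burstiness(varied))
```

Lower perplexity means the reference model found the wording more predictable, and a burstiness of 0.0 means every sentence has the same word count. Swapping in a real language model would change the numbers but not the perplexity formula.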

Beyond rhythm, detectors look for repetition and overused connectors. Common transition phrases such as therefore, however, and in conclusion can become a tell when they appear too often or in predictable patterns. Vocabulary is another factor: AI often sticks to “safe” academic wording and rarely uses colloquial language, which can make the text sound generic. Coherence and depth matter too. Even when its sentences are grammatical and superficially meaningful, AI writing may lack originality, offer hollow argumentation, or make statements that sound plausible but add no real argumentative weight. Some detectors also compare output against large text corpora, looking for similarities to patterns seen in known AI-generated text.
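The connector signal described above can be approximated by counting transition phrases. The sketch below is a minimal illustration under stated assumptions: the connector list (only the first three entries come from the text; the rest are assumed additions), the whole-word matching, and the per-sentence rate are hypothetical choices, not a documented feature of any detector.

```python
import re

# Hypothetical connector list: "therefore", "however" and "in conclusion" come
# from the text above; the remaining entries are assumed additions.
CONNECTORS = ("therefore", "however", "in conclusion", "moreover", "furthermore", "additionally")


def connector_counts(text: str, connectors: tuple[str, ...] = CONNECTORS) -> dict[str, int]:
    """Count case-insensitive, whole-word occurrences of each connector."""
    lowered = text.lower()
    return {c: len(re.findall(r"\b" + re.escape(c) + r"\b", lowered)) for c in connectors}


def connectors_per_sentence(text: str, connectors: tuple[str, ...] = CONNECTORS) -> float:
    """Average connector occurrences per sentence (naive split on ., ! or ?)."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    return sum(connector_counts(text, connectors).values()) / len(sentences)


if __name__ == "__main__":
    sample = ("However, the results are mixed. Therefore, more work is needed. "
              "In conclusion, the model helps.")
    print(connector_counts(sample))
    print(connectors_per_sentence(sample))
```

A rate of one connector per sentence, as in the sample, is the kind of predictable pattern the text describes; the source does not say what threshold counts as “too frequent.”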

The video’s core method for “humanizing” is to restructure text at the sentence level rather than edit individual words. One recommended technique is “intellectual hesitation”: replacing absolute claims with hedged academic language such as “appears,” “is likely,” “is suspected,” or “can play a role.” Another is adding subtle critique, that is, acknowledging inconsistencies or alternative perspectives instead of presenting a single, unqualified viewpoint. Writers are also urged to vary sentence openings and syntax. Instead of repeatedly starting with the same template (e.g., “This study shows…” or “Firstly…”), they should use introductory clauses, dependent clauses, or inverted structures to disrupt the model-like cadence.
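Repeated sentence openings, such as several sentences that all begin “This study shows…”, are easy to spot mechanically before rewriting. The sketch below is a minimal illustration, assuming that an “opening” means the first two words of a sentence and that a naive regex split is good enough to find sentence boundaries; the function name and those defaults are hypothetical.

```python
import re
from collections import Counter


def repeated_openers(text: str, n_words: int = 2, min_count: int = 2) -> dict[str, int]:
    """Return sentence openings (first `n_words` lowercase words) used at least `min_count` times."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openers = Counter(
        " ".join(re.findall(r"[a-z']+", s.lower())[:n_words]) for s in sentences
    )
    return {opener: count for opener, count in openers.items() if count >= min_count}


if __name__ == "__main__":
    draft = ("This study shows a clear link. This study shows a gap in the data. "
             "As the results suggest, the link may be weaker than expected.")
    print(repeated_openers(draft))
```

Each flagged opening is a candidate for the rewrites the text recommends, such as starting with an introductory or dependent clause.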

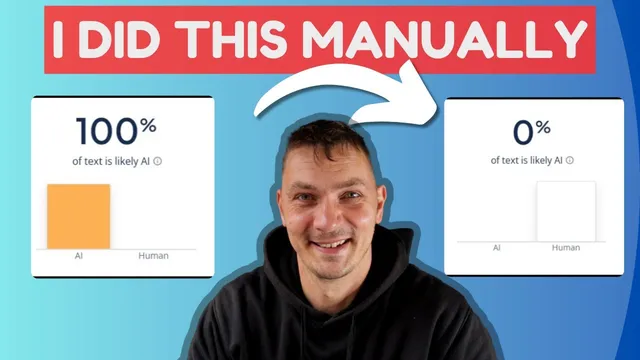

To make the advice concrete, the transcript walks through an example paragraph generated by ChatGPT and then flagged as “100% generated” by an AI-detection tool. The edits focus on changing sentence rhythm and logic flow: softening “plays a critical role” to “can play a critical role,” trimming vague or redundant phrases, simplifying overly packed lists, and avoiding near-duplicate sentence structures that mirror each other in length and form. The final revision also reorders elements (e.g., starting with “As…”) and combines ideas to reduce structural similarity between adjacent sentences.

The closing guidance is practical: read for meaning, then rewrite in one’s own words rather than trying to preserve the original phrasing. The goal isn’t to “trick” detectors with superficial substitutions; it’s to produce writing that reflects human variation in structure, uncertainty, and argumentative texture—especially in academic contexts where the bar for naturalness is higher.

Cornell Notes

AI detectors rely on more than keyword spotting. They look for statistical predictability (perplexity), low variation (burstiness), repetitive sentence structure, overused transitions, overly polished grammar, and generic “safe” vocabulary. They also flag writing that lacks genuine argumentative depth or originality, even when sentences sound academically correct. The transcript’s main fix is structural: introduce academic hedging (“can,” “appears,” “is likely”), add subtle critique, and vary sentence openings and syntax using clauses and reordering. An example paragraph flagged as 100% AI-generated is revised by softening absolutes, trimming vague lists, simplifying dense phrasing, and avoiding near-identical sentence templates.

What signals do AI detectors use besides “AI vocabulary”?

How do “perplexity” and “burstiness” translate into writing choices?

Why do hedging and “intellectual hesitation” matter for detection risk?

What does varying sentence openings and syntax look like in practice?

What specific editing moves were used on the flagged example paragraph?

What is the transcript’s recommended workflow for rewriting?

Review Questions

- Which detector signals in the transcript are structural (rhythm/variation) rather than lexical (word choice)?

- Give two examples of hedging language and explain how each changes the certainty of an academic claim.

- What kinds of sentence-level changes most directly disrupt the “uniform template” pattern described in the transcript?

Key Points

1. AI detectors look for structural and statistical patterns, especially low variation, not just specific “AI words.”

2. Perplexity and burstiness are used as key indicators: human writing is less predictable and more varied in sentence structure and length.

3. Over-polished grammar, consistent style, and repeated sentence starters can increase detection risk even when sentences are correct.

4. Overused transitions and connectors (e.g., therefore/however/in conclusion) become a tell when they appear too predictably.

5. Academic “intellectual hesitation” (hedging with appears/is likely/is suspected) helps replace AI-like absolutes.

6. Subtle critique and alternative perspectives can make argumentation feel less one-note and more human.

7. The most effective fixes come from rewriting for meaning and restructuring sentences to avoid near-duplicate templates.