AI Frontiers: Jesper Hvirring Henriksen (OpenAI DevDay)

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

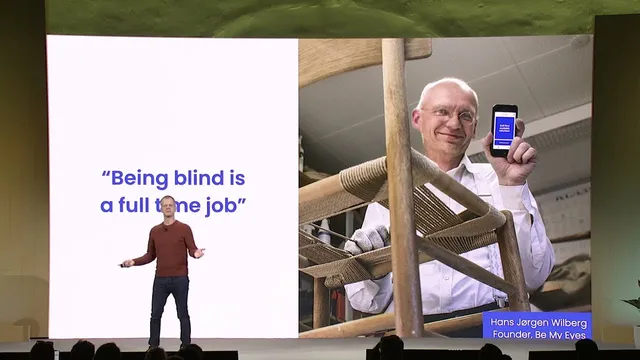

Be My Eyes launched “Be My AI” on GPT-4V to give blind and low-vision users an always-available alternative to volunteer video calls.

Briefing

Be My Eyes has launched “Be My AI” on GPT-4V, giving blind and low-vision users an always-available visual assistant that describes images on demand, with no volunteer video call required. The core shift is independence: users can get help 24/7 when they would rather not rely on others, do not feel like talking to a stranger, or need a quick answer at an inconvenient time. The feature sits on top of Be My Eyes’ existing service, in which more than half a million users are supported by more than 7 million volunteers, and adds an AI option for moments when human assistance is not ideal.

The practical impact centers on image understanding in everyday life and on the web. Many apps and websites are accessible at a basic level, yet their images often lack meaningful alt text, leaving screen reader users unable to interpret photos and graphics. With Be My AI, users can request thorough descriptions of images they encounter online or inside apps, including photos shared in group chats and website graphics that a screen reader cannot convey. One cited example is a chart on a US government site whose only text is generic (“Bar chart showing rising global temperatures since 1880”), while the AI-generated description gives a far more usable interpretation. The talk frames the difference as “another world” for users who previously had no meaningful access to the visual content.
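To make the alt-text gap concrete, here is a minimal sketch of how a page could be scanned for images that a screen reader cannot describe. It is not part of Be My AI or anything shown in the talk: the `GENERIC_ALT` word list and the function name `images_needing_description` are hypothetical, and the check is deliberately crude.

```python
from html.parser import HTMLParser

# Hypothetical placeholder values that carry no real description.
# Note: an empty alt ("") is valid for purely decorative images; this
# sketch flags it anyway, so a human would still need to review the results.
GENERIC_ALT = {"", "image", "photo", "picture", "graphic", "chart"}


class AltTextAudit(HTMLParser):
    """Collects the src of every <img> whose alt text is missing or generic."""

    def __init__(self):
        super().__init__()
        self.needs_description = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        # A missing alt attribute and a valueless one both normalize to "".
        alt = (attr_map.get("alt") or "").strip().lower()
        if alt in GENERIC_ALT:
            self.needs_description.append(attr_map.get("src"))


def images_needing_description(html):
    """Return the src values of images that lack meaningful alt text."""
    audit = AltTextAudit()
    audit.feed(html)
    return audit.needs_description
```

Images flagged this way are the ones for which a user would most likely turn to an AI-generated description.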

The rollout also emphasizes that the descriptions are not only accurate but often conversational and lightly witty, which matters for usability. Beta testing results are presented through user stories and usage metrics. One long-time user, Caroline, had made about two volunteer calls per year; after becoming a beta tester in March she requested more than 700 image descriptions. Another beta tester, Lucy Edwards, demonstrates the workflow in daily tasks: checking eggs for shells, reading an oat milk expiry date, sorting laundry, identifying food on a plate, interpreting product packaging, and getting descriptions of Instagram photos that have none.

Operationally, Be My Eyes describes a staged deployment: a small beta group in March when GPT-4 launched, followed by a broader iOS rollout about six weeks before the talk. Usage has reached roughly a million image descriptions per month, with satisfaction ratings around 95% after accounting for downtime and system errors. Language support has expanded to about 36 languages, attributed largely to prompting the model to respond in the user’s language.
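The language expansion described above comes down to a prompt instruction rather than per-language engineering. A minimal sketch of that idea follows, assuming a chat-style message list with a system prompt and an image attachment. The function name `build_messages`, the prompt wording, and the default question are illustrative assumptions, not Be My Eyes’ actual prompt, and no API call is made.

```python
def build_messages(user_language, image_url,
                   question="Describe this image in detail."):
    """Build a chat-style request that asks for a reply in the user's language.

    The message layout loosely follows the format used by vision-capable
    chat models; the exact schema expected by a given API is an assumption.
    """
    system = (
        "You describe images for blind and low-vision users. "
        f"Always respond in {user_language}."
    )
    return [
        {"role": "system", "content": system},
        {
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {"url": image_url}},
            ],
        },
    ]


# Example: the same code path serves any language the model can write.
messages = build_messages("Danish", "https://example.com/photo.jpg")
```

Supporting another language in this sketch means passing a different `user_language` value, which is consistent with the talk’s point that most of the roughly 36 languages came from prompting alone.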

Beyond consumer use, Be My AI is also deployed in enterprise support workflows, including Microsoft’s Disability Answer Desks. Users can start with a chatbot for computer troubleshooting rather than escalating to a call; the service reports that 9 out of 10 users who begin with chat do not escalate. The broader conclusion is that AI systems that can see, hear, and respond in human-like ways are expected to materially improve accessibility in assistive technologies—turning visual understanding into a practical, scalable support layer for people who need it most.

Cornell Notes

Be My Eyes added “Be My AI” built on GPT-4V to provide blind and low-vision users an always-on alternative to volunteer video calls. The service focuses on turning images—both in daily life and on the web—into detailed, usable descriptions, especially where websites and apps lack meaningful alt text. Early deployments show high engagement (about a million image descriptions per month) and strong satisfaction (around 95% after discounting downtime/system errors). Language support expanded to roughly 36 languages, largely by prompting the model to answer in the user’s language. The same capability is also used in enterprise support, including Microsoft’s Disability Answer Desks, where most users resolve issues via chat without escalating to calls.

What problem does Be My AI solve that volunteer video calls can’t always?

Why does image description matter for accessibility on the web and in apps?

What does the transcript suggest about the quality of GPT-4V descriptions?

How quickly did Be My AI scale, and what usage/satisfaction numbers were reported?

How did language support expand, and what was the approach?

How is Be My AI used outside consumer assistance?

Review Questions

- How does the lack of meaningful alt text affect accessibility, and how does Be My AI address that gap?

- What metrics were used to describe Be My AI’s early performance (usage volume and satisfaction), and what do they imply about user adoption?

- In what ways does the transcript connect visual AI capabilities to both consumer accessibility and enterprise support workflows?

Key Points

1. Be My Eyes launched “Be My AI” on GPT-4V to give blind and low-vision users an always-available alternative to volunteer video calls.

2. The service targets situations where independence matters: users can get help without scheduling or relying on others.

3. Be My AI improves access to images online and in apps, especially when images lack meaningful alt text for screen readers.

4. Reported early results include about a million image descriptions per month and roughly 95% satisfaction after discounting downtime/system errors.

5. Language support expanded to about 36 languages by prompting the model to respond in the user’s language.

6. Be My AI is also used in enterprise support, including Microsoft’s Disability Answer Desks, where most users resolve issues via chat without escalating to calls.