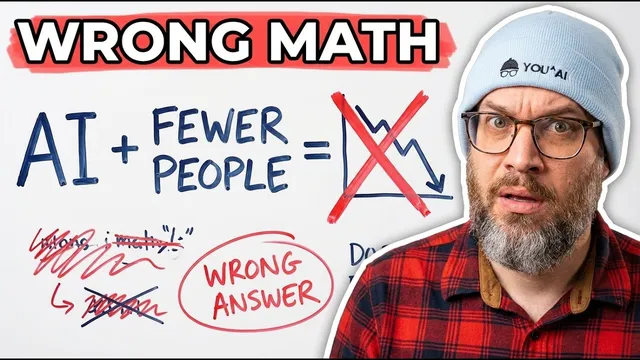

AI Made Every Company 10x More Productive. The Ones Cutting Headcount Are Telling on Themselves.

Based on AI News & Strategy Daily | Nate B Jones's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Treat execution cost as the core constraint; AI efficiency gains should expand opportunity rather than only reduce headcount.

Briefing

AI is compressing the cost of turning ideas into working products—so the biggest strategic mistake is treating the future as a fixed “headcount pie.” Instead of asking how many jobs can be cut, leaders are being urged to ask what people can do differently now that execution is dramatically cheaper and faster. The payoff is not just productivity gains; it’s a shift in what companies can attempt, how quickly they can learn, and who gets to build.

A central framing comes from “Jevons’ paradox”: when a resource can be used more efficiently, total consumption of it tends to rise rather than fall. The argument applies to work because AI lowers the “execution cost” of intelligence by an order of magnitude or more. That change should expand demand for software and insight, not shrink it. This mirrors past tech expansions that followed cheaper key inputs: steel enabled skyscrapers and railroads; computing enabled personal computing, the internet, mobile, and cloud; distribution enabled new media categories. The implication is blunt: layoffs may happen, but the winners will be the organizations that bet on expanded opportunity rather than optimizing for efficiency alone.
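The Jevons argument can be made concrete with a little arithmetic. The sketch below assumes a constant-elasticity demand curve, which is a textbook simplification and not something the transcript specifies; the elasticity values and the `scale` parameter are illustrative assumptions. It shows that when demand is elastic (elasticity above 1), a 10x drop in the cost of execution raises total spending on that execution, while inelastic demand produces the “fixed pie” outcome.

```python
def demand(price: float, elasticity: float, scale: float = 100.0) -> float:
    """Constant-elasticity demand: quantity = scale * price ** (-elasticity)."""
    return scale * price ** (-elasticity)


def total_spend(price: float, elasticity: float, scale: float = 100.0) -> float:
    """Total spend on the resource: unit price times quantity demanded."""
    return price * demand(price, elasticity, scale)


if __name__ == "__main__":
    # Hypothetical scenario: the unit cost of execution falls from 1.0 to 0.1,
    # matching the "order of magnitude" drop described in the text.
    for elasticity in (0.5, 1.5):  # assumed values, for illustration only
        before = total_spend(1.0, elasticity)
        after = total_spend(0.1, elasticity)
        trend = "rises" if after > before else "falls"
        print(f"elasticity={elasticity}: spend {trend} from {before:.1f} to {after:.1f}")
```

Under these assumptions, the headcount-reduction view amounts to betting that demand for software and insight is inelastic; the transcript's position is that it is elastic.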

Six “people-focused unlocks” outline what that opportunity looks like in practice.

First, teams need to “go fast.” AI can compress product iteration cycles from months to days, changing strategy from “pick the best bet” to “run many learning cycles.” The transcript points to Cursor’s February 2026 cloud agents update as an example: developers can spin up as many as 20 parallel agents on isolated cloud VMs, with a growing share of code and pull requests generated autonomously. Faster iteration shifts the bottleneck from building to deciding, moving the question from “can we build it?” to “should we build it?” It also requires leaders to empower entrepreneurial behavior so that teams experiment without fearing punishment.

Second, the “equation for builders” changes as the translation layer between domain knowledge and software disappears. Domain experts such as doctors, logistics managers, and teachers can describe what they need and have agents build it quickly. Tools such as Lovable, Bolt, and Replit are cited as early signals that production-quality development is moving toward non-coders, which could expand the builder base from tens of millions of developers to hundreds of millions.

Third, software quality becomes the default. Agent-driven testing, security review, documentation, performance optimization, accessibility, and visual polish are framed as verifiable and increasingly routine, reducing the historical gap between top-tier teams and everyone else.

Fourth, “every company is going to be a platform.” Instead of treating integrations as painful bridges, organizations should assume agents will interact with open systems and build integrations proactively, turning platform strategy into something individual contributors (ICs) can execute quickly.

Fifth, the market for ambition expands because cheaper execution flips investment math. CFOs are urged to reconsider risk and roadmaps when experiments can be run more cheaply and failures cost less.
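A rough sketch of how that investment math flips, using a simple expected-value rule (success probability times payoff, minus build cost). Every number, the `Experiment` fields, and the `fundable` helper are hypothetical illustrations rather than figures from the transcript; the 0.1 cost multiplier stands in for an order-of-magnitude drop in execution cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    name: str
    p_success: float   # estimated probability the bet pays off
    payoff: float      # value created if it succeeds
    build_cost: float  # execution cost before any AI-driven savings


def expected_value(exp: Experiment, cost_multiplier: float = 1.0) -> float:
    """Expected payoff minus the (possibly reduced) cost of building it."""
    return exp.p_success * exp.payoff - exp.build_cost * cost_multiplier


def fundable(experiments, cost_multiplier: float = 1.0) -> list[str]:
    """Names of experiments whose expected value is positive."""
    return [e.name for e in experiments if expected_value(e, cost_multiplier) > 0]


# Hypothetical roadmap, for illustration only.
EXPERIMENTS = [
    Experiment("safe_feature", 0.80, 500_000, 200_000),
    Experiment("new_market", 0.20, 2_000_000, 600_000),
    Experiment("moonshot", 0.05, 5_000_000, 400_000),
    Experiment("long_shot", 0.01, 1_000_000, 300_000),
]

if __name__ == "__main__":
    print("at full cost:", fundable(EXPERIMENTS, 1.0))
    print("at 10x cheaper:", fundable(EXPERIMENTS, 0.1))
```

In this toy roadmap only the safe bet clears the bar at full cost, while two riskier bets become rational once execution is ten times cheaper. Some bets still fail the test, which is why cheaper execution changes the roadmap rather than removing the need to choose.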

Sixth, organizations must move at the speed of insight: once customer-relevant insight is reliable, teams should default to getting it into code rather than waiting on process, documentation, or approvals.

The closing claim is that these unlocks don’t require AGI or speculative breakthroughs. The hard part is human: redefining upskilling, changing incentives, and building new capabilities around vision, domain expertise, customer empathy, and creative execution—before the opportunity passes by.

Cornell Notes

AI’s real impact is lowering the cost of execution for intelligence, which should expand what companies can build rather than shrink the opportunity into a fixed headcount pie. The transcript argues that Jevons’ paradox applies to work: efficiency gains increase consumption, so demand for insight and software should rise. It then lays out six people-centered “unlocks”: go fast (more learning cycles), enable new builders (domain experts building directly), make quality the default (agent-driven testing and review), treat every company as a platform (proactive integrations), fund ambition (revised ROI math), and move at the speed of insight (default to shipping code). The stakes are strategic: the biggest challenge is not technical—it’s mindset, empowerment, and upskilling for roles that haven’t existed at this scale.

Why does the transcript reject “headcount reduction” as the main AI question?

What does “go fast” change about strategy and decision-making?

How does the “translation layer” concept change who can build software?

Why does the transcript say software quality will become the default?

What does “every company is going to be a platform” mean in an agent world?

What is “speed of insight,” and why is it different from “speed of execution”?

Review Questions

- Which constraint does the transcript treat as the real driver of the “fixed pie” narrative, and how does Jevons’ paradox support that view?

- Pick two of the six unlocks and explain how each changes a specific bottleneck in product development (e.g., building vs deciding, or process vs shipping).

- What kinds of human capabilities does the transcript say become more scarce—and therefore more valuable—when execution costs drop?

Key Points

1. Treat execution cost as the core constraint; AI efficiency gains should expand opportunity rather than only reduce headcount.

2. Use Jevons’ paradox to anticipate that cheaper intelligence increases overall consumption of products and services.

3. Compress iteration cycles to shift strategy from rare, high-stakes bets to frequent learning cycles and faster decision-making.

4. Unlock domain experts as builders by removing the lossy, expensive translation layer between expertise and software.

5. Make quality routine by using agent-driven testing, security review, documentation, and polish as standard procedure.

6. Adopt platform thinking across the organization by building integrations proactively so agents can operate in open systems.

7. Reframe investment and risk calculations: when execution is cheaper, ambition and experimentation become financially rational.