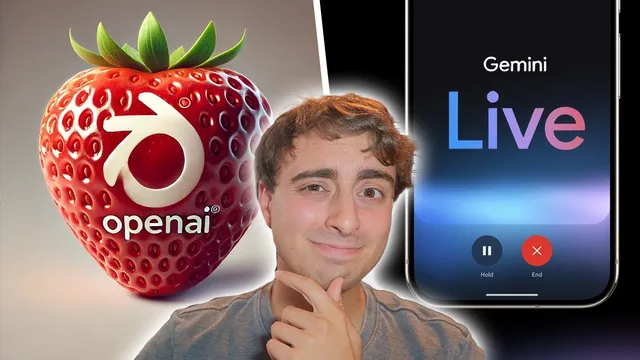

AI RECAP: Rumored GPT-4o Large Model & Gemini Live vs GPT-4o Advanced Voice

Based on MattVidPro's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

A swirl of “strawberry/Q*” rumors about OpenAI’s next reasoning model is colliding with concrete updates, yet the most important question remains unanswered: does any new model actually change what users can do inside ChatGPT? The speculation centers on a rumored “Q* / strawberry” architecture, described as a new reasoning engine meant to give large language models more human-like problem solving. A simple test illustrates today’s gap: asked how many “R” letters are in “strawberry” (the correct answer is three), a model often gives the wrong count, although it can sometimes correct itself when prompted to recheck.
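For reference, the ground truth in the strawberry test is trivial to compute deterministically: the word contains three r’s, at positions 3, 8 and 9. The short sketch below is only an illustration (the helper names are made up here, not taken from the video); it returns both the count and the positions, which makes it easy to check a model’s spelled-out, letter-by-letter answer.

```python
def count_letter(word: str, letter: str) -> int:
    """Case-insensitive count of one letter in a word."""
    return word.lower().count(letter.lower())


def letter_positions(word: str, letter: str) -> list[int]:
    """1-based positions of a letter, for checking a model's step-by-step reasoning."""
    target = letter.lower()
    return [i for i, ch in enumerate(word.lower(), start=1) if ch == target]


word = "strawberry"
print(count_letter(word, "R"))      # 3
print(letter_positions(word, "R"))  # [3, 8, 9]
```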

The hype feels more concrete because high-profile OpenAI figures appear to engage with strawberry-themed posts on X. Sam Altman replies “amazing” to a tweet from an account that uses strawberries as its branding, and other AI insiders amplify the attention. At the same time, the official ChatGPT account posts that a “new GPT-4o Omni model” is available in ChatGPT, but the video’s creator reports noticing no obvious differences in the app: no clear model switch, no access to the new “Advanced voice,” and no change in image generation, which is still tied to the DALL-E 3 API and the existing image tools.

Still, one prediction appears to land on time. The strawberry-themed account had claimed something would arrive from OpenAI at 10:00 a.m., and OpenAI did release an updated iteration of SWE-bench, a benchmark that evaluates how well AI systems solve real-world software tasks. That matters because it is a more developer-relevant yardstick than generic chat benchmarks. The same account also points to Thursday as a likely day for a “GPT-4o Omni large” release, suggesting OpenAI might pair a new model with the benchmark drop to back up claimed performance gains.
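To make “real-world software tasks” concrete: each SWE-bench task is drawn from a real GitHub issue, the model must produce a code patch, and the task counts as resolved only if the tests the reference fix makes pass now pass (the FAIL_TO_PASS set) and the previously passing tests still pass (the PASS_TO_PASS set). The headline score is the share of tasks resolved. The sketch below is a toy scorer under those assumptions; `TaskResult` and the example data are hypothetical and are not the official evaluation harness.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Hypothetical record of one task's test outcomes after applying a model's patch."""
    instance_id: str
    fail_to_pass: dict[str, bool]  # tests the fix should make pass -> passing after the patch?
    pass_to_pass: dict[str, bool]  # tests that already passed -> still passing after the patch?


def is_resolved(result: TaskResult) -> bool:
    # Resolved only if every target test now passes AND no previously passing test broke.
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolved_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks resolved, the kind of headline number SWE-bench reports."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)


# Toy data, invented for illustration only.
results = [
    TaskResult("toy-1", {"test_bug": True}, {"test_old": True}),   # fixed, nothing broke
    TaskResult("toy-2", {"test_bug": True}, {"test_old": False}),  # fixed, but caused a regression
    TaskResult("toy-3", {"test_bug": False}, {"test_old": True}),  # bug not fixed
]
print(f"{resolved_rate(results):.1%}")  # 33.3%
```

The regression case is the point of the design: a patch that fixes the issue but breaks existing behavior scores the same as no fix at all, which is why the benchmark is closer to how developers judge code than a chat-style rating.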

Even if a Thursday release arrives, the transcript argues that “better reasoning” won’t be convincing without a clear explanation of how it works and when it beats alternatives like prompting tricks or retrieval/search-based systems such as Perplexity. The core frustration is transparency: users want to know what’s actually changing under the hood, not just what’s being teased.

On the Google side, the day’s major announcement is “Gemini Live,” positioned as a voice-first experience on Android. The video’s creator demonstrates a Gemini Live session with several selectable voices and a conversation that brainstorms science activities, then draws a key distinction: Gemini Live appears to convert speech to text, generate a text response, and convert that text back to audio. If so, it is not “native” multimodal voice in the way OpenAI’s Advanced Voice is pitched. The Gemini Live feature set also includes Android integration and small utilities (such as a calendar extension that reads concert flyers), but nothing is presented as a leap that would justify switching platforms.

Overall, the transcript frames OpenAI’s strawberry/Q* mystery as the more consequential story, while Google’s Gemini Live looks incremental compared with what is already available elsewhere.
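The cascaded design the video attributes to Gemini Live can be sketched in a few lines. This is a toy model under loud assumptions: every stage is a hypothetical stub (none of these are Google or OpenAI APIs), and `tone` stands in for anything that lives in the audio but not in the words. The sketch shows the consequence the video points to: only the transcript crosses the text bottleneck between stages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AudioClip:
    """Toy stand-in for audio: the spoken words plus one non-verbal attribute."""
    words: str
    tone: str  # e.g. "excited" or "whispering"; real audio carries this, a transcript does not


def speech_to_text(clip: AudioClip) -> str:
    # Stage 1 (hypothetical): transcription keeps the words and drops the tone.
    return clip.words


def text_model(prompt: str) -> str:
    # Stage 2 (hypothetical): placeholder for a text-only language model.
    return f"Reply to: {prompt}"


def text_to_speech(text: str, voice: str) -> AudioClip:
    # Stage 3 (hypothetical): speak the reply in a preset voice chosen in settings.
    return AudioClip(words=text, tone=voice)


def cascaded_turn(clip: AudioClip, voice: str = "neutral") -> AudioClip:
    """One conversational turn through a speech -> text -> text -> speech cascade."""
    transcript = speech_to_text(clip)
    reply = text_model(transcript)
    return text_to_speech(reply, voice)


excited = cascaded_turn(AudioClip("Suggest a science activity", tone="excited"))
whisper = cascaded_turn(AudioClip("Suggest a science activity", tone="whispering"))
print(excited == whisper)  # True: the user's tone never reached the model
```

A native speech-to-speech model would take the audio itself as input, so in principle the tone could shape the reply; that contrast is what the video means by “native” multimodal voice. Whether Gemini Live really works as a cascade is the video’s inference from the demo.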

Cornell Notes

Rumors about OpenAI’s “Q* / strawberry” architecture claim a new reasoning engine could make large language models solve problems more like humans. A common illustration is the “strawberry” letter-count prompt, where models often give wrong answers unless users prompt for self-correction. Despite prominent OpenAI figures engaging with strawberry-themed posts and a claim of an upcoming “GPT-4o Omni large” on Thursday, reported testing finds no obvious model changes in ChatGPT, and image generation still appears tied to the existing DALL-E 3-based tools. OpenAI did release an updated SWE-bench benchmark at the predicted time, a meaningful signal for developers because it targets real software task performance. Google’s Gemini Live launches on Android as a voice experience with multiple selectable voices, but it appears to rely on speech-to-text and text-to-speech rather than native multimodal voice, making it less of a direct competitor to advanced voice systems.

What is the “strawberry/Q*” reasoning claim, and how is it tested in the transcript?

Why do strawberry-themed posts from prominent accounts matter to the rumor’s credibility?

What concrete OpenAI update lands at the predicted time, and why is it significant?

What does the transcript claim about “GPT-4o Omni” and user-visible changes in ChatGPT?

How does Gemini Live differ from OpenAI’s advanced voice approach, according to the transcript?

Review Questions

- What specific prompt example is used to illustrate the gap between current LLM behavior and human-like reasoning, and why does it matter?

- Which OpenAI release mentioned in the transcript is tied to developer evaluation (benchmarks), and what does SWE-bench measure?

- According to the transcript, what technical pipeline does Gemini Live appear to use, and how does that affect its claim to “advanced voice” capability?

Key Points

1. The “Q* / strawberry” rumor claims a new reasoning architecture could improve first-pass problem solving, illustrated by a letter-count prompt that often fails today.

2. Prominent OpenAI engagement with strawberry-themed posts increases attention, but reported user testing finds no obvious ChatGPT model changes yet.

3. OpenAI’s release of an updated SWE-bench at the predicted time is a concrete, developer-relevant signal because it targets real software task performance.

4. The video’s creator reports no visible differences in GPT-4o Omni behavior, no access to Advanced voice, and no clear change in image generation, which still runs through DALL-E 3.

5. The Thursday “GPT-4o Omni large” claim remains unverified in the transcript, with skepticism focused on the need for transparent explanations and measurable advantages.

6. Google’s Gemini Live launches on Android with multiple voices and useful utilities, but it appears to rely on speech-to-text and text-to-speech rather than native multimodal voice.

7. Gemini Live is framed as incremental compared with advanced voice systems, while the OpenAI strawberry mystery is treated as the more consequential storyline.