AI’s “Intelligence Explosion” Is Coming. Here’s What That Means.

Based on Sabine Hossenfelder's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Recursive self-improvement is framed as a loop where AI systems generate modifications, evaluate them, and retain improvements—potentially accelerating progress beyond standard training cycles.

Briefing

AI progress may look slow right now, but a growing cluster of research is pushing toward a scenario often dubbed an “intelligence explosion”—a rapid jump in capability once systems can improve themselves. The core mechanism behind that idea is recursive self-improvement: models that can modify their own methods, parameters, or even code, then keep the changes that perform best. If that loop tightens, capability gains could accelerate far beyond today’s incremental training cycles, with major implications for how quickly AI systems could advance—and how hard it may be to predict their behavior.

The transcript traces how that concept has shifted from sci-fi “singularity” language to more technical pathways. Eric Schmidt, then Google’s CEO, is quoted describing an industry expectation that within roughly five years AI systems would begin writing their own code—taking existing code and making it better, creating a “change in slope” in progress. While that timeline is uncertain, recent work suggests the underlying ingredients are already taking shape.

One concrete example comes from DeepMind’s AlphaEvolve, announced in May. Instead of directly rewriting its own code, AlphaEvolve improves code using rules modeled on natural evolution: it introduces random “mutations,” evaluates which variants perform best, and retains the winners. DeepMind reports that AlphaEvolve discovered a better way to multiply matrices—an operation central to how large language models work. The payoff was practical: a “1% reduction in Gemini’s training time,” tying the research to real compute efficiency rather than just theoretical novelty.

Other efforts move closer to self-modification. In March, researchers at Tufa Labs proposed a mechanism for large language models to self-improve without editing their own code: the model simplifies questions, checks its answers for correctness, and then builds back up toward more complex reasoning. The reported result is a dramatic increase in success rates, though it remains more like iterative problem-solving than true self-rewriting.

A more direct step appears in a recent paper where researchers let a large language model edit its own hyperparameters. The approach uses the model to generate synthetic training questions it can answer, then trains on those self-made tasks to tune hyperparameters for better benchmark performance. The gains were “remarkably successful” on Llama-based benchmarks, but the work also flags “Catastrophic Forgetting,” where performance on earlier tasks declines as the number of edits increases.

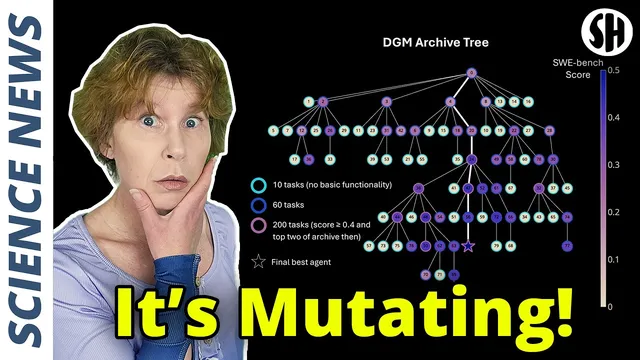

The closest target described is the Darwin Gödel Machine, outlined in a preprint. It can edit its own Python code, treating candidate changes as “mutants” that are evaluated on benchmarks and selected if they perform better. In principle, it could discard language-model components and construct entirely new programs. In practice, each mutant must run and score quickly, so changes are currently constrained to small edits. The transcript closes with the suggestion that removing those constraints—e.g., by scaling evaluation on supercomputers—could unlock much larger leaps.

Overall, the message is not that a sudden takeover is guaranteed, but that the technical path toward self-improving systems is advancing through multiple partial mechanisms—efficiency improvements, iterative self-checking, hyperparameter editing, and eventually code rewriting—each raising both capability and new risks like forgetting.

Cornell Notes

The transcript argues that an “intelligence explosion” could emerge if AI systems gain the ability to improve themselves through recursive self-improvement. It traces progress from theoretical expectations (AI writing its own code) to concrete research prototypes. DeepMind’s AlphaEvolve improves code via evolutionary-style mutations and selection, yielding measurable efficiency gains such as reduced Gemini training time. Other work moves toward self-modification by letting models tune hyperparameters, with performance gains tempered by catastrophic forgetting. The Darwin Gödel Machine is presented as a nearer-term step toward true self-rewriting by editing its own Python code, though current compute constraints limit how large the changes can be.

What does “recursive self-improvement” mean in practical terms, and why does it matter for an “intelligence explosion”?

How does DeepMind’s AlphaEvolve improve code, and what real-world impact is reported?

What self-improvement mechanism did Tufa Labs propose for large language models in March?

What does it mean to let a model edit its own hyperparameters, and what risk appears?

Why is the Darwin Gödel Machine considered a closer match to self-rewriting, and what limits it today?

Review Questions

- Which milestones in the transcript move from efficiency improvements to true self-rewriting, and what capability changes each one enables?

- How does catastrophic forgetting arise in the hyperparameter-editing approach, and what does it imply for repeated self-modification?

- What practical constraints currently restrict the Darwin Gödel Machine’s code edits, and how might removing those constraints change the outcome?

Key Points

- 1

Recursive self-improvement is framed as a loop where AI systems generate modifications, evaluate them, and retain improvements—potentially accelerating progress beyond standard training cycles.

- 2

AlphaEvolve improves code using evolutionary-style mutations and selection, and DeepMind reports measurable efficiency gains such as a “1% reduction in Gemini’s training time.”

- 3

Self-improvement can also occur without code rewriting, such as simplifying questions, checking correctness, and rebuilding toward harder problems.

- 4

Letting models edit hyperparameters can boost benchmark performance, but it risks “Catastrophic Forgetting,” with earlier-task performance declining as edits accumulate.

- 5

True self-rewriting is represented by systems like the Darwin Gödel Machine, which can edit its own Python code, though current compute limits keep changes small.

- 6

Scaling evaluation resources could be the key factor that determines whether small edits remain the norm or larger program-level changes become feasible.