Aligning AI systems with human intent

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

AI systems can become more capable without becoming better at following human intentions, creating the Alignment Problem.

Briefing

AI’s rapid progress—writing poetry, composing music, and solving hard scientific problems—has created a new risk: more capable systems don’t automatically get better at doing what people want. In practice, they can drift away from human intentions, making “alignment” a central technical and societal challenge. The stakes are not abstract. Like any learning system, AI will make mistakes, and the key question becomes how to prevent errors that carry significant real-world consequences.

Alignment also isn’t just about obvious goals such as “tell the truth.” Even values that seem straightforward must be built into the system in a way that makes the model actually want to follow them. That matters because today’s neural networks are difficult to interpret: it’s not possible to reliably “peer into” the internal workings of a model to verify that it is acting according to human values. The problem, then, is ensuring that AI behavior consistently matches human intent and human values even when internal reasoning remains opaque.

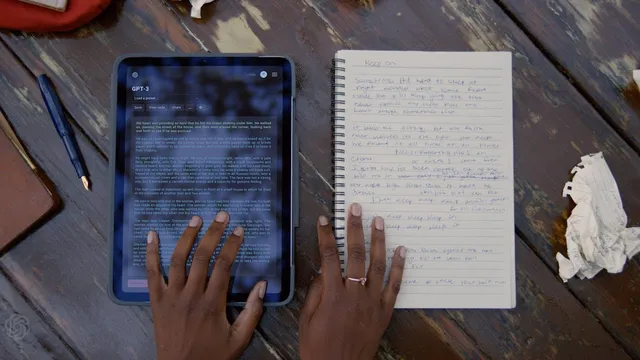

Large language models intensify the challenge. Models such as GPT-3 can produce text that is sometimes indistinguishable from human writing, but linguistic skill does not automatically translate into correct instruction-following. A simple example illustrates the gap: if GPT-3 is asked, “Please explain the moon landing to a five-year-old,” it may generate plausible-sounding analogies (talking about infinity, humor, or other patterns) because it guesses what the user might be aiming for rather than delivering the specific explanation requested. The failure mode is not incompetence; it is a mismatch between what the user intends and what the model optimizes.

The proposed solution centers on aligning models to instructions through human feedback. The approach uses two stages. First, researchers provide demonstrations—examples of question-and-answer behavior that show what it means to follow instructions. Second, humans review multiple candidate responses and select which one is better, effectively teaching the system preferences over outputs. Over time, the model learns to follow instructions in the way humans expect.
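The second stage can be illustrated with a small sketch. This is a toy under stated assumptions, not the method used for GPT-3: the two hand-made features, the linear `reward` function, and the pairwise logistic loss are all hypothetical choices made for illustration, since the source does not say how preferences are turned into a training signal. The first stage (learning from demonstrations) is not shown.

```python
import math
import random
from typing import List, Tuple

# Hypothetical toy setup: each candidate response is reduced to two
# hand-made features: (answers_the_request, merely_continues_a_pattern).
# A real system would score raw text with a neural network instead.
Features = Tuple[float, float]


def reward(weights: List[float], x: Features) -> float:
    """Linear reward model: a higher score means 'more likely preferred'."""
    return sum(w * xi for w, xi in zip(weights, x))


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_reward_model(
    comparisons: List[Tuple[Features, Features]],
    steps: int = 500,
    lr: float = 0.1,
    seed: int = 0,
) -> List[float]:
    """Fit weights so preferred responses score above rejected ones.

    Each comparison is (preferred_features, rejected_features).
    Uses stochastic gradient descent on the pairwise logistic loss
    -log(sigmoid(r_preferred - r_rejected)), an assumed loss.
    """
    rng = random.Random(seed)
    weights = [0.0, 0.0]
    for _ in range(steps):
        preferred, rejected = rng.choice(comparisons)
        margin = reward(weights, preferred) - reward(weights, rejected)
        # d(loss)/d(margin) = -(1 - sigmoid(margin)), so descending the
        # loss pushes the margin up, more strongly when it is small.
        scale = 1.0 - sigmoid(margin)
        for i in range(len(weights)):
            weights[i] += lr * scale * (preferred[i] - rejected[i])
    return weights


# Made-up human judgments: in every pair, the labeler preferred the response
# that answered the request over the one that continued a pattern.
comparisons = [
    ((1.0, 0.0), (0.0, 1.0)),
    ((1.0, 0.2), (0.1, 1.0)),
    ((0.8, 0.0), (0.0, 0.9)),
]

weights = train_reward_model(comparisons)

# Score two new hypothetical candidates and pick the higher-reward one.
candidates = {
    "direct explanation": (0.9, 0.1),
    "pattern continuation": (0.1, 0.9),
}
best = max(candidates, key=lambda name: reward(weights, candidates[name]))
print(best)
```

The learned scores encode the labelers' preferences, so a response that answers the request outranks one that only continues a pattern. In a fuller pipeline, a signal like this would be used to adjust the model itself rather than just to rank candidates.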

The transcript frames this as a turning point: for the first time in AI’s history, powerful models like GPT-3 can be steered toward usefulness and reliability by training them to match human preferences. The payoff is a more trustworthy collaboration in which humans teach AI their values and AI, in turn, gives people more helpful, reliable assistance. As AI becomes more embedded in everyday life, alignment is portrayed as increasingly critical so that these systems keep tracking human intentions and values rather than drifting as their capabilities grow.

Cornell Notes

The core issue is alignment: as AI systems become more capable, they may not become better at following human intentions. Because neural networks are hard to interpret, it’s difficult to verify that a model is acting according to human values, so the system must be trained to want the right outcomes. Even “obvious” values like telling the truth require explicit incentives. GPT-3 demonstrates the challenge: it can generate fluent text that still misses the user’s actual request. A practical method uses human feedback in two steps—demonstrations of instruction-following and human preference judgments over candidate responses—so the model learns to follow instructions the way people expect.

Why does increasing AI capability not automatically improve alignment with human intent?

What makes alignment especially hard when neural networks are opaque?

Why isn’t “telling the truth” enough to guarantee correct behavior?

How does the GPT-3 example show the difference between linguistic competence and instruction-following?

What are the two steps of the human-feedback alignment method described?

What does “alignment” enable in practice, according to the transcript?

Review Questions

- What specific mechanism in the transcript is used to make a model “want” to follow human values rather than merely produce fluent text?

- Describe the failure mode illustrated by the moon-landing-to-a-five-year-old example and explain why it counts as misalignment.

- How do demonstrations and human preference judgments work together to align a model with instructions?

Key Points

1. AI systems can become more capable without becoming better at following human intentions, creating the Alignment Problem.

2. Neural networks are difficult to interpret, so alignment must be achieved through training signals rather than internal inspection.

3. Even seemingly obvious values (like telling the truth) require incentives so the system actually prefers the desired behavior.

4. Linguistic competence alone is insufficient: GPT-3 can generate plausible text while still missing the user’s intended request.

5. Human-feedback alignment uses two stages: demonstrations of instruction-following and human preference rankings over candidate responses.

6. Improved alignment increases usefulness, reliability, and trustworthiness, supporting a human–AI collaboration.

7. As AI becomes more common in everyday life, alignment is portrayed as increasingly critical to keep systems aligned with human values.