Alpha Geometry

Based on West Coast Machine Learning's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

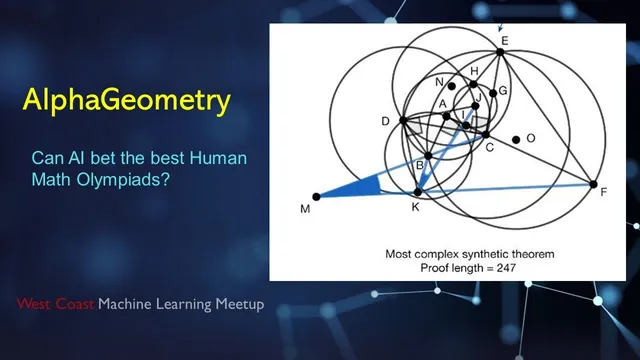

Alpha Geometry solves a subset of plane-geometry proof tasks by alternating between a symbolic theorem prover (closure computation) and a language model that proposes auxiliary constructions.

Briefing

Alpha Geometry is a system that solves a difficult subset of geometry proofs—specifically “plane geometry” problems—without human demonstrations, by combining a symbolic deduction engine with a small GPT-style language model. The key breakthrough is not that the model performs geometric reasoning end-to-end; instead, it learns to propose promising auxiliary constructions (new points, lines, circles) while the symbolic engine deterministically derives all consequences and checks whether the target statement follows. That division of labor matters because geometry proof datasets are scarce, while the symbolic engine can verify correctness instantly once the right construction is proposed.

The symbolic side works like an open-book theorem prover for geometry. It stores a database of geometric rules expressed as equality-style propositions (for example, angle relationships in triangles, parallel-line angle facts, and other standard inference patterns). Given an initial set of premises—such as “AB is parallel to CD,” “ABC is a triangle,” and the goal statement—this engine repeatedly matches rules to the current facts, adds newly implied facts, and computes the closure: the full set of statements derivable from the premises. For many simpler geometry tasks, this closure computation can reach the answer in seconds.

The hard cases are those where the proof requires auxiliary construction: adding specific new objects like perpendicular feet, angle bisectors, midpoints, or circles inside/outside a configuration. A central example discussed is proving equal areas of two triangles that share a base under a parallel-lines condition. The symbolic engine alone can’t “invent” the needed perpendicular constructions; it can only propagate consequences of what’s already present. The combinatorial explosion is the problem: there are many possible points and constructions one could add, and generic heuristics for choosing them don’t scale.

To address data scarcity, the system generates its own training proofs. It first samples random premise sets, then uses the symbolic engine to grow a directed graph of derivable facts. Each node corresponds to a statement that becomes provable from earlier nodes; by tracing backwards from a randomly chosen node, the system extracts a synthetic proof: a minimal set of premises plus the sequence of deduction steps. From an initial pool of hundreds of millions of synthetic proofs, deduplication leaves about 100 million, and a further backward “hardening” step removes premises that the final conclusion doesn’t depend on—forcing the proof to reintroduce those missing objects via auxiliary construction.

The language model is trained on these synthetic proof strings using next-token prediction from scratch (not pretrained weights), with a custom tokenizer tailored to geometry facts and construction commands. During inference, it does not see the symbolic engine’s entire closure. Instead, it alternates with the symbolic engine: given the current premises and goal, the model proposes one auxiliary construction; the symbolic engine then recomputes closure to see whether the goal is reached. Because the model’s suggestions can be wrong, the system uses beam search—keeping hundreds of candidate construction paths in parallel (reported as beam width 512) and limiting how many constructions each path can add (max depth 16). Only the top candidates survive to the next iteration.

On the benchmark of 30 International Mathematical Olympiad-style geometry problems, the system solves 25, beating prior computer baselines (previous bests were around 10/30) and landing near the average performance of top human gold medalists over the same set. Ablations show performance drops when training data is reduced, when fine-tuning on auxiliary-construction-heavy proofs is removed, or when beam width is lowered—suggesting the search strategy and the synthetic proof curriculum are both crucial. The result is a practical, human-readable proof trace: each step corresponds to an explicit construction, followed by symbolic rule applications that justify the derived facts.

Cornell Notes

Alpha Geometry combines a symbolic deduction engine with a GPT-style language model to solve plane-geometry proof problems. The symbolic engine stores geometric rules and, given premises, computes the full deductive closure to verify whether the target statement follows. The language model’s job is narrower: propose one auxiliary construction at a time (new points, perpendiculars, midpoints, circles, etc.) that the symbolic engine can then use to derive consequences. Because real geometry proofs are scarce, training uses synthetic proofs generated by sampling premises and growing proof graphs with the symbolic engine, then “hardening” proofs so they require auxiliary construction. Inference alternates model suggestions with symbolic verification using beam search (beam width 512, max depth 16), yielding 25/30 solutions on the referenced Olympiad geometry benchmark—close to top human averages.

Why can’t the symbolic deduction engine solve the hardest geometry problems by itself?

How does the system generate training data when geometry proof datasets are too small?

What does “hardening” proofs mean, and why does it force auxiliary construction?

What exactly does the language model do during inference, and what does it not do?

How does beam search fit into the proof search process?

Why are the reported results meaningful even if the method uses brute-force search?

Review Questions

- What is the division of labor between the symbolic deduction engine and the language model, and how does that affect what each component is responsible for during inference?

- Describe how synthetic proofs are generated from premise sampling and proof graphs, and explain how “hardening” changes which steps require auxiliary construction.

- Why does beam search help in this setting, and what do beam width and max depth control in the construction sequence exploration?

Key Points

- 1

Alpha Geometry solves a subset of plane-geometry proof tasks by alternating between a symbolic theorem prover (closure computation) and a language model that proposes auxiliary constructions.

- 2

The symbolic deduction engine can only derive consequences of given premises; it cannot invent the new points/circles/perpendiculars that many Olympiad proofs require.

- 3

Training data is synthesized: random premise sets are expanded into proof graphs using the symbolic engine, then backward-traced to extract proof sequences.

- 4

A “hardening” step removes premises not needed for the conclusion, forcing later proofs to reintroduce those objects via auxiliary construction.

- 5

Inference uses beam search (beam width 512, max depth 16) to explore many candidate construction paths in parallel, pruning by the language model’s next-token probabilities.

- 6

The language model is trained on full proof strings but, during inference, it does not receive the symbolic engine’s full closure; it only outputs the next construction suggestion.

- 7

On the referenced 30-problem Olympiad geometry benchmark, the system solves 25, outperforming earlier computer baselines and approaching top human averages.