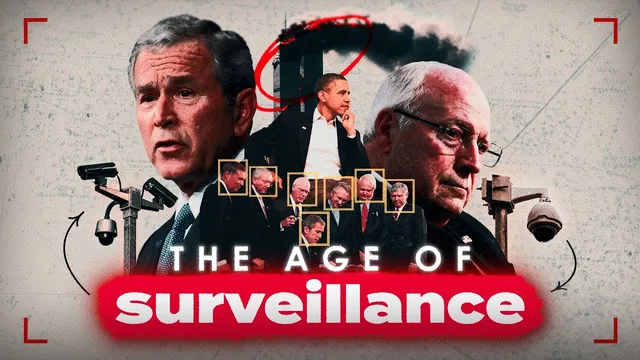

Americans Are Being Watched (and it’s getting worse)

Based on Second Thought's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Surveillance in the U.S. has expanded into a tightly networked system in which police, federal intelligence agencies, and major tech companies can draw from the same data streams, making privacy loss both pervasive and hard to opt out of. The core claim is that this isn’t just “more cameras” or “more tracking,” but a structural shift: data collection has become continuous, automated, and contractually outsourced, so government surveillance can scale without running into the constitutional limits that apply to direct government action.

New York City is used as a concrete snapshot. Estimates cited in the transcript put the city’s camera count around 15,000, with roughly 100 publicly accessible cameras encountered just to reach lower Manhattan. The cameras are described as able to identify people from more than two blocks away, and the argument is that any feed law enforcement can access can also be paired with automated facial recognition. The transcript points to NYPD facial recognition use in 22,000 cases between 2017 and 2021, including efforts to track Black Lives Matter (BLM) protesters in 2020. Beyond cameras, the surveillance “arsenal” includes repurposed body cameras, drones filming protests, automated license plate readers, and even fake cell towers used to collect phone-related data.

The reach extends beyond policing into everyday consumer technology. A French location-finding app was flagged by Yale’s privacy lab for tracking users via inaudible sound signals picked up by phones. Meanwhile, the transcript argues that major platforms, especially Google, retain search histories indefinitely (including searches made in Incognito mode) and that Google tracking infrastructure appears on the vast majority of top websites. It also ties the post-Snowden landscape to upstream sharing: surveillance programs such as PRISM and XKeyscore are described as enabling the NSA to search emails, messages, and browsing history, and to obtain phone call records from carriers like AT&T and Verizon without prior authorization. The transcript further cites the Brennan Center for a figure of 3.4 million warrantless FBI searches of Americans’ phone calls, emails, and text messages in 2021.

The “how it happened” section traces the shift to Silicon Valley’s relationship with intelligence agencies after 9/11. It describes a CIA-backed venture capital effort launched in 1999 (initially framed as a limited experiment) that became permanent and more influential after 9/11. It then links Google’s rise to intelligence partnerships and data-driven surveillance capabilities: Google’s search data collection is portrayed as a turning point, with its founders recognizing that search behavior could power targeted advertising and predictive behavioral profiling. The transcript claims that legal protections for privacy were weakened rapidly after 9/11, especially through the Patriot Act, while regulatory constraints that might have limited data collection were rolled back.

The final thrust is that surveillance is not merely a trade-off for safety. The transcript cites studies suggesting metadata collection has little discernible impact on stopping terrorism, and it argues that the real engine is “surveillance capitalism”: companies profit from prediction models built on personal data, while government and police can benefit from that infrastructure through public-private cooperation. The conclusion is a warning about the threat of future misuse: even if data is used today for advertising or convenience, the same systems can be repurposed quickly for tracking, enforcement, and targeting—leaving people with little practical ability to avoid the watchful infrastructure.

Cornell Notes

Surveillance in the U.S. is portrayed as a coordinated ecosystem linking police, federal intelligence, and tech companies through shared data pipelines. The transcript highlights New York City camera density, facial recognition use, and additional tools like drones, license plate readers, and fake cell towers—then broadens the lens to phone apps and platform tracking that can feed into government surveillance programs. A historical thread connects post-9/11 legal changes (Patriot Act) and intelligence-industry relationships to the growth of large-scale data collection, especially through Google’s search and advertising model. The argument culminates in a safety critique: cited studies suggest metadata collection has limited impact on preventing terrorism, while the incentives for expansion come from profit and institutional dependence. The practical takeaway is that opting out is increasingly difficult, and misuse risk remains even when surveillance is framed as benign.

Why does the transcript treat camera networks and facial recognition as more than a local policing issue?

What role do consumer apps and major platforms play in the surveillance pipeline?

How does the transcript connect post-9/11 legal changes to today’s surveillance scale?

What is the historical “origin story” for the intelligence-tech relationship described here?

Why does the transcript argue surveillance isn’t justified by counterterrorism benefits?

What does the transcript mean by “surveillance capitalism,” and how does it change incentives?

Review Questions

- Which specific surveillance tools mentioned (cameras, facial recognition, drones, license plate readers, fake cell towers) are described as working together, and what does that imply about tracking across time and contexts?

- How does the transcript connect the Patriot Act and national security letters to the ability to collect data without prior judicial approval?

- What evidence is cited to challenge the claim that metadata surveillance meaningfully prevents terrorism, and how is that evidence used to reframe the incentives behind surveillance?

Key Points

1. New York City’s camera density is presented as high enough that people can be identified from multiple blocks away, especially when paired with automated facial recognition.

2. Law enforcement surveillance is described as multi-layered, combining cameras with drones, automated license plate readers, and fake cell towers to collect phone-related data.

3. Consumer apps and major platforms generate persistent location and behavior data (including via non-GPS methods and cross-site tracking) that can feed into government surveillance capabilities.

4. Post-9/11 legal changes, especially the Patriot Act and national security letters, lower barriers to collecting Americans’ communications and related records.

5. The transcript links intelligence-industry partnerships after 9/11 to the growth of large-scale data collection, particularly through search and advertising models.

6. Safety justifications are challenged using cited studies suggesting metadata collection has limited measurable impact on stopping terrorism.

7. The central warning is that even “normalized” data collection can be repurposed quickly, leaving people with little practical ability to avoid surveillance infrastructure.