Are junior devs screwed?

Based on Theo - t3․gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

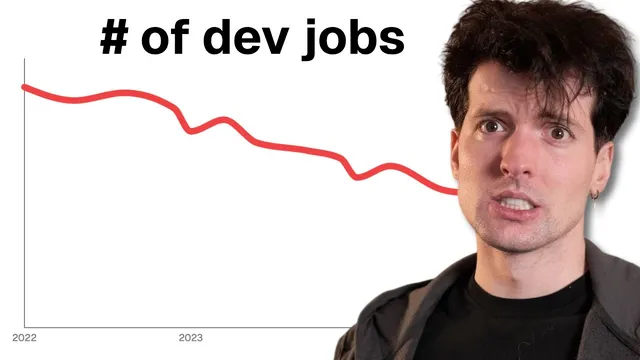

Junior hiring has tightened because fewer engineers are needed per feature and AI-assisted development reduces the number of training-style roles companies used to create.

Briefing

Junior developers aren’t “screwed,” but the path to a first job has become harsher, narrower, and more trust-driven—especially as AI accelerates shipping and reduces the need for large teams. The core shift is leverage: when companies needed many engineers per feature and couldn’t easily hire more, junior candidates had more room to prove themselves. Now, teams can often redeploy existing staff, reorganize instead of hiring, and ship faster with better tooling and AI—so the market has fewer entry points and less tolerance for risk.

A comparison drawn from personal hiring experience makes the change concrete. In the past, shipping a new feature for something like Twitch chat might require four engineers over a year, with two of them hired from outside. Recruiting then meant sorting through many inbound applications, including early-career candidates and interns, while senior candidates were relatively scarce. Teams could “poach” talent from other groups or pull people in through reorgs, and they could still justify bringing in less-proven engineers, because the gap between engineers needed and engineers available created bargaining power. That leverage also enabled aggressive leveling decisions, such as underpaying or delaying promotions to fit the team’s timeline.

Today, that same hiring scenario is less likely to exist. The number of engineers needed per feature has generally fallen, while the number of engineers available has risen and budgets have tightened. For many internal projects, the roles that once required multiple new hires simply don’t exist anymore; AI-assisted development and improved web tooling compress timelines and reduce headcount demand. Even for candidates who have real experience but would be “junior” by title, the slot they would have filled may have vanished. If a team can’t justify hiring, it may reorganize or lay off instead, leaving capable people scrambling for a smaller set of openings.

The second layer of the problem is how juniors get good. Without AI, the learning loop often forced deeper debugging: isolate the issue, search, and then escalate to a manager when stuck—moving up the “layer” of the problem until the real cause becomes visible. A common junior failure mode is staying too low in the stack—fixating on console errors instead of stepping back to find the higher-level logic that made the error possible. The antidote is emotional discipline: accept that feeling “dumb” is part of the job, and treat that discomfort as the signal you’re actually learning.
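The difference between patching at the error site and stepping back a layer can be made concrete with a small sketch. The Python below is illustrative only: the `USERS` data and the `load_user`, `greeting_low_layer`, and `greeting_higher_layer` functions are hypothetical names invented for this example, not something taken from the video.

```python
from typing import Optional

# Hypothetical data, for illustration only.
USERS = {"ada": {"display_name": "Ada"}}


def load_user(username: str) -> Optional[dict]:
    """Return the user record, or None when the user is unknown."""
    return USERS.get(username)


def greeting_low_layer(username: str) -> str:
    """Patch at the crash site: the error is silenced, the cause remains."""
    user = load_user(username)
    try:
        return f"Hello, {user['display_name']}!"
    except TypeError:
        # Subscripting None raises TypeError. Catching it here hides the
        # console error but produces a misleading result.
        return "Hello, !"


def greeting_higher_layer(username: str) -> str:
    """Step back a layer: decide what an unknown user should mean."""
    user = load_user(username)
    if user is None:
        # The real cause: load_user can return None, and the caller
        # never decided how to handle that case.
        return "Hello, guest!"
    return f"Hello, {user['display_name']}!"
```

Both versions make the console error disappear, but only the second answers the question that caused it: what should the program do when a user is unknown?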

Finally, compensation and hiring have become more binary. In the US market framing used here, a candidate below a certain quality bar effectively earns “zero” because companies won’t invest in training when there are many applicants and fewer roles. That bar isn’t just technical ability; it’s trust. Hiring managers can trust people whose work they’ve seen: existing team members, engineers from known companies, interns who proved themselves, or candidates who demonstrate growth through public artifacts. The recommended strategy isn’t spamming GitHub with AI-generated template repos; it’s building in public thoughtfully: writing posts, sharing what you tried and what you learned, and asking clear, low-friction questions in communities where people can recognize genuine effort.

The takeaway is practical: use AI to accelerate understanding, not to avoid the hard parts that build skill and credibility. In a world flooded with AI-generated slop, the differentiator is care—solving real problems yourself, communicating clearly, and showing enough work that others can trust you with the next step.

Cornell Notes

The junior job market has tightened because companies now need fewer engineers per feature, can redeploy existing staff, and can ship faster with better tooling and AI. That reduces leverage and eliminates many of the “training slots” that used to exist for early-career hires. To succeed anyway, juniors must build skills by stepping back to the right layer of a problem and embracing the discomfort of feeling unqualified while learning. Hiring has become trust-based: candidates get considered when others can see their work and growth, not when they rely on AI-generated repos or vague resumes. Clear communication and thoughtful public artifacts—what you tried, what failed, and what you learned—help convert effort into credibility.

Why does the “leverage” juniors once had shrink in today’s market?

What changed about hiring for a feature team like Twitch chat?

What’s the most common debugging mistake juniors make?

How should juniors use AI tools without harming their growth?

What does “trust” mean in hiring, and how can a junior build it?

What makes a DM or outreach message more likely to succeed?

Review Questions

- How do changes in the ratio of engineers needed per feature versus engineers available affect junior hiring opportunities?

- What does it mean to “move up the layer” when debugging, and how can a junior practice that habit?

- What specific behaviors build hiring trust more effectively than AI-generated repos or vague resumes?

Key Points

1. Junior hiring has tightened because fewer engineers are needed per feature and AI-assisted development reduces the number of training-style roles companies used to create.

2. Companies increasingly redeploy or reorganize existing staff rather than take the risk of hiring new juniors, which removes leverage from early-career candidates.

3. Debugging skill depends on stepping back to the correct layer of the problem; fixating on console errors often keeps juniors stuck.

4. Feeling “dumb” while learning is a feature, not a bug; embracing that discomfort is presented as essential to becoming effective.

5. Compensation in the US tech market is framed as effectively zero until a candidate clears a quality bar, making trust and proof of ability central.

6. Trust is built through visible work and growth: thoughtful public writeups, clear communication, and evidence of real problem-solving, not template repos or commit-count flexing.

7. AI tools should accelerate understanding and learning, not replace the hard practice that builds skill and credibility.