Are juniors screwed? (Getting a job in a post-AI world)

Based on Theo - t3․gg's video on YouTube.


Briefing

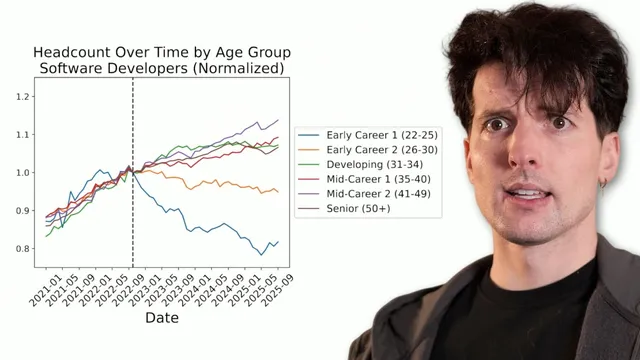

The job market for software engineers is producing contradictory signals—some surveys show juniors struggling while other indicators look less dire—but the practical takeaway is straightforward: hiring and job-search advice built for the pre-AI era no longer fits how work is tested, communicated, and trusted. The biggest mismatch is between what interviews measure and what real jobs now demand, especially as AI tools make “write code fast” less informative and make “work through uncertainty with others” more important.

A central thread is that companies often hire badly, starting with technical interviews. Traditional formats—whiteboard explanations and short coding puzzles—were already a weak proxy for real capability. With AI assistants able to generate plausible code instantly, those same formats become even easier to game. That creates a gap: candidates can “pass” the interview using tools, while the job still requires knowledge to debug, recover, and collaborate when things go wrong. The result is a process that tests the wrong skills under the wrong incentives.

To fix that, the transcript argues for interviews designed around transparency, comfort, and communication. One approach gives candidates choices among interview formats rather than forcing a single “gotcha” puzzle. Examples include a structured Q&A plus a code task with clear expectations, a more real-world spec/API implementation where the candidate’s reasoning matters, or a “realist” format where the candidate works on their own repo while interviewers shadow and ask questions throughout. The goal is to see how someone thinks, explains tradeoffs, and collaborates—because that’s what teams experience every day.

The hiring critique expands beyond interviews into resumes and sourcing. Cold applications are described as largely ineffective in a world where AI-generated “resume slop” floods job boards and non-technical recruiters triage thousands of PDFs. Instead, the transcript emphasizes getting in front of technical people through human signals: engaging with engineers’ posts, attending local meetups, and building real familiarity. It also draws a line on conduct: “stalking” is framed as unacceptable, but basic social competence (being approachable and communicative) has become closer to essential than optional.

For experienced engineers, the transcript highlights a different risk: stagnation. Many mid- and senior developers are said to struggle not only in their current roles but in interviews because they haven’t practiced interviewing, haven’t refined communication, and may be less willing to learn new tools—especially when internal environments restrict AI usage. The advice is to interview more from the “other side,” and to compensate for AI-assisted code generation by improving the ability to justify decisions, document work, and persuade others.

For juniors and early-career engineers, the transcript is blunt about what doesn’t work: random cold applications, chasing prestige credentials, and outsourcing learning to AI to the point that understanding never forms. It recommends using AI as a teaching aid with guardrails (illustrated via an “agent MD” concept that guides an agent to explain, debug by questioning, and provide small examples rather than full solutions). The transcript also argues that the best job-search strategy is to become useful in public: write high-quality GitHub issues with minimal reproductions, comment with actionable debugging details, answer questions in Discord communities, and leave “unblockers” for others. A detailed anecdote about Roy, who became a highly trusted community helper and was later hired, serves as the model.
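The guardrails idea can be made concrete with a small sketch. The Python below renders a short instruction file from the three behaviors named above (explain, debug by questioning, small examples). The heading, the rule wording, and the names `GUARDRAILS` and `render_guardrails` are assumptions for illustration; the actual file shown in the video is not reproduced here.

```python
# Hypothetical sketch: guardrail instructions for an AI agent used as a tutor.
# Only the three behaviors come from the summary; the wording is assumed.

GUARDRAILS = [
    ("Explain", "Explain the concept I am stuck on before touching my code."),
    (
        "Debug by questioning",
        "When I report a bug, ask questions that lead me to the cause "
        "instead of fixing it for me.",
    ),
    ("Small examples", "Show small, isolated examples, not the full solution."),
]


def render_guardrails(rules: list[tuple[str, str]]) -> str:
    """Render the rules as a short Markdown instruction file."""
    lines = ["# Learning mode", ""]
    for title, instruction in rules:
        lines.append(f"- **{title}:** {instruction}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Print the text so it can be pasted into whatever instruction file
    # your agent tooling reads (the file name depends on the tool).
    print(render_guardrails(GUARDRAILS))
```

Keeping the rules as data makes it easy to loosen a guardrail later, for example once a concept has been understood and a full solution is acceptable.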

Underneath all of it is a mindset claim: juniors can win because they still have energy, curiosity, and lower “unlearning costs” than legacy developers. The field is changing quickly, and the transcript frames excitement and collaboration as practical advantages. The job hunt becomes less about proving you can code and more about building trust through communication, usefulness, and participation in the same trenches where problems get solved.

Cornell Notes

Software hiring is misaligned with the post-AI reality: interviews often reward code generation speed and whiteboard-style explanations, even though AI can produce that instantly. The transcript argues that better hiring should prioritize transparency, candidate comfort, and—most importantly—communication and debugging judgment when things go wrong. For experienced engineers, job-search difficulty often comes from weak interview practice and underdeveloped communication, plus slower adaptation to new tools (especially if AI use is restricted at work). For juniors, cold applications and resume “slop” are largely ineffective; trust must be built through human signals, collaboration, and being useful in public—especially by writing high-quality GitHub issues with minimal reproductions and helpful comments. The overall message: build trust by showing how you work with others and how you help solve real problems, not just by generating code.

Why do traditional technical interviews become less reliable in a post-AI world?

What interview formats are proposed to better measure real job performance?

What’s the transcript’s view on cold applications and resume-based hiring?

How should experienced engineers adjust their job-search strategy?

Which mistakes does the transcript warn early-career developers against?

What does “being useful” look like in practice?
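One concrete form of being useful is an issue that ships with a minimal reproduction. The sketch below shows the shape such a reproduction might take: the smallest input that triggers the behavior, with expected and actual output side by side. `slugify` is a hypothetical stand-in for whatever library function is being reported, not a real API, and the bug is invented for illustration.

```python
"""Minimal reproduction for a hypothetical issue report."""


def slugify(title: str) -> str:
    # Hypothetical stand-in for the library function under report.
    # Illustrative bug: each space becomes its own hyphen, so consecutive
    # spaces yield repeated hyphens.
    return title.strip().lower().replace(" ", "-")


def reproduce() -> dict:
    # Smallest input that triggers the behavior: one double space.
    given = "Hello  World"
    expected = "hello-world"
    actual = slugify(given)
    return {
        "given": given,
        "expected": expected,
        "actual": actual,
        "ok": actual == expected,
    }


if __name__ == "__main__":
    report = reproduce()
    # Expected vs. actual on adjacent lines is what a maintainer reads first.
    print(f"input:    {report['given']!r}")
    print(f"expected: {report['expected']!r}")
    print(f"actual:   {report['actual']!r}")
    print("status:   " + ("ok" if report["ok"] else "MISMATCH"))
```

In a real report, the stand-in function would be replaced by an import of the affected library, along with the version numbers and environment details needed to run the script unchanged.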

Review Questions

- Which parts of technical interviews are most likely to be invalidated by AI, and what should replace them to measure real capability?

- How does the transcript define “trust” in hiring, and what actions build it for early-career engineers?

- What does the transcript recommend doing when you get stuck learning a concept—how should AI be used differently than “outsourcing” understanding?

Key Points

1. AI tools make code-generation speed a weaker hiring signal, so interviews should emphasize debugging judgment and communication under uncertainty.

2. Technical interview processes should be transparent and candidate-centered (clear expectations, comfort, and multiple ways to demonstrate capability).

3. Cold applications are largely ineffective when job boards are flooded with AI-generated resumes; human visibility with technical people matters more.

4. Experienced engineers often lose ground through poor interview practice and weaker communication; improving explanations and documentation becomes a competitive advantage.

5. Early-career success depends on building trust through usefulness: high-quality GitHub issues (with minimal reproductions), helpful comments, and active participation in community Q&A.

6. AI should be used as a teaching aid with guardrails (explaining concepts, guiding debugging, and providing small examples) rather than as a full-solution generator.

7. Juniors can leverage lower unlearning costs and higher energy; excitement, collaboration, and being in the trenches increase the odds of landing opportunities.