Augmented Language Models (LLM Bootcamp)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Treat prompt-context construction as an information-retrieval problem rather than a manual selection task, especially when user-specific data is involved.

Briefing

Augmented language models hinge on a simple constraint: modern LLMs are strong at language and instruction-following, but they lack up-to-date world knowledge, access to a company’s private data, and reliable performance on harder tasks like math-heavy reasoning. The practical fix isn’t retraining the model for every new need—it’s giving the model the right external “tools” and “data” at inference time. The core idea is to treat an LLM less like a self-contained oracle and more like a reasoning engine that needs a curated context to answer real questions.

The starting point is retrieval augmentation, which reframes “stuffing context into the prompt” as an information-retrieval problem. Instead of manually deciding which user or document snippets to include, systems search an external corpus for the most relevant items and then inject those results into the prompt. This matters immediately in multi-user settings: rules like “include the most recent users” or “include users mentioned in the query” break down when the relationship between a question and the right data is too complex to encode with simple logic. Retrieval turns that selection step into something searchable and measurable.

Traditional search relies on inverted indexes and word-level heuristics such as Boolean filtering and ranking methods like BM25. Those approaches work well for exact or unambiguous keyword matches, but they miss semantic meaning—so ambiguous queries can return documents about the wrong sense of a word. Embeddings shift the approach by representing text (and other modalities) as dense vectors so semantically similar items land near each other in vector space. The retrieval pipeline then becomes: embed the query, find nearest neighbors among embedded documents, and pass the top matches into the LLM prompt.

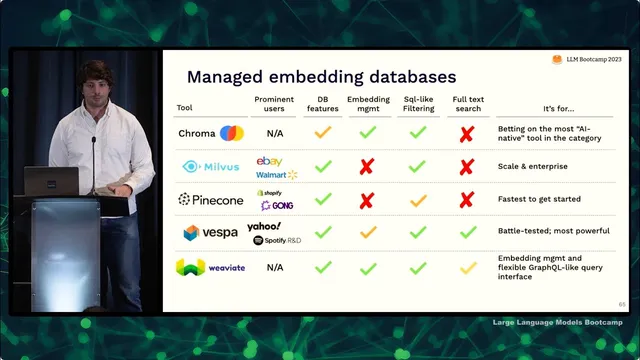

The lecture emphasizes that “embedding databases” are often unnecessary at small scale. For fewer than roughly 100,000 vectors, simple nearest-neighbor search with NumPy can be enough. As scale grows, approximate nearest neighbor methods speed up lookup by using specialized index structures (e.g., HNSW-based approaches) that trade a bit of accuracy for speed. But production systems need more than a fast index: they must handle metadata, filtering, embedding management, and reliable ingestion/update workflows. The practical recommendation is to start with whatever database already powers the application—many support vector search via extensions or built-in features—then graduate to dedicated vector databases when you need richer capabilities.

Beyond nearest-neighbor retrieval, the biggest limitation is context window size. If only a few documents fit, the system can fail when the answer isn’t retrieved into those top slots. One workaround is “chains”: use an LLM to re-rank or select among a larger candidate set before the final answer prompt. Chains also generalize to other patterns like hypothetical document embeddings (invent a document that might contain the answer), map-reduce style summarization across large corpora, and multi-step workflows orchestrated with frameworks such as LangChain.

Finally, tools broaden augmentation beyond search. Rather than only retrieving documents, LLMs can call external systems—calculators, SQL databases, APIs—either through developer-defined chains or through plugin-style interfaces where the model decides when tool use is helpful. The takeaway is a hierarchy of augmentation strategies: start with retrieval and heuristics, move to chains for more complex context-building and token-limit workarounds, and use tools/plugins when the model needs to interact with external capabilities or live data.

Cornell Notes

LLMs often need external help because they don’t have private or up-to-date knowledge and can’t reliably access a user’s data on their own. Retrieval augmentation fixes this by treating “prompt context selection” as an information-retrieval problem: embed the query and retrieve the most similar embedded documents, then insert those snippets into the prompt. Embeddings enable semantic search, while traditional inverted-index search relies on word-level correlations (e.g., BM25). At small scale, simple nearest-neighbor search (even with NumPy) can work; at larger scale, approximate nearest neighbor indices and production-grade retrieval systems become important. When context is too small, “chains” add extra LLM steps (like re-ranking) to build better context, and “tools/plugins” let models call external APIs such as SQL or calculators.

Why does “put more data in the context window” stop working as systems grow?

How does retrieval augmentation differ from traditional keyword search?

What makes an embedding “good,” and how is it evaluated?

When is a dedicated vector database necessary versus simple nearest-neighbor search?

What problem do “chains” solve that pure retrieval can’t?

How do tools/plugins extend augmentation beyond retrieval?

Review Questions

- What trade-offs arise when you retrieve more documents than can fit into the context window, and then use an LLM to select among them?

- Describe how embeddings change the retrieval problem compared with inverted-index search, and give one failure mode each approach can have.

- Why might production retrieval require more than just an approximate nearest-neighbor index? Name at least two operational needs mentioned.

Key Points

- 1

Treat prompt-context construction as an information-retrieval problem rather than a manual selection task, especially when user-specific data is involved.

- 2

Embedding-based retrieval enables semantic matching by mapping text (or other modalities) into dense vectors where similar meaning is geometrically close.

- 3

For small corpora (roughly under 100,000 vectors), simple nearest-neighbor search with tools like NumPy can be sufficient; dedicated vector databases become more important at scale.

- 4

Production retrieval systems need more than fast vector lookup: they must support metadata, filtering, ingestion/update reliability, and embedding management/versioning.

- 5

When context windows are too small, “chains” add extra LLM steps (e.g., re-ranking) to ensure the final prompt contains the most relevant evidence.

- 6

“Tools” and “plugins” expand augmentation beyond search by letting LLMs call external capabilities like SQL, calculators, and APIs, either via developer-orchestrated chains or model-chosen plugin calls.