Backpropagation, intuitively | Deep Learning Chapter 3

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

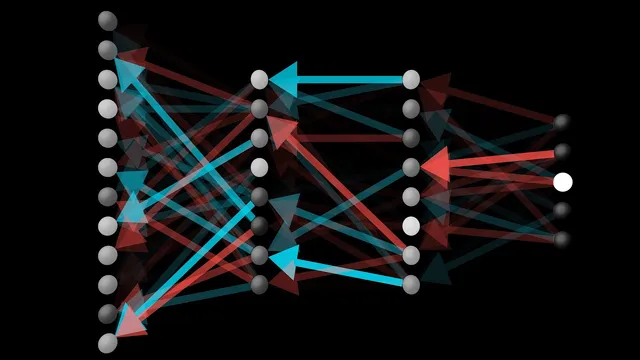

Backpropagation updates every weight and bias by following how the cost function changes with respect to each parameter.

Briefing

Backpropagation is the mechanism that turns a network’s prediction error into specific, proportionate changes to every weight and bias, so that the cost function drops efficiently instead of wandering randomly. The core idea is to treat the gradient of the cost as a sensitivity map: each component of the gradient tells how strongly the cost responds when one particular weight or bias changes. In the earlier framing, that gradient lives in a space with one dimension per parameter, but the intuition is simple: the cost is far more sensitive to some parameters than to others, so those parameters should receive proportionally larger adjustments.
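The sensitivity-map reading of the gradient can be checked numerically on a toy problem. The sketch below is only an illustration, not the network from the video: it assumes a hypothetical one-neuron linear model with two weights and a bias, a squared-error cost on a single made-up example, and a central-difference estimate of each gradient component. The names `cost` and `numerical_gradient` are invented for this example.

```python
def cost(params):
    """Toy cost: squared error of a one-neuron linear model on one example."""
    w1, w2, b = params
    x1, x2, target = 2.0, 0.1, 1.0  # hypothetical input and label
    prediction = w1 * x1 + w2 * x2 + b
    return (prediction - target) ** 2


def numerical_gradient(f, params, eps=1e-6):
    """Estimate how strongly f responds to a small nudge of each parameter."""
    grad = []
    for i in range(len(params)):
        up = list(params)
        down = list(params)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


params = [0.5, 0.5, 0.0]  # w1, w2, b
grad = numerical_gradient(cost, params)
print([round(g, 4) for g in grad])  # approximately [0.2, 0.01, 0.1]
```

In this toy setup the cost is about twenty times more sensitive to `w1` than to `w2`, simply because `w1` multiplies a much larger input, so a gradient step changes `w1` far more than `w2`.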

Using a single training example (a handwritten “2”) makes the logic concrete. In an untrained network the output activations are essentially random. Activations cannot be set directly; only weights and biases can be adjusted, so the goal is to change those parameters until the output neuron for “2” rises while the other output neurons fall. For the output neuron corresponding to the digit “2,” there are three levers for increasing its activation: changing its bias, changing the weights feeding into it, or changing the activations coming from the previous layer. Weight changes are most effective on connections from strongly active neurons in the previous layer: each weight is multiplied by its input activation, so a weight attached to a large activation moves the output more. This resembles the Hebbian intuition (“neurons that fire together wire together”): the connections strengthened most are those between neurons that are already active and the neuron that should become more active.
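The three levers can be written out for one output neuron. The following is a minimal sketch under stated assumptions: a sigmoid activation, a squared-error cost `(a - target)**2` for this single neuron, and three made-up previous-layer activations. The function name `neuron_gradients` is hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def neuron_gradients(prev_activations, weights, bias, target):
    """Gradients of (a - target)^2 for one sigmoid neuron, one per lever."""
    z = sum(w * a for w, a in zip(weights, prev_activations)) + bias
    a = sigmoid(z)
    # Factor shared by all three levers: dC/da * da/dz.
    delta = 2.0 * (a - target) * a * (1.0 - a)
    grad_w = [delta * a_prev for a_prev in prev_activations]  # scales with input
    grad_b = delta                                            # bias lever
    grad_prev = [delta * w for w in weights]                  # scales with weight
    return grad_w, grad_b, grad_prev


prev = [0.9, 0.1, 0.0]     # strongly active, weakly active, silent
weights = [0.2, 0.2, 0.2]
grad_w, grad_b, grad_prev = neuron_gradients(prev, weights, bias=0.0, target=1.0)
print(grad_w[0] / grad_w[1])  # about 9: the weight from the active neuron matters most
```

The weight gradient for the silent input is zero, so changing that weight would not help on this example, while the weight attached to the 0.9 activation gets roughly nine times the adjustment of the one attached to 0.1.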

The process doesn’t stop at one neuron. Every output neuron has its own desired direction of change (increase for the correct digit, decrease for the others). Backpropagation adds up these competing wishes, each weighted by the connection it travels through, to determine what the previous layer should do. That sum yields a set of desired adjustments for the activations in the layer before the output, which then translates into concrete updates for the weights and biases that produced those activations. The “back” in backpropagation comes from repeatedly running this reasoning backward through the network: each layer’s required changes are derived from how the error in later layers depends on earlier activations.
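The backward step can be sketched for a tiny network with one hidden layer. This is an illustration under assumptions, not the network from the video: sigmoid activations, a squared-error cost summed over the outputs, and arbitrary made-up weights. The helper names `forward`, `cost`, and `backprop` are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, W1, b1, W2, b2):
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    output = [sigmoid(sum(w * h for w, h in zip(row, hidden)) + b)
              for row, b in zip(W2, b2)]
    return hidden, output


def cost(x, target, W1, b1, W2, b2):
    _, output = forward(x, W1, b1, W2, b2)
    return sum((o - t) ** 2 for o, t in zip(output, target))


def backprop(x, target, W1, b1, W2, b2):
    hidden, output = forward(x, W1, b1, W2, b2)
    # Each output neuron's own wish: how its weighted input should change.
    delta_out = [2.0 * (o - t) * o * (1.0 - o) for o, t in zip(output, target)]
    grad_W2 = [[d * h for h in hidden] for d in delta_out]
    # Aggregate the wishes of all output neurons for each hidden activation,
    # weighting each wish by the connection it travels back through.
    grad_hidden = [sum(delta_out[k] * W2[k][j] for k in range(len(delta_out)))
                   for j in range(len(hidden))]
    # Repeat the same reasoning one layer further back.
    delta_hidden = [g * h * (1.0 - h) for g, h in zip(grad_hidden, hidden)]
    grad_W1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_W1, list(delta_hidden), grad_W2, list(delta_out)


x, target = [1.0, 0.5], [1.0, 0.0]
W1, b1 = [[0.1, -0.2], [0.4, 0.3]], [0.0, 0.1]
W2, b2 = [[0.3, -0.1], [-0.2, 0.5]], [0.05, -0.05]
grad_W1, grad_b1, grad_W2, grad_b2 = backprop(x, target, W1, b1, W2, b2)
```

The line computing `grad_hidden` is where the competing wishes are added together; everything after it is the same single-neuron logic applied to the earlier layer.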

In principle, the cost gradient for each step would be computed on every training example and then averaged. In practice, that’s too slow. The standard workaround is stochastic gradient descent: shuffle the data, split it into small mini-batches (often around 100 examples), compute a gradient-based update from one mini-batch at a time, and repeat. Each mini-batch gradient only approximates the full-dataset gradient, but it is far cheaper to compute, and over many steps the errors tend to average out, so training still improves steadily and runs dramatically faster.
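The shuffle, split, and update loop can be sketched on a problem small enough to run instantly. The example below is an assumption-laden stand-in for a real network: it fits a hypothetical line `y = 3x + 1` with a two-parameter model and a mean squared-error cost, using synthetic noise-free data. The function name `sgd_linear_fit` and all hyperparameter values are chosen only for illustration.

```python
import random


def sgd_linear_fit(data, batch_size=100, learning_rate=0.3, epochs=50, seed=0):
    """Fit y = w*x + b by mini-batch stochastic gradient descent."""
    rng = random.Random(seed)
    data = list(data)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # new random order every pass over the data
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Average the per-example gradients over this mini-batch only.
            grad_w = sum(2.0 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2.0 * (w * x + b - y) for x, y in batch) / len(batch)
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


rng = random.Random(42)
xs = [rng.random() for _ in range(1000)]
data = [(x, 3.0 * x + 1.0) for x in xs]  # synthetic, noise-free examples
w, b = sgd_linear_fit(data)
print(round(w, 3), round(b, 3))
```

Each update here looks at only 100 of the 1,000 examples, yet the parameters still settle close to the values used to generate the data. For a real network the per-example gradients would come from backpropagation instead of the two closed-form expressions.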

Finally, the method’s success depends on having lots of labeled data. MNIST—handwritten digits with many pre-labeled examples—serves as a classic benchmark for demonstrating how backpropagation learns from data rather than from hand-crafted rules.

Cornell Notes

Backpropagation converts prediction mistakes into targeted updates for every weight and bias by following how the cost function changes with respect to those parameters. The gradient acts like a sensitivity vector: some parameters cause much larger cost changes than others, so updates must be scaled accordingly. For a single example (like an image of the digit “2”), the network aims to raise the “2” output activation and suppress the others; the required changes are propagated backward to determine how earlier layers’ activations and connections should shift. Because using all training examples for every update is slow, training typically uses stochastic gradient descent with mini-batches, averaging approximate gradients over many steps. Large labeled datasets like MNIST make this learning approach practical.

Why treat the gradient as a sensitivity vector rather than just a direction?

For a training image of the digit “2,” what does the network try to change first?

How do weights feeding a neuron determine the impact of changing them?

What does “backward” mean operationally in backpropagation?

Why use mini-batches instead of computing gradients on the full dataset each step?

What role does MNIST play in understanding backpropagation?

Review Questions

- In what sense does the gradient provide both direction and magnitude for parameter updates?

- How does the desired change in output activations for one training example translate into updates for earlier layers?

- Why does stochastic gradient descent with mini-batches still converge toward a low-cost solution despite using approximate gradients each step?

Key Points

1. Backpropagation updates every weight and bias by following how the cost function changes with respect to each parameter.

2. The gradient’s components act as sensitivity scores, indicating which parameters most strongly affect the cost.

3. For a labeled example, the network targets higher activation for the correct output neuron and lower activation for the others.

4. Weight updates are more effective when they connect to strongly active neurons in the preceding layer because those weights multiply larger inputs.

5. Backpropagation works backward by aggregating desired effects from all output neurons to determine needed changes in earlier-layer activations.

6. Exact full-dataset gradient computation per step is too slow, so training uses stochastic gradient descent with mini-batches and averages approximate gradients over time.

7. Large labeled datasets such as MNIST are crucial for making backpropagation-based learning practical.