Bayes theorem, the geometry of changing beliefs

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Bayes’ theorem updates beliefs by combining base-rate priors with evidence likelihoods, then normalizing by the total probability of the evidence.

Briefing

Bayes’ theorem is presented as a disciplined way to update beliefs when new evidence arrives—without letting that evidence “decide” everything from scratch. The core lesson is that evidence restricts the space of possibilities, and the updated probability depends on both the starting point (prior beliefs) and how diagnostic the evidence is (likelihoods). That combination explains why people can make confident judgments that clash with probability: they often ignore the base rate, the underlying ratio of how common each hypothesis is.

The explanation begins with the classic “Steve” scenario used by Daniel Kahneman and Amos Tversky. Steve is described as shy, tidy, and detail-oriented. Asked whether Steve is more likely a librarian or a farmer, most people choose “librarian” because the description matches a stereotype. The probabilistic critique is not about stereotypes being “right” or “wrong,” but about failing to incorporate the base rate—Kahneman and Tversky cite a U.S. ratio of roughly 20 to 1 for farmers versus librarians. When that ratio is included, even a description that seems four times more likely for librarians can still yield a librarian probability under 50% because there are so many more farmers.

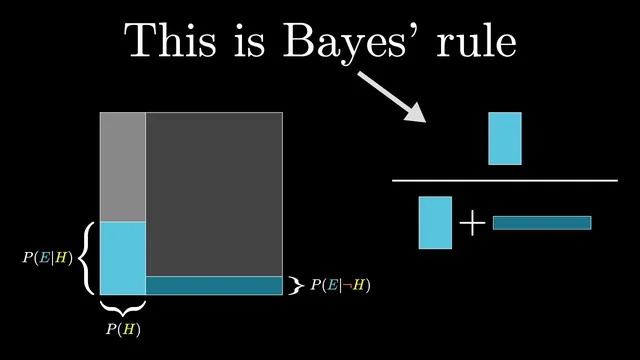

From there, the transcript generalizes the reasoning into Bayes’ theorem. A hypothesis H (e.g., “Steve is a librarian”) is assigned a prior probability P(H) based on base rates. New evidence E (the description) then has a likelihood P(E|H): how often that evidence appears if the hypothesis is true. The complementary likelihood P(E|¬H) captures how often the same evidence would appear if the hypothesis is false. Bayes’ theorem combines these pieces into the posterior probability P(H|E)—the belief after seeing the evidence—by weighting the hypothesis by its prior and likelihood, then normalizing by the total probability of the evidence.

To make the computation intuitive, the transcript discourages memorizing the formula and instead encourages a geometric “area” diagram. The entire space of possibilities is treated like a 1×1 square. The hypothesis occupies a vertical slice with width P(H). Evidence restricts the square into a “wonky” subset, and the updated belief becomes the fraction of the restricted region that lies inside the hypothesis slice. When likelihoods for H and ¬H are similar, the restriction doesn’t change the proportion much; when they differ sharply, the posterior shifts substantially.

The discussion broadens beyond Bayes by revisiting another Kahneman–Tversky finding about probability judgment errors using the Linda problem. People often overestimate the likelihood of a conjunction (“bank teller and feminist movement active”) when asked in percentages, but the error disappears when the question is reframed as counts out of 100. The transcript links this to intuition: representative samples and geometric proportions help people reason about probability as proportions rather than vague uncertainty.

Finally, it returns to “Steve” and notes that debates about the experiment often hinge on context—what population the base rate should come from, and what likelihoods should be assumed. Even so, the takeaway remains consistent: evidence should update beliefs by combining prior information with how diagnostic the evidence is, rather than replacing prior beliefs with the evidence alone.

Cornell Notes

Bayes’ theorem is framed as a method for updating beliefs when new evidence arrives. The posterior probability P(H|E) depends on three ingredients: the prior P(H) (base rates), the likelihood P(E|H) (how well the evidence matches the hypothesis), and the complementary likelihood P(E|¬H). A key intuition is that evidence doesn’t determine beliefs in a vacuum; it restricts the space of possibilities, and the updated belief is the proportion of that restricted space that supports the hypothesis. The transcript also uses a geometric 1×1 square diagram—probabilities become areas—to make the update process easier to visualize and apply. The same base-rate logic is contrasted with common human errors from Kahneman and Tversky’s “Steve” and “Linda” experiments.

Why do people often choose “librarian” in the Steve scenario even when base rates favor farmers?

What do “prior,” “likelihood,” and “posterior” mean in Bayes’ theorem as used here?

How does the transcript’s representative-sample calculation produce the posterior 4/24 = 16.7%?

What is the geometric “area” diagram meant to clarify?

Why does reframing the Linda problem from percentages to counts reduce the error?

What kinds of disagreements about the Steve example change the Bayes calculation?

Review Questions

- In the Steve example, which term in Bayes’ theorem captures the base-rate imbalance between farmers and librarians?

- How does the area diagram translate “posterior probability” into a geometric proportion?

- Give an example of how changing likelihoods versus changing priors would affect the updated belief P(H|E).

Key Points

- 1

Bayes’ theorem updates beliefs by combining base-rate priors with evidence likelihoods, then normalizing by the total probability of the evidence.

- 2

Ignoring base rates can make a highly “stereotype-matching” description lead to a surprisingly low posterior probability.

- 3

The posterior P(H|E) is the probability of the hypothesis after evidence, not the probability implied by the evidence alone.

- 4

Likelihoods P(E|H) and P(E|¬H) determine how strongly the evidence favors the hypothesis over its alternative.

- 5

A 1×1 area diagram can represent probability as geometric proportions, making the update process easier to sketch and apply.

- 6

Representative-sample reasoning (counts out of a fixed total) can reduce common probability errors that appear when people rely on percentages alone.

- 7

Context choices—what population sets the prior and what assumptions set the likelihoods—can change the numerical outcome even when the Bayes logic stays the same.