Building an LLM fine-tuning Dataset

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Fine-tuning an LLM on Reddit comments is less about model training and more about building a usable dataset—especially when the goal is multi-turn, multi-speaker conversations. The workflow centers on extracting large volumes of Reddit comment data (not posts), converting it into conversation chains, and then formatting those chains into training samples that a causal language model can learn from. The payoff is a dataset tailored to realistic social dynamics: on Reddit, responses often involve more than two participants, and the “bot” reply needs to be grounded in a history of prior comments.

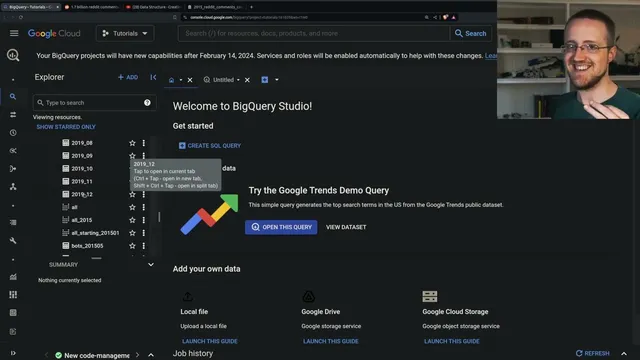

The process starts with sourcing comment archives. A long-running Reddit comment dataset exists via torrents/archives and, crucially for scale and convenience, through a maintained BigQuery dataset containing Reddit comments from roughly 2005 through the end of 2019. That BigQuery resource is large enough to train or fine-tune models at meaningful sizes (the transcript mentions a 7B-class model as a plausible target) and to filter behavior by subreddit. The practical bottleneck is not the amount of data, but exporting it in manageable chunks, downloading it reliably, and reshaping it into something trainable.

To handle export, the workflow uses BigQuery exports to Google Cloud Storage, typically organized by year and month. The transcript emphasizes that CSV exports become unwieldy for comment bodies, so JSON is preferred, even though it creates many small files. Downloading and storage become operational problems: compression (gzip) can reduce transfer size but adds decompression overhead, and unstable internet can force overnight downloads. Because decompression and parsing are expensive at scale, the workflow pre-decompresses files locally (or on a NAS) to avoid repeating CPU work during later iterations.
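The pre-decompression step can be sketched with the Python standard library alone. This is a minimal illustration rather than the workflow's actual script: the function name `predecompress`, the `*.gz` naming pattern, and the directory layout are assumptions made for the example.

```python
import gzip
import shutil
from pathlib import Path


def predecompress(src_dir: Path, dst_dir: Path) -> list[Path]:
    """Decompress every *.gz file in src_dir into dst_dir, skipping finished files."""
    dst_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for gz_path in sorted(src_dir.glob("*.gz")):
        # Path.stem drops only the last suffix: "part-000.json.gz" -> "part-000.json"
        out_path = dst_dir / gz_path.stem
        if out_path.exists():
            continue  # already decompressed on an earlier run
        tmp_path = out_path.with_name(out_path.name + ".tmp")
        with gzip.open(gz_path, "rb") as src, open(tmp_path, "wb") as dst:
            shutil.copyfileobj(src, dst)  # streams in chunks, so memory use stays flat
        tmp_path.replace(out_path)  # rename only after the whole file is written
        written.append(out_path)
    return written
```

Writing to a temporary name and renaming at the end means an interrupted run never leaves a half-written file that a later run would mistake for a finished one, which matters when downloads and decompression run unattended overnight.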

Once the raw JSON is decompressed, the next step is data wrangling: selecting only the fields needed for conversation reconstruction (comment body, author, timestamps, IDs, parent IDs, and score). The transcript notes that many comments won’t participate in reply chains, and that top-level posts (which may not have a parent) can be missing from naive “parent-based” reconstruction—so the dataset may need additional logic to anchor replies to titles. A key design decision is how to build training samples: instead of simple instruction-response pairs, the dataset is assembled as conversation chains by walking parent IDs backward until no parent is found, then packaging the resulting history.
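A rough sketch of the field selection and the backward walk over parent IDs follows. It assumes newline-delimited JSON rows with the field names `id`, `parent_id`, `author`, `body`, `created_utc`, and `score`, and it assumes parent IDs carry Reddit's type prefixes (`t1_` for a comment, `t3_` for the submission). The helper names `load_comments` and `build_chain` and the `max_depth` guard are hypothetical, not taken from the video.

```python
import json
from typing import Iterable

# Assumed field names; adjust to match the actual export schema.
FIELDS = ("id", "parent_id", "author", "body", "created_utc", "score")


def load_comments(lines: Iterable[str]) -> dict[str, dict]:
    """Parse newline-delimited JSON and index the kept fields by comment ID."""
    comments = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        row = json.loads(line)
        comments[row["id"]] = {key: row.get(key) for key in FIELDS}
    return comments


def build_chain(comment_id: str, comments: dict[str, dict], max_depth: int = 50) -> list[dict]:
    """Walk parent IDs backward from a reply and return the chain oldest-first."""
    chain = []
    current = comments.get(comment_id)
    while current is not None and len(chain) < max_depth:
        chain.append(current)
        parent = current["parent_id"] or ""
        # "t1_" marks a parent comment; anything else (e.g. "t3_") is the submission.
        if not parent.startswith("t1_"):
            break  # reached a top-level comment
        current = comments.get(parent[3:])  # None when the parent is not in the export
    chain.reverse()
    return chain
```

The walk stops at a top-level comment because its parent is the submission, which is where extra logic for attaching a post title would have to go. A chain of length one has no history and would normally be dropped before sample building.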

For WallStreetBets, the dataset is filtered using minimum reply length and minimum upvotes (score), producing multiple dataset variants (e.g., min score 3/5/10 and min length 2/5). The transcript also explores multi-speaker formatting by assigning speaker IDs to each author and using a dedicated bot name (“WallStreetBot”) for the target reply. The resulting samples can be used either as full prompt+response training text or as structured instruction formats, depending on the fine-tuning approach.
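The filtering and speaker labelling described above might look roughly like the sketch below. Several details are assumptions: reply length is measured in whitespace-separated words (the source does not state the unit), the `### Speaker_N:` line format is invented for illustration, and the function names are hypothetical. Only the bot name "WallStreetBot" and the idea of per-author speaker IDs come from the source.

```python
def passes_filters(reply: dict, min_score: int, min_words: int) -> bool:
    """Keep a target reply only if it clears both thresholds (length in words: an assumption)."""
    return reply["score"] >= min_score and len(reply["body"].split()) >= min_words


def format_sample(chain: list[dict], bot_name: str = "WallStreetBot") -> str:
    """Render a chain as text; the last comment becomes the bot's target reply."""
    *history, target = chain
    speaker_ids: dict[str, int] = {}
    lines = []
    for comment in history:
        # Number authors by first appearance so a returning speaker keeps one label.
        idx = speaker_ids.setdefault(comment["author"], len(speaker_ids))
        lines.append(f"### Speaker_{idx}: {comment['body']}")
    lines.append(f"### {bot_name}: {target['body']}")
    return "\n".join(lines)


def build_samples(chains: list[list[dict]], min_score: int = 3, min_words: int = 2) -> list[str]:
    """Format every chain that has some history and whose final reply passes the filters."""
    return [
        format_sample(chain)
        for chain in chains
        if len(chain) >= 2 and passes_filters(chain[-1], min_score, min_words)
    ]
```

Calling `build_samples` once per threshold pair (for example scores 3, 5, and 10 crossed with lengths 2 and 5) would yield the kind of dataset variants mentioned above. Note that the filters apply only to the target reply here; whether the history comments should also be filtered is a separate design choice.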

On the training side, the workflow experiments with model choices and ultimately uses parameter-efficient fine-tuning (LoRA/QLoRA-style), loading the trained adapters through AutoPEFT (the PEFT library's `AutoPeftModelForCausalLM`). That lets adapter checkpoints be saved and tested without repeatedly merging or dequantizing full models, which makes iteration faster and cheaper. Early results suggest the curated, filtered dataset improves quality versus an earlier attempt, and the transcript claims diminishing returns beyond roughly 500–1,000 training steps, with the possibility that far fewer samples could work if the filtering is strong enough. The end state is a set of Hugging Face datasets and fine-tuned adapters, plus a plan to iterate on better prompts and more targeted sample selection (including higher score thresholds) while avoiding “junk” comments that collect upvotes without meaningful content.

Cornell Notes

The core work is turning massive Reddit comment archives into multi-turn, multi-speaker training data for LLM fine-tuning. The workflow pulls Reddit comments from a BigQuery dataset (2005–2019), exports them to JSON chunks in Google Cloud Storage, downloads and pre-decompresses them, then parses only the fields needed to reconstruct reply chains (body, author, created time, ID, parent ID, score). Training samples are built by walking parent IDs to form conversation histories, then labeling participants as “speaker” roles with a dedicated bot name for the target reply. Filtering by minimum reply length and minimum upvotes creates multiple dataset variants, and parameter-efficient fine-tuning with AutoPEFT (`AutoPeftModelForCausalLM`) enables fast adapter checkpoint testing without repeatedly merging full models.

Why does the workflow focus on Reddit comments (not posts) and on conversation chains rather than simple instruction-response pairs?

How does the dataset reconstruction avoid producing meaningless training samples?

What makes exporting and preparing the raw Reddit data so time-consuming?

How are multi-speaker training samples represented for fine-tuning?

What role do score and length thresholds play in dataset quality?

Why does AutoPEFT matter for iteration speed during fine-tuning?

Review Questions

- When reconstructing conversation chains from Reddit comments, which fields are essential (and why) to link a reply to its prior context?

- What trade-offs arise when choosing JSON vs CSV exports and when using gzip compression for large comment bodies?

- How do minimum score and minimum length filters change the distribution of training samples, and what kinds of “junk” comments are they meant to suppress?

Key Points

1. Treat dataset curation as the main engineering challenge: training is comparatively straightforward once reply chains are correctly reconstructed.

2. Use a scalable comment source (BigQuery for 2005–2019 Reddit comments) and export in JSON chunks to keep long comment bodies usable.

3. Pre-decompress exported data once to avoid repeated CPU overhead during parsing and dataset building; consider decompressing on a NAS to reduce network bottlenecks.

4. Rebuild multi-turn context by walking parent IDs backward to form conversation histories, then package those histories as multi-speaker samples with explicit speaker labels.

5. Filter by minimum reply length and minimum upvotes (score) to reduce low-effort replies and to create multiple dataset variants for experimentation.

6. Prefer parameter-efficient fine-tuning with AutoPEFT (`AutoPeftModelForCausalLM`) so adapter checkpoints can be tested quickly without repeatedly merging or dequantizing full models.

7. Expect diminishing returns beyond roughly 500–1,000 training steps when the dataset is already well-filtered; further gains may require better sample selection rather than longer training.