But what are Hamming codes? The origin of error correction

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Scratches, noise, and transmission glitches can flip 1s and 0s—yet many storage and communication systems still recover the original data exactly. The core idea behind that resilience is to add carefully chosen redundancy so that a receiver can both detect an error and, for the common case of a single flipped bit, determine exactly which bit went wrong. Hamming codes are an early, mathematically efficient example: they use a small number of parity bits to turn “something is wrong” into “this specific position is wrong,” without needing to store full extra copies of the data.

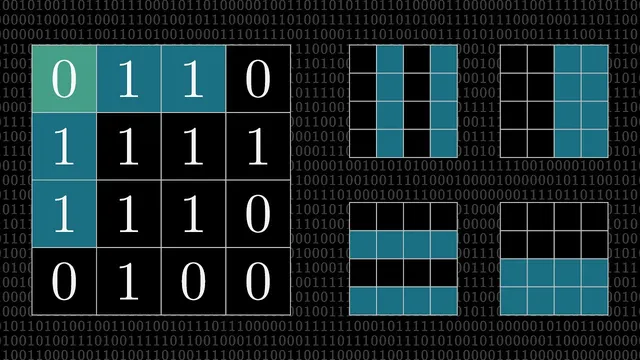

The starting point is parity checking, a simple mechanism that detects any odd number of bit flips. A sender sets one parity bit so that the total number of 1s in a group is even. If the receiver later finds odd parity, it knows at least one bit changed, though it cannot tell which one. That limitation is fundamental: parity alone can’t distinguish between “one error” and “three errors,” and an even parity result could still hide two flips, or any other even number of them. The breakthrough comes from applying multiple parity checks, but not to the whole block. Instead, each parity bit covers a carefully selected subset of positions.
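A minimal sketch of a single parity check may make the limitation concrete. The function names `with_parity` and `parity_ok` are illustrative, not taken from the source.

```python
def with_parity(data_bits):
    """Append one parity bit so the total number of 1s is even."""
    parity = sum(data_bits) % 2
    return data_bits + [parity]


def parity_ok(block):
    """True when the block still holds an even number of 1s."""
    return sum(block) % 2 == 0


block = with_parity([1, 0, 1, 1, 0, 0, 1])  # four 1s, so the parity bit is 0

one_flip = block.copy()
one_flip[2] ^= 1            # simulate a single flipped bit

two_flips = one_flip.copy()
two_flips[5] ^= 1           # simulate a second flipped bit

print(parity_ok(block))      # True
print(parity_ok(one_flip))   # False: an odd number of flips is noticed
print(parity_ok(two_flips))  # True: two flips cancel out and go unnoticed
```

In every case the check answers only "even or odd"; nothing in the result says which position changed.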

In the classic Hamming-code construction, positions are indexed within a block, and the indices that are powers of 2 are reserved for parity. Using a 16-bit layout as an illustrative example, the four parity positions (1, 2, 4, and 8) are used to locate a single-bit error. Each parity bit covers the positions whose index, written in binary, has a 1 in that parity bit’s place (so the bit at position 4 covers positions 4 through 7 and 12 through 15); taken together, the pattern of which parity checks fail acts like a compact “address” for the error location. Conceptually, it’s like playing 20 questions: each parity check is a yes/no query that halves the remaining possibilities. Four checks have 16 possible outcomes. Fifteen of them point to one of positions 1 through 15, parity positions included; the remaining “no parity checks fail” outcome has to mean “no error,” so it cannot also stand for position 0, which is why position 0 is excluded from the correction scheme.
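To make the "address" idea concrete, the sketch below lists which positions each of the four checks covers in the 16-bit layout, assuming the standard rule that a parity bit at position p covers every index with that binary digit set. The helper names `covered_by` and `locate` are hypothetical.

```python
# Positions 0..15 of a 16-bit block; parity bits sit at positions 1, 2, 4, 8.
PARITY_POSITIONS = [1, 2, 4, 8]


def covered_by(parity_pos):
    """Positions checked by one parity bit: indices with that binary digit set."""
    return [i for i in range(16) if i & parity_pos]


def locate(failed_checks):
    """Add up the positions of the failed checks to get the error's index."""
    return sum(failed_checks)


for p in PARITY_POSITIONS:
    print(p, covered_by(p))
# 1 [1, 3, 5, 7, 9, 11, 13, 15]
# 2 [2, 3, 6, 7, 10, 11, 14, 15]
# 4 [4, 5, 6, 7, 12, 13, 14, 15]
# 8 [8, 9, 10, 11, 12, 13, 14, 15]

print(locate([1, 4]))  # 5: the only position in groups 1 and 4 but not 2 or 8
```

Each group contains exactly half of the 16 positions, which is what lets every check act as one yes/no question about the error's index.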

That yields the familiar 15-11 Hamming code: 15 positions carrying 11 data bits plus 4 redundancy bits. The redundancy bits are not simple copies; they’re deterministic functions of the data that create a map from parity outcomes to bit positions. If exactly one bit flips, the receiver can identify the faulty position and correct it. If two bits flip, at least one check still fails, but the failure pattern points to a third, innocent position, so the receiver cannot tell a double error from a single one and cannot correct it.
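The following is a rough sketch of a 15-11 encoder and single-error corrector, not a reference implementation. It assumes positions are numbered 1 to 15 inside a 16-element list whose index 0 is left unused, and the names `encode`, `failed_checks`, and `correct` are made up for illustration.

```python
PARITY_POSITIONS = (1, 2, 4, 8)


def failed_checks(block):
    """Sum of the parity positions whose group has odd parity (0 if none fail)."""
    return sum(p for p in PARITY_POSITIONS
               if sum(block[i] for i in range(1, 16) if i & p) % 2)


def encode(data_bits):
    """Place 11 data bits at the non-parity positions, then set the parity bits."""
    assert len(data_bits) == 11
    block = [0] * 16  # index 0 is unused in the 15-11 code
    data_positions = [i for i in range(1, 16) if i not in PARITY_POSITIONS]
    for pos, bit in zip(data_positions, data_bits):
        block[pos] = bit
    for p in PARITY_POSITIONS:
        # block[p] is still 0 here, so this is the parity of the group's data bits.
        block[p] = sum(block[i] for i in range(1, 16) if i & p) % 2
    return block


def correct(block):
    """Flip the bit named by the failed checks, assuming at most one error."""
    fixed = block.copy()
    pos = failed_checks(fixed)
    if pos:
        fixed[pos] ^= 1
    return fixed, pos


message = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 0]
sent = encode(message)
received = sent.copy()
received[10] ^= 1                 # simulate one flipped bit in transit
fixed, pos = correct(received)
print(pos, fixed == sent)         # 10 True
```

With two flipped bits, `correct` would still report a position and flip it, which is exactly the miscorrection risk of the unextended code.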

To detect double-bit errors as well, the construction can be extended by reintroducing position 0 as an overall parity bit across the entire block. With this “extended Hamming code,” a single-bit error makes the overall parity odd, while two-bit errors keep the overall parity even—but still disturb at least one of the four correction-related parity checks. The result: single-bit errors are correctable, and two-bit errors become detectable.
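As an illustration of how the two kinds of evidence combine, the sketch below classifies a received 16-bit block. It assumes bit 0 holds the overall parity and that at most two bits flipped; the function name `classify` and the status strings are invented for this example.

```python
PARITY_POSITIONS = (1, 2, 4, 8)


def classify(block):
    """Return (status, position) for a 16-bit block whose bit 0 is overall parity."""
    position = sum(p for p in PARITY_POSITIONS
                   if sum(block[i] for i in range(16) if i & p) % 2)
    overall_odd = sum(block) % 2 == 1
    if overall_odd:
        # One flip; position 0 means the overall parity bit itself was hit.
        return "single error", position
    if position:
        # Overall parity looks fine, yet a subset check fails: two flips.
        return "double error detected", None
    return "no error detected", None


valid = [0] * 16          # the all-zero block passes every check

one = valid.copy()
one[6] ^= 1               # one flipped bit

two = one.copy()
two[11] ^= 1              # a second flipped bit

print(classify(valid))    # ('no error detected', None)
print(classify(one))      # ('single error', 6)
print(classify(two))      # ('double error detected', None)
```

In the double-error case the subset checks do produce a position (13 here), but the even overall parity signals that it should not be trusted, so the sketch reports detection only.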

The transcript walks through a full worked example of encoding an 11-bit message into a 16-bit block by setting parity bits so that each checked subset has even parity, then demonstrates decoding by recomputing parity outcomes after flipping one or two bits. It ends by hinting at a more elegant, scalable implementation—compressing the multi-parity logic into a systematic computation suitable for code—setting up a follow-up that turns the hand-calculation method into an algorithm.
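One compact way to express the four checks in code is to XOR together the indices of all bits that are set. This is offered as a sketch of the general idea, not as the follow-up's actual implementation, and the name `error_position` is hypothetical.

```python
from functools import reduce
from operator import xor


def error_position(block):
    """XOR the indices of all 1 bits; 0 means the four subset checks all pass."""
    return reduce(xor, (i for i, bit in enumerate(block) if bit), 0)


block = [0] * 16
block[3] = block[5] = block[6] = 1   # 3 ^ 5 ^ 6 == 0, so the subset checks pass
print(error_position(block))         # 0

block[9] ^= 1                        # flip one bit
print(error_position(block))         # 9
```

This works because each binary digit of the XOR is the parity of one of the four groups, so the result is the same "address" the separate checks would spell out.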

Cornell Notes

Hamming codes add a small number of parity bits to data so that a receiver can correct any single-bit error and often detect multi-bit errors. The method starts with parity checking, which can detect that “something changed” but not where. By running several parity checks on carefully chosen subsets of positions, the pattern of which checks fail encodes the error’s location—like a yes/no “address” for the flipped bit. A standard 15-11 Hamming code uses 4 parity bits to correct one error among 15 positions, while an extended version adds an overall parity bit to detect two-bit errors. This matters because it turns noisy reads (like scratched disks) into reliable recovery without storing full redundant copies.

Why does a single parity bit only detect errors but not locate them?

How do multiple parity checks let a receiver pinpoint a single flipped bit?

What is the “15-11” structure, and why does it exclude position 0 from correction?

How does the extended Hamming code detect two-bit errors?

In the worked decoding example, how does the parity pattern determine the exact bit to flip back?

Review Questions

- What information does parity provide, and what ambiguity remains after a single parity check?

- How do the four parity checks in a Hamming code combine to identify a specific bit position for a single-bit error?

- Why does adding an overall parity bit enable detection (but not correction) of two-bit errors in an extended Hamming code?

Key Points

1. Parity bits enforce even parity over selected groups, letting receivers detect that an error occurred but not where it happened.

2. Hamming codes locate single-bit errors by running multiple parity checks over carefully chosen subsets of positions.

3. The pattern of which parity checks fail functions like a compact binary address for the error location.

4. A standard 15-11 Hamming code uses 4 redundancy bits to correct any single-bit error among 15 positions, avoiding ambiguity with the “no failures” outcome.

5. An extended Hamming code adds an overall parity bit so that two-bit errors can be detected: overall parity stays even while subset checks still show inconsistencies.

6. Encoding and decoding can be done by recomputing parity conditions and mapping the resulting yes/no pattern to a specific bit index.