Can Google's new NotebookLM AI answer questions about my book?

Based on Martin Adams's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

NotebookLM can ingest a document and answer questions grounded in the uploaded text, often using clickable citations to relevant sections.

Briefing

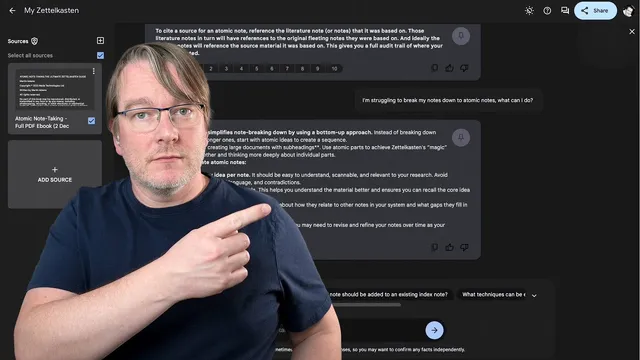

Google’s experimental NotebookLM is positioned as an AI note-taking system that can ingest a document and then answer questions, summarize concepts, and generate ideas grounded in the uploaded text. In a hands-on test using the book “Atomic Note Taking,” NotebookLM produced high-level descriptions of the book’s Zettelkasten-inspired method. These included breaking knowledge into stand-alone “atomic notes,” linking them into a growing web of understanding, and using that network to surface patterns, questions, and new insights. The most consequential takeaway is that NotebookLM can behave like a searchable, conversational layer over a source document, complete with citations that can be clicked through to relevant sections.

The workflow tested is straightforward: upload a book, then query it with prompts such as what the book is about, who it’s for, and how the method works. NotebookLM returned a concise “core concept” summary. Atomic notes capture single ideas, they’re organized using content maps rather than rigid categories, and linking supports recall, updating, and deeper thinking over time. When asked for an example of an atomic note, it generated a short, one-idea entry (e.g., “Critical thinking is about making informed and sound decisions…”). When asked how to cite sources for an atomic note, it described an audit trail: atomic notes point back to literature notes, literature notes point back to fleeting notes, and fleeting notes ideally trace to the original source material. Clicking citations led to the relevant sections in the uploaded text, reinforcing that the system draws on the provided document rather than inventing freely.

Still, the test also exposed practical limitations. Importing certain file types proved difficult (Markdown and plain text were problematic), suggesting NotebookLM may be better suited to formats like Google Docs or PDFs. More importantly, when challenged with questions that depended on non-text elements, such as a diagram or image on a specific page, NotebookLM could not answer because the text available to it appeared to omit or strip images. That gap mattered when the system claimed the book lacked examples of linked atomic notes. The tester disputed this claim and attributed the mismatch to formatting and to how content was extracted.

The system’s marketing output also showed its strengths and weaknesses: it generated a polished advert script for the book, but the tester noted it sometimes failed to retain or correctly reflect earlier answers. Overall, NotebookLM looks like a promising “question-answering over your documents” tool, but its usefulness depends heavily on document formatting and the presence of extractable text. With availability limited to the US and the project still experimental, the long-term impact hinges on whether Google can improve ingestion, citation fidelity, and multimodal understanding (especially diagrams and images) before adoption stalls or the effort is discontinued.

Cornell Notes

NotebookLM is an experimental AI note-taking tool that can ingest a document and answer questions using the uploaded text, often with clickable citations to relevant sections. In a test with “Atomic Note Taking,” it produced accurate-sounding summaries of the Zettelkasten-style “atomic notes” approach: write single-idea notes, link them into a “web” of understanding, and use that network to generate insights. It also described a citation/audit trail from atomic notes to literature notes and back to original sources. The main limitations surfaced around file import formats and missing support for non-text content like diagrams, which can cause incorrect conclusions when key examples are embedded as images.

What does NotebookLM do when given a book—does it summarize from memory or from the uploaded text?

How does the Zettelkasten-style “atomic note” method get translated into NotebookLM’s outputs?

What did NotebookLM say about citing sources for atomic notes, and why is that important?

Where did NotebookLM struggle during the test?

How did NotebookLM perform on a task outside pure summarization, like marketing copy?

Review Questions

- What evidence in the test suggests NotebookLM’s answers are grounded in the uploaded document rather than generated independently?

- Explain the “audit trail” NotebookLM gave for atomic-note citations. How does it connect atomic notes to original sources?

- Why would missing diagram/image understanding undermine NotebookLM’s usefulness for methods that rely on visual examples or page-based figures?

Key Points

1. NotebookLM can ingest a document and answer questions grounded in the uploaded text, often using clickable citations to relevant sections.

2. The system produced summaries of the atomic-notes approach: single-idea notes linked into a growing network for recall and insight.

3. NotebookLM described an audit-trail citation model linking atomic notes to literature notes and back to fleeting notes and original sources.

4. File ingestion may be limited: Markdown and plain text imports were problematic, while formats like Google Docs or PDFs appear to work better.

5. NotebookLM’s ability drops when key information is embedded as images or diagrams rather than extractable text, which can lead to incorrect conclusions.

6. Generated marketing copy and other creative outputs can be strong, but consistency may vary across a multi-question workflow.

7. Because NotebookLM is experimental and currently limited in availability, improvements to ingestion and multimodal understanding will likely determine whether it gains traction.