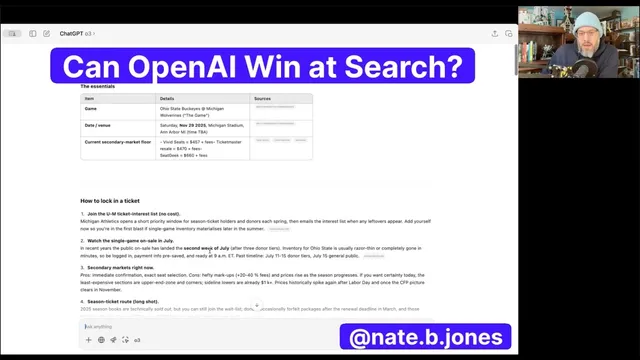

Can OpenAI Win at Search?

Based on AI News & Strategy Daily | Nate B Jones's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

LLM-based search can deliver structured, decision-ready answers (tickets, flights, next steps) without ads, reducing reliance on traditional SERPs.

Briefing

LLM-powered search experiences are now good enough to siphon meaningful “off-the-top” search demand from Google—especially for everyday, decision-heavy questions—while OpenAI’s momentum and distribution scale could make the shift fast once quality reaches the free tier. The core claim is that search value is no longer confined to ad-driven results pages; it can move into a conversational interface that returns structured, actionable answers without the clutter of traditional SERPs.

A series of live-style examples illustrates the point. For ticket hunting, the system doesn’t just list options; it surfaces game time, date, venue, average price, and then adds “next steps” and strategies for locking in tickets. For travel planning, it offers “smart flights” from Seattle and frames options in a way that’s described as less overwhelming than Google Flights—though still not portrayed as a full replacement. Even when switching domains (like quick exercises for back health), the value is framed as clarity: named reps, quick form cues, and a single place to understand how to do the exercises.

The biggest gap identified is presentation. Diagrams and demo-style visuals—common in Google’s image-first approach—are missing in these examples. That omission matters because it affects usability for tasks where visual guidance reduces friction and error. The expectation is that OpenAI can close this gap, turning text-first guidance into more complete “cheat sheets” that better match how people learn and execute.

Beyond structured answers, the transcript argues that natural-language queries can retrieve information more reliably than keyword search for certain “fuzzy memory” tasks. When asked to recall an old movie with a specific plot detail (including a distinctive aircraft), the system is said to pattern-match across pretraining data in a way that standard Google search sometimes struggles with.

Coding is used to show how search could become embedded in the model experience. A beginner-friendly prompt is framed as a practical pathway into the “AI vibe coding space,” with the system providing a stack overview and then dialing back complexity when asked. The tradeoff noted is speed: responses can be slower, attributed to reasoning that builds on prior context. Still, the guidance is described as supportive and safety-conscious—encouraging users to pause and ask for help, and reinforcing that short practice sessions help.

The closing concern is strategic and economic. If OpenAI can deliver this quality of search at scale—potentially across a free tier—Google could face a steep adoption-driven decline in search volume. The transcript suggests a “sigmoid” pattern: gradual peeling away at first, followed by a sharper drop if product adoption for basic searches crosses a tipping point. With OpenAI’s distribution reach and momentum, the argument is that competing head-on may be difficult, not because Google lacks talent, but because the cost and incentives of disrupting its own ad-based search model are misaligned with the new conversational paradigm.

Cornell Notes

LLM-based search is reaching a level where it can take substantial “top-of-funnel” and decision-oriented queries away from Google, particularly when users want structured, actionable guidance rather than a list of links. Examples include ticket planning with strategies and next steps, flight options framed for booking decisions, and health exercise guidance with clear cues and repetition structure. The main weakness identified is the lack of diagrams or demo visuals, which Google can provide quickly via image results; closing that gap could make the experience more complete. For some “fuzzy memory” questions and beginner coding guidance, natural-language pattern matching and conversational context improve reliability and usability. If this quality becomes available broadly (including the free tier), the transcript warns that search demand could decline rapidly after an adoption tipping point.

Why does the transcript claim LLM search can disrupt Google’s business model even without ads?

What specific product gap is identified as the biggest reason LLM search isn’t fully replacing Google yet?

How do the examples argue that natural-language queries can outperform keyword search for certain tasks?

What tradeoff does the transcript highlight in LLM search quality—especially for coding help?

What would have to happen for the disruption to accelerate, according to the transcript’s tipping-point argument?

Review Questions

- Which types of queries in the transcript are most likely to shift away from traditional SERPs, and why?

- What is the single biggest missing element in the LLM search examples, and how would adding it change user outcomes?

- How does the transcript connect model latency to user experience, and what does it imply about adoption?

Key Points

- 1

LLM-based search can deliver structured, decision-ready answers (tickets, flights, next steps) without ads, reducing reliance on traditional SERPs.

- 2

The most cited weakness is lack of diagrams or demo visuals, which affects usability for tasks like exercises.

- 3

Natural-language “fuzzy memory” queries can be answered more reliably by LLMs due to pattern matching across pretraining data.

- 4

Conversational context enables beginner-friendly coding guidance, including adapting complexity when users ask for simpler explanations.

- 5

Latency remains a drawback; some responses are slower, though certain prompts can be faster due to reuse of prior reasoning.

- 6

If OpenAI can match this quality on the free tier for everyday searches, adoption could accelerate quickly.

- 7

Google’s incentives and cost structure for LLM-serving may make direct competition harder, especially if search volume peels away and then drops sharply.