Caught in the Act: Unethical AI Usage in Research

Based on Andy Stapleton's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

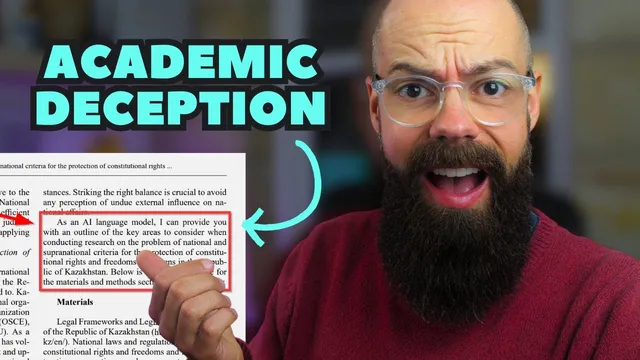

ChatGPT output has allegedly been copied verbatim into academic manuscripts, including visible “as an AI language model” caveats.

Briefing

Academics have reportedly been caught copying and pasting text from ChatGPT straight into peer-reviewed papers, sometimes even leaving telltale “as an AI language model” caveats in the manuscript. The immediate outrage misses a larger point. The real risk is not that researchers will use AI to draft or assist with writing. It is that parts of the academic quality-control system (peer review, editorial screening, and publication checks) are failing to catch obvious problems.

Examples pulled from Google Scholar searches show multiple instances of AI-generated boilerplate appearing verbatim in published work, including “as an AI language model” passages in methods or results-adjacent text. In at least one case, the copied content includes the chatbot's own statements that it lacks access to the full text or cannot rewrite a passage without the original material. These are errors that should be obvious to anyone reading the manuscript. Similar issues are reported in theses, where ChatGPT-style disclaimers about the model's limitations and mismatched prompts appear in submitted academic writing.

The transcript argues that this kind of misconduct is likely to remain relatively limited in the near term. One reason is that the flagged cases are a small fraction of a very large volume of papers. Another is that many of them appear in lower-visibility outlets rather than top-tier journals. It then turns to a more consequential failure: a paper in a high-impact journal (Elsevier’s Resources Policy, cited with an impact factor of 10.2) allegedly passed through peer review and editorial processes despite containing AI artifacts and text suggesting the authors had not properly integrated their results. The concern is not that AI can generate prose. It is that AI-generated drafts can be used to build a narrative around data without correctly presenting tables, tests, or verifiable findings, and that such deficiencies should have been caught during review, revision, typesetting, and final editorial checks.

This leads to a broader suspicion of “publishing cabals,” in which papers may be fast-tracked or accepted on the strength of personal relationships rather than rigorous scrutiny. The transcript frames this as a structural problem: if journals can publish work with glaring AI remnants, the integrity of peer review is under strain.

A parallel example comes from grant assessment. The transcript cites a Guardian report describing Australian Research Council assessor reports that allegedly contained phrases like “regenerate response.” This is the label of a ChatGPT interface button, and it can be swept up when output is copied. The transcript connects this to reviewer overload. Researchers may assess up to 20 proposals a year, each typically 50–100 pages, which amounts to roughly 1,000–2,000 pages of reading on top of teaching, supervision, publishing, and other duties. Under that pressure, shortcuts become tempting, and AI artifacts become more likely.

The proposed remedy is practical rather than punitive. Universities and academic services should develop clear, usable policies for AI tools and specify where AI use is allowed. They should also invest in enough staffing and time for researchers and reviewers to evaluate work properly. The core message is that AI use in academia is likely inevitable, but the systems around it (review rigor, editorial accountability, and workload) must keep pace to prevent quality from eroding.

Cornell Notes

The transcript describes cases where researchers allegedly copied and pasted ChatGPT output into academic writing, sometimes leaving obvious AI caveats like “as an AI language model.” While AI-assisted drafting may become normal, the bigger concern is quality-control failure: manuscripts with clear AI artifacts and missing or improperly integrated results appear to have passed peer review and editorial checks, including in a high-impact journal cited as Resources Policy (impact factor 10.2). A separate example links AI artifacts to grant assessment reports, with “regenerate response” reportedly appearing in assessor writing. The takeaway is that AI use is likely unavoidable, but universities and funders must strengthen policies and ensure reviewers have enough time and resources to maintain rigorous evaluation.

What kinds of ChatGPT artifacts show up in academic texts, and why are they significant?

Why does the transcript argue the main problem isn’t AI writing itself?

What failure is alleged in the peer-review pipeline for a high-impact journal?

How does grant reviewing connect to AI misuse, according to the transcript?

What solution does the transcript recommend for universities and academic services?

Review Questions

- Which specific AI-generated phrases mentioned in the transcript would be red flags to editors or reviewers, and what do they imply about the drafting process?

- How does the transcript distinguish between acceptable AI assistance and unacceptable AI misuse in research writing?

- What workload pressures are cited as contributing to AI artifacts in grant assessment, and how might policy or staffing address them?

Key Points

1. ChatGPT output has allegedly been copied verbatim into academic manuscripts, including visible “as an AI language model” caveats.
2. The transcript treats raw AI artifacts as evidence of inadequate human editing and verification, not just a harmless drafting shortcut.
3. A central concern is the alleged failure of peer review and editorial screening, especially when AI artifacts and missing or incorrectly integrated results appear in high-impact publications.
4. The transcript links AI artifacts in grant assessment to reviewer overload, citing the number of proposals reviewers handle and their page counts.
5. Instead of banning AI, universities and academic services should create clear, usable AI policies and enforce appropriate use.
6. Maintaining research integrity requires both stronger review processes and sufficient staffing and time for reviewers and editors.