Characterizing Test Time Compute on Graph Structur… | Kudzo Ahegbebu | OpenAI Scholars Demo Day 2021

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Test-time compute can improve accuracy on relational, graph-structured tasks, but recurrence alone may not produce a catch-up effect on algorithmic benchmarks like shortest path.

Briefing

Test-time compute—giving a model more computation at inference—can improve performance on graph-structured reasoning, but simply adding recurrence and more iterations doesn’t automatically let models “catch up” to larger, longer-trained baselines. The strongest evidence comes from graph neural network (GNN) experiments on shortest-path and Sudoku-style constraint problems, where accuracy rises as inference iterations increase and where the model’s extra compute is spent refining uncertain parts of the solution.

The talk frames a “test-time compute dream”: models should produce better outputs the longer they’re allowed to think, mirroring how humans are evaluated with more time. Two tracks are distinguished. One is generalization improvement—using extra inference steps to resolve ambiguity and correct earlier mistakes. The other is efficiency—decoupling model size from inference cost so that more parameters don’t necessarily mean more compute at runtime.

Most of the work centers on a shortest-path task cast as sequence-to-sequence prediction over city pairs. Models are trained with a fixed FLOP budget and a fixed number of recurrence steps, then tested with additional recurrence steps to see whether they eventually approach models trained without recurrence (but larger or trained longer). The result is largely negative: no clear “phase transition” appears where extra recurrence alone closes the gap. Linear probing suggests the models learn city-location structure quickly, implying the bottleneck isn’t merely representing node identities; it’s the difficulty of learning a general shortest-path algorithm from the available structure.

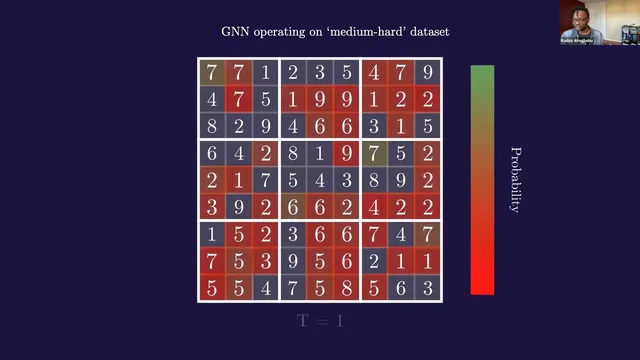

That motivates the Sudoku experiments using GNNs, which explicitly operate on graph structure. In the GNN setup, nodes carry features (e.g., cell values or city locations) and edges are encoded by an adjacency matrix. Message passing iteratively refines node hidden states using a graph refinement equation: each node updates based on a learned function of its own embedding and embeddings of its neighbors, aggregated across neighbors. Standard approaches predict only after a fixed number of refinement steps; here, loss is evaluated at every refinement step, making the model more robust when tested with more iterations than it saw during training.

On Sudoku, the model’s computation appears targeted: uncertain, low-probability cells get revisited and progressively sharpen as iterations increase. Across two datasets—“normal” and “hard”—accuracy improves almost monotonically with more inference iterations, measured at the sequence level (the entire board must be correct). Harder-than-training instances also remain solvable, supporting the idea that test-time compute can drive both robustness and generalization.

To push toward “infinite depth,” the talk introduces deep equilibrium GNN machinery, rewriting the refinement process as a fixed-point problem and using root-finding plus implicit differentiation to avoid storing intermediate states. This yields faster training and lower memory use, but with a caveat: runs can collapse after initially strong progress, possibly tied to spectral norm growth inside the fixed-point iterator.

Finally, the talk explores whether GNN adjacency structure can be learned rather than hand-specified. A small transformer generates candidate neighbors via top-k sampling from attention scores, and a surrogate gradient method (from stochastic compute graph gradient estimation) enables training despite non-differentiable sampling. The approach works in principle but trains slower and performs worse than standard GNNs.

Overall, the work argues that test-time compute is underexplored and promising—especially when paired with message passing and relational reasoning—but that achieving reliable “more thinking helps” behavior likely requires more than recurrence alone.

Cornell Notes

The core claim is that increasing computation at inference can improve performance on relational, graph-structured tasks, but recurrence alone doesn’t guarantee that a model will eventually match larger baselines. Experiments on shortest-path show that adding more test-time recurrence steps after training with a fixed budget fails to produce a clear catch-up effect, suggesting the challenge is learning a general algorithm, not just representing node identities. Sudoku experiments with GNNs show near-monotonic gains as inference iterations increase, with loss evaluated at every refinement step to make the model robust to extra test-time iterations. Deep equilibrium GNNs extend this by treating message passing as a fixed-point problem, enabling infinite-depth behavior with lower memory—though training can collapse. Learning adjacencies from scratch is demonstrated as possible but currently slower and weaker than hand-structured graphs.

Why doesn’t “more recurrence at test time” automatically let shortest-path models catch up to larger baselines?

What design choice makes the Sudoku GNN more robust to running extra refinement steps at test time?

How does the GNN update rule relate to fixed-point behavior and deep equilibrium models?

What evidence suggests test-time compute is being spent on the hardest parts of Sudoku?

What trade-off appears when trying to learn graph adjacencies from raw data instead of hand-specifying them?

What goes wrong in deep equilibrium GNN training despite its efficiency benefits?

Review Questions

- In the shortest-path experiments, what specific evaluation protocol was used to test whether extra test-time recurrence helps, and what outcome was observed?

- How does evaluating loss at every refinement step change the model’s behavior when inference uses more iterations than training?

- What does the deep equilibrium reformulation buy in memory/training speed, and what failure mode can still occur?

Key Points

- 1

Test-time compute can improve accuracy on relational, graph-structured tasks, but recurrence alone may not produce a catch-up effect on algorithmic benchmarks like shortest path.

- 2

Shortest-path models trained with fixed FLOP and fixed recurrence steps did not show a clear phase transition when tested with more recurrence steps.

- 3

Graph neural networks benefit from explicit message passing over an adjacency structure, and Sudoku accuracy rises almost monotonically with more inference iterations.

- 4

Evaluating loss at every refinement step makes GNNs more robust to running additional refinement iterations at test time.

- 5

Deep equilibrium GNNs treat refinement as a fixed-point problem, enabling infinite-depth behavior with lower memory via implicit differentiation—yet training can collapse.

- 6

Learning adjacencies from scratch using transformer attention scores and stochastic top-k sampling is feasible in principle but currently slower and less effective than hand-crafted graph structure.