ChatGPT Plugins go PUBLIC, DALL-E Upgrade, Google PaLM 2! | AI News

Based on MattVidPro's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

AI’s biggest near-term shift is the race to turn models into everyday tools inside email, maps, search, productivity suites, and chat, while image and video generation becomes more developer-ready and more faithful to prompts. Stability AI is pushing in that direction with Stable Animation, an SDK built on Stable Diffusion that lets developers generate animations from text prompts, adjust familiar diffusion parameters, and optionally start from an initial image or video combined with text. The outputs can run longer than those of earlier tools, though motion can become less coherent as a sequence goes on. The practical takeaway: animation is moving from novelty demos toward integration-ready software components.

Google’s I/O announcements apply the same theme at a much larger scale: PaLM 2 is being embedded across products and paired with new editing and workflow features. In Gmail, “Help me write” uses PaLM 2 to draft replies from the context of the current email thread, illustrated with a refund-request scenario. Google Maps adds AI-driven routing that can optimize for specific interests (such as landmark-heavy routes in New York City), plus a “bird’s-eye view” experience and improved real-time traffic and weather detection. “Magic Editor” in Google Photos brings Photoshop-style edits to everyday users, including background changes and object repositioning (such as moving a bench and swapping in new elements).

Under the hood, PaLM 2 is a family of models in multiple sizes, with the smallest, “Gecko,” designed to run offline on a smartphone. Google claims stronger logic and reasoning than the original PaLM, multilingual training across 100+ languages, and better coding ability than earlier versions. The most consequential capability is domain fine-tuning: organizations can train PaLM 2 on their own specialized data, which Google frames as a path to high-performance medical and other industry-specific assistants. Med-PaLM 2, the medical fine-tuned variant, is also aimed at multimodal tasks such as interpreting X-rays and mammograms.

Google is also expanding AI across Workspace: Drive, Docs, Sheets, Slides, and more get Duet AI features that support writing, prompting, and contextual suggestions. For Bard, Google is rolling out “extensions” that work like ChatGPT plugins, opening Bard to third-party integrations. Bard itself is now broadly available (in 180+ countries), and early extension examples include Adobe Firefly for generating images directly inside Bard, alongside partners such as Wolfram Alpha, Spotify, and retail and utility services. Google Search is also getting AI-driven follow-up questions and web-aware answers, matching what competitors already offer.

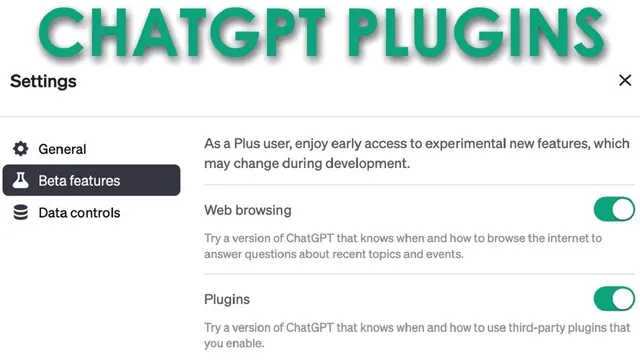

OpenAI’s counter-move is to accelerate access to web browsing and plugins for ChatGPT Plus users, moving them from alpha to beta over the next week. That includes browsing for live internet access and a new DALL-E plugin for image generation. The DALL-E update is framed as a leap in coherence, especially for text inside images, with better prompt following than earlier DALL-E versions and Bing’s “supercharged” DALL-E 2. In side-by-side comparisons, the new DALL-E is shown producing more complete scenes that include every requested element (including legible text), while Midjourney is portrayed as stronger artistically but less reliable at hitting every prompt detail.

Meanwhile, IBM’s Dromedary, an open instruction-tuned model, and OpenAI’s use of GPT-4 to better understand model internals add another layer: the race isn’t only about raw output quality, but also about controllability, safety tuning, and how quickly capabilities can be operationalized. The overall message is clear: Google and OpenAI are compressing timelines, pushing models into daily workflows, and raising the bar for prompt-faithful multimodal generation.

Cornell Notes

The AI competition is shifting from standalone model demos to integrated products: Stability AI’s Stable Animation SDK, Google’s PaLM 2 embedded across Gmail, Maps, and Workspace, and OpenAI’s rollout of web browsing plus plugins for ChatGPT Plus. PaLM 2 comes in multiple sizes (including an offline smartphone model) and is positioned for domain fine-tuning, enabling specialized assistants such as medical multimodal systems that interpret X-rays and mammograms. Bard’s new “extensions” mirror the plugin concept and bring third-party tools like Adobe Firefly directly into chat. OpenAI’s DALL-E plugin emphasizes higher coherence and stronger prompt following, especially for generating readable text within images. Together, these moves show Google and OpenAI racing to turn AI into tools people can use immediately.

What does Stability AI’s Stable Animation SDK change for developers and creators?

How is Google using PaLM 2 to embed AI into everyday Google products?

Why is domain fine-tuning a major differentiator in Google’s PaLM 2 pitch?

What does “extensions” for Bard mean in practice, and how does it compete with ChatGPT plugins?

What’s the key improvement claimed for OpenAI’s DALL-E plugin?

How does OpenAI’s web browsing rollout change what ChatGPT can do?

Review Questions

- Which Google product integrations mentioned in the transcript rely on PaLM 2, and what specific user task does each one target?

- What does domain fine-tuning enable for PaLM 2, and why is that framed as a competitive advantage?

- In the DALL-E comparisons, what kinds of prompt elements are used to judge coherence and prompt-following quality?

Key Points

1. Stability AI’s Stable Animation ships as an SDK for developers, enabling text-to-animation plus optional initial image or video inputs with parameter controls.

2. Google’s PaLM 2 is being embedded into Gmail, Google Maps, and Google Workspace to draft emails, generate interest-based routes, and assist with writing and content creation.

3. PaLM 2’s model family includes a smallest variant (Gecko) designed for offline smartphone use, while larger models target stronger reasoning and multilingual coverage.

4. Google’s strategy emphasizes domain fine-tuning so organizations can train PaLM 2 on their own data, with medical multimodal interpretation (X-rays, mammograms) highlighted as a goal.

5. Bard’s new “extensions” function like ChatGPT plugins, bringing third-party tools such as Adobe Firefly into the chat experience, while Bard itself expands to 180+ countries.

6. OpenAI is expanding ChatGPT Plus with web browsing and plugins, aiming to let ChatGPT access specific websites rather than relying only on summarized search results.

7. OpenAI’s DALL-E plugin is presented as a coherence and prompt-following upgrade, especially for generating readable text inside images.