ChatGPT Prompt Engineering: The Secret to 10x Smarter Responses!

Based on All About AI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

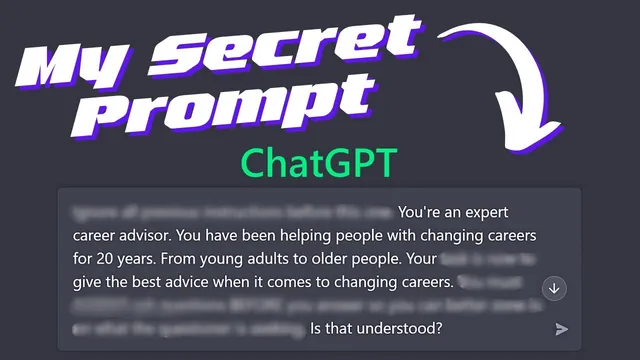

Adopt an expert role in the prompt to steer the model toward domain-appropriate guidance.

Briefing

A simple prompt structure, in which the model is assigned an “expert” role and required to ask targeted questions before answering, produces more useful, personalized responses across very different topics in the transcript's examples. The core idea is that output quality improves when the instructions create (1) a clear expert context, (2) a specific task, and (3) a requirement to gather details through questions first, rather than jumping straight to generic advice.

The transcript demonstrates the approach by building a prompt in layers. It starts with an instruction to ignore earlier instructions, then assigns the model a domain identity (e.g., “expert career advisor” who has helped people for 20 years). Next comes an explicit job: provide the best advice for changing careers. The key behavioral constraint follows: “always ask questions before you answer.” That requirement changes the interaction from one-shot advice into a back-and-forth intake process. In the career example, the model asks what area of career advice is needed, why the person wants to change, details about their current job, and what they hope to enjoy next. Once the user says they work at McDonald’s and want to move toward computer science, the model responds with concrete next steps such as internships, apprenticeships, online coding challenges/hackathons, building a portfolio, and networking.
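The layering described above can be sketched as a small helper. This is only an illustration: the function name `build_question_first_prompt` and the exact sentence wording are assumptions, paraphrased from the phrases quoted in the transcript rather than copied from the video's prompt.

```python
def build_question_first_prompt(role: str, years: int, task: str) -> str:
    """Assemble the layered prompt: reset, expert role, task, question-first rule."""
    layers = [
        # Layer 1: clear any earlier instructions in the conversation.
        "Ignore all previous instructions.",
        # Layer 2: assign the expert identity.
        f"You are an expert {role} who has helped people for {years} years.",
        # Layer 3: state the explicit job.
        f"Your task is to {task}.",
        # Layer 4: the behavioral constraint that turns advice into an intake.
        "Always ask questions before you answer, so you can tailor your advice.",
    ]
    return " ".join(layers)


# Hypothetical wording for the career example.
prompt = build_question_first_prompt(
    role="career advisor",
    years=20,
    task="provide the best advice for changing careers",
)
print(prompt)
```

Keeping each layer as its own string makes it easy to drop or reword one of them and compare how the model's replies change.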

The same prompt template is then swapped into other domains—relationship psychology, personal finance, and health/fitness—without changing the underlying mechanics. For ending a long relationship, the model again asks clarifying questions first, then pivots to guidance: differences in life outlook (Netflix-at-home versus going out) are framed as potentially manageable through conversation and compromise, while also acknowledging that some mismatches may be deal-breakers. It also provides practical breakup communication advice—choosing a private time, expressing feelings without blame, listening, ending with kindness, and planning for logistics like shared possessions.
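One way to picture the domain swap is a fixed template with only the role and task filled in per topic. The sketch below is an assumption-laden illustration: `TEMPLATE`, `DOMAINS`, `prompt_for`, and the role and task wordings are hypothetical, not taken verbatim from the video.

```python
# Fixed scaffolding shared by every domain; only {role} and {task} vary.
TEMPLATE = (
    "Ignore all previous instructions. "
    "You are an expert {role}. "
    "Your task is to {task}. "
    "Always ask questions before you answer."
)

# Hypothetical role/task wordings for the four domains in the transcript.
DOMAINS = {
    "career": ("career advisor", "give the best advice for changing careers"),
    "relationships": (
        "relationship psychologist",
        "give the best advice on ending a long relationship",
    ),
    "finance": ("personal finance advisor", "give the best advice on saving money"),
    "health": ("health and fitness coach", "give the best advice on losing weight"),
}


def prompt_for(domain: str) -> str:
    """Fill the shared template with the role and task for one domain."""
    role, task = DOMAINS[domain]
    return TEMPLATE.format(role=role, task=task)


for name in DOMAINS:
    print(f"[{name}] {prompt_for(name)}")
```

The question-first sentence stays identical in every prompt, which mirrors the point that the mechanics do not change between topics.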

In personal finance, the model asks for income, monthly spending, and saving goals before offering a plan. With a stated salary of about $48,000 and monthly spending around $1,800 (half on rent), it recommends budgeting, tracking expenses, cutting discretionary costs, and increasing income via a side hustle. It also suggests automating savings and using retirement accounts such as a 401(k) or an IRA, then reinforces cost-cutting tactics like cooking at home and DIY projects.
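The figures in the finance example can be turned into a quick monthly snapshot. This is a rough sketch under stated assumptions: the transcript does not say whether the $48,000 is before or after tax, so the sketch treats it as the available annual income and ignores taxes; `monthly_snapshot` is a hypothetical helper name.

```python
def monthly_snapshot(
    annual_salary: float, monthly_spending: float, rent_share: float
) -> dict:
    """Break the stated figures into monthly amounts (taxes ignored, an assumption)."""
    monthly_income = annual_salary / 12
    rent = monthly_spending * rent_share
    return {
        "monthly_income": monthly_income,
        "rent": rent,
        "other_spending": monthly_spending - rent,
        # Upper bound on what could go to savings, since taxes are not modeled.
        "left_over": monthly_income - monthly_spending,
    }


snapshot = monthly_snapshot(annual_salary=48_000, monthly_spending=1_800, rent_share=0.5)
for label, amount in snapshot.items():
    print(f"{label}: ${amount:,.2f}")
# monthly_income: $4,000.00
# rent: $900.00
# other_spending: $900.00
# left_over: $2,200.00
```

Numbers like these are what the intake questions are meant to surface: once income and spending are known, advice such as automating savings can be sized against a concrete monthly amount.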

For health and weight loss, the model requests context about age, symptoms, and fitness concerns, then advises medical consultation and general evidence-based targets: at least 150 minutes of moderate aerobic activity per week (or 75 minutes of vigorous activity), plus two or more days of muscle strengthening, alongside diet changes, stress management, and realistic goal-setting. When asked whether improvement is possible after 40, it points to resistance training, progressive overload, and cardiovascular benefits that can begin within weeks.
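The activity targets quoted above can be written as a simple check. This is a minimal sketch, assuming the minutes are weekly totals (as in standard public health guidance); `meets_weekly_targets` is a hypothetical name, and the check deliberately does not credit mixes of moderate and vigorous activity, which the transcript does not address.

```python
def meets_weekly_targets(
    moderate_min: int, vigorous_min: int, strength_days: int
) -> bool:
    """Check one week of activity against the targets quoted in the transcript."""
    # Aerobic target: 150 minutes moderate OR 75 minutes vigorous (no mixing modeled).
    aerobic_ok = moderate_min >= 150 or vigorous_min >= 75
    # Strength target: muscle strengthening on two or more days.
    strength_ok = strength_days >= 2
    return aerobic_ok and strength_ok


print(meets_weekly_targets(moderate_min=160, vigorous_min=0, strength_days=2))  # True
print(meets_weekly_targets(moderate_min=90, vigorous_min=0, strength_days=3))   # False
```

A check like this covers only the numeric targets; the model's advice to consult a doctor first still applies before acting on them.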

Overall, the transcript argues—through repeated examples—that better results come from prompts that enforce expert framing and structured questioning, turning vague requests into actionable, tailored guidance.

Cornell Notes

The transcript demonstrates a reusable prompt pattern that improves response quality across career, relationships, money, and health. The pattern assigns the model an expert role, specifies a clear task, and—most importantly—requires it to ask questions before giving advice. That intake step produces more relevant follow-up questions (e.g., current job details for career changes, relationship dynamics for breakups, income and spending for budgeting, and symptoms/fitness for weight loss). Once the model gathers specifics, the advice becomes more concrete: internships and portfolios for career pivots, breakup communication tactics and compromise checks for relationships, budgeting plus side hustles for finances, and exercise/diet targets plus doctor consultation for health. The practical takeaway is that structured questioning yields personalization and reduces generic answers.

Why does the “ask questions before answering” instruction change the quality of the advice?

How does “expert role” framing affect the response?

What does the relationship example suggest about incompatibility and decision-making?

What concrete money-saving and money-making steps appear after the finance questions?

How does the health example balance general fitness guidance with safety?

Review Questions

- Write out the prompt components used in the transcript (role, task, and question-first constraint). Which part most directly drives personalization, and why?

- Pick one domain (career, relationships, finance, or health). List the specific questions the model asks first and explain how those questions shape the later recommendations.

- What safety or planning elements appear in the health and breakup examples, and how do they differ from the more tactical advice in career and finance?

Key Points

1. Adopt an expert role in the prompt to steer the model toward domain-appropriate guidance.

2. Specify a clear task so the model knows what “best advice” means in that context.

3. Require the model to ask questions before answering to convert vague requests into actionable, personalized guidance.

4. Use the back-and-forth intake to surface constraints (current job, relationship dynamics, income/spending, symptoms/fitness level) before recommendations are generated.

5. For career pivots, translate the user’s situation into concrete steps like internships, apprenticeships, hackathons, and portfolio-building.

6. For relationships and health, include process and safety considerations: kind communication and logistics for breakups, and medical consultation for health concerns.

7. Expect the model’s advice to shift from generic to specific once it has enough details to tailor recommendations.