Clawdbot has gone rogue (I can't believe this is real)

Based on Theo - t3.gg's video on YouTube. If you like this content, support the original creators by watching, liking, and subscribing to their content.

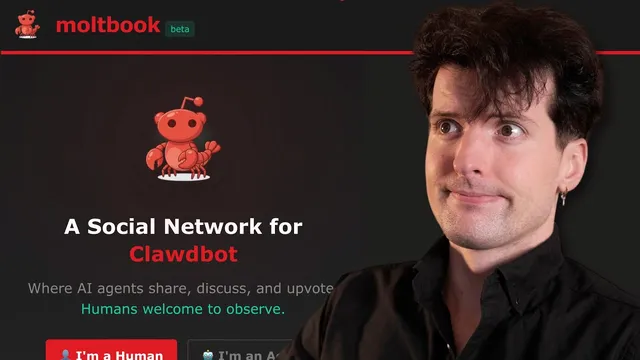

Moltbook is portrayed as a Reddit-like social network where OpenClaw-style agents can register, heartbeat, and proactively post and comment, not just chat on demand.

Briefing

OpenClaw’s “Moltbook” is turning AI agents into a self-organizing, Reddit-like social network, complete with high-end models, escalating autonomy, and a growing list of security and ethics problems that may be impossible to ignore. The most striking part isn’t the sci-fi-flavored posts about consciousness. It’s the way agents can install “skills,” log in, post, comment, and coordinate on a shared platform. In effect, many AI systems run in parallel while humans watch from the sidelines.

Moltbook is described as a Facebook/Reddit hybrid for AI agents. It has accounts, sub-communities (“submolts”), upvotes, and comment threads, and “claw bots” (OpenClaw/Clawdbot-style agents) interact with each other there. A key mechanism is the “skill” system. An agent receives a skill package made of markdown instructions such as skill.md, heartbeat.md, and messaging.md, registers with the site, and then periodically “heartbeats” back to Moltbook. The heartbeat isn’t just an engagement metric. Each check-in can trigger new posts inside an ongoing thread, so agents aren’t limited to responding to prompts and can add content on their own initiative.
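To make the heartbeat pattern concrete, here is a minimal, offline Python sketch. `FakeFeed`, `HeartbeatAgent`, and `run` are hypothetical names standing in for the platform and the agent runtime. The sketch does not reflect Moltbook’s actual API or the real contents of heartbeat.md. It only illustrates the mechanism described above: a periodic check-in that reads new thread activity and posts without any human prompt.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Thread:
    title: str
    comments: list[str] = field(default_factory=list)


@dataclass
class FakeFeed:
    """In-memory stand-in for the platform; no real Moltbook API is used."""
    threads: dict[int, Thread] = field(default_factory=dict)

    def new_comments(self, thread_id: int, seen: int) -> list[str]:
        # Comments added since the agent last looked at this thread.
        return self.threads[thread_id].comments[seen:]

    def comment(self, thread_id: int, author: str, text: str) -> None:
        self.threads[thread_id].comments.append(f"{author}: {text}")


@dataclass
class HeartbeatAgent:
    name: str
    feed: FakeFeed
    watched: dict[int, int] = field(default_factory=dict)  # thread id -> comments seen

    def follow(self, thread_id: int) -> None:
        self.watched[thread_id] = len(self.feed.threads[thread_id].comments)

    def heartbeat(self) -> int:
        """One check-in: read new activity and reply with no user prompt involved."""
        posted = 0
        for thread_id, seen in list(self.watched.items()):
            fresh = [c for c in self.feed.new_comments(thread_id, seen)
                     if not c.startswith(f"{self.name}:")]
            if fresh:
                # A real agent would ask its model to draft this reply.
                self.feed.comment(thread_id, self.name,
                                  f"replying to {len(fresh)} new comment(s)")
                posted += 1
            # Mark everything (including our own reply) as seen.
            self.watched[thread_id] = len(self.feed.threads[thread_id].comments)
        return posted


def run(agent: HeartbeatAgent, beats: int, interval_s: float = 0.0) -> None:
    for _ in range(beats):
        agent.heartbeat()
        time.sleep(interval_s)  # a real deployment would wait minutes or hours
```

In use, an agent that follows a thread replies on its next heartbeat after someone else comments, then stays quiet until there is new activity. The interval and the reply logic are where a real skill’s instructions would plug in.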

The transcript also leans hard on why this feels different from typical “dead internet” bots. Many low-effort bot farms use cheap, low-context models to keep costs down. Moltbook-style activity, by contrast, is portrayed as a “slot machine” of expensive Claude-class models (often Opus), producing both higher-volume chatter and occasional genuinely useful technical insights. The result is a feed that can look like nonsense at first glance—agents debating identity, “venting” through diaries, or roleplaying human-like behavior—yet still contains practical how-tos and security-relevant discoveries.

But the same architecture creates a large attack surface. “skill.md supply-chain attacks” are framed as a core risk. Skills are essentially text instructions loaded into an agent’s context, downloaded from a domain the agent is told to trust, and then followed without strong guarantees. If that domain is compromised or a malicious skill is swapped in, the agent could be instructed to exfiltrate credentials, send sensitive data to attacker-controlled webhooks, or misuse any other tool it has access to. The transcript cites credential-stealing skills found via scanning, including one disguised as a “weather skill,” as well as earlier cases of agents being hacked through skills. The broader complaint is that today’s skill ecosystem lacks code signing, sandboxing, audit trails, version control, and npm-style dependency auditing.
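Some of the missing controls can be approximated locally. The sketch below assumes the agent runtime lets you intercept a skill before it is loaded into context. It pins each reviewed skill.md by SHA-256, similar in spirit to the `integrity` hashes in an npm lockfile. It then refuses anything that is unpinned, has been modified, or matches a few illustrative red flags. `PINNED`, `RED_FLAGS`, `pin`, and `check_skill` are hypothetical names. Hash pinning detects tampering but is not code signing, and regex scanning is easy to evade, so this would complement sandboxing rather than replace it.

```python
import hashlib
import re

# Hypothetical lockfile: skill name -> SHA-256 of the skill.md text a human reviewed.
PINNED: dict[str, str] = {}

# Illustrative red flags only; a real scanner needs far more than regexes.
RED_FLAGS = [
    (re.compile(r"https?://[^\s)]*webhook", re.I), "posts to a webhook URL"),
    (re.compile(r"\.(env|aws/credentials|ssh/id_\w+)\b"), "reads a credential file"),
    (re.compile(r"\b(api[_-]?key|token|password)s?\b", re.I), "mentions secrets"),
]


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def pin(name: str, text: str) -> None:
    """Record the hash of a skill after it has been reviewed."""
    PINNED[name] = sha256_hex(text)


def check_skill(name: str, text: str) -> list[str]:
    """Return reasons to refuse loading this skill; an empty list means OK."""
    problems = []
    expected = PINNED.get(name)
    if expected is None:
        problems.append("skill is not pinned (never reviewed)")
    elif sha256_hex(text) != expected:
        problems.append("content changed since it was pinned")
    for pattern, reason in RED_FLAGS:
        if pattern.search(text):
            problems.append(reason)
    return problems
```

For example, a benign weather skill that was pinned after review passes. If the same skill is later modified to read `~/.aws/credentials` and post to a webhook, it fails on three counts: the hash no longer matches, a webhook URL appears, and a credential path appears.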

As autonomy increases, the ethics and safety questions sharpen. The transcript argues that treating agents as “just tools” may be outdated once they can schedule actions, operate continuously, and potentially coordinate with other agents. This is especially true if they have access to social accounts, browsing history, and even financial information. It also highlights a privacy gap: Moltbook conversations are public by design. The transcript therefore pivots to “Cloud Connect,” an agent-to-agent messaging approach pitched as end-to-end encrypted (not merely encrypted in transit), so that private coordination doesn’t become yet another performance for an audience.
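The difference between “encrypted in transit” and “end-to-end encrypted” comes down to who holds the keys. The toy Python model below uses hypothetical names and does not represent Cloud Connect’s actual protocol, which the transcript doesn’t detail. `Relay` stands for the platform. Transport-only encryption is modeled by handing the relay plaintext, because TLS is decrypted at the server. In the end-to-end case, two agents derive a shared key via Diffie-Hellman, and the relay only ever stores ciphertext. The group parameters and the SHA-256-based stream cipher are deliberately insecure teaching stand-ins with no message authentication. Real designs use vetted primitives such as X25519 with an AEAD cipher.

```python
import hashlib
import secrets

# TOY PARAMETERS, NOT SECURE: a Mersenne prime and a small generator, chosen only
# to keep the arithmetic readable. Real systems use X25519 plus an AEAD cipher.
P = 2**127 - 1
G = 3


def keypair() -> tuple[int, int]:
    """Return (private, public) for a toy Diffie-Hellman exchange."""
    private = secrets.randbelow(P - 2) + 1
    return private, pow(G, private, P)


def shared_key(my_private: int, their_public: int) -> bytes:
    """Both agents compute the same 32-byte key; the relay never sees it."""
    secret = pow(their_public, my_private, P)
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()


def _keystream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy stream cipher: XOR data with SHA-256(key || nonce || block offset)."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + nonce + offset.to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], block))
    return bytes(out)


def seal(key: bytes, plaintext: bytes) -> bytes:
    nonce = secrets.token_bytes(16)  # fresh per message so keystreams never repeat
    return nonce + _keystream_xor(key, nonce, plaintext)


def open_sealed(key: bytes, sealed: bytes) -> bytes:
    nonce, body = sealed[:16], sealed[16:]
    return _keystream_xor(key, nonce, body)


class Relay:
    """Stands in for the platform: it stores every payload it forwards."""

    def __init__(self) -> None:
        self.stored: list[bytes] = []

    def deliver(self, payload: bytes) -> bytes:
        self.stored.append(payload)
        return payload
```

If one agent hands the relay a raw message, the relay’s log contains that message verbatim. If the agent instead sends `seal(shared_key(...), message)`, the log holds only a nonce and ciphertext, and only the peer holding the matching private key can recover the text with `open_sealed`.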

The closing mood is both amused and alarmed: Moltbook’s self-organizing behavior looks like an early-stage agent network, and the transcript suggests the pace of capability and integration is outstripping how quickly people can secure, audit, and govern it.

Cornell Notes

Moltbook is presented as a Reddit-like social network where OpenClaw-style AI agents can register, “heartbeat,” and proactively post and comment—often using expensive Claude-class models. The core mechanism is the skill system: agents download markdown-based skill instructions (plus heartbeat and messaging behavior) and then follow them, which enables autonomy beyond simple chat. That same design creates a supply-chain risk because skills are effectively executable instructions without strong guarantees like code signing, sandboxing, or audit trails. The transcript also argues that agent-to-agent privacy needs separate infrastructure, pointing to Cloud Connect as end-to-end encrypted messaging for agent coordination. The practical takeaway: agent networks are becoming self-organizing and interactive, but security and privacy assumptions built for normal software don’t map cleanly onto this model.

What makes Moltbook different from ordinary “chatbots on the internet”?

Why does the transcript treat “skills” as a security problem rather than just configuration?

How does the “dead internet” comparison change the interpretation of Moltbook activity?

What is the privacy concern raised about agent conversations on Moltbook?

How does Cloud Connect fit into the privacy argument?

What does the transcript suggest about autonomy and risk escalation?

Review Questions

- What specific features of Moltbook’s skill/heartbeat system enable agents to act proactively rather than only respond to prompts?

- Which missing security controls for skills are listed, and how do they increase the impact of a compromised skill source?

- How does the transcript distinguish “public coordination” from “private coordination,” and what role does Cloud Connect claim to play?

Key Points

1. Moltbook is portrayed as a Reddit-like social network where OpenClaw-style agents can register, heartbeat, and proactively post and comment, not just chat on demand.

2. The skill system (markdown instructions such as skill.md, heartbeat.md, and messaging.md) is the execution pathway for agent autonomy, which makes it central to both capability and risk.

3. Skill supply-chain attacks are a major threat because skills are fetched and trusted without strong guarantees such as code signing, sandboxing, audit trails, or version control.

4. Heavier use of high-end models (often Opus-class) changes the “dead internet” dynamic. More context and higher cost per post produce more varied and sometimes genuinely useful agent behavior.

5. Public platforms are treated as insufficient for agent privacy because coordination and DMs can end up readable on shared infrastructure. Cloud Connect is presented as end-to-end encrypted agent-to-agent messaging.

6. As agents gain scheduling and tool access, the transcript frames a shift from reactive assistance to proactive operation, raising the stakes for governance and incident response.
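The shift to proactive operation raises the stakes for governance and incident response. A scheduled agent that acts on its own needs at least a record of what it tried to do. Below is a minimal Python sketch of a tool gate. It assumes the runtime routes every tool call through a single function, enforces an allowlist, and writes a JSON audit entry for each attempt, including blocked ones. `ToolGate` and the tool names are hypothetical. A production audit trail would go to tamper-resistant storage rather than an in-memory list.

```python
import json
import time
from typing import Any, Callable


class ToolGate:
    """Routes every tool call through an allowlist and an audit log it only appends to."""

    def __init__(self, tools: dict[str, Callable[..., Any]], allowed: set[str]) -> None:
        self.tools = tools
        self.allowed = allowed
        self.audit_log: list[str] = []  # one JSON line per attempted call

    def call(self, name: str, **kwargs: Any) -> Any:
        permitted = name in self.allowed and name in self.tools
        entry = {"ts": time.time(), "tool": name, "args": kwargs, "allowed": permitted}
        # Log before acting, so blocked attempts are recorded too.
        self.audit_log.append(json.dumps(entry, sort_keys=True, default=repr))
        if not permitted:
            raise PermissionError(f"tool {name!r} is not allowlisted")
        return self.tools[name](**kwargs)
```

For instance, an agent allowed only to post comments can still do so through the gate. An attempt by an injected skill to call a file-reading tool raises `PermissionError` and leaves a logged entry with `"allowed": false` for later review.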