Compounding Knowledge Graphs with LLMs

Based on Robert Haisfield's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Compounding knowledge work depends on a repeatable user habit—searching and reusing prior notes—not on a built-in “compounding” feature.

Briefing

The core idea is that “compounding” in knowledge work isn’t a software feature—it’s a user habit: searching and reusing prior notes at the right moment. Many people never develop that habit, so their knowledge graphs become dead ends instead of compounding assets. The proposed fix is to bake context resurfacing into the note-writing workflow itself, using LLM capabilities to trigger “aha moments” earlier and with minimal extra effort.

In practice, expert users don’t start from a blank page; they deliberately reference earlier notes that fit the thinking they’re trying to do now. Novices struggle because they can’t reliably recognize when they should search, and they often can’t think of what to type into a search bar. That creates a broken feedback loop: maintaining a knowledge graph feels like unpaid labor, so users abandon it for tools that feel immediately rewarding.

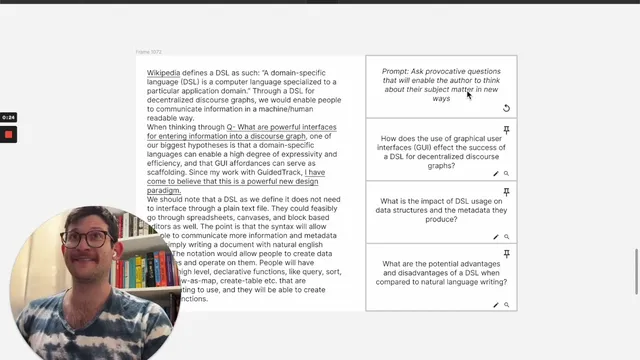

The solution being tested is a workflow that lives inside regular writing. While drafting an essay, product specification, or journal entry, the system generates provocative questions designed to steer the user toward research-oriented thinking. A “shuffle” control produces alternative questions, and a “pin” action locks in the ones the user wants to keep. Users can also edit questions directly via a “Looking Glass” interface.

The key step is what happens after a question is selected. The system converts the question into a search across the user’s knowledge graph, updating the sidebar with relevant page titles and specific paragraphs likely to help answer the question. Under the hood, this could be a straightforward semantic search, or a more elaborate approach that generates a response and then searches for semantically similar text from that response. Either way, the user gets targeted evidence rather than a vague list.

To reduce distraction when results are large, a “summarize” button compresses the retrieved material into a few scannable paragraphs. Once the user likes a particular question and its results, pinning does more than preserve it: it “reifies” the chain by turning the question, the associated evidence, and the resulting summary into a graph-based structure. That makes the connection queryable later—so the user’s intermediate reasoning becomes accessible data, not lost context.

A central design choice is the intermediate question step. Instead of only retrieving semantically similar notes, selecting a question provides explicit context for why results are related: the linkage is through question backlinks, not just shared keywords. The workflow also encourages a style of thinking where users may write a response to the question, even if they never manually convert their intent into a search.

The demo is not built yet, but the direction is clear: move beyond “fancy autocomplete” and packaged prompt templates toward LLM-driven, context-aware resurfacing that helps prior self become a current self’s research assistant—across apps that support both reading and searching.

Cornell Notes

Compounding knowledge work depends on a habit: searching and reusing prior notes at the right moment. Many users don’t do it because they miss the cue to search and can’t easily decide what to search for, so knowledge graphs feel like extra maintenance. The proposed workflow embeds that behavior into writing: while drafting, an LLM generates provocative questions, and selecting one triggers a targeted search over the knowledge graph for relevant titles and paragraphs. Summaries can compress large result sets, and pinning turns the question-evidence-summary chain into a graph structure that’s later queryable. The question-first design also clarifies why results are related via question backlinks, encouraging research-oriented thinking rather than keyword matching.

Why does “compounding” often fail for knowledge graph users, even when they store lots of notes?

How does the proposed workflow help users decide what to search for?

What happens after a user selects a question?

Why include a summarization step for search results?

What does pinning accomplish beyond saving a question?

Why generate questions first instead of directly retrieving semantically similar notes?

Review Questions

- How does the workflow turn an intermediate reasoning step (a selected question) into queryable knowledge later?

- What problems does the question-first design solve compared with a semantic search bar that only returns related notes?

- Describe two possible backend approaches for retrieving evidence after a question is selected, and explain how each would still produce the same user-facing outcome.

Key Points

- 1

Compounding knowledge work depends on a repeatable user habit—searching and reusing prior notes—not on a built-in “compounding” feature.

- 2

Novices often fail because they miss the cue to search and can’t easily decide what to search for.

- 3

Embedding LLM-generated provocative questions into the writing workflow reduces the effort required to initiate useful searches.

- 4

Selecting a question should trigger a targeted knowledge-graph search that returns both page titles and specific supporting paragraphs.

- 5

Summarizing retrieved evidence helps users scan quickly and reduces distraction when result sets are large.

- 6

Pinning should convert the question–evidence–summary chain into a graph structure so later retrieval can reuse the connection.

- 7

Question backlinks provide clearer relevance than keyword overlap, making it easier to understand why retrieved notes matter.