DBSCAN Clustering Algorithms | Density Based Clustering | How DBSCAN Works | CampusX

Based on CampusX's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

DBSCAN clusters by density using ε (neighborhood radius) and MinPts (minimum neighbors), not by pre-setting the number of clusters.

Briefing

DBSCAN’s core strength is that it clusters data by density—grouping together regions where points are packed closely—while automatically flagging sparse points as noise. That density-first approach matters because many real datasets don’t form neat, spherical clusters, and algorithms like k-means can break down when cluster shapes are irregular or when outliers distort centroids.

The discussion starts with why k-means often fails in practice. k-means requires the number of clusters up front, which is especially awkward in high-dimensional data that cannot be inspected visually. Heuristics such as elbow plots can suggest a cluster count, but the elbow itself is often ambiguous. k-means is also sensitive to outliers: a single distant point can pull a centroid away from the dense region it should represent. Finally, k-means assumes centroid-based, roughly spherical structure; when data forms non-spherical shapes, it can produce incorrect groupings.
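The outlier sensitivity comes from the centroid being a plain mean. As a small illustration (the coordinates are made up for this sketch, not taken from the video), adding one distant point to a tight group of four moves the mean far outside the group:

```python
def centroid(points):
    """Arithmetic mean of 2-D points, which is what a k-means centroid is."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)

# Hypothetical tight cluster around (1.5, 1.5).
cluster = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (2.0, 2.0)]

clean = centroid(cluster)                      # mean of the four nearby points
shifted = centroid(cluster + [(50.0, 50.0)])   # same cluster plus one outlier

print(clean, shifted)
```

The clean centroid sits at (1.5, 1.5), while the single outlier drags it to roughly (11.2, 11.2), a location where no data point lies.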

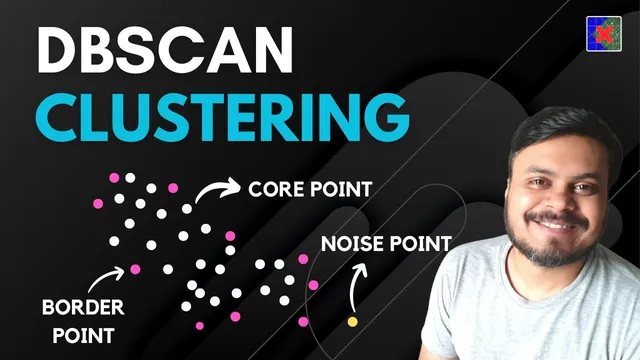

DBSCAN avoids those pitfalls by using two hyperparameters: ε (epsilon), the neighborhood radius, and MinPts, the minimum number of points required to consider a region dense. For any point, DBSCAN counts how many points fall within its ε-neighborhood. If that count is at least MinPts, the point is a core point. If the count is below MinPts but the point lies within ε of a core point, it becomes a border point. Points that are neither core nor border are labeled as noise.
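The core, border, and noise rules above can be sketched in a few lines of plain Python. This is a minimal illustration, not an optimized implementation: the function name `classify_points` and the sample coordinates are hypothetical, and it assumes Euclidean distance and that a point counts as its own neighbor (the convention scikit-learn uses for `min_samples`).

```python
from math import dist

def classify_points(points, eps, min_pts):
    """Label each point 'core', 'border', or 'noise'."""
    # Neighborhood of point i: indices of all points within eps of it.
    # Assumption: the point itself is included in the count.
    neighbors = [
        [j for j, q in enumerate(points) if dist(p, q) <= eps]
        for p in points
    ]
    is_core = [len(nb) >= min_pts for nb in neighbors]

    labels = []
    for i, nb in enumerate(neighbors):
        if is_core[i]:
            labels.append("core")
        elif any(is_core[j] for j in nb):
            labels.append("border")   # too few neighbors, but near a core point
        else:
            labels.append("noise")
    return labels

# Hypothetical data: a small square, one point hanging off it, one far away.
points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (2.0, 1.0), (8.0, 8.0)]
labels = classify_points(points, eps=1.1, min_pts=3)
print(labels)
# ['core', 'core', 'core', 'core', 'border', 'noise']
```

Here (2, 1) has only two points in its neighborhood, so it is not core, but it lies within ε of the core point (1, 1) and therefore becomes a border point.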

The algorithm then builds clusters through the idea of density connectivity. Two points are density-connected if a chain of core points links them and every consecutive pair in the chain lies within ε of each other. If no such chain exists, because every path would pass through a non-core region or would need a step longer than ε, the points belong to different clusters.
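One way to check density connectivity, following the simplified core-chain description above, is a breadth-first search that only steps between core points at most ε apart. The function name `density_connected` and the sample points are assumptions made for this sketch.

```python
from collections import deque
from math import dist

def density_connected(points, eps, min_pts, a, b):
    """True if core points a and b are linked by a chain of core points,
    with each consecutive pair in the chain at most eps apart."""
    def neighbors(i):
        return [j for j, q in enumerate(points) if dist(points[i], q) <= eps]

    # Assumption: a point counts toward its own neighborhood size.
    core = {i for i in range(len(points)) if len(neighbors(i)) >= min_pts}
    if a not in core or b not in core:
        return False

    seen, queue = {a}, deque([a])
    while queue:
        i = queue.popleft()
        if i == b:
            return True
        for j in neighbors(i):
            if j in core and j not in seen:   # only walk through core points
                seen.add(j)
                queue.append(j)
    return False

# Two hypothetical runs of points on a line, separated by a wide gap.
points = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0),
          (10.0, 0.0), (11.0, 0.0), (12.0, 0.0), (13.0, 0.0)]

same_run = density_connected(points, eps=1.5, min_pts=2, a=0, b=3)
across_gap = density_connected(points, eps=1.5, min_pts=2, a=0, b=4)
print(same_run, across_gap)
```

The first pair is connected through the chain 0, 1, 2, 3. The second is not, because the step from x = 3 to x = 10 is longer than ε.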

Operationally, DBSCAN runs in four steps. First, it sets ε and MinPts, then labels every point as core, border, or noise based on neighborhood counts. Second, it iterates over unassigned core points: each time it finds a new core point, it starts a new cluster and expands it by repeatedly adding all core points density-connected to the current cluster. Third, it assigns each border point to the cluster of its nearest core point within ε. Fourth, it leaves noise points unassigned.
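The four steps can be put together in a compact from-scratch sketch. It follows the description above, including the nearest-core rule for border points, whereas many implementations simply give a border point to whichever cluster reaches it first. The function name `dbscan`, the `NOISE` constant, and the sample data are illustrative assumptions, and the quadratic neighbor search is only suitable for small inputs.

```python
from collections import deque
from math import dist

NOISE = -1

def dbscan(points, eps, min_pts):
    n = len(points)

    # Step 1: neighborhoods and core flags (a point is its own neighbor).
    neighbors = [[j for j in range(n) if dist(points[i], points[j]) <= eps]
                 for i in range(n)]
    is_core = [len(nb) >= min_pts for nb in neighbors]
    labels = [NOISE] * n

    # Step 2: start a cluster at each unassigned core point and expand it
    # through all core points reachable by steps of at most eps.
    cluster_id = 0
    for start in range(n):
        if not is_core[start] or labels[start] != NOISE:
            continue
        labels[start] = cluster_id
        queue = deque([start])
        while queue:
            i = queue.popleft()
            for j in neighbors[i]:
                if is_core[j] and labels[j] == NOISE:
                    labels[j] = cluster_id
                    queue.append(j)
        cluster_id += 1

    # Step 3: attach each border point to the cluster of its nearest core point.
    for i in range(n):
        if is_core[i]:
            continue
        core_nbrs = [j for j in neighbors[i] if is_core[j]]
        if core_nbrs:
            nearest = min(core_nbrs, key=lambda j: dist(points[i], points[j]))
            labels[i] = labels[nearest]

    # Step 4: anything still labeled NOISE stays unassigned.
    return labels

points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (2.0, 1.0),
          (8.0, 8.0),
          (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
labels = dbscan(points, eps=1.1, min_pts=3)
print(labels)
# [0, 0, 0, 0, 0, -1, 1, 1, 1]
```

In the second group only (10, 10) is a core point. The other two points join cluster 1 as border points, and (8, 8) stays as noise.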

A practical implementation example uses scikit-learn’s DBSCAN: the model is fitted with ε (eps) and MinPts (min_samples), and the cluster labels are read from the fitted model’s labels_ attribute, where noise points receive a label of −1. The walkthrough also highlights how changing hyperparameters can dramatically alter results: increasing MinPts can turn previously clusterable points into noise, while increasing ε can merge clusters by expanding neighborhoods.
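A sketch of that scikit-learn workflow might look like the following. The dataset (two half-moons from `make_moons` plus one hand-placed distant point), the parameter values, and the helper `summarize` are assumptions chosen for illustration, not the data or settings used in the video.

```python
import numpy as np
from sklearn.cluster import DBSCAN
from sklearn.datasets import make_moons

# Toy data (assumed for this sketch): two interleaved half-moons
# plus one isolated point far from both.
X, _ = make_moons(n_samples=300, noise=0.05, random_state=0)
X = np.vstack([X, [[3.0, 3.0]]])

model = DBSCAN(eps=0.2, min_samples=5).fit(X)
labels = model.labels_   # one integer per row of X; -1 marks noise

def summarize(eps, min_samples):
    """Return (number of clusters, number of noise points) for one setting."""
    found = DBSCAN(eps=eps, min_samples=min_samples).fit_predict(X)
    n_clusters = len(set(found.tolist()) - {-1})
    n_noise = int(np.sum(found == -1))
    return n_clusters, n_noise

# Compare a baseline with a larger MinPts and with a larger eps.
for eps, min_samples in [(0.2, 5), (0.2, 20), (1.0, 5)]:
    print(eps, min_samples, summarize(eps, min_samples))
```

On this data the isolated point is labeled −1 at eps=0.2. Raising min_samples can only keep or increase the amount of noise, and raising eps to 1.0 bridges the gap between the two moons so that they are reported as a single cluster.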

Finally, the advantages and limitations are laid out. DBSCAN is robust to outliers, doesn’t require specifying the number of clusters, and can find arbitrarily shaped clusters. But it is sensitive to ε and MinPts, struggles when clusters have very different densities, and doesn’t provide a direct prediction function for new points without re-running clustering. The takeaway is clear: DBSCAN is powerful for density-shaped structure, but it demands careful tuning of its two neighborhood parameters.
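scikit-learn's DBSCAN indeed has no `predict` method. A common workaround, shown here only as a hedged sketch, is to give a new point the label of its nearest core sample when that sample lies within ε, and to call it noise otherwise. The helper `assign_new_points` is hypothetical and assumes the default Euclidean metric; it relies on the fitted attributes `components_`, `core_sample_indices_`, and `labels_`. It approximates cluster membership without updating the clustering itself.

```python
import numpy as np
from sklearn.cluster import DBSCAN

def assign_new_points(model, X_new):
    """Label each new point by its nearest core sample if within eps, else -1."""
    X_new = np.asarray(X_new, dtype=float)
    out = np.full(len(X_new), -1, dtype=int)
    if len(model.components_) == 0:      # no core samples, so everything is noise
        return out
    core_labels = model.labels_[model.core_sample_indices_]
    for i, x in enumerate(X_new):
        d = np.linalg.norm(model.components_ - x, axis=1)
        nearest = int(np.argmin(d))
        if d[nearest] <= model.eps:
            out[i] = core_labels[nearest]
    return out

# Two hypothetical, well-separated squares of points.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1],
              [10, 10], [10, 11], [11, 10], [11, 11]], dtype=float)
model = DBSCAN(eps=1.5, min_samples=3).fit(X)

new_labels = assign_new_points(model, [[0.5, 0.5], [10.5, 10.5], [5.0, 5.0]])
print(new_labels)
```

The first two new points inherit the labels of the squares they fall into, and the point at (5, 5) is too far from any core sample and is returned as −1. Because the fitted model is not changed, new points can never create a cluster or turn old points into core points; for that, the clustering has to be re-run.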

Cornell Notes

DBSCAN clusters data by density rather than by distance to centroids. It uses two hyperparameters: ε (epsilon), the radius of a point’s neighborhood, and MinPts, the minimum number of points required for that neighborhood to be considered dense. Points are categorized as core (neighborhood count ≥ MinPts), border (neighborhood count < MinPts but within ε of a core point), or noise (neither core nor border). Clusters form by density connectivity: core points can be linked through chains where each step stays within ε. This matters because DBSCAN can find non-spherical clusters and automatically label outliers as noise, but it can fail or merge clusters when ε/MinPts are poorly chosen or when cluster densities differ widely.

Why does DBSCAN avoid the “number of clusters” problem that k-means faces?

How exactly do ε and MinPts determine whether a point is core, border, or noise?

What does “density-connected” mean, and why is it central to cluster expansion?

What is the difference between assigning border points and expanding via core points?

How do hyperparameter changes (MinPts or ε) alter the clustering outcome?

When does DBSCAN tend to struggle, even though it can find arbitrary shapes?

Review Questions

- If a point has fewer than MinPts neighbors within ε, what conditions would still allow it to be labeled as a border point?

- Describe the four main steps of DBSCAN and specify which step uses density connectivity.

- Why can increasing ε cause two clusters to merge, even if the underlying data still contains two distinct dense regions?

Key Points

1. DBSCAN clusters by density using ε (neighborhood radius) and MinPts (minimum neighbors), not by pre-setting the number of clusters.

2. Core points are those whose ε-neighborhood contains at least MinPts points; border points are within ε of a core point but don’t meet MinPts themselves; noise points are neither.

3. Clusters form by density connectivity: core points can be linked through chains of core-to-core steps where each step stays within ε.

4. DBSCAN’s workflow expands clusters using core points first, then assigns border points to the nearest reachable core cluster, and leaves noise unassigned.

5. Hyperparameters are highly influential: raising MinPts can turn cluster points into noise, while raising ε can merge clusters by expanding reachability.

6. DBSCAN is robust to outliers and can find arbitrarily shaped clusters, but it can struggle when clusters have widely different densities.

7. DBSCAN doesn’t provide a direct prediction rule for new points; new data typically requires re-running the clustering process.