Deep Learning Chatbot R&D

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

A large Reddit-trained neural machine translation chatbot can produce noticeably better replies by treating generation as an ensemble problem: running many checkpoints saved during training and then selecting among their outputs with lightweight, hand-built scoring rules. Instead of trusting a single model’s “best” beam-search response, the workflow generates dozens to hundreds of candidate replies, scores them for surface-level quality signals (like punctuation and repetition), and then keeps the top-ranked options. The practical payoff is immediate: responses that were often incomplete, repetitive, or nonsensical under a single model become more consistently coherent and contextually plausible once multiple checkpoints are pooled.

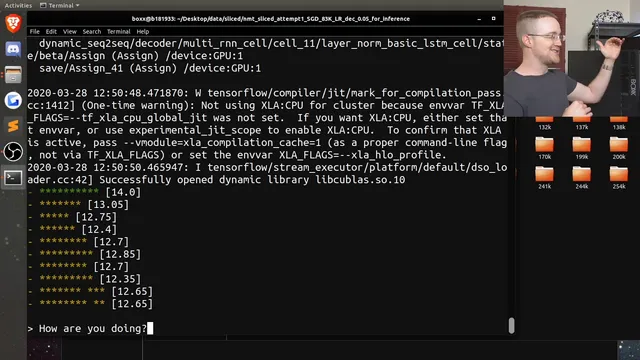

The system starts with a neural machine translation architecture repurposed for English-to-English chat. Using Reddit comment/response pairs, it trains a very large model (20 total layers: a 10-layer encoder and a 10-layer decoder, with 1,024 nodes per layer) on RTX 8000 hardware. Training is checkpointed over many steps (the transcript references behavior around 100k, 120k, and later up to roughly 259k steps). A key observation is that no single checkpoint is uniformly good: improving performance on some questions can degrade answers to others, producing a classic “good at some, bad at others” pattern.

To address that instability, the project shifts from single-model selection to ensemble-style selection. The approach runs many models in parallel (22 checkpoints are mentioned as active in the ensemble), so each user input is run through every checkpoint. Each model returns multiple beam-search candidates (the transcript notes a beam width producing about ten responses per model), yielding a large pool of candidate replies, on the order of 220 per prompt at those settings. The ensemble then collects these outputs into a dictionary keyed by response text, alongside an existing “Daniel score” (a prior scoring function that the narrator finds imperfect).
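The pooling step can be sketched as follows. This is a minimal illustration, not the project's code: the `Checkpoint` callable interface, `pool_candidates`, and `fake_checkpoint` are hypothetical names, and keeping the highest prior score for a duplicated reply is an assumption about how duplicates might be merged.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: each checkpoint is a callable that takes a prompt and
# returns (reply_text, prior_score) pairs, one per beam-search candidate.
Checkpoint = Callable[[str], List[Tuple[str, float]]]


def pool_candidates(prompt: str, checkpoints: List[Checkpoint]) -> Dict[str, float]:
    """Merge beam candidates from every checkpoint into one dictionary.

    Keys are reply texts; values are the best prior score seen for that text,
    so a reply produced by several checkpoints is stored only once.
    """
    pool: Dict[str, float] = {}
    for infer in checkpoints:
        for text, score in infer(prompt):
            text = text.strip()
            if not text:
                continue  # skip empty generations
            if text not in pool or score > pool[text]:
                pool[text] = score
    return pool


def fake_checkpoint(replies: List[Tuple[str, float]]) -> Checkpoint:
    """Stand-in for a real model so the sketch runs without any weights."""
    return lambda prompt: list(replies)


if __name__ == "__main__":
    ckpts = [
        fake_checkpoint([("I am fine.", 0.4), ("I am", 0.9)]),
        fake_checkpoint([("I am fine.", 0.7), ("Doing well, thanks!", 0.5)]),
    ]
    print(pool_candidates("How are you?", ckpts))
```

Note that the unfinished reply "I am" carries the highest prior score in this toy data, which mirrors the kind of mis-ranking that motivates a separate scoring pass.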

Because the existing score is unreliable—sometimes ranking clearly unfinished or low-quality replies highly—the next step is building new scoring heuristics. The transcript describes a rudimentary “H score” based on three signals: whether the response ends with acceptable punctuation (or certain emoji-like endings), the response length (favoring longer answers when they aren’t repetitive), and a repetition penalty computed by counting repeated words. A further refinement idea is penalizing overuse of the word “I” by counting occurrences and reducing the score when it appears too often. After scoring, the candidates are sorted by score and the top slice is used as the response set.
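A heuristic of this kind might look like the sketch below. The signals (ending punctuation, length, repetition, overuse of "I") come from the description above, but every constant, weight, and function name here is an illustrative assumption rather than a value from the video.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# All constants below are illustrative assumptions, not values from the video.
GOOD_ENDINGS = (".", "!", "?", ":)", ":D", ";)")
ENDING_BONUS = 5.0
LENGTH_WEIGHT = 0.5
MAX_LENGTH_WORDS = 20   # cap so that length alone cannot dominate the score
REPEAT_PENALTY = 1.0    # cost per repeated word occurrence
I_ALLOWED = 2           # occurrences of "I" tolerated before penalizing
I_PENALTY = 1.0


def h_score(reply: str) -> float:
    """Score a reply on ending, length, repetition, and overuse of 'I'."""
    words = reply.split()
    if not words:
        return float("-inf")
    score = 0.0
    # 1) Reward replies that end on punctuation or an emoticon-like ending.
    if reply.rstrip().endswith(GOOD_ENDINGS):
        score += ENDING_BONUS
    # 2) Reward length, up to a cap.
    score += LENGTH_WEIGHT * min(len(words), MAX_LENGTH_WORDS)
    # 3) Penalize repetition: every occurrence of a word beyond its first.
    counts = Counter(w.lower().strip(".,!?") for w in words)
    score -= REPEAT_PENALTY * sum(c - 1 for c in counts.values() if c > 1)
    # 4) Penalize overuse of the word "I".
    i_count = sum(1 for w in words if w.strip(".,!?") == "I")
    score -= I_PENALTY * max(0, i_count - I_ALLOWED)
    return score


def top_replies(candidates: Iterable[str], k: int = 3) -> List[Tuple[str, float]]:
    """Sort candidates by h_score (highest first) and keep the top k."""
    scored = [(c, h_score(c)) for c in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    for text, score in top_replies(["I am", "I am fine.", "the the the the"]):
        print(f"{score:6.2f}  {text}")
```

Because the rules are plain arithmetic, each weight can be tuned independently; raising `LENGTH_WEIGHT`, for example, would push the selection toward longer replies.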

When the ensemble selection is applied live, the chatbot’s behavior changes in a way that’s easy to see: it stops getting stuck on one repetitive reply pattern and instead surfaces varied, often more sensible responses. The transcript also highlights multilingual quirks—responses can appear in Spanish and other languages, and the project considers using language detection and translation (e.g., converting non-English replies to English) while preserving the ability to retain useful non-English outputs.
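One way to handle mixed-language output without discarding it is to group candidates by detected language and decide per group what to do. The sketch below assumes a pluggable detector; `Detector`, `group_by_language`, and `toy_detect` are hypothetical names, and `toy_detect` is a deliberately crude stand-in, not a real language-identification method.

```python
from typing import Callable, Dict, List

# Hypothetical detector signature: takes text, returns a language code such as
# "en" or "es". A real system would plug in a language-identification library;
# none is assumed here.
Detector = Callable[[str], str]


def group_by_language(replies: List[str], detect: Detector) -> Dict[str, List[str]]:
    """Bucket replies by detected language instead of dropping non-English ones."""
    groups: Dict[str, List[str]] = {}
    for reply in replies:
        groups.setdefault(detect(reply), []).append(reply)
    return groups


def toy_detect(text: str) -> str:
    """Crude stand-in: flags text containing two or more Spanish hint words."""
    spanish_hints = {"el", "la", "que", "es", "no", "sé", "pero", "por"}
    words = {w.strip(".,!?¿¡").lower() for w in text.split()}
    return "es" if len(words & spanish_hints) >= 2 else "en"


if __name__ == "__main__":
    replies = ["I have no idea.", "No sé qué es eso."]
    print(group_by_language(replies, toy_detect))
```

Keeping the non-English bucket around, rather than filtering it out, leaves room to translate those replies later or to surface them as they are.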

The work remains explicitly R&D: scoring is still basic, and the next improvements include coherence checks using NLP tools (NLTK or spaCy), better handling of emoji/punctuation, repetition and length weighting, and additional filters such as detecting subreddit-style links (e.g., “/r/...”) that sometimes produce genuinely good replies. The broader takeaway is that checkpoint ensembles plus transparent, modifiable scoring heuristics can turn a temperamental large model into a more dependable conversational system without waiting for a perfect end-to-end metric.
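The subreddit-link filter mentioned above could start from a simple regular expression. This is a sketch under assumptions: the pattern (an optional leading slash, `r/`, then 2 to 21 letters, digits, or underscores) and the function name `find_subreddits` are illustrative choices, not taken from the project.

```python
import re
from typing import List

# Assumed pattern: optional leading "/", then "r/", then 2-21 word characters.
# The lookbehind avoids matching inside URLs or ordinary words like "for/real".
SUBREDDIT_RE = re.compile(r"(?<![\w/])/?r/([A-Za-z0-9_]{2,21})\b")


def find_subreddits(reply: str) -> List[str]:
    """Return the subreddit names referenced in a reply, in order of appearance."""
    return SUBREDDIT_RE.findall(reply)


if __name__ == "__main__":
    print(find_subreddits("Try /r/learnpython for that."))
    print(find_subreddits("No links here."))
```

A scoring rule could then add a small bonus when `find_subreddits` returns a non-empty list, or simply exempt such replies from the ending-punctuation check.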

Cornell Notes

The project repurposes a neural machine translation model for English-to-English chat using Reddit comment/response data. Training checkpoints behave inconsistently: some steps answer certain questions well while failing on others. To stabilize quality, the system runs many checkpoints (22) and collects beam-search candidates from each, producing a large pool of replies per prompt. Instead of trusting the model’s single “top” output, the code scores candidates using heuristics—especially acceptable ending punctuation/emoji, response length, and word repetition penalties—then selects the highest-scoring replies. This matters because it improves coherence and variety without requiring a perfect learned scoring model, and it creates a testbed for iterating on better scoring rules.

Why does a single chatbot checkpoint struggle, and what pattern does the transcript observe during training?

How does the ensemble change the candidate-generation process compared with using one model?

What is the “Daniel score,” and why does the project still build new scoring rules?

What does the rudimentary “H score” reward or penalize?

How does the ensemble selection affect observable chatbot behavior?

What multilingual issue does the project raise, and what mitigation is considered?

Review Questions

- How does checkpoint ensembling address the “good at some questions, bad at others” behavior seen during training?

- Which heuristic signals are used in H score, and how would changing their weights likely affect the chatbot’s tendency toward short vs. long replies?

- What kinds of failure modes does the transcript associate with the existing Daniel score, and what new scoring features are proposed to fix them?

Key Points

1. Train-time checkpoints vary sharply in conversational quality, so selecting only one model’s output leaves many prompts underserved.

2. Running multiple checkpoints in parallel and pooling beam-search candidates increases the chance of finding a high-quality reply for each prompt.

3. Hand-built scoring heuristics can outperform a single imperfect metric by explicitly rewarding punctuation endings and penalizing repetition.

4. Response length is treated as a quality proxy because the underlying loss dynamics often push the model toward overly short answers.

5. A repetition penalty (word counts) and a proposed “I”-frequency penalty are used to reduce rambling and self-referential loops.

6. Multilingual outputs can be useful but complicate evaluation; language detection and translation are considered to normalize replies.

7. Next-step improvements include coherence checks (NLTK/spaCy), better emoji/punctuation handling, and detecting subreddit-style links that sometimes yield good responses.