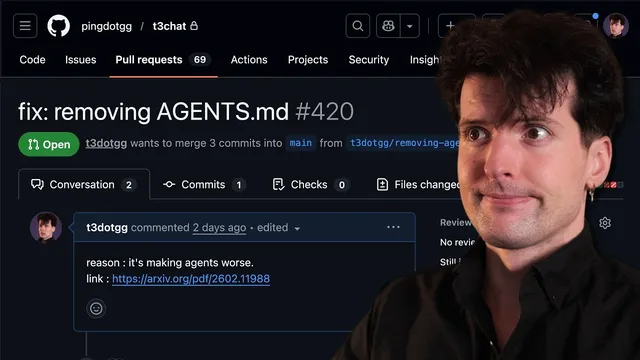

Delete your CLAUDE.md (and your AGENT.md too)

Based on Theo - t3.gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Benchmark results suggest AGENT.md/CLAUDE.md files can reduce coding-agent task success while increasing runtime cost.

Briefing

Coding agents often get “tailored” to a repository using context files like AGENT.md or CLAUDE.md: documents that list repo structure, tooling commands, and preferred patterns. A new benchmark study finds that this widely encouraged practice can backfire. LLM-generated context files slightly lower task success, developer-written ones help only marginally, and in both cases costs rise by more than 20%. The implication is blunt: stuffing large, repo-specific instruction files into the prompt may steer models away from the fastest or most correct path, and it also makes them more expensive to run.

The study evaluates coding agents on real GitHub issues under three conditions: repositories with the context files their developers committed, the same repositories with those files removed, and a setup where the agent generates its own context file before starting the task. Developer-provided context files deliver only marginal gains, about a 4% average improvement versus having no file, while LLM-generated context files show a small average decrease of about 3%. The headline isn’t just accuracy; it’s efficiency. Context files prompt more exploration, testing, and reasoning, which drives costs up by more than 20%. The study therefore recommends a conservative approach: avoid large, generated instruction files and include only minimal requirements (for example, the specific tooling to use).
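To see how a small accuracy change and a cost increase combine, the following is a rough sketch of "cost per solved task" under each condition. The baseline success rate and baseline cost are invented for illustration and do not come from the study. The sketch also assumes the 4% and 3% figures are percentage-point changes and treats the "more than 20%" cost increase as exactly 20%, which is a lower bound.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    name: str
    success_delta: float    # change in success rate, in percentage points (assumed reading)
    cost_multiplier: float  # cost per attempt relative to the no-file baseline


def cost_per_solved_task(baseline_success: float, baseline_cost: float, cond: Condition) -> float:
    """Expected spend needed to obtain one solved task under a condition."""
    success = baseline_success + cond.success_delta / 100.0
    if success <= 0:
        raise ValueError("success rate must be positive")
    return baseline_cost * cond.cost_multiplier / success


# Hypothetical baseline values, NOT taken from the study.
BASELINE_SUCCESS = 0.50
BASELINE_COST = 1.00

CONDITIONS = [
    Condition("no context file", 0.0, 1.00),
    Condition("developer-written file", +4.0, 1.20),  # 1.20 is a lower bound on cost
    Condition("LLM-generated file", -3.0, 1.20),
]

for cond in CONDITIONS:
    value = cost_per_solved_task(BASELINE_SUCCESS, BASELINE_COST, cond)
    print(f"{cond.name}: {value:.2f} per solved task")
```

With these made-up inputs, even the developer-written file ends up more expensive per solved task than no file at all, because the cost increase outweighs the accuracy gain. Different baselines will shift the numbers, so treat this as a way to reason about the tradeoff rather than a result.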

That result aligns with a core mechanism behind why these files can hurt. In modern agent workflows, the model doesn’t see only the user’s question; it also receives a hierarchy of instructions (provider-level constraints, system prompts, developer messages) plus any extra “developer” context such as AGENT.md/CLAUDE.md. Because the model treats the entire prompt as an autocomplete problem, irrelevant or outdated details can bias it toward the wrong tools, frameworks, or file locations. A concrete example in the transcript: placing tRPC guidance in the wrong part of a repo’s instruction file can cause the model to reach for tRPC even when most functionality has moved to Convex.
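The layering described above can be pictured with a small sketch of how a harness might assemble a request. The function names, the role labels, and the message layout are all hypothetical; real agent tools differ in how and where they inject the context file. The point is only that the file's text travels with every request, whether or not it is relevant to the task.

```python
from typing import Dict, List, Optional

Message = Dict[str, str]


def build_prompt(system_prompt: str, context_file: Optional[str], user_task: str) -> List[Message]:
    """Assemble the messages a hypothetical agent harness sends for one task."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    if context_file:
        # The whole file rides along with the request, relevant or not.
        messages.append({"role": "developer", "content": context_file})
    messages.append({"role": "user", "content": user_task})
    return messages


def prompt_chars(messages: List[Message]) -> int:
    """Rough size proxy: total characters the model has to read."""
    return sum(len(m["content"]) for m in messages)


# Illustrative strings only; the guidance line stands in for a stale instruction.
stale_guidance = "Use tRPC for all API routes."
task = "Add a Convex mutation that archives a video."

with_file = build_prompt("You are a coding agent.", stale_guidance, task)
without_file = build_prompt("You are a coding agent.", None, task)

print(prompt_chars(with_file), "chars with the context file")
print(prompt_chars(without_file), "chars without it")
```

In this toy case the model asked about Convex still reads an instruction about tRPC first, which is the kind of bias the paragraph above describes.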

The transcript also argues that context files tend to go stale. Even when generated “just before” a task, they can still mislead the agent about where things live or which patterns matter. A personal test on a project called lawn (an alternative to Frame.io for video review) illustrates the tradeoff: running with an init-generated CLAUDE.md took longer (about 1 minute 29 seconds) than running without it (about 1 minute 11 seconds) for the same optimization question, despite the file seemingly helping the model name files earlier. The bigger risk is that if the repo changes and the instruction file doesn’t, the model can waste time and make incorrect assumptions.
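One way to catch staleness early is to check whether the paths a context file mentions still exist. The sketch below is a heuristic, not something the video or the study prescribes: it assumes paths appear in backticks, and the helper name `stale_paths` and the `CLAUDE.md` location are illustrative choices. Tokens such as version strings can produce false positives.

```python
import re
from pathlib import Path
from typing import List

# Backticked tokens without spaces, e.g. `src/server/router.ts` or `docs/`.
TOKEN = re.compile(r"`([\w./-]+)`")


def stale_paths(context_text: str, repo_root: Path) -> List[str]:
    """Return path-like tokens from the context file that are missing in the repo."""
    # Heuristic: treat a token as a path if it contains a slash or a dot.
    mentioned = {t for t in TOKEN.findall(context_text) if "/" in t or "." in t}
    return sorted(p for p in mentioned if not (repo_root / p.lstrip("/")).exists())


if __name__ == "__main__":
    context_file = Path("CLAUDE.md")  # hypothetical location
    if context_file.exists():
        text = context_file.read_text(encoding="utf-8")
        for path in stale_paths(text, Path(".")):
            print(f"stale reference: {path}")
```

Running a check like this in CI would flag a context file that still points at moved or deleted files, though it cannot tell whether the described patterns are still the right ones.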

The practical takeaway is not “never use AGENT.md,” but “use it surgically.” The recommended philosophy is to keep these files minimal and use them mainly to steer away from recurring failure modes—like enforcing type checks when they’re consistently skipped, or warning the agent about legacy technologies it keeps misusing. When the agent is failing, the better long-term fix is often in the codebase itself: stronger tests, clearer tool interfaces, and feedback mechanisms that help the agent detect when changes break other parts. In short, large context files can increase cost and confusion; better engineering and minimal, targeted instructions tend to produce more reliable outcomes.
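A feedback mechanism of the kind mentioned above can be as small as one script that runs the project's checks and reports which ones failed. This is a minimal sketch under stated assumptions: `run_checks` is a hypothetical helper, and the placeholder commands simply invoke the Python interpreter so the example runs anywhere. In a real repository they would be replaced with the project's own type checker and test runner.

```python
import subprocess
import sys
from typing import List, Sequence, Tuple


def run_checks(checks: Sequence[Tuple[str, List[str]]]) -> List[str]:
    """Run each check command and return the names of the ones that failed."""
    failed: List[str] = []
    for name, command in checks:
        # Raises FileNotFoundError if the executable itself is missing.
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failed.append(name)
            # Surface the tool's own output so the agent can see what broke.
            print(f"[{name}] failed:\n{result.stdout}{result.stderr}")
    return failed


# Placeholder commands (assumptions): swap in the repo's real type check and tests.
PLACEHOLDER_CHECKS = [
    ("types", [sys.executable, "-c", "pass"]),
    ("tests", [sys.executable, "-c", "raise SystemExit(1)"]),
]

failed = run_checks(PLACEHOLDER_CHECKS)
print("failed checks:", failed)
```

With a single entry point like this, the context file only needs one line telling the agent to run it after changes, which keeps the instruction minimal while the codebase supplies the actual feedback.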

Cornell Notes

AGENT.md/CLAUDE.md files are meant to tailor coding agents to a repository, but a benchmark study finds they rarely improve task success and consistently increase cost. Developer-provided context files yield only marginal gains (about 4% on average), while LLM-generated context files slightly worsen outcomes (about 3% lower on average). The study also reports a major efficiency hit: context files increase exploration, testing, and reasoning, and raise costs by over 20%. The recommended approach is to keep context minimal, including only essential tooling requirements, and to rely more on codebase structure, tests, and feedback loops to guide correct behavior.

Why can AGENT.md/CLAUDE.md files make coding agents worse even when they’re “helpful” on paper?

What does the benchmark study measure, and what are the headline results?

How do context files affect cost, not just accuracy?

What practical guidance emerges from the study’s recommendation?

How does the transcript’s “lawn” experiment illustrate the tradeoff?

If context files aren’t the default solution, what should they be used for?

Review Questions

- What specific benchmark comparisons (developer-provided vs removed vs agent-generated) lead to the study’s performance conclusions?

- How does the “autocomplete” view of prompting explain both increased costs and occasional mis-steering from context files?

- What kinds of repo changes or engineering improvements reduce the need for large AGENT.md/CLAUDE.md files?

Key Points

1. Benchmark results suggest AGENT.md/CLAUDE.md files can reduce coding-agent task success while increasing runtime cost.

2. Developer-provided context files show only marginal average gains (about 4%), while LLM-generated context files show a small average drop (about 3%).

3. Context files increase exploration, testing, and reasoning, driving costs up by more than 20%.

4. Prompt hierarchy means AGENT.md/CLAUDE.md content can bias tool choice and framework usage (e.g., tRPC guidance pulling the agent toward legacy paths).

5. Outdated context files are a major risk because they can mislead file discovery and implementation locations after repo changes.

6. A better default is minimal, targeted context plus stronger codebase scaffolding (tests, type checks, and feedback loops) so the agent can self-correct.