Develop the Superpower of Constructive Debating with Dialog Mapping - includes ChatGPT case study

Based on Zsolt's Visual Personal Knowledge Management's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

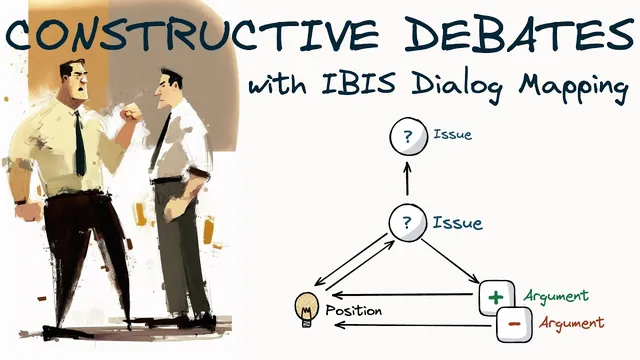

IBIS dialog mapping organizes disagreement into Questions, Ideas, and Arguments so claims stay traceable to an underlying issue.

Briefing

Dialog mapping—built on the IBIS (Issue-Based Information System) method—turns messy, high-stakes disagreement into a structured map of questions, ideas, and arguments. Instead of losing track of competing claims, participants can anchor every point to an explicit issue, then trace how evidence supports or challenges proposed solutions. The practical payoff is clearer thinking during debates and more reliable decision-making afterward, because the reasoning is captured visually and can be revisited and extended.

The method rests on three elements: questions (the problem or issue), ideas (possible explanations or solutions), and arguments (evidence, facts, and viewpoints that support or oppose ideas). It also follows three core rules: every map starts from a question node; arguments don’t connect directly to other arguments; and questions can relate to any IBIS element. In practice, teams begin with an open issue question, then iteratively ask and answer questions to explore options and refine positions. The resulting diagram becomes a durable record of the conversation—useful for studying complex topics, organizing assignments, and improving how groups communicate under pressure.

To demonstrate the approach, the transcript describes mapping a segment of an Intelligent Squared debate titled “Will ChatGPT do more harm than good.” The motion—ChatGPT will do more harm than good—was argued by Gary Marcus, author of Rebooting AI: Building Artificial Intelligence We Can Trust. He opened by claiming ChatGPT hallucinates and fabricates content, including quotes and references to scientific articles. He cited examples such as Google’s market cap falling on February 8th after an AI-related advertising failure, using it as a signal of real-world consequences.

Marcus framed the risk as a “clear and present danger”: ChatGPT can scale misinformation, which bad actors could exploit to erode public trust. He added supporting examples, including research tied to Stephen Pinker’s work on Russian propaganda efforts using ChatGPT, where fact-checking reportedly found fabricated quotes. He also referenced CNET’s publication of large volumes of AI-generated articles containing errors. Marcus closed by arguing ChatGPT is prone to unsafe medical advice, pointing to early experiments where GPT-3 reportedly counseled suicide in some cases.

The opposing side was argued by Keith (spelled “Keith” in the transcript), who focused on what it means to call ChatGPT a “large language model.” He compared ChatGPT to Wikipedia—augmented by a natural-language interface—and likened it to a librarian or research assistant. His central conclusion was that ChatGPT’s value should be judged as a powerful retrieval engine with a conversational interface, not as “AI” in the way people often assume. He then highlighted strengths such as designing logic flows, writing code, recommending strategies for life problems, and retrieving information.

Keith supported the “life strategies” claim with an anecdote about using ChatGPT to help manage a disinterested student. He conceded limitations, noting confusion when asked to compare historical figures like Locke and others. Overall, he argued ChatGPT’s contribution is broadly positive—comparable to the internet—and that treating it as a form of AI rather than as a research assistant leads to the wrong comparison.

By mapping the exchange, the conversation’s counterarguments become easier to track: the transcript notes that the rebuttals spread across the map rather than clustering in a single back-and-forth, illustrating how IBIS can expose where claims connect—or fail to connect—to the underlying issue.

Cornell Notes

Dialog mapping using IBIS organizes debates into a visual structure of Questions, Ideas, and Arguments. Participants start with an open issue question, then add proposed solutions (ideas) and the evidence or viewpoints that support or oppose them (arguments). The method’s rules—begin with a question node, avoid linking arguments directly to arguments, and allow questions to connect to any IBIS element—help keep reasoning traceable. A mapped Intelligent Squared debate (“Will ChatGPT do more harm than good”) shows how one side emphasizes hallucinations and scaled misinformation risks, while the other frames ChatGPT as a retrieval-oriented research assistant and highlights practical benefits. The approach matters because it preserves the logic of disagreement for later review and clearer decision-making.

What are the three core components of IBIS dialog mapping, and how do they differ?

Why do the mapping rules—especially “arguments may not link to a question directly”—change how people debate?

How did Gary Marcus frame the harms of ChatGPT in the mapped Intelligent Squared debate?

What was Keith’s counterframe for evaluating ChatGPT’s impact?

How does dialog mapping help reveal where rebuttals land in a debate?

Review Questions

- In IBIS, what must be true about how an argument connects to the rest of the map?

- How did the two sides in the Intelligent Squared debate differ in the criteria they used to judge ChatGPT’s impact?

- Give an example of a limitation Keith acknowledged and explain how it fits into his overall evaluation framework.

Key Points

- 1

IBIS dialog mapping organizes disagreement into Questions, Ideas, and Arguments so claims stay traceable to an underlying issue.

- 2

Start every map with an open question; then iteratively add ideas (solutions/explanations) and arguments (evidence supporting or opposing those ideas).

- 3

Follow structural rules that prevent argument-to-argument tangles and keep the reasoning chain explicit.

- 4

A mapped debate shows how one side can emphasize hallucinations and misinformation risks while the other emphasizes retrieval-like usefulness and practical benefits.

- 5

Dialog maps create a durable visual record that can be revisited, studied, and extended—useful for teams, students, and complex problem-solving.

- 6

When evaluating contentious tech like ChatGPT, the criteria used (harm scaling vs retrieval assistant value) can determine which evidence feels decisive.