Differential equations, a tourist's guide | DE1

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

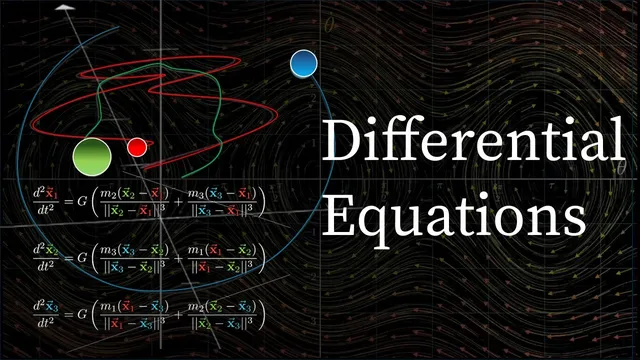

Differential equations model change by relating a function to its derivatives, often capturing feedback where acceleration depends on the current state.

Briefing

Differential equations are the language for describing change—when it’s easier to model how a system evolves than to pin down its exact state at every moment. From Newtonian mechanics to population growth and even the dynamics of affection, they formalize relationships where rates of change depend on the current values themselves. The core takeaway is that many real-world systems can be expressed as equations linking a function to its derivatives, and that this structure unlocks both qualitative insight and practical computation, even when exact formulas are out of reach.

The tour begins by distinguishing two main types: ordinary differential equations (ODEs), where the unknown function depends on a single input such as time, and partial differential equations (PDEs), where it depends on multiple inputs such as space and time. A thrown object under gravity provides a first ODE: if y(t) is vertical position, then ÿ = −g, where g is the constant gravitational acceleration. Solving it means “working backwards” from derivatives: integrate once to recover velocity and again to recover position, using the initial velocity and position to fix the two constants of integration. This case is easy because the acceleration is constant. The recurring theme in harder problems is feedback: acceleration depends on position (or other state variables), not just on time.
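As a worked version of that “working backwards” step, the two integrations can be written out as follows. The symbols v_0 and y_0 are assumed names for the initial velocity and initial height; they play the role of the constants fixed by the initial conditions.

```latex
\begin{aligned}
\ddot{y}(t) &= -g \\
\dot{y}(t) &= -g\,t + v_0 \\
y(t) &= -\tfrac{1}{2}\,g\,t^{2} + v_0\,t + y_0
\end{aligned}
```

Differentiating the last line twice recovers the first, which is a quick way to check the result.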

Gravity between bodies makes that feedback explicit. In the two-body gravitational setting, acceleration depends on distance, so the system becomes a coupled dance between position and velocity. More generally, physics often uses second-order differential equations, where the highest derivative appearing is the second derivative; higher-order equations involve third, fourth, or even higher derivatives. Conceptually, solving such equations is like assembling an “infinite jigsaw puzzle”: the values of the unknown at every time must fit together with their own rate-of-change constraints.

The pendulum example turns that abstraction into something concrete—and more realistic than the standard small-angle sine-wave story. The true restoring acceleration involves sin(θ), not θ, so the pendulum’s period lengthens for larger swings and the motion stops resembling a pure sine wave. With damping included (modeled as a term proportional to angular velocity), the resulting nonlinear differential equation becomes “juicy”: analytic solutions are either extremely complicated or unavailable in closed form. That limitation pushes the focus from exact solving to understanding the dynamics directly from the equations.
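Written out, the damped pendulum described here takes roughly the following form. The symbols are assumptions for illustration: L is the pendulum length, g the gravitational acceleration, and μ a damping coefficient. The second line shows the small-angle replacement sin θ ≈ θ that leads to the familiar sine-wave story.

```latex
\begin{aligned}
\ddot{\theta}(t) &= -\mu\,\dot{\theta}(t) - \frac{g}{L}\,\sin\theta(t)
  && \text{(full nonlinear model)} \\
\ddot{\theta}(t) &\approx -\mu\,\dot{\theta}(t) - \frac{g}{L}\,\theta(t)
  && \text{(small-angle approximation, } |\theta| \ll 1\text{)}
\end{aligned}
```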

To build that understanding, the discussion shifts from plotting θ versus time to using phase space: a two-dimensional state space with axes (θ, θ̇). Each point represents a complete snapshot of the system, and the differential equation induces a vector field that shows how the state moves. Trajectories spiral toward stable fixed points when damping is present, and changing parameters like the air-resistance coefficient μ changes how quickly the spiral tightens. This same phase-space approach generalizes beyond pendulums: the three-body problem expands the state space to 18 dimensions (three position and three momentum coordinates for each of the three bodies), and the stability question becomes a matter of whether nearby trajectories contract or expand.
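The vector field on phase space can be sketched in a few lines of Python. This is a minimal illustration, assuming the damped-pendulum form θ̈ = −μθ̇ − (g/L) sin θ; the function name `phase_velocity` and the parameter values are hypothetical choices, not taken from the source.

```python
import math

G = 9.8       # gravitational acceleration in m/s^2 (assumed value)
LENGTH = 2.0  # pendulum length in m (assumed value)
MU = 0.1      # damping coefficient (assumed value)


def phase_velocity(theta, theta_dot, mu=MU, g=G, length=LENGTH):
    """Return the vector (d theta/dt, d theta_dot/dt) attached to a phase-space point."""
    theta_ddot = -mu * theta_dot - (g / length) * math.sin(theta)
    # The first component is just the velocity coordinate itself;
    # the second comes from the second-order equation.
    return theta_dot, theta_ddot


# Fixed points are the states where both components (numerically) vanish.
print(phase_velocity(0.0, 0.0))          # hanging straight down, at rest
print(phase_velocity(math.pi, 0.0))      # balanced upright, at rest
print(phase_velocity(math.pi / 3, 0.0))  # released from 60 degrees: pulled back toward 0
```

Treating θ̇ as its own coordinate is what turns the single second-order equation into two first-order ones, so that each point in the plane gets exactly one arrow.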

Finally, when exact solutions are unavailable, numerical simulation offers a practical path: step forward in time using small increments Δt, repeatedly updating θ and θ̇ based on the differential equation. The tour closes by connecting these modeling limits to chaos theory—small measurement errors can cause trajectories to diverge rapidly, making long-term prediction unreliable even when the equations are known. The result is a sobering but motivating message: the complexity of nature isn’t hidden; it lives inside the math itself.
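The stepping procedure can be sketched as follows. This is a minimal example assuming the forward Euler method and the damped-pendulum form θ̈ = −μθ̇ − (g/L) sin θ; the function names, parameter values, and step size are illustrative assumptions.

```python
import math

G = 9.8       # gravitational acceleration in m/s^2 (assumed value)
LENGTH = 2.0  # pendulum length in m (assumed value)
MU = 0.1      # damping coefficient (assumed value)


def theta_double_dot(theta, theta_dot):
    """Angular acceleration of the damped pendulum at a given state."""
    return -MU * theta_dot - (G / LENGTH) * math.sin(theta)


def simulate(theta0, theta_dot0, t_end, delta_t=0.001):
    """Advance the state (theta, theta_dot) to time t_end with forward Euler steps."""
    theta, theta_dot = theta0, theta_dot0
    steps = int(round(t_end / delta_t))
    for _ in range(steps):
        accel = theta_double_dot(theta, theta_dot)  # evaluated at the current state
        theta += theta_dot * delta_t                # nudge the angle by the current velocity
        theta_dot += accel * delta_t                # nudge the velocity by the acceleration
    return theta, theta_dot


# Release from 60 degrees at rest and look at the state after 100 seconds.
theta, theta_dot = simulate(theta0=math.pi / 3, theta_dot0=0.0, t_end=100.0)
print(theta, theta_dot)
```

Shrinking `delta_t` generally improves accuracy at the cost of more steps. Forward Euler is only the simplest scheme; more accurate methods follow the same step-by-step idea.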

Cornell Notes

Differential equations describe systems by relating a quantity to its derivatives, capturing how change depends on the current state. ODEs involve one independent variable (often time), while PDEs involve multiple inputs like space and time. The pendulum example shows why real motion deviates from the small-angle sine approximation: the restoring force depends on sin(θ), and damping adds a velocity-dependent term. Because nonlinear equations are often unsolvable in closed form, phase space (θ, θ̇) and vector fields provide qualitative insight into stability and long-term behavior. When analytic solutions fail, numerical methods approximate trajectories by stepping forward with small time increments Δt, though chaos theory warns that prediction can still break down due to sensitivity to initial conditions.
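The effect of sin(θ) on the period can be checked numerically. The sketch below is an illustration under stated assumptions: damping is left out to isolate the amplitude effect, the parameter values are made up, the function name `period` is hypothetical, and the stepping scheme is semi-implicit Euler (velocity updated before angle) rather than anything prescribed by the source.

```python
import math

G = 9.8       # gravitational acceleration in m/s^2 (assumed value)
LENGTH = 2.0  # pendulum length in m (assumed value)


def period(theta0, use_sin=True, delta_t=1e-4):
    """Estimate the period of an undamped pendulum released at rest from 0 < theta0 < pi."""
    theta, theta_dot, t = theta0, 0.0, 0.0
    # Released at rest, the pendulum first reaches the bottom after a quarter period.
    while theta > 0.0:
        restoring = math.sin(theta) if use_sin else theta
        theta_dot += -(G / LENGTH) * restoring * delta_t  # update velocity first
        theta += theta_dot * delta_t                      # then angle, using the new velocity
        t += delta_t
    return 4.0 * t


# Columns: release angle, period with sin(theta), period with the small-angle model.
for theta0 in (0.1, 1.0, 2.5):
    print(theta0, period(theta0, use_sin=True), period(theta0, use_sin=False))
```

With the small-angle model the estimated period does not depend on the release angle, while with sin(θ) it grows as the swing gets larger.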

Why does gravity lead to a differential equation even in the simplest “thrown object” scenario?

What changes when gravity depends on where the bodies are, as in planetary motion?

Why does the pendulum’s motion stop being a sine wave for large angles?

How does phase space (θ, θ̇) turn a second-order equation into something visual and analyzable?

What does damping do to the pendulum’s trajectories in phase space, and how can μ be interpreted?

How do numerical methods approximate solutions when analytic forms are unavailable?

Review Questions

- How does rewriting a second-order differential equation as a system of two first-order equations enable phase-space visualization?

- In the pendulum model, what role does sin(θ) play in producing deviations from the small-angle sine-wave approximation?

- Why can numerical simulation still fail to provide reliable long-term prediction in chaotic systems?

Key Points

1. Differential equations model change by relating a function to its derivatives, often capturing feedback where acceleration depends on the current state.

2. Ordinary differential equations (ODEs) use a single independent variable (commonly time), while partial differential equations (PDEs) involve multiple inputs such as space and time.

3. The thrown-object example yields ÿ = −g, and solving it requires integrating twice and using initial conditions to fix constants.

4. The pendulum’s realistic dynamics depend on sin(θ) and, with damping, on a velocity term proportional to θ̇, which breaks the pure sine-wave approximation for large angles.

5. Phase space (θ, θ̇) converts a second-order problem into a vector-field picture, making stability and attraction patterns visible as trajectories.

6. When closed-form solutions are impractical or nonexistent, numerical methods approximate trajectories by stepping forward with small time increments Δt.

7. Chaos theory limits long-term predictability: tiny differences in initial conditions can produce wildly different trajectories even when the governing equations are known.