Everything You Believe Is Based on What You've Been Told

Based on Pursuit of Wonder's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

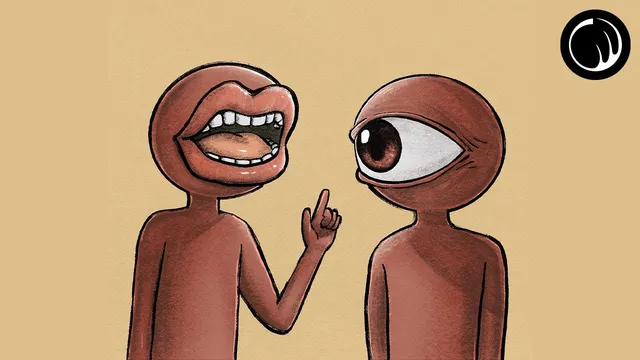

Many everyday beliefs are formed through authority and social explanation rather than direct observation or testing.

Briefing

Beliefs about how the world works—time, history, bodies, the universe, even morality—often rest less on direct evidence than on authority, tradition, and social explanation. The central warning is blunt: human beings are “consistently very wrong,” and the people who once sounded credible are frequently later treated as mistaken. That pattern matters because it challenges the default habit of trusting experts uncritically and treating reason as an unbiased path to truth.

Across centuries, widely held views have collapsed under new understanding. In Peru in the mid-1400s, a belief in appeasing gods helped drive what’s described as the largest known child sacrifice, alongside the killing of hundreds of animals. In 17th-century Europe, the Earth-centered cosmos was treated as settled fact until Galileo Galilei’s work argued that the Sun sat at the center and the Earth moved; the Roman Inquisition banned his ideas and pursued him for heresy. In the late 19th century, doctors used drugs now classified as Schedule I narcotics to treat children’s cold symptoms and discouraged handwashing during childbirth and medical procedures. Not long ago, cigarettes were widely believed to be harmless. For much of history, slavery and forced labor were often considered morally permissible. The through-line is not just that people can be wrong; it is that “smart for their time” can be a euphemism for ignorance that later generations will recognize.

The transcript then shifts from history to logic and psychology. A classic syllogism—“All flowers are beautiful; a lilac is a flower; therefore lilacs are beautiful”—can be valid in form while still failing in substance when the key premise is unsupported or circular. In domains where claims can’t be tested at scale—philosophy, politics, morality, spirituality, meaning—arguments frequently rely on subjective presumptions tied to personal experience and cultural context. Even when reasoning sounds airtight, it can be built on unproven assumptions that feel convincing.
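The gap between a valid form and a true conclusion can be made concrete with a small formal sketch. The Lean 4 snippet below is an illustration, not something from the transcript: the names `Thing`, `Flower`, `Beautiful`, and `lilac` are hypothetical placeholders. It shows that the conclusion follows mechanically once both premises are granted, while nothing in the proof establishes that the first premise is actually true.

```lean
-- A sketch of the lilac syllogism. All names here are illustrative assumptions.
theorem lilac_is_beautiful
    (Thing : Type) (Flower Beautiful : Thing → Prop) (lilac : Thing)
    (h1 : ∀ x, Flower x → Beautiful x)  -- premise 1: all flowers are beautiful (assumed, never proven)
    (h2 : Flower lilac)                 -- premise 2: a lilac is a flower
    : Beautiful lilac :=
  h1 lilac h2                           -- the conclusion is just premise 1 applied to premise 2
```

The proof checker accepts this regardless of what `Beautiful` means, because `h1` is handed in as an assumption. That is the sense in which the argument is valid: its form is correct, yet it is only as trustworthy as the unsupported premise it starts from.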

Cognitive science enters with a provocative framing from Hugo Mercier and Dan Sperber: reasoning may have evolved less to produce accurate beliefs and more to help people coordinate socially—explaining actions, defending identity, and improving status and cohesion. That means “good reasons” can function as social tools, not truth-finding instruments. From there, the transcript argues that people often use reason to justify what they already believe, rather than to test and revise those beliefs. Biases, perceptual tricks, and logical fallacies can steer conclusions while still producing confident narratives.

The proposed remedy is humility and skepticism—especially toward one’s own certainty. Since no one can foresee all consequences or access all relevant information, the best posture may be assuming one is “probably always some amount wrong,” using reason to recognize its limits, and practicing empathy, compassion, and humility. The closing sponsor segment reinforces the same theme in learning terms: echo chambers and confirmation bias feel like progress, but encountering opposing ideas—via tools like Blinkist summaries—can help break the cycle of self-reinforcing belief.

Cornell Notes

The transcript argues that many beliefs—about science, history, health, and morality—are built on authority and social explanation rather than direct observation. Historical examples show that views once treated as credible (from Earth-centered astronomy to medical practices and cigarette safety) later proved wrong, suggesting that “smart for their time” often means “ignorant by later standards.” Logical and psychological analysis adds that arguments can sound valid while relying on unproven or circular premises, especially in areas that can’t be tested at scale. Cognitive science work by Hugo Mercier and Dan Sperber suggests reasoning evolved largely for social coordination and defense, not purely for accuracy. The takeaway is to treat certainty cautiously, use skepticism and empathy, and aim to be more curious than right.

Why does the transcript claim that “authority” often leads to error?

How can reasoning be “valid” yet still lead to a wrong conclusion?

Why are philosophy, politics, and morality described as especially vulnerable to flawed argumentation?

What does Mercier and Sperber’s theory add to the story about human reasoning?

What does the transcript recommend as a practical stance toward belief and argument?

How does the Blinkist segment connect to the same theme?

Review Questions

- Give one historical example from the transcript where a widely accepted belief later reversed. What does it suggest about trusting authority?

- Explain how an argument can be logically valid yet still unsound, using the lilac/flowers example.

- According to Mercier and Sperber’s framework, what is the social function of reasoning, and how might that affect the way people defend beliefs?

Key Points

1. Many everyday beliefs are formed through authority and social explanation rather than direct observation or testing.

2. Historical “expert consensus” repeatedly flips, showing that credibility in one era can mask ignorance in hindsight.

3. Logical validity doesn’t guarantee truth when premises are unsupported, circular, or based on subjective claims.

4. In domains that can’t be tested at scale (morality, politics, meaning), arguments often rely on subjective presumptions shaped by culture and experience.

5. Reasoning can function as a social tool for defending identity and persuading others, not only as a mechanism for accuracy.

6. A safer mindset is to assume personal fallibility, use skepticism inwardly, and prioritize curiosity over the need to be right.

7. Breaking echo chambers by seeking opposing ideas can reduce confirmation bias and improve learning quality.