Facial Recognition on Video with Python

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Facial recognition on live video becomes practical once the workflow shifts from “recognize against a fixed folder of images” to “recognize against an evolving database of face encodings.” The core move is to treat the video stream as the source of “unknown faces,” extract face encodings frame by frame, and either match them to an existing identity or assign a new ID when no match clears the similarity threshold. That ID can then be persisted so the system grows over time—turning a simple demo into a security-style pipeline for detecting familiar vs. unfamiliar people.

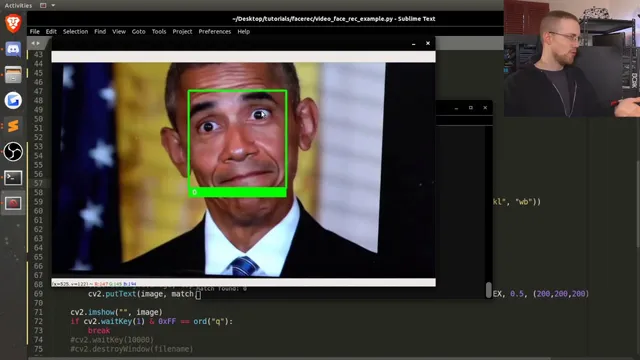

The transcript first walks through replacing image-file iteration with a continuous loop driven by OpenCV. A `cv2.VideoCapture` object supplies frames either from a webcam index (e.g., 0, 1, or 2) or from a local video file. Each pass of the loop reads a frame (`ret, image = video.read()`), runs face recognition on it, and displays the result until the user presses `q`. In the transcript's setup, the face-recognition step ran directly on OpenCV's BGR frames without any color conversion, which keeps the real-time path simple. Note, however, that the `face_recognition` library expects RGB images, and its own webcam examples convert each frame before detection.
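
A minimal sketch of that loop, assuming the `opencv-python` and `face_recognition` packages are installed. The names `frame_loop` and `run_video` are hypothetical. The read/stop logic is split out so it works with any object that has a `cv2.VideoCapture`-style `read()`. Unlike the transcript, this sketch converts each frame from BGR to RGB before detection, as the `face_recognition` examples do.

```python
def frame_loop(video, handle_frame, quit_requested):
    """Read frames until the stream ends or a quit is requested; return the frame count.

    `video` is anything with a cv2.VideoCapture-style read() -> (ret, frame).
    """
    count = 0
    while True:
        ret, image = video.read()
        if not ret:  # end of a video file, or the camera returned nothing
            break
        handle_frame(image)
        count += 1
        if quit_requested():
            break
    return count


def run_video(source=0, model="hog"):
    """Wire frame_loop to OpenCV and face_recognition (both imported lazily)."""
    import cv2
    import face_recognition

    # A webcam index (0, 1, 2, ...) or a path to a local video file
    video = cv2.VideoCapture(source)

    def handle_frame(image):
        # face_recognition works on RGB; OpenCV delivers BGR
        rgb = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        locations = face_recognition.face_locations(rgb, model=model)
        encodings = face_recognition.face_encodings(rgb, locations)
        for (top, right, bottom, left), _encoding in zip(locations, encodings):
            # Matching _encoding against known faces would happen here
            cv2.rectangle(image, (left, top), (right, bottom), (0, 255, 0), 2)
        cv2.imshow("video", image)

    def quit_requested():
        # waitKey also gives the window time to redraw; stop on "q"
        return cv2.waitKey(1) & 0xFF == ord("q")

    try:
        return frame_loop(video, handle_frame, quit_requested)
    finally:
        video.release()
        cv2.destroyAllWindows()
```

Calling `run_video(0)` opens the default webcam, and passing a file path reads a local video instead. Checking `ret` lets the loop exit cleanly when a file runs out of frames, which a bare `while True` would otherwise not handle.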

Once video recognition works, the logic pivots to an “ID labeling” mode aimed at scenarios like offices, schools, or airports: places where some people appear frequently and others should be treated as anomalies. Instead of storing names, the system assigns numeric IDs. It loads precomputed encodings from disk using `pickle`, then maintains two parallel lists: known encodings and known IDs. When a detected face fails to match any existing encoding under the chosen tolerance, the code creates a new ID (one greater than the current maximum) and appends both the new encoding and its ID to the in-memory lists, so the face can be recognized on subsequent frames.
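
The match-or-create rule can be sketched in plain Python. This is an illustration under assumptions, not the transcript's code. `best_match` and `assign_id` are hypothetical names. Encodings are any equal-length number sequences (real `face_recognition` encodings are 128-dimensional). The distance is Euclidean, which is what `face_recognition.face_distance` computes. The sketch picks the closest known encoding within tolerance rather than simply the first one that passes.

```python
import math


def best_match(encoding, known_encodings, known_ids, tolerance):
    """Return (id, distance) of the closest known encoding within tolerance, else (None, distance)."""
    best_id, best_dist = None, math.inf
    for known, face_id in zip(known_encodings, known_ids):
        dist = math.dist(encoding, known)  # Euclidean distance between encodings
        if dist < best_dist:
            best_id, best_dist = face_id, dist
    if best_dist <= tolerance:
        return best_id, best_dist
    return None, best_dist


def assign_id(encoding, known_encodings, known_ids, tolerance=0.6):
    """Match an encoding to an existing ID, or register it under a new one.

    Returns (face_id, is_new). Mutates both lists so they stay parallel.
    """
    face_id, _ = best_match(encoding, known_encodings, known_ids, tolerance)
    if face_id is not None:
        return face_id, False
    new_id = max(known_ids, default=-1) + 1  # next ID after the current maximum
    known_encodings.append(encoding)
    known_ids.append(new_id)
    return new_id, True
```

Because the new encoding and ID are appended immediately, the same face matches its fresh ID on the next frame instead of spawning another one.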

To persist what it learns, the transcript describes saving newly discovered identities to disk. For each new face encoding, the code creates a directory named after the assigned ID and pickles the encoding into a file inside it, using a timestamp-based filename. This is the mechanism that lets the system remember “uncommon” faces across runs.
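
A sketch of the persistence step and a matching loader, assuming the layout `<root>/<id>/<id>-<timestamp>-<suffix>.pkl` with numeric directory names. `save_new_identity` and `load_known_faces` are hypothetical helpers. The random suffix from `tempfile.mkstemp` is an addition here, so two saves in the same second cannot overwrite each other.

```python
import os
import pickle
import tempfile
import time


def save_new_identity(root, face_id, encoding):
    """Pickle one encoding into <root>/<face_id>/ under a timestamp-based filename."""
    folder = os.path.join(root, str(face_id))
    os.makedirs(folder, exist_ok=True)
    # mkstemp appends a random suffix, so two saves in the same second never collide
    fd, path = tempfile.mkstemp(
        prefix=f"{face_id}-{int(time.time())}-", suffix=".pkl", dir=folder
    )
    with os.fdopen(fd, "wb") as f:
        pickle.dump(encoding, f)
    return path


def load_known_faces(root):
    """Rebuild the parallel (encodings, ids) lists from every pickle under root."""
    encodings, ids = [], []
    if not os.path.isdir(root):
        return encodings, ids  # first run: empty database
    for name in sorted(os.listdir(root)):
        folder = os.path.join(root, name)
        if not os.path.isdir(folder):
            continue
        for filename in sorted(os.listdir(folder)):
            if filename.endswith(".pkl"):
                with open(os.path.join(folder, filename), "rb") as f:
                    encodings.append(pickle.load(f))
                ids.append(int(name))  # assumes directory names are numeric IDs
    return encodings, ids
```

Only load pickle files the system wrote itself, because unpickling untrusted data can execute arbitrary code.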

A major practical finding is that the number of IDs created depends heavily on the tolerance threshold. In `face_recognition`, tolerance is the maximum face distance still counted as a match, so a lower value is stricter. With a strict tolerance, the same person can fragment into many IDs when the face is partially visible or out of focus. The transcript reports that raising the tolerance (e.g., from 0.5 to 0.6) reduces this ID churn and brings the system closer to stable recognition, though the assigned ID can still bounce when face-detection quality changes.
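
A toy illustration of the trade-off, using made-up 2-D points in place of 128-D encodings. The values are hypothetical and chosen only to make the effect visible. `count_ids` is a hypothetical helper that applies the same match-or-create rule and counts how many IDs a sequence of faces produces.

```python
import math


def count_ids(encodings, tolerance):
    """Apply match-or-create to a sequence of encodings; return how many IDs result."""
    known = []  # one stored encoding per ID, as in the basic scheme
    for enc in encodings:
        if not any(math.dist(enc, k) <= tolerance for k in known):
            known.append(enc)  # nothing within tolerance: a new ID
    return len(known)


# Hypothetical stand-ins: one person whose encoding drifts across frames
# (blur, turning away), and two different people who happen to look alike.
one_person = [(0.0, 0.0), (0.55, 0.0), (1.1, 0.0)]
two_people = [(0.0, 0.0), (0.0, 0.58)]

for tol in (0.5, 0.6):
    print(tol, count_ids(one_person, tol), count_ids(two_people, tol))
# prints "0.5 3 2" and then "0.6 2 1"
```

The stricter 0.5 splits the single drifting person into three IDs, while 0.6 merges the two similar but distinct people into one. Loosening tolerance trades fragmentation for false matches.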

Finally, the transcript sketches next-step improvements: using multiple tolerances, merging IDs that likely belong to the same person, and adding more encodings to an identity when a face is confidently recognized. The overall takeaway is that video-based face recognition is not just about running inference. It is about managing identity stability over time, tuning tolerance, and building a feedback loop that updates the stored database responsibly.
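
One possible reading of those improvements, sketched under explicit assumptions. The database is a dict mapping each ID to a list of encodings. `match_tol`, `confident_tol`, and `merge_tol` are hypothetical thresholds, and the last two are stricter than the first. Merging uses single linkage, so any close pair of encodings merges two IDs. The transcript only proposes these ideas and does not specify this algorithm.

```python
import math


def update_with_confidence(encoding, db, match_tol=0.6, confident_tol=0.4):
    """Match against db (ID -> list of encodings); enrich the ID only on a confident match."""
    best_id, best_dist = None, math.inf
    for face_id, encodings in db.items():
        for known in encodings:
            d = math.dist(encoding, known)
            if d < best_dist:
                best_id, best_dist = face_id, d
    if best_dist > match_tol:
        new_id = max(db, default=-1) + 1  # no match: register a new ID
        db[new_id] = [encoding]
        return new_id
    if best_dist <= confident_tol:
        db[best_id].append(encoding)  # confident match: store another view of this face
    return best_id


def merge_close_ids(db, merge_tol=0.4):
    """Fold IDs together while any two of them have encodings within merge_tol."""
    merged = True
    while merged:
        merged = False
        ids = sorted(db)
        for i, a in enumerate(ids):
            for b in ids[i + 1:]:
                if any(math.dist(x, y) <= merge_tol for x in db[a] for y in db[b]):
                    db[a].extend(db.pop(b))  # keep the lower (older) ID
                    merged = True
                    break
            if merged:
                break  # ID set changed; rescan from the start
    return db
```

Single-linkage merging can chain two different people together through a series of borderline encodings, so a strict `merge_tol` matters. A real system would also need to move the merged pickle files on disk.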

Cornell Notes

The workflow shifts from recognizing faces in static image folders to recognizing faces in a video stream by extracting face encodings frame by frame. Known identities are stored as face encodings loaded from disk with `pickle`, while unknown faces trigger ID assignment when no match meets the similarity threshold (“tolerance”). New IDs are persisted by saving the new encoding under a directory named for that ID, allowing the system to grow across runs. A key operational insight is that tolerance strongly affects identity stability: too strict a threshold can split one person into many IDs when the face is blurry or partially visible, while a slightly higher tolerance reduces churn. Future robustness can come from merging IDs that likely represent the same person and adding more encodings when recognition is confident.

How does the system turn a video stream into “unknown faces” for recognition?

What data structure supports ID-based recognition instead of name-based recognition?

Why does tolerance matter so much in video face recognition?

How are newly discovered identities persisted so the system improves over time?

What security-style problem does the ID labeling approach target?

What improvements are proposed to prevent ID fragmentation and make recognition more robust?

Review Questions

- When no existing encoding matches a detected face under the tolerance threshold, what exact steps must occur for the new ID to be both recognized immediately and saved for future runs?

- How would you expect changing tolerance to affect the trade-off between false matches (wrongly merging identities) and false non-matches (splitting one person into many IDs)?

- What kinds of video conditions (pose, focus, occlusion) are most likely to cause ID bouncing, and how do the proposed merging/extra-encoding strategies address that?

Key Points

1. Switch from iterating over an “unknown faces” directory to reading frames from `cv2.VideoCapture` in a `while True` loop and treating each frame as the source of unknowns.
2. Store identities as numeric IDs paired with face encodings, loaded via `pickle`, rather than relying on name strings.
3. Use a tolerance threshold to decide whether a detected face matches an existing encoding; failure to match triggers new ID creation.
4. Persist newly assigned identities by saving the new face encoding to disk under a directory named for the assigned ID, so the database grows across runs.
5. Tune tolerance to reduce ID fragmentation caused by blur, partial visibility, or detection instability; increasing tolerance can stabilize recognition.
6. To improve long-term accuracy, merge IDs that likely represent the same person and add more encodings when recognition is confident.