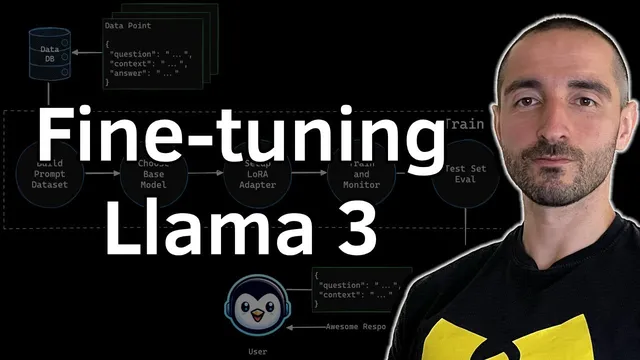

Fine-Tuning Llama 3 on a Custom Dataset: Training LLM for a RAG Q&A Use Case on a Single GPU

Based on Venelin Valkov's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Build a domain dataset by converting question–context–answer records into the model’s chat-template format, then tokenize to measure length.

Briefing

Fine-tuning Meta’s Llama 3 8B Instruct on a domain-specific Q&A dataset can be done on a single GPU by combining 4-bit quantization with a LoRA-style adapter (called “LoRA” in the transcript as “war adapter”). The practical payoff is a model that answers financial questions in a format closer to the target dataset and behaves more reliably than the untouched base model—without the cost of updating all 8B parameters.

The workflow starts by building a training dataset from question–context–answer pairs. The example dataset is “Financial Q&A 10K” from Hugging Face, with about 7,000 records and fields including question, context, answer, plus extra columns like filing and ticker that get ignored for this run. Each record is converted into a chat-style prompt using the tokenizer’s chat template, then tokenized to measure length. To keep training efficient and stable, examples exceeding 512 tokens are dropped; the remaining data is sampled down and split into roughly 4,000 training examples, 500 validation, and 400 test.

On the modeling side, the base system is the “Llama 3 8B Instruct” model loaded in 4-bit using the nf4 quantization scheme. Because the tokenizer for this model lacks a padding token in the transcript’s setup, a new “<|pad|>” token is added and the embedding matrix is resized accordingly. The transcript highlights a failure mode seen during earlier attempts: without a padding token, generation can become repetitive or run on indefinitely, and fine-tuning the base model without the padding token can still produce looping behavior.

Instead of full fine-tuning, training updates only a small fraction of parameters via LoRA. The adapter targets linear layers across the attention and MLP components (including query/key/value projections and other linear modules), with a rank of 32 and alpha of 16. With this approach, only about 1.34% (roughly 84 million) of the model’s ~8B parameters are trained, making the run feasible on consumer hardware. The transcript reports training on a single NVIDIA T4, with the adapter training taking about two hours per run.

Before and after training, the model is evaluated using a generation pipeline that removes the ground-truth answer from the prompt and asks the model to produce up to 128 new tokens. A key implementation detail improves both speed and correctness of the loss: the training collator masks labels for the prompt portion (setting them to -100) so the loss is computed only on the completion tokens, not the already-provided input text.

After training, the adapter is merged back into the base model and the resulting model is pushed to Hugging Face Hub as “Llama 3 8B Instruct Finance R” with sharded weights capped at 5 GB. Qualitative comparisons on sampled test prompts suggest the fine-tuned model produces more compact, dataset-aligned answers and avoids some of the verbose or off-format behavior seen in the untrained baseline. The overall message is that domain fine-tuning for RAG-style Q&A can be practical on one GPU when quantization, LoRA, careful token-length filtering, and completion-only loss masking are combined.

Cornell Notes

The transcript lays out a single-GPU recipe for adapting Llama 3 8B Instruct to a financial RAG-style Q&A task. It builds a chat-formatted dataset from Financial Q&A 10K, tokenizes each example, drops sequences longer than 512 tokens, and splits into train/validation/test. The base model is loaded in 4-bit (nf4) and a padding token is added to prevent repetitive generation. Instead of updating all weights, LoRA trains only ~1.34% of parameters by targeting linear layers in attention and MLP blocks. Training uses a completion-only loss mask (labels set to -100 for the prompt) and the adapter is merged into the base model before uploading to Hugging Face Hub.

Why add a padding token and resize embeddings when fine-tuning Llama 3 8B Instruct?

What makes LoRA/adapter fine-tuning workable on a single GPU here?

How does the training code ensure loss is computed only on the model’s answer, not the prompt?

Why filter out examples longer than 512 tokens?

What evaluation method is used to compare the base model vs the fine-tuned model?

What happens after training, and why merge the adapter?

Review Questions

- What specific generation failure mode was observed when fine-tuning without adding a padding token, and how does adding <|pad|> address it?

- How does masking labels to -100 for prompt tokens change the training objective compared with standard next-token loss over the entire sequence?

- Which LoRA target modules (attention and MLP linear layers) are selected in the transcript, and how do rank/alpha settings influence the number of trainable parameters?

Key Points

- 1

Build a domain dataset by converting question–context–answer records into the model’s chat-template format, then tokenize to measure length.

- 2

Load Llama 3 8B Instruct in 4-bit (nf4) to fit on a single GPU, and add a padding token if the tokenizer lacks one to prevent repetitive generation.

- 3

Use LoRA/adapter fine-tuning to train only a small fraction of parameters (about 1.34% in this setup) by targeting linear layers in attention and MLP blocks.

- 4

Filter training examples by token length (drop sequences >512 tokens) to keep training stable and efficient.

- 5

Compute loss only on completion tokens by masking prompt labels to -100, so the model isn’t penalized for reproducing the input.

- 6

Evaluate by removing the ground-truth answer from the prompt and generating up to a fixed number of new tokens (128 here), then compare outputs qualitatively.

- 7

Merge the trained adapter into the base model and upload the consolidated model to Hugging Face Hub for easier deployment in RAG pipelines.