Future Computers Will Be Radically Different (Analog Computing)

Based on Veritasium's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Analog computers represent numbers with continuous voltages and currents, letting circuits solve differential equations in real time by matching electrical behavior to mathematical structure.

Briefing

Analog computers once dominated practical computation—forecasting eclipses and tides and even helping guide anti-aircraft guns—until solid-state transistors made digital machines cheap, flexible, and fast enough to win. Today, a different set of pressures is pushing back toward analog: deep neural networks are exploding in use, but they rely heavily on massive matrix multiplications, and modern digital hardware spends much of its energy just moving weights in and out of memory. At the same time, transistor scaling is running into physical limits, threatening the pace of improvement promised by Moore’s Law.

Analog computing works by mapping a mathematical problem directly onto electrical behavior. Instead of representing numbers as zeros and ones, it uses continuous voltages and currents. By wiring components in specific configurations, an analog circuit can solve differential equations in real time. A damped mass on a spring, for instance, can be simulated so that an oscilloscope shows the mass’s position over time; changing parameters like damping or spring constant immediately changes the waveform. The same idea extends to chaotic systems such as the Lorenz equations, producing the characteristic “butterfly” Lorenz attractor and letting parameters be adjusted live.
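The damped-spring example can be imitated numerically. This is a minimal sketch, assuming the standard equation m·x'' + c·x' + k·x = 0; the function name, parameter names, and default values are illustrative and do not come from the video. The loop mirrors how an analog patch would be wired: a summing stage forms the acceleration, and two integrator stages turn it into velocity and then position.

```python
def simulate_damped_spring(mass=1.0, damping=0.5, stiffness=4.0,
                           x0=1.0, v0=0.0, dt=0.001, t_end=10.0):
    """Integrate m*x'' + c*x' + k*x = 0 and return (times, positions).

    All names and default values are assumptions chosen for illustration.
    """
    x, v = x0, v0
    times, positions = [0.0], [x0]
    steps = int(round(t_end / dt))
    for i in range(1, steps + 1):
        # Summing stage: acceleration from the spring and damper forces.
        a = -(damping * v + stiffness * x) / mass
        # Two integrator stages (semi-implicit Euler): a -> v, then v -> x.
        v += a * dt
        x += v * dt
        times.append(i * dt)
        positions.append(x)
    return times, positions
```

Calling `simulate_damped_spring(damping=0.2)` and then `simulate_damped_spring(damping=1.0)` gives the software counterpart of turning the damping knob: the second trace decays much faster. The difference is that the digital version steps through time, whereas the analog circuit evolves continuously.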

The trade-off is clear. Analog systems are typically single-purpose and not easily used for general software like word processing. Their continuous inputs and outputs make exact repeatability difficult, and manufacturing tolerances in resistors and capacitors introduce errors, often estimated at around 1%. Those limitations help explain why analog faded once digital computers became viable.

The resurgence begins with AI’s hardware appetite. Early neural network efforts, starting with Frank Rosenblatt’s perceptron at Cornell University, showed promise but hit hard limits. The perceptron used a simple threshold rule with weighted inputs and a bias, trained by adjusting weights until it could separate categories like circles versus rectangles. It struggled, however, with harder tasks like distinguishing cats from dogs, and critiques by Marvin Minsky and Seymour Papert in 1969 contributed to an “AI winter.”
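The threshold rule and weight-adjustment training described above can be sketched in a few lines. This is a minimal illustration, not Rosenblatt's original hardware or procedure: the function names, learning rate, and epoch count are assumptions. The toy datasets are also assumptions; logical AND and XOR are the usual textbook stand-ins for a task a single perceptron can and cannot learn, and they are not taken from the video.

```python
def predict(weights, bias, inputs):
    """Threshold rule: output 1 when the weighted sum plus bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Train on a list of (inputs, label) pairs with labels 0 or 1.

    epochs and lr are illustrative defaults, not values from the source.
    """
    n = len(samples[0][0])
    weights, bias = [0.0] * n, 0.0
    for _ in range(epochs):
        for inputs, label in samples:
            error = label - predict(weights, bias, inputs)  # -1, 0, or +1
            # Weights and bias change only when the prediction was wrong.
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Hypothetical toy data: AND is linearly separable, XOR is not.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
```

Training on `AND_DATA` settles on weights that classify all four cases correctly. Training on `XOR_DATA` never can, because no single weighted threshold separates those four points. This is one concrete form of the hard limits mentioned above.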

Later progress returned with deeper networks and better data. Carnegie Mellon’s ALVINN used a hidden layer and learned from human-driving examples via backpropagation, but speed constraints limited performance. Fei-Fei Li’s ImageNet project (1.2 million labeled images) and the ImageNet Large Scale Visual Recognition Challenge helped drive breakthroughs such as AlexNet, which used eight layers and massive parallel computation on GPUs to cut top-5 error dramatically. Yet the computational and energy cost of training and running ever-larger networks has become a bottleneck.

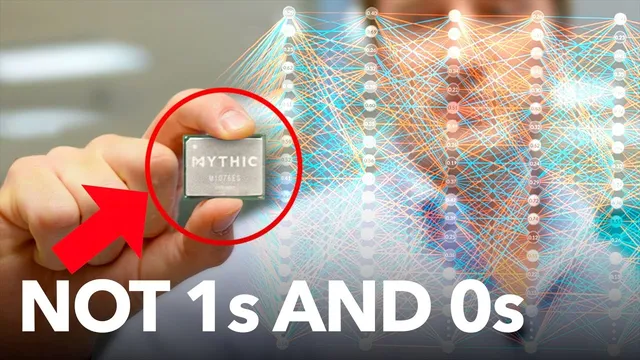

Analog chips aim to attack that bottleneck directly. Mythic AI repurposes digital flash memory cells as variable resistors by storing intermediate charge levels on floating gates rather than only on/off states. In this design, conductance encodes neural network weights, and input activations become voltages. Each cell then passes a current proportional to voltage times conductance, and the currents from cells sharing a wire add together, so the array performs the multiply-and-accumulate operations of a matrix multiplication where the weights are stored. Mythic reports throughput around 25 trillion math operations per second while consuming about three watts; digital systems can offer similar peak operation counts, but in larger setups that draw far more power.
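The arithmetic behind this scheme is small enough to write out. This is a sketch of the idea only, assuming ideal cells with no noise or tolerance error; the function name and the example conductance and voltage values are hypothetical and are not Mythic's design parameters. Each cell contributes a current I = V × G (Ohm's law), and currents on a shared output wire add (Kirchhoff's current law), so each output wire carries one dot product.

```python
def analog_matvec(conductances, voltages):
    """Return the output currents of an idealized conductance array.

    conductances[j][i] is the conductance (siemens) of the cell linking
    input i to output wire j; voltages[i] is the input activation (volts).
    Each output current is the sum of V * G over the cells on that wire.
    """
    return [sum(g * v for g, v in zip(row, voltages)) for row in conductances]


# Hypothetical example values, chosen only to make the sums easy to check.
WEIGHTS_AS_CONDUCTANCE = [
    [1e-6, 2e-6, 0.0],
    [3e-6, 0.0, 1e-6],
]
ACTIVATIONS_AS_VOLTAGE = [0.5, 1.0, 0.2]
```

With these values, `analog_matvec(WEIGHTS_AS_CONDUCTANCE, ACTIVATIONS_AS_VOLTAGE)` gives roughly 2.5 µA and 1.7 µA. In the physical array the same result appears as soon as the voltages are applied, without fetching any weight from separate memory.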

The approach still isn’t plug-and-play. Long sequences of analog operations can distort signals, so some systems convert between analog and digital between processing blocks to preserve accuracy. Even so, the core message is that analog computing is returning not as a replacement for digital everywhere, but as a targeted way to make the most expensive AI math—matrix multiplication—faster and more energy-efficient, especially for deployment scenarios like sensors, cameras, and embedded devices.
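A toy model shows why converting back to digital between blocks helps. It rests on strong assumptions that are not from the source: each analog stage simply passes its input through and adds a small bounded error, and the signal is meant to sit on integer levels. Real stages also scale and combine signals, and real noise is not neatly bounded. The function and parameter names are hypothetical.

```python
import random


def run_chain(value, stages, noise, requantize):
    """Pass a value through `stages` pass-through stages that each add error.

    Toy model only: noise is uniform in [-noise, +noise], and requantizing
    snaps the value to the nearest integer level, standing in for an
    analog-to-digital and digital-to-analog round trip.
    """
    for _ in range(stages):
        value += random.uniform(-noise, noise)  # distortion from one stage
        if requantize:
            value = float(round(value))  # snap back to the nearest level
    return value
```

With `noise` below half a level, the requantized chain returns exactly the starting level however many stages it runs, while the purely analog chain drifts as the errors accumulate. Once a single stage's error exceeds half a level, requantizing can lock in a wrong value, so the benefit depends on converting often enough.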

Cornell Notes

Analog computers compute by using continuous electrical signals (voltages and currents) to represent mathematical quantities directly, rather than encoding them as zeros and ones. That makes them well suited for fast, energy-efficient solutions to differential equations and, crucially for modern AI, matrix multiplication. The comeback is driven by deep neural networks’ heavy reliance on matrix math, while digital hardware faces energy costs from memory traffic (the von Neumann bottleneck) and slowing gains from Moore’s Law. The main drawbacks remain: analog circuits are often single-purpose, not perfectly repeatable, and subject to component variation that introduces roughly 1% error. Startups like Mythic AI show a practical path by using flash memory cells as analog weight storage (variable conductance) so neural network inference can run in the analog domain at very low power, sometimes with conversions back to digital between processing blocks to control distortion.

How does an analog computer “program” itself to solve a differential equation?

Why did analog computers fall out of favor once digital machines became practical?

What AI developments turned the spotlight back toward hardware efficiency?

How does Mythic AI’s flash-based analog approach represent neural network weights and inputs?

What limits fully analog inference, and how do hybrid designs respond?

Review Questions

- What specific electrical quantities in an analog computer correspond to variables in the mathematical problem (and why does that matter for speed)?

- List two reasons analog computing struggled historically, and explain how modern AI changes the cost-benefit balance.

- Describe, at a circuit level, how variable conductance in flash cells can implement multiply-and-accumulate operations for neural networks.

Key Points

1. Analog computers represent numbers with continuous voltages and currents, letting circuits solve differential equations in real time by matching electrical behavior to mathematical structure.

2. Digital computing displaced analog because it offered general-purpose programmability, better repeatability, and fewer issues from component tolerances and continuous input uncertainty.

3. Deep neural networks demand enormous matrix multiplications, and digital systems often waste energy moving weights through memory rather than performing arithmetic (von Neumann bottleneck).

4. Transistor scaling is approaching physical limits, weakening the long-term advantage of simply making digital chips smaller and faster (Moore’s Law).

5. Analog AI hardware targets inference efficiency by performing matrix multiplication directly in the analog domain, where small precision errors can be acceptable for classification tasks.

6. Mythic AI uses flash memory cells as variable resistors by storing intermediate charge levels on floating gates, encoding weights as conductance and activations as voltages.

7. Hybrid analog-digital designs may be necessary because long analog computation chains can distort signals beyond usable accuracy.