GPT-4 Prompt Engineering: Why This Is a BIG Deal!

Based on All About AI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

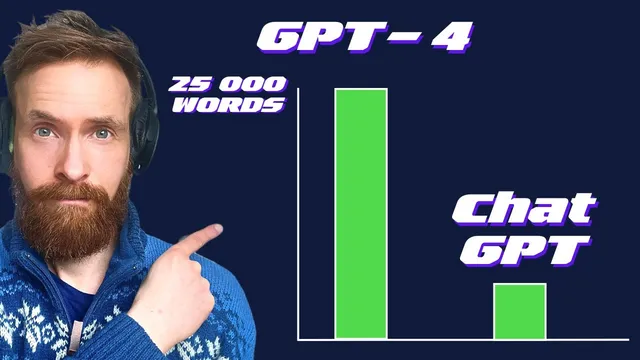


Briefing

The biggest practical shift highlighted is that GPT-4’s context window has expanded dramatically, to 8,000 tokens in the standard version and 32,000 tokens in a larger one, making it far easier to feed long, detailed source material into a single prompt. That matters because it changes what “prompt engineering” can accomplish: instead of compressing ideas to fit a small window, users can keep full transcripts, prior drafts, and reference text intact, then ask for rewriting, expansion, or transformation without losing earlier details.

A key clarification centers on how context length works. Context length is measured in tokens, the units of text the model reads and writes, and the window is the total number of tokens it can consider at once. As a rule of thumb, 1,000 tokens is about 750 words. With a 4,000-token window, anything beyond that limit falls outside the model’s working memory. The transcript gives a concrete example using a YouTube channel name: when the channel reference sits within 4,000 tokens, the model can retrieve it; when added filler pushes the reference past the 4,000-token boundary, the model can no longer “find” it. Switching to the 32k version keeps the same reference well within the window and restores correct answers. The window also covers more than what is typed in: generated output consumes the same budget, so long answers reduce how much input can be retained.
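The budget arithmetic above can be sketched in a few lines of Python. This is only an estimate: it assumes the ~750 words per 1,000 tokens rule of thumb in place of a real tokenizer. `visible_tail` is a hypothetical model of overflow that assumes the oldest tokens are the ones that drop out, which may not match how a given interface handles it.

```python
# Rough context-window arithmetic. Assumption: ~750 words per 1,000 tokens,
# not a real tokenizer, so counts are approximate.

WORDS_PER_TOKEN = 0.75  # rule of thumb: 1,000 tokens is about 750 words


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` from its word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def fits_in_window(prompt: str, max_output_tokens: int, window: int) -> bool:
    """Input and output share one budget, so both must fit together."""
    return estimate_tokens(prompt) + max_output_tokens <= window


def visible_tail(tokens: list[str], window: int) -> list[str]:
    """Hypothetical truncation model: only the most recent `window` tokens stay visible."""
    if window <= 0:
        return []
    return tokens[-window:]


# A channel-name marker followed by 4,000 filler tokens (illustrative data).
tokens = ["CHANNEL_NAME"] + ["filler"] * 4000
print("CHANNEL_NAME" in visible_tail(tokens, 4000))   # pushed out of a 4k window
print("CHANNEL_NAME" in visible_tail(tokens, 32000))  # still inside a 32k window
```

Reserving output tokens up front is the design choice that matters here: a prompt that "fits" on its own can still fail once a long answer is requested.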

With that foundation, the transcript demonstrates use cases built around long-context workflows. One example turns a recent YouTube video into a blog post. The process starts by converting the video to audio and running a Python transcription step with OpenAI’s Whisper API to produce a full text transcript. The transcript is then combined with the formatting of a prior article and additional context pulled from Midjourney’s site, including details about Midjourney V5. All of that material is fed into GPT-4 with a prompt to write an in-depth article titled along the lines of “GPT-4 plus Midjourney V5: the future of photo,” with section structure and style guidance. The author then iterates on weak spots, requesting deeper coverage of “GPT-4 priming” and expanding the conclusion into a longer first-person perspective.
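The blog-post workflow can be sketched as one transcription call plus plain prompt assembly. The Whisper call uses the `openai` Python package (v1.0 or later, with `OPENAI_API_KEY` set) and is imported lazily so the rest runs offline. The helper names, prompt wording, and section order are hypothetical, not the author’s exact prompt; the follow-up step uses the standard chat-message format of `role`/`content` dictionaries.

```python
# Sketch of the long-context blog-post workflow. Helper names and prompt
# wording are illustrative assumptions, not the video's exact prompt.


def transcribe(audio_path: str) -> str:
    """Transcribe an audio file with OpenAI's Whisper API (needs network and an API key)."""
    from openai import OpenAI  # lazy import: only needed for this step

    client = OpenAI()
    with open(audio_path, "rb") as audio_file:
        result = client.audio.transcriptions.create(model="whisper-1", file=audio_file)
    return result.text


def build_article_prompt(transcript: str, prior_article: str,
                         reference_notes: str, title: str) -> str:
    """Pack every user-provided source into a single long prompt."""
    sections = [
        f'Write an in-depth article titled "{title}".',
        "Match the structure and style of this earlier article:\n" + prior_article,
        "Use this video transcript as the main source:\n" + transcript,
        "Background reference material:\n" + reference_notes,
        "Use clear section headings and keep the voice of the transcript.",
    ]
    return "\n\n".join(sections)


def refine(history: list[dict[str, str]], draft: str, request: str) -> list[dict[str, str]]:
    """Record the model's draft, then ask for a targeted revision of a weak section."""
    return history + [
        {"role": "assistant", "content": draft},
        {"role": "user", "content": request},
    ]
```

Because the whole history is resent with each follow-up, every refinement request also spends context-window tokens, which is where the larger window pays off.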

A second practical claim is that long-context prompting can preserve the user’s own voice. After the article is generated, it is run through OpenAI’s own text classifier, which rates it “very unlikely” to be AI-generated. The reasoning offered is that the model is mostly working from user-provided context (transcripts, prior drafts, and specific reference material) rather than relying on generic model knowledge.

Finally, the transcript shows a learning-oriented workflow: feeding a compressed research paper into GPT-4 to generate a quiz. Because even a large window must leave room for the generated output, the text is split into two parts and processed sequentially. The resulting quizzes range from difficult, machine-learning-focused questions to simplified versions for fourth graders, illustrating how the same long source material can be repackaged for different audiences. Overall, the expanded context window is framed as an enabler for richer, more faithful transformations: turning long documents into structured writing, study materials, and iterative drafts without the usual “forgetting” that comes with smaller windows.
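The chunk-then-quiz step can be sketched as a word-boundary split plus one prompt per chunk. Splitting into equal word counts and the two audience descriptions are illustrative assumptions; the transcript does not say exactly where the paper was cut or how the quiz prompts were worded.

```python
# Sketch: split a long paper into parts, then build one quiz prompt per part.
# Chunk boundaries and audience wording are hypothetical choices.

AUDIENCES = {
    "expert": "difficult questions for machine-learning practitioners",
    "fourth grade": "simple questions a fourth grader could answer",
}


def split_into_parts(text: str, parts: int) -> list[str]:
    """Split text into about `parts` chunks of equal word count."""
    words = text.split()
    if not words or parts < 1:
        return []
    size = -(-len(words) // parts)  # ceiling division so no words are dropped
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def quiz_prompts(paper: str, audience: str, parts: int = 2, questions: int = 5) -> list[str]:
    """Build one quiz-generation prompt per chunk, tuned to the audience."""
    level = AUDIENCES[audience]
    chunks = split_into_parts(paper, parts)
    return [
        f"Part {i} of {len(chunks)} of a research paper:\n{chunk}\n\n"
        f"Write {questions} quiz questions: {level}."
        for i, chunk in enumerate(chunks, start=1)
    ]
```

Sending the parts one after another keeps each request well under the window, leaving headroom for the quiz the model writes back.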

Cornell Notes

GPT-4’s expanded context window (8,000 tokens, plus a 32k version) changes prompt engineering by allowing far more source material in a single request. The transcript clarifies that context length is the number of tokens the model can consider at once, and that input and output share that budget; pushing key details beyond the limit causes the model to miss them. Using this, a YouTube video’s audio is transcribed with Whisper, combined with a prior article’s formatting and Midjourney reference info, then fed into GPT-4 to generate and iteratively refine a blog post with deeper sections on “priming.” The same long-text approach is used to create quizzes from a research paper, splitting the content into chunks when window space requires it.

How does the transcript explain why a model “forgets” information in smaller context windows?

Why does the transcript convert a YouTube video into text before prompting GPT-4?

What role does “priming” play in the article-writing example?

How does the transcript claim the long-context approach preserves the user’s voice?

Why does the quiz-generation example split the research text into two parts?

How does the transcript demonstrate audience adaptation using the same source material?

Review Questions

- What happens to a key piece of information when it is pushed beyond the model’s token window, and why does switching to a 32k model change the outcome?

- In the blog-post workflow, what specific inputs are combined before prompting GPT-4, and how does iterative prompting improve weak sections?

- Why might splitting a long research paper into multiple prompt segments be necessary even with a 32k context window?

Key Points

1. GPT-4’s context window expansion (4,000 → 8,000 and up to 32,000 tokens) enables prompts that include much longer source material without losing earlier details.

2. Context length is token-based, and both input and generated output consume the same budget; long outputs reduce how much input can be retained.

3. Information placed beyond the token limit becomes effectively inaccessible, which can cause the model to miss facts that were previously within range.

4. A practical workflow for long-context writing: transcribe video audio with Whisper, combine it with prior formatting and external references (e.g., Midjourney info), then ask GPT-4 to draft and iteratively refine sections.

5. Long-context prompting can keep outputs anchored to user-provided material, which the transcript claims is reflected in OpenAI’s text classifier scoring the article “very unlikely” to be AI-generated.

6. Long documents can be transformed into study tools by chunking content when necessary and instructing GPT-4 to generate quizzes for different audiences and difficulty levels.