Hardware/Mobile (7) - Testing & Deployment - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Mobile and embedded deployment often fails when training-time model constructs don’t map cleanly to on-device runtime capabilities, so architecture choices must anticipate export constraints.

Briefing

Deploying deep learning models on mobile and embedded hardware is less about model design in the abstract and more about surviving the constraints of smaller runtimes, tighter memory budgets, and slower processors. Training frameworks like PyTorch and TensorFlow often support a wide range of layers and dynamic Python workflows, but mobile/embedded targets typically offer fewer supported operations and require models to be packaged into formats that can run efficiently on-device. That mismatch forces careful architecture choices early—especially if the model must be portable across platforms.

A central theme is the need for interchange formats and platform-specific runtimes. For mobile, TensorFlow Lite is positioned as a smoother successor to the older, more error-prone TensorFlow Mobile workflow. The process starts by converting a TensorFlow model into the TensorFlow Lite format using a TensorFlow Lite converter, producing a .tflite file that can be loaded by the TensorFlow Lite runtime on the phone. TensorFlow Lite can also quantize weights, shrinking them from 32-bit floats down to 16-bit or even 8-bit integers to reduce compute and memory demands.

On the PyTorch side, the workflow avoids running Python on-device. PyTorch can quantize weights and then uses TorchScript (via torch.jit scripting) to compile a flexible Python model into a static execution graph, saved in a TorchScript format suitable for deployment. PyTorch also provides platform libraries such as LibTorch iOS and PyTorch Android, which load the compiled model and run inference. A key limitation remains: not every PyTorch model can be converted to TorchScript, so portability depends on staying within what the target format can represent.

For cross-framework portability, ONNX (Open Neural Network Exchange) is presented as the closest thing to a “global interchange format.” ONNX is open source and backed by multiple companies, with converters that translate models from frameworks like PyTorch and TensorFlow into an ONNX representation. Deployment then happens through ONNX-compatible runtimes on different devices. The tradeoff is strict: ONNX can only store operations and constructs defined in its specification. If a model uses “super weird” PyTorch features that can’t be mapped into ONNX, it may not deploy elsewhere.

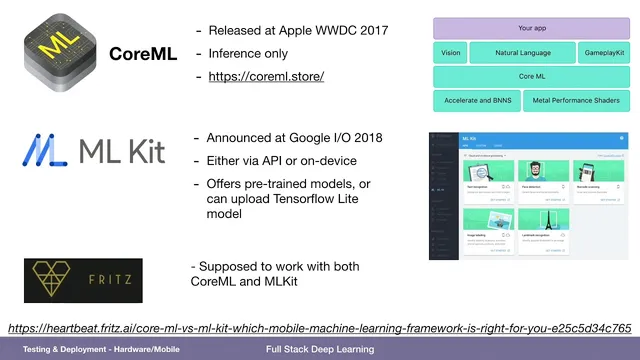

Beyond interchange formats, ecosystems matter. Apple’s Core ML and Google’s ML Kit are described as more ecosystem-oriented deployment paths, with Google generally better aligned with TensorFlow Lite. Startups such as Fritz AI are mentioned as aiming to convert once and deploy across Core ML and ML Kit. The reason to lean on these primitives is that platform vendors optimize their operators for speed on their own hardware.

Embedded systems add another layer of constraint: low-power GPUs and limited memory. The typical approach is aggressive weight quantization (often to float16 or even int8) to shrink model size, paired with NVIDIA’s TensorRT to optimize execution on embedded GPUs. Upstream engineering can also reduce the model’s footprint without sacrificing accuracy: MobileNet is cited as an example architecture that separates spatial and channel-wise computation using depthwise separable convolutions, cutting operations and memory. Finally, when a smaller model still needs to learn from a larger one, knowledge distillation is highlighted—training a “student” network on the teacher network’s predictions rather than directly on the raw labels, with Hugging Face’s work on distilling large models like BERT offered as a recent case study.

Cornell Notes

Mobile and embedded deployment demands more than exporting a trained network: on-device runtimes support fewer operations, memory is limited, and processors are slower. That drives choices like quantization (e.g., 32-bit floats down to 16-bit or 8-bit integers) and sometimes retraining via knowledge distillation so a smaller “student” model matches a larger “teacher.” For TensorFlow, TensorFlow Lite converts models into a .tflite file and can quantize weights for efficient inference. For PyTorch, TorchScript compiles Python models into a static execution graph, avoiding Python execution on-device, with LibTorch iOS and PyTorch Android libraries to run the result. For portability across frameworks, ONNX acts as an interchange format, but only operations representable in ONNX can be deployed elsewhere.

Why do mobile and embedded deployments require different engineering than training on servers?

How does TensorFlow Lite change the deployment workflow for TensorFlow models?

What role does TorchScript play in PyTorch mobile deployment?

What is ONNX, and what limitation comes with using it?

How do quantization and knowledge distillation work together on constrained devices?

Review Questions

- What specific constraints (memory, supported layers, runtime capabilities) most directly influence whether a trained model can be deployed to mobile or embedded hardware?

- Compare TensorFlow Lite and TorchScript in terms of how they package a model for on-device inference and what kinds of compatibility issues can arise.

- Why does ONNX portability break down for models that use operations outside the ONNX specification?

Key Points

- 1

Mobile and embedded deployment often fails when training-time model constructs don’t map cleanly to on-device runtime capabilities, so architecture choices must anticipate export constraints.

- 2

TensorFlow Lite deployment centers on converting a TensorFlow model into a .tflite file and optionally quantizing weights to 16-bit or 8-bit integers for faster, smaller inference.

- 3

PyTorch mobile deployment typically avoids running Python on-device by compiling models into TorchScript static execution graphs, then loading them via LibTorch iOS or PyTorch Android.

- 4

ONNX provides cross-framework portability, but only operations representable in the ONNX definition can be exported and deployed to other targets.

- 5

Embedded GPU deployment commonly relies on aggressive weight quantization and NVIDIA TensorRT to optimize execution on low-power hardware.

- 6

Model size and compute can be reduced upstream by using efficient architectures like MobileNet’s depthwise separable convolutions, which separate spatial and channel-wise computation.

- 7

Knowledge distillation helps smaller models recover accuracy by training a student network on teacher predictions rather than only on ground-truth labels.