Hilbert Spaces 8 | Proof of the Approximation Formula

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

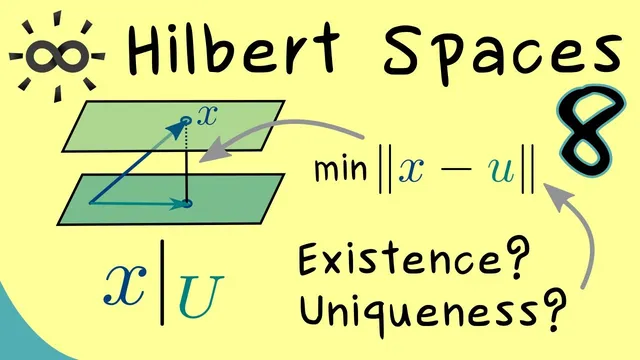

Hilbert spaces guarantee more than just an “almost closest” point: under the right geometric conditions, every vector has a unique best approximation from a subset, and the infimum distance is actually attained. The result matters because it turns an optimization problem defined by an infimum over a set into a concrete projection-like object inside the set—something essential for analysis, PDEs, and numerical methods.

The approximation formula requires two key assumptions. First, the ambient space must be a Hilbert space: an inner product space that is complete, so Cauchy sequences converge. Second, the approximating set U must be nonempty, closed, and convex, meaning every line segment between two points of U stays inside U; a closed subspace is a special case. With these conditions, for any vector X in the Hilbert space there exists a unique element of U, denoted informally as X restricted to U, that minimizes the distance from X to U. Distance is measured using the norm induced by the inner product, so the task is to minimize the norm of the difference vector X − u over all u in U.
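Written out in symbols, the statement might look as follows. This is a sketch of the claim only; the symbol u* for the minimizer and the notation ∃! ("there exists exactly one") are choices made here, not taken from the video.

```latex
\[
  d(X, U) := \inf_{u \in U} \|X - u\|, \qquad
  \exists!\, u^* \in U : \ \|X - u^*\| = d(X, U).
\]
```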

The proof starts with existence, working from the infimum definition of distance. Since d(X, U) is an infimum of a set of real numbers, one can choose a sequence (u_n) in U whose distances ||X − u_n|| approach it. Crucially, nothing is known yet about convergence of (u_n) itself; only the distances ||X − u_n|| are known to tend to d(X, U). The next step uses Hilbert space geometry: the parallelogram law, which holds because the norm comes from an inner product. Applying it to X − u_n and X − u_m expresses ||u_n − u_m||² through ||X − u_n||², ||X − u_m||², and the norm of 2X − u_n − u_m. This is where convexity enters: 2X − u_n − u_m equals twice X − (u_n + u_m)/2, and the midpoint (u_n + u_m)/2 is a convex combination of two points of U, so it lies in U and its distance to X is at least d(X, U). With that lower bound, ||u_n − u_m|| tends to zero as n and m grow, so (u_n) is a Cauchy sequence.
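The Cauchy estimate can be written out as a short computation. In this sketch, a = X − u_n and b = X − u_m are substituted into the parallelogram law, and d is used as shorthand for d(X, U); both abbreviations are introduced here for readability.

```latex
\begin{align*}
  \|a + b\|^2 + \|a - b\|^2 &= 2\|a\|^2 + 2\|b\|^2
    && \text{(parallelogram law)} \\
  \|u_n - u_m\|^2
    &= 2\|X - u_n\|^2 + 2\|X - u_m\|^2
       - 4\left\|X - \tfrac{u_n + u_m}{2}\right\|^2
    && (a = X - u_n,\ b = X - u_m) \\
    &\le 2\|X - u_n\|^2 + 2\|X - u_m\|^2 - 4d^2
    && \text{(midpoint lies in } U\text{)} \\
    &\longrightarrow 2d^2 + 2d^2 - 4d^2 = 0
    && (n, m \to \infty).
\end{align*}
```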

Once the sequence is shown to be Cauchy, completeness of the Hilbert space forces (u_n) to converge to some limit u* in the space. Closedness of U then ensures the limit cannot “escape” U, so u* lies in U. Because the norm is continuous, ||X − u*|| is the limit of ||X − u_n||, which is d(X, U); so u* attains the minimal distance, and the infimum is a minimum.
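The final step, that the limit really attains the distance, rests on continuity of the norm. One way to spell it out is with the reverse triangle inequality, as in this sketch:

```latex
\[
  \bigl|\, \|X - u_n\| - \|X - u^*\| \,\bigr| \le \|u_n - u^*\| \to 0
  \quad\Longrightarrow\quad
  \|X - u^*\| = \lim_{n \to \infty} \|X - u_n\| = d(X, U).
\]
```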

Uniqueness follows by reusing the same geometry. Suppose two best approximations u_tilde and u_hat both attain the minimal distance. Alternating between them produces a sequence that stays in U and attains the distance d(X, U) at every step. The earlier Cauchy argument applies again, forcing this alternating sequence to be Cauchy and hence convergent. But a sequence alternating between two points can converge only if the two points coincide. Therefore, the best approximation is unique.
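The alternating-sequence argument amounts to applying the parallelogram-law estimate directly to the two minimizers. A compact version, with d again standing for d(X, U) (shorthand introduced here), might read:

```latex
\[
  \|\tilde u - \hat u\|^2
  = 2\|X - \tilde u\|^2 + 2\|X - \hat u\|^2
    - 4\left\|X - \tfrac{\tilde u + \hat u}{2}\right\|^2
  \le 2d^2 + 2d^2 - 4d^2 = 0 .
\]
```

Since a squared norm cannot be negative, the left-hand side is zero and the two minimizers coincide.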

Together, these steps show that in a Hilbert space, closed convex sets act like well-defined targets for closest-point approximation: every X has exactly one closest point in U, and the distance is realized rather than merely approached.
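For a concrete feel, here is a minimal numeric sketch in the finite-dimensional Hilbert space R² with U taken to be the closed unit ball. This example set is an assumption chosen for illustration and does not come from the video; the helper names (`project_onto_unit_ball`, `random_point_in_ball`) are likewise hypothetical. For this particular U the closest point has a simple closed form, and the code checks that no randomly sampled point of U is closer to X.

```python
import math
import random


def norm(v):
    """Euclidean norm, i.e. the norm induced by the standard inner product."""
    return math.sqrt(sum(c * c for c in v))


def distance(a, b):
    """Distance between two vectors: the norm of their difference."""
    return norm([ai - bi for ai, bi in zip(a, b)])


def project_onto_unit_ball(x):
    """Closest point to x in the closed unit ball (a closed convex set)."""
    n = norm(x)
    if n <= 1.0:
        return list(x)           # x already lies in U, so it is its own best approximation
    return [c / n for c in x]    # otherwise scale x back onto the boundary of the ball


def random_point_in_ball(dim, rng):
    """Sample a point of the unit ball by rejection from the cube [-1, 1]^dim."""
    while True:
        p = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
        if norm(p) <= 1.0:
            return p


x = [3.0, 4.0]
u_star = project_onto_unit_ball(x)   # approximately [0.6, 0.8]
best = distance(x, u_star)           # approximately 4.0, since ||x|| = 5

# Empirical check: none of the sampled points of U beats the projection.
rng = random.Random(0)
samples = [random_point_in_ball(2, rng) for _ in range(1000)]
no_sample_is_closer = all(distance(x, u) >= best - 1e-12 for u in samples)
print(u_star, best, no_sample_is_closer)
```

A random search like this proves nothing, of course; it only illustrates what the theorem guarantees in general, namely that the infimum over U is attained at exactly one point.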

Cornell Notes

In a Hilbert space, every vector X has a unique closest point in a nonempty closed convex set U. The proof begins by choosing a sequence (u_n) in U whose distances ||X − u_n|| approach the infimum distance d(X, U). Using the parallelogram law (valid because the norm is induced by an inner product) and convexity (to keep midpoints of points in U inside U), the sequence is shown to be Cauchy. Completeness makes it converge, and closedness forces the limit to lie in U, so the infimum becomes a minimum. Uniqueness comes from assuming two minimizers and showing an alternating sequence between them must converge, which can happen only if the two minimizers are the same.

Why does the proof start with an infimum and a sequence (u_n) rather than directly producing a minimizer?

How does the parallelogram law help show that (u_n) is Cauchy?

Where exactly does convexity of U enter, and why is it necessary?

Why do completeness and closedness finish the existence part?

How does the uniqueness argument work using an alternating sequence?

Review Questions

- What roles do convexity and closedness of U play at different stages of the proof?

- How does the parallelogram law connect distances ||X − u_n|| to the Cauchy property of ||u_n − u_m||?

- Why does the existence of two distinct best approximations contradict the convergence of an alternating sequence?

Key Points

1. A Hilbert space (complete inner product space) is required so Cauchy sequences of approximating points converge.

2. The approximating set U must be nonempty and closed so the limit of an approximating sequence remains inside U.

3. Convexity of U is needed to ensure midpoints of points in U stay in U, enabling distance bounds via the parallelogram-law algebra.

4. Choosing a sequence (u_n) in U that approaches the infimum distance is the standard route to upgrading “infimum” to “minimum.”

5. The parallelogram law (inner-product geometry) is the engine that turns distance convergence into a Cauchy property for (u_n).

6. Completeness plus closedness yields existence of an actual minimizer u* in U.

7. Uniqueness follows because two minimizers would create an alternating sequence that can converge only if the minimizers coincide.