How the "Online Safety Act" might destroy the web

Based on Theo - t3.gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

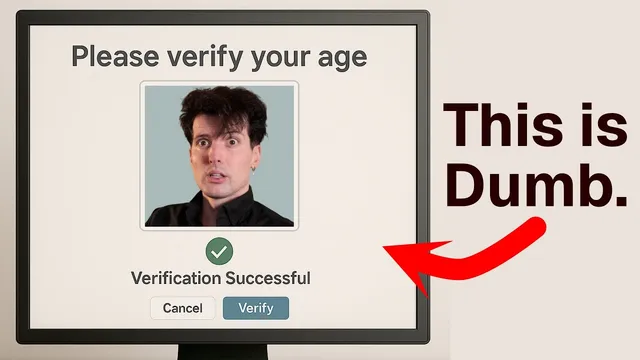


Briefing

The UK’s Online Safety Act is pushing websites to verify users’ ages, often through government-issued ID, face scans, or third-party “age assurance” services, while also demanding tighter controls on “harmful” content. The central worry is that identity checks at this scale will not reliably protect children online but will substantially increase privacy risks, exposure to data leaks, and censorship by proxy, and will impose an operational burden that could reshape how the open web works.

Supporters of the law point to real harm experienced by young people online. Internet safety advocates cite survey findings that a significant share of children aged 9 to 17 have encountered harm online, and the act’s stated goal is to prevent minors from accessing content deemed harmful. But the critique is that the chosen mechanism—mandatory age checks across a wide range of services, including social media, search engines, music platforms, and adult content—turns a child-protection problem into a system-wide identity and moderation regime.

Under the act’s framework, platforms face steep consequences for failing to comply: fines up to 10% of global revenue and, in some cases, court-ordered blocking. The law defines harmful content in multiple categories, including pornography, content encouraging self-harm, abusive content tied to protected characteristics, incitement of hatred, instructions for serious violence, bullying, depictions of serious injury, “stunts and challenges” likely to cause harm, and content encouraging self-administration of harmful substances. It also includes broader “material risk” standards, requiring providers to judge whether content could cause significant harm to an appreciable number of children.

The practical implementation is where the backlash concentrates. The act requires platforms to run “robust age checks” rather than relying on a government-run verification system. That means companies must collect and process sensitive identity data—passport details, selfies or videos, or even bank-access permissions for age confirmation—often via third-party vendors. Critics argue this expands the number of organizations handling highly sensitive data, increasing the odds of breaches and identity theft. They also warn that face-based checks and identity pipelines can enable algorithmic discrimination and expose users to “opaque supply chains,” where people cannot easily see how their data is stored, shared, or secured.

The concern extends beyond privacy. Mandatory age verification can restrict free expression by forcing platforms to arbitrate what speech minors can access, and it can exclude users who lack a personal device or ID. The rollout has already coincided with a surge in VPN downloads in the UK and a petition surpassing 400,000 signatures, which critics interpret as evidence that users are seeking workarounds rather than embracing the new regime.

The argument also draws parallels to past internet-restricting efforts such as the Stop Online Piracy Act (SOPA), which would have empowered ISPs and advertisers to cut off access based on copyright claims, an approach widely seen as damaging to the free web. In the same spirit, the Online Safety Act is framed as a policy that shifts responsibility down the chain, from parents to platforms to identity providers, so that when systems fail, the harm multiplies.

The proposed alternative is not abandoning child safety, but changing the method: fund education for parents and children, improve device-level controls, and avoid a universal identity-verification mandate that critics say will inevitably increase privacy risk, data-leak surface area, and circumvention—while failing to solve the underlying problem of children encountering harmful content.

Cornell Notes

The UK’s Online Safety Act aims to protect children online by requiring platforms to verify users’ ages before they can access large portions of the internet. The law threatens major penalties (including fines up to 10% of global revenue and potential blocking) for services that don’t implement “robust age checks” and content safeguards. Critics say the approach will not reliably make children safer because it forces companies to collect sensitive identity data—often via ID documents, face scans, or third-party age assurance—creating new privacy and security risks. They also argue it restricts free expression and shifts responsibility away from parents, increasing harm when systems fail. The backlash includes increased VPN use and calls for repeal, with similar age-check proposals discussed in other regions.

What mechanism does the Online Safety Act use to protect children, and why is it controversial?

How does the act define “harmful” content, and what kinds of services are affected?

What are the compliance consequences, and how do they shape platform behavior?

Why do critics say age verification increases privacy and security risks?

How does the backlash show up in user behavior and public response?

What alternative approach does the critique favor for protecting children online?

Review Questions

- What specific types of “harmful” content does the Online Safety Act target, and how does that breadth affect which services must comply?

- Why do critics argue that third-party age assurance increases the risk of data leaks and identity theft compared with content moderation alone?

- How does the critique use past internet-restricting efforts (like SOPA) to frame the potential long-term effects of age verification mandates?

Key Points

1. The Online Safety Act requires many UK-accessible services to implement “robust age checks,” often using sensitive identity data rather than a government-run verification system.

2. Platforms face severe penalties for noncompliance, including fines up to 10% of global revenue and potential court blocking, which critics say encourages rushed and risky implementations.

3. The law’s definition of harmful content spans pornography, self-harm, hate and abuse, serious violence, bullying, injury depictions, dangerous stunts, and self-administration of harmful substances.

4. Mandatory age verification can restrict free expression and exclude users who lack a personal device or ID, while also increasing privacy and security exposure through identity pipelines.

5. Critics argue that shifting responsibility from parents to platforms to third-party identity providers increases harm when systems fail, including the risk of large-scale identity theft.

6. Workarounds have already gained traction, with VPN downloads rising in the UK and repeal petitions gathering hundreds of thousands of signatures.

7. The favored alternative is education and better parental/device controls rather than universal identity verification mandates that critics say will be circumvented and will not solve the underlying safety problem.