How to Chat With Your Data in Private Without Internet (Using MPT-30B Open-Source LLM)

Based on Chat with data's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

A private document chatbot can be built by running embeddings, vector retrieval, and LLM generation entirely on local hardware rather than sending content to third-party APIs.

Briefing

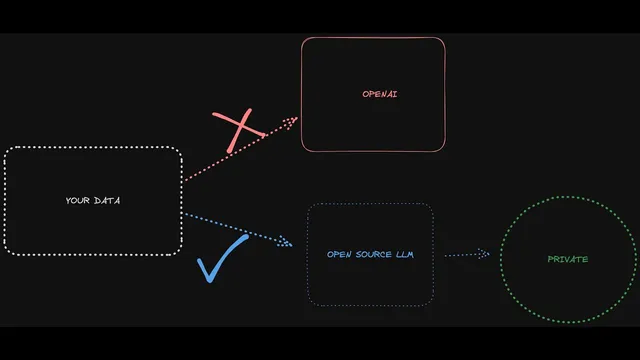

Documents can be chatted with locally, without sending sensitive text to third parties, by swapping closed APIs for an all-open pipeline built around the MPT-30B open-source LLM. The core payoff is privacy: embeddings, retrieval, and generation can all run on a user’s own machine, which reduces data-leak risk and removes reliance on external services.

The workflow starts the same way as typical “chat with your PDFs” systems. Documents are split into chunks, converted into embeddings (numerical vectors), and stored in a local vector database. When a user asks a question, the question is embedded, a similarity search pulls the most relevant chunks from the local store, and those retrieved passages are fed to the language model together with the prompt to produce an answer. In the demo, the system identifies an earnings-call transcript (Q1 2024) and answers questions while citing the source chunks it used, which shows that retrieval grounding works even when the model runs locally.
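The chunk, embed, store, retrieve, and prompt steps can be sketched in a few lines of standard-library Python. This is a toy under stated assumptions, not the project’s code: a bag-of-words term count stands in for Sentence Transformers embeddings, a plain Python list stands in for Chroma DB, and the function names, chunk size, overlap, and prompt wording are hypothetical choices made for illustration.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reaches the end
            break
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding (stand-in for Sentence Transformers): lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, store: list[tuple[str, Counter]], k: int) -> list[str]:
    """Embed the question and return the k most similar stored chunks."""
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, sources: list[str]) -> str:
    """Inject the retrieved chunks into the prompt handed to the local LLM."""
    context = "\n---\n".join(sources)
    return f"Answer using only this context.\n\n{context}\n\nQuestion: {question}\nAnswer:"


# Ingestion: chunk each document, embed each chunk, keep (chunk, vector) pairs.
docs = [
    "Revenue for the first quarter grew on strong cloud demand.",
    "The company opened two new offices in Europe this year.",
    "Operating margin improved due to cost controls.",
]
store = [(chunk, embed(chunk)) for doc in docs for chunk in chunk_text(doc)]

# Query: retrieve the best-matching chunk and build the grounded prompt.
question = "How did revenue grow in the first quarter?"
sources = retrieve(question, store, k=1)
print(build_prompt(question, sources))
```

The overlap between neighbouring chunks is a common design choice so that a sentence cut at a window boundary still appears whole in at least one chunk; the returned `sources` list is what a UI would show as the cited passages.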

A major theme is why closed-model setups are a poor fit for private or regulated use. The transcript lists five recurring problems: data-leakage risk when sensitive IP or customer information is sent to an API; limited customization, because vendors control both the models and their pricing; dependence on a constant internet connection, which is costly or unreliable in regions with limited access; server overload and outages that can block usage; and quality or usage limits that shift after policy changes. Vendor lock-in is raised as well.

To address those issues, the pipeline replaces each closed component with an open alternative. Sentence Transformers turn text into embeddings, and Chroma DB serves as the local vector store. Most importantly, the language model moves from a hosted API to an on-device model: MosaicML’s MPT-30B, whose base model is released under a license that permits commercial use, run through ggml tooling (a downloadable “ggml version” is hosted on Hugging Face). Once the model is downloaded, the system can answer questions without internet access and without exporting any document content.
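Once the model file is on disk, the “no internet” property can be checked at startup instead of assumed. Below is a minimal sketch that assumes the embedding model was already cached during an earlier online run. It sets `HF_HUB_OFFLINE` and `TRANSFORMERS_OFFLINE`, which are real Hugging Face environment variables that make those libraries use only locally cached files, and it fails fast if the local model file is missing. `prepare_offline` is a hypothetical helper and is not part of the project.

```python
import os
from pathlib import Path


def prepare_offline(model_path: str) -> Path:
    """Pre-flight check before starting the chat loop with no network access.

    Sets Hugging Face's offline switches so huggingface_hub / transformers only
    use files already in the local cache. Call this *before* importing
    sentence_transformers, because the hub library reads these variables when
    it is first imported.
    """
    os.environ["HF_HUB_OFFLINE"] = "1"
    os.environ["TRANSFORMERS_OFFLINE"] = "1"

    path = Path(model_path).expanduser()
    if not path.is_file():
        # Downloading the model is the one step that still needs internet.
        raise FileNotFoundError(f"No local model at {path}; download it once while online.")
    return path
```

For example, the chat script could call `prepare_offline("models/mpt-30b.bin")` as its first line. That filename is hypothetical, so use whatever path the download script actually wrote.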

The transcript also gets practical, walking through a repo-based setup with scripts for ingestion and chatting. Users clone the project, ingest files from a “source documents” folder (PDF, TXT, CSV, DOC, etc.), and generate a local DB folder containing embeddings. A separate script downloads the model into a local models directory (noted as about 19GB). Environment variables configure the persist directory, model path, embedding model name, and retrieval parameters such as how many chunks to pull back (“target source chunks”).
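The settings and ingestion inputs described above map naturally onto a small config loader and a file scan. This is a minimal sketch: the environment-variable names, the defaults, and the `.docx` extension are assumptions for illustration (`all-MiniLM-L6-v2` is a real Sentence Transformers model, but nothing here confirms the repo uses it), so check the repo’s own environment template for the exact keys.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass
from pathlib import Path

# Extensions mentioned for the source documents folder (".docx" is an added assumption).
SUPPORTED_EXTENSIONS = {".pdf", ".txt", ".csv", ".doc", ".docx"}


@dataclass(frozen=True)
class Settings:
    persist_directory: str      # where the local vector DB folder is written
    model_path: str             # downloaded model file in the models directory
    embeddings_model_name: str  # Sentence Transformers model used for embeddings
    target_source_chunks: int   # how many chunks retrieval pulls back per question


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read settings from environment variables (names and defaults are hypothetical)."""
    return Settings(
        persist_directory=env.get("PERSIST_DIRECTORY", "db"),
        model_path=env.get("MODEL_PATH", "models/model.bin"),
        embeddings_model_name=env.get("EMBEDDINGS_MODEL_NAME", "all-MiniLM-L6-v2"),
        target_source_chunks=int(env.get("TARGET_SOURCE_CHUNKS", "4")),
    )


def find_documents(folder: str) -> list[Path]:
    """List files under `folder` (recursively) whose extension is supported."""
    return sorted(
        p for p in Path(folder).rglob("*")
        if p.is_file() and p.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

Passing the environment in as a mapping keeps the loader easy to test. In the walkthrough, the target-source-chunks value is the knob most directly tied to answer latency.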

Performance is the trade-off. Answers can take minutes when retrieval and context injection are involved, while simpler chat-only queries may return in tens of seconds. The transcript stresses that retrieval settings strongly affect latency: each extra chunk lengthens the prompt the model must process, so asking for too many chunks slows answers down. Hardware guidance is explicit: at least 32GB of RAM is recommended for MPT-30B, and Docker is suggested as an easier way to set things up.
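Why more chunks cost more time: in local inference the model must process every prompt token before it produces the first answer token, and the prompt grows roughly linearly with the number of injected chunks. The back-of-the-envelope sketch below rests on several assumptions. Word counts serve as a proxy for tokens, although real tokenizers split text differently. The template overhead of 50 tokens is a hypothetical fixed figure. The budget is whatever limit one chooses and does not come from the transcript.

```python
def estimate_prompt_tokens(question: str, chunks: list[str], template_overhead: int = 50) -> int:
    """Rough prompt size: whitespace-separated words approximate model tokens."""
    return template_overhead + len(question.split()) + sum(len(c.split()) for c in chunks)


def max_chunks_within_budget(question: str, ranked_chunks: list[str],
                             budget_tokens: int, template_overhead: int = 50) -> int:
    """Largest k such that the top-k ranked chunks still fit within budget_tokens."""
    k = 0
    for i in range(1, len(ranked_chunks) + 1):
        if estimate_prompt_tokens(question, ranked_chunks[:i], template_overhead) > budget_tokens:
            break
        k = i
    return k


chunk = " ".join(["word"] * 100)  # pretend every retrieved chunk is about 100 words
for k in range(1, 5):
    print(k, estimate_prompt_tokens("What was revenue?", [chunk] * k))
```

With 100-word chunks, each extra chunk adds about 100 tokens of prompt (153, 253, 353, 453 for k = 1 to 4 here), which is why trimming the chunk count is the most direct latency lever.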

Finally, licensing is flagged: the “chat” version of the MPT model is non-commercial, while the base and instruct variants can be used commercially. The overall message is a move toward democratized, private document Q&A, paid for with local compute and some speed, while the architecture stays modular so components can be swapped as needs evolve.
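The modularity point can be made concrete by making the pipeline depend only on small interfaces. The sketch below uses `typing.Protocol`, and all interface and class names in it are hypothetical. The toy embedder, in-memory store, and stub generator stand in for Sentence Transformers, Chroma DB, and MPT-30B respectively. Swapping a component, for example using a base or instruct model instead of the non-commercial chat variant, means passing in a different object, with no change to `LocalDocQA`.

```python
from dataclasses import dataclass
from typing import Protocol


class Embedder(Protocol):
    def embed(self, text: str) -> list[float]: ...


class VectorStore(Protocol):
    def add(self, text: str, vector: list[float]) -> None: ...
    def search(self, vector: list[float], k: int) -> list[str]: ...


class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...


@dataclass
class LocalDocQA:
    """Pipeline that depends only on the three interfaces, so each part can be swapped."""
    embedder: Embedder
    store: VectorStore
    generator: Generator
    k: int = 4

    def ingest(self, chunks: list[str]) -> None:
        for chunk in chunks:
            self.store.add(chunk, self.embedder.embed(chunk))

    def ask(self, question: str) -> tuple[str, list[str]]:
        sources = self.store.search(self.embedder.embed(question), self.k)
        prompt = "Context:\n" + "\n".join(sources) + f"\n\nQuestion: {question}\nAnswer:"
        return self.generator.generate(prompt), sources


# Toy stand-ins for illustration only; real ones would wrap an embedding model,
# a persistent vector DB, and a local LLM behind the same method names.
class LetterCountEmbedder:
    def embed(self, text: str) -> list[float]:
        lowered = text.lower()
        return [float(lowered.count(c)) for c in "abcdefghijklmnopqrstuvwxyz"]


class InMemoryStore:
    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str, vector: list[float]) -> None:
        self._items.append((text, vector))

    def search(self, vector: list[float], k: int) -> list[str]:
        # Nearest neighbours by squared Euclidean distance.
        def distance(item: tuple[str, list[float]]) -> float:
            return sum((a - b) ** 2 for a, b in zip(item[1], vector))
        return [text for text, _ in sorted(self._items, key=distance)[:k]]


class StubGenerator:
    def generate(self, prompt: str) -> str:
        return f"(stub answer from a {len(prompt)}-character prompt)"


qa = LocalDocQA(LetterCountEmbedder(), InMemoryStore(), StubGenerator(), k=1)
qa.ingest(["revenue grew", "offices opened"])
answer, sources = qa.ask("revenue grew?")
print(answer, sources)
```

Because `Protocol` uses structural typing, the stand-ins do not need to inherit from the interfaces. Any object with matching method names fits.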

Cornell Notes

The system described enables private “chat with your documents” without internet by running embeddings, retrieval, and generation locally. Documents are chunked, embedded with Sentence Transformers, stored in a local Chroma DB, and retrieved via similarity search when a question is asked; the retrieved chunks are then passed to an on-device MPT-30B model for grounded answers. This avoids common closed-API drawbacks such as data leakage risk, lack of customization, internet dependency, outages/overload, and vendor lock-in. The trade-off is speed: retrieval-augmented answers can take several minutes, and MPT-30B needs substantial hardware (at least 32GB RAM is recommended). Licensing matters too: the MPT chat variant is non-commercial, while base/instruct variants are commercially usable.

How does the local “chat with documents” pipeline keep answers grounded in the source text?

Why are closed hosted LLM setups considered risky or limiting for private data use?

What open-source components replace the closed parts of the typical architecture?

What practical steps are used to ingest documents and enable local Q&A?

What hardware and configuration choices affect speed and feasibility?

What licensing constraint is called out for the MPT model variants?

Review Questions

- What components in the described system run locally, and how does that change the privacy profile compared with API-based chat?

- How do “target source chunks” and question complexity influence latency in retrieval-augmented local chat?

- What licensing distinction is made between the MPT chat variant and the base/instruct variants, and why does it matter?

Key Points

1. A private document chatbot can be built by running embeddings, vector retrieval, and LLM generation entirely on local hardware rather than sending content to third-party APIs.

2. The retrieval-augmented approach chunks documents, embeds them, stores them in a local vector database, and injects the most similar chunks into the prompt for grounded answers.

3. Closed hosted LLM setups are flagged for privacy risk, limited customization, internet dependency, outages/overload, and vendor lock-in.

4. The open-source replacement stack uses Sentence Transformers for embeddings, Chroma DB for local storage, and MosaicML’s MPT-30B run via ggml for on-device generation.

5. MPT-30B requires substantial resources (at least 32GB RAM recommended) and can be slow when retrieval pulls many chunks; tuning retrieval scope is critical.

6. Licensing matters: the MPT chat variant is non-commercial, while base/instruct variants are described as commercially usable.