How To Do A Literature Review (STRESS-FREE!)

Based on Andy Stapleton's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

A stress-free literature review workflow hinges on using AI to triage the flood of papers—then doing the real academic work yourself. The core idea is to avoid reading everything ever published in a field by combining reference management, AI-powered filtering, and concept mapping, while still performing a meaningful subset of full-paper reading and a rigorous human editing pass. The payoff: a literature review that’s grounded in the right sources, structured coherently, and polished to academic standards without skipping the skills that make researchers effective.

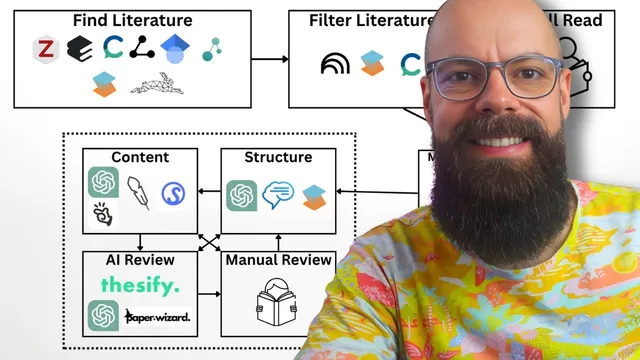

The process starts with building a literature library. A reference manager is treated as the backbone; Zotero is recommended because it integrates with many AI tools. From there, researchers gather papers through a mix of AI-assisted discovery platforms and traditional search. Elicit, Consensus, Litmaps, Connected Papers, SciSpace, and Research Rabbit are listed as ways to collect relevant studies, alongside Google Scholar, which is explicitly framed as a reliable “old-fashioned” option for keyword searches.

Once a sizable set of references is stored, AI shifts from discovery to filtering. The goal is sanity: narrowing the reading list to the most relevant work so the literature review can be anchored in the strongest evidence. SciSpace is used with relevance ranking (for example, selecting top papers based on a high relevance score) and with features that add structured “insights” columns derived from abstracts. Consensus is used to identify “key papers” and to surface claims and evidence associated with top contributors. NotebookLM is positioned as a synthesis layer: the researcher uploads references and prompts it to highlight key findings and the most important papers.
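As a rough illustration of the filtering step, the sketch below ranks a small library by relevance score and keeps only the papers above a cutoff. The `Paper` fields, the 0 to 1 score scale, and the 0.8 threshold are assumptions made for this example; they are not the export format or scoring method of SciSpace or any other tool named above.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    title: str
    relevance: float  # hypothetical score in [0, 1], e.g. copied by hand from a tool's ranking


def shortlist(papers, min_relevance=0.8):
    """Return papers at or above the cutoff, most relevant first."""
    kept = [p for p in papers if p.relevance >= min_relevance]
    return sorted(kept, key=lambda p: p.relevance, reverse=True)


# Placeholder entries, not real papers.
library = [
    Paper("Paper A", 0.91),
    Paper("Paper B", 0.42),
    Paper("Paper C", 0.85),
    Paper("Paper D", 0.77),
]

for paper in shortlist(library):
    print(f"{paper.relevance:.2f}  {paper.title}")
```

The cutoff is a judgment call: lowering `min_relevance` widens the reading list, raising it narrows the list.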

After filtering, the workflow insists on selective deep reading. Instead of attempting to read every paper, it recommends reading roughly 20–25% of the papers that surfaced during filtering, in full, to build genuine understanding of the field. From there, the literature review becomes more than a summary list: NotebookLM mapping features and Consensus matrices help organize concepts, themes, and gaps. NotebookLM can generate mind maps that resemble a draft literature review outline, including potential headings and subheadings. Consensus provides a matrix view of how many studies exist in each application area, which makes underexplored regions (sometimes labeled “gap”) visible.
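The two ideas in this step, the 20–25% reading quota and the gap matrix, can be sketched with a little arithmetic. The function names, the integer-percentage parameters, and the (theme, application) tagging scheme are assumptions for illustration only; this is not how Consensus builds its matrix.

```python
import math
from collections import Counter


def deep_reading_quota(shortlist_size, low_pct=20, high_pct=25):
    """Return the (min, max) number of papers to read in full, rounded up."""
    low = math.ceil(shortlist_size * low_pct / 100)
    high = math.ceil(shortlist_size * high_pct / 100)
    return low, high


def find_gaps(tagged_papers, themes, applications, max_count=0):
    """List (theme, application) cells that hold at most max_count papers."""
    counts = Counter(tagged_papers)  # missing cells count as 0
    return [(t, a) for t in themes for a in applications if counts[(t, a)] <= max_count]


# Hypothetical tags: one (theme, application) pair per paper.
tags = [
    ("theme 1", "application X"),
    ("theme 1", "application X"),
    ("theme 1", "application Y"),
    ("theme 2", "application X"),
]

print(deep_reading_quota(200))
print(find_gaps(tags, ["theme 1", "theme 2"], ["application X", "application Y"]))
```

For a filtered set of 200 papers the quota works out to 40 to 50 full reads, and the only empty cell in the toy matrix is theme 2 with application Y.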

The next stage is structure, where AI helps translate concept maps and gap analysis into an outline. ChatGPT (or another large language model) is suggested for generating a candidate structure based on the headings and topics the researcher wants to cover. Thesis AI is offered as an example of a tool that can produce a detailed, referenced draft for structural inspiration, but not for copy-and-paste submission. SciSpace’s agent-based “create a literature review” function is treated similarly: useful for generating options, not for replacing the researcher’s own academic synthesis.

Content generation is where boundaries tighten. The workflow warns against fully automated writing tools (Manus and Genspark are mentioned as examples) for university assignments, emphasizing that narrative control matters. ChatGPT is framed as a controllable option, while Jenni AI is described as a “gray zone” tool that suggests text as you type, potentially reducing your thinking effort; whether it is acceptable depends on university rules. Sourcely is recommended for source discovery when new claims or questions arise during drafting.

Finally, the draft undergoes review in two passes: AI review and manual review. AI review tools (ChatGPT, Thesify, Paper Wizard) are used to check alignment with learning outcomes, thesis clarity, purpose, and evidence usage, while the researcher retains the right to disagree with AI feedback. The last step is human rigor: read every sentence, ideally with external feedback from someone in the field, then proofread carefully. A specific proofreading tactic is suggested, reading paragraphs backwards, to catch typos and logic slips. The workflow ends with a reminder that future self-critique is normal and even useful for academic growth, as long as the current draft meets standards and reflects real understanding.

Cornell Notes

The workflow for a literature review uses AI to reduce the reading burden while preserving academic skills. It begins by collecting papers into Zotero, using discovery tools (e.g., Google Scholar, Elicit, Consensus, Litmaps, Connected Papers, SciSpace, Research Rabbit) to build a reference library. AI then filters that library using relevance ranking and synthesized “key papers” outputs (SciSpace, Consensus, NotebookLM), after which the researcher reads about 20–25% of the most relevant papers in full. NotebookLM mapping and Consensus matrices help organize concepts, themes, and research gaps into an outline. The draft is then structured with an LLM, written with human control, and polished through AI checks plus a mandatory manual review (including backward paragraph proofreading).

How does the workflow prevent a literature review from turning into an impossible “read everything” project?

What’s the role of full-paper reading if AI is doing so much triage?

How do NotebookLM and Consensus help turn scattered papers into a structured literature review?

Why does the workflow recommend using AI for structure but not for final content submission?

What does “review” look like in this workflow, and how do AI and humans divide the labor?

How does the workflow handle new questions that appear while writing?

Review Questions

- If a researcher has 200 papers after discovery, what concrete steps in this workflow reduce that to a manageable reading set, and which tools are used at each step?

- How do the mind map output from NotebookLM and the matrix output from Consensus complement each other when identifying research gaps?

- What are the differences between AI review and manual review in this workflow, and why is backward paragraph reading recommended?

Key Points

1. Use Zotero as the reference manager so AI tools can connect to a single, organized library of papers.

2. Combine modern discovery tools (Elicit, Consensus, Litmaps, Connected Papers, SciSpace, Research Rabbit) with Google Scholar keyword searches to build a strong initial set.

3. Filter aggressively with AI using relevance ranking and “key papers” identification so the literature review is grounded in the most important studies.

4. Read only about 20–25% of the filtered papers in full to build real understanding while avoiding exhaustive coverage.

5. Use NotebookLM mapping and Consensus matrices to convert papers into concepts, headings, and visible research gaps.

6. Generate an outline with an LLM, but keep narrative control during writing; avoid fully automated drafting for university submissions.

7. Finish with AI-assisted checks plus a mandatory manual review, including backward paragraph proofreading and ideally feedback from a senior researcher.