Hyperparameter Tuning (7) - Infrastructure and Tooling - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Define a concrete hyperparameter search space (ranges and discrete choices) rather than guessing a single architecture or training setup.

Briefing

Hyperparameter tuning is often where deep-learning experiments stall: teams can guess a rough model size, but the real question is how to search the “in-between” space—learning rates, dropout rates, and layer counts—without manually running every combination. The core need is software that can intelligently explore a defined parameter range, sampling values like learning rates from 0.0001 to 0.1 and layer counts such as 128, 256, or 512, then using search strategies (random search or more advanced methods) to find better-performing configurations.

Hyperopt is highlighted as a long-standing Python package for hyperparameter optimization across many machine learning model types. A practical example uses a wrapper approach for Keras: when building a model, hyperparameters such as dropout can be declared as a distribution (e.g., uniform between 0 and 1). Once the model is instantiated through the wrapper, Hyperopt samples dropout rates and runs experiments across the specified search space for all declared parameters. The workflow is straightforward: define the ranges, let the optimizer sample, and evaluate each trial’s performance.

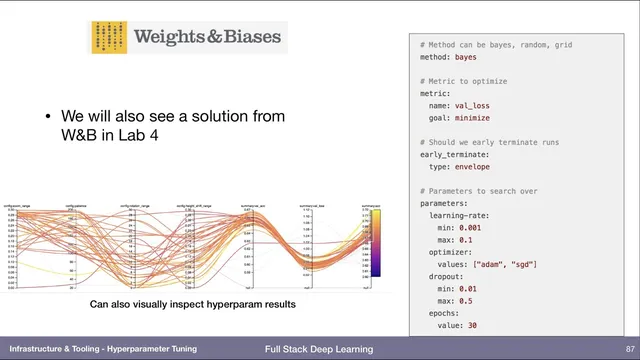

Beyond local tuning, the transcript points to hyperparameter tuning as a service. A startup called SigOpt is described as running the optimization loop externally: the user trains on their own hardware, but SigOpt receives the parameter set and the resulting metric, then decides what to try next. It can also terminate weak experiments early (“kill this experiment”) when results suggest they won’t beat better-performing runs, creating a tight feedback loop between training and the optimization service.

For distributed and research-grade tuning, Ray is presented as a key ecosystem, with Ray Tune providing implementations of modern algorithms. The transcript emphasizes that these methods can allocate compute toward promising trials. Hyperband is singled out as a technique that stops underperforming experiments after they show poor early progress, then reallocates resources to configurations that look more likely to succeed. Population Based Training is also mentioned as another approach within Ray Tune.

The discussion then shifts to adjacent automation: architecture search, sometimes grouped under AutoML, aims to find not only the right parameters but also the right model structure. The transcript notes that full architecture search is usually too expensive for most teams, except at large-scale organizations, though limited structural searches—like varying the width or depth of parts of a network—can be treated as hyperparameter search. The conversation frames architecture search as potentially resembling genetic algorithms that manipulate the structure of a computational graph, but it also admits that no widely used framework for that exact style is mentioned in this material.

Finally, the transcript briefly flags visualization as a separate concern, particularly tools for understanding data bias and data distributions, suggesting it belongs under data management rather than tuning infrastructure. Overall, the throughline is clear: effective tuning depends on tooling that defines search spaces, runs trials efficiently, and—crucially—cuts off wasteful experiments early while steering compute toward better candidates.

Cornell Notes

Hyperparameter tuning becomes practical when software can search a defined space of model settings—like learning rates, dropout, and layer counts—using strategies such as random search or more structured optimizers. Hyperopt provides a Python-based way to declare hyperparameters (including Keras-friendly wrappers) and sample values from distributions to run many trials. For scalable or managed workflows, SigOpt offers tuning as a service, coordinating trial selection and early stopping based on observed metrics. Ray Tune (built on Ray) implements state-of-the-art tuning algorithms, including Hyperband, which reallocates compute by stopping experiments that look unlikely to win. Architecture search (AutoML) overlaps with tuning but is often too expensive unless the search is limited to parts of the architecture.

How does Hyperopt turn a Keras model into a tunable search problem?

What does “tuning as a service” change about the workflow?

Why does Hyperband matter for hyperparameter tuning efficiency?

How does Ray Tune fit into the tuning ecosystem?

When does architecture search overlap with hyperparameter tuning?

Review Questions

- What kinds of hyperparameters are practical to define as search spaces (e.g., dropout, learning rate, layer count), and how would you represent them as distributions?

- Compare Hyperopt, SigOpt, and Ray Tune in terms of where the optimization logic runs and how early stopping is handled.

- Under what conditions can architecture search be approximated as hyperparameter tuning, and what makes full architecture search costly?

Key Points

- 1

Define a concrete hyperparameter search space (ranges and discrete choices) rather than guessing a single architecture or training setup.

- 2

Use Hyperopt with Keras wrappers to declare hyperparameters as distributions and automatically sample values across trials.

- 3

Consider tuning as a service (e.g., SigOpt) when you want an external system to choose next trials and stop weak experiments early.

- 4

Leverage Ray Tune for distributed hyperparameter optimization with algorithms like Hyperband and population-based training.

- 5

Use Hyperband-style early stopping to avoid wasting compute on configurations that show poor early performance.

- 6

Treat limited architecture variations (like layer width/depth) as hyperparameter search when full architecture search is too expensive.

- 7

Separate visualization needs—such as bias and data distribution checks—from tuning infrastructure, and handle them under data management.