I Mapped Where Every AI Agent Actually Sits. Most People Pick Wrong.

Based on AI News & Strategy Daily | Nate B Jones's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

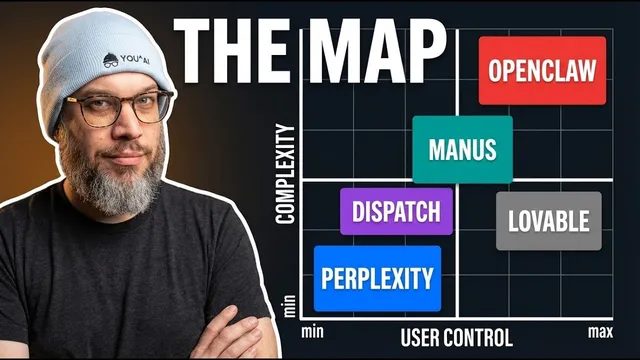

Evaluate agent products using three axes: where the agent runs, who orchestrates intelligence, and how users interact with it.

Briefing

OpenClaw didn’t just introduce an AI agent framework; it forced every major company to stake out its own strategy for where agents run, who orchestrates them, and how users interact with them. That divergence matters because it turns today’s “agent” announcements into a set of tradeoffs: data sovereignty versus convenience, model control versus managed quality, and flexible integrations versus a guided, safer workflow. Once those bets are understood, new agent products stop feeling like a confusing blur of forks and become predictable choices for specific kinds of users.

The core problem is that OpenClaw’s category-defining success triggered an ecosystem explosion. Alongside corporate spin-offs, open-source forks attacked perceived weaknesses in the original implementation: rewriting it in Rust (including versions aimed at enterprise deployments), stripping it down to smaller codebases, or pitching alternative “agent operating systems.” The result is a crowd of variants that look alike on the surface and blur together for non-experts. The practical takeaway: instead of reacting to each new release as “another OpenClaw” or “another security disaster,” buyers can evaluate each option by examining the architectural bets underneath.

Three axes provide that clarity. First, where the agent runs—local, cloud, or hybrid—directly shapes privacy posture, security surface area, and accountability when something goes wrong. Second, who orchestrates the intelligence—single-model, multimodel routing, or model-agnostic plug-ins—determines cost, output quality, and whether users feel locked into a vendor. Third, the interface contract—messaging app, desktop app, or phone-based control—defines the day-to-day experience and what behavior the product assumes from the user.

Profiling OpenClaw itself reveals the “sovereignty” bet. It runs locally with users’ own API keys and data, lets users swap in different LLMs and modular components, and is designed to interoperate with messaging platforms like Telegram, WhatsApp, Signal, and Slack. That flexibility is exactly why it appeals to technical power users who want maximum control over infrastructure and model choice. It is also why security risk becomes part of the bargain: researchers cited over 30,000 publicly exposed OpenClaw instances with weak or missing authentication, and the Skills registry suffered a supply-chain attack in which more than 800 compromised skills were documented. OpenClaw’s target audience is therefore clear: users willing to take on that security and operational complexity.

Perplexity Computer represents the opposite bet: delegation. It runs in the cloud inside a secure container, decomposes user goals into subtasks, and executes them remotely; Perplexity also claims it can handle long-running tasks. The tradeoff is straightforward: users pay (about $200/month), must trust Perplexity with their data, and accept that Perplexity controls orchestration and model selection.

Meta’s Manus is framed as a distribution play. It aims to capture attention at scale inside the Meta ecosystem, likely using a mix of Meta’s own models and other models, while postponing monetization.

Anthropic’s Dispatch is positioned as a safety-and-brand reinforcement strategy. It enables phone-to-desktop control of Claude through a secure, single-threaded workflow, making it easier for non-technical users to get productive results without setting up OpenClaw.

Even Lovable, once the most imitated “vibe coding” product, shows how the market is compressing toward agent interfaces. It moved toward “agent-first” execution after recognizing that users now want agents to kick off complex workflows, not just respond to human prompts.

The bigger forecast is that 2026 will reward products that either go deep with unique capabilities or go broad as a default delegation layer; products stuck in the middle risk disappearing. For buyers, the method stays the same: evaluate each agent by where it runs, who picks the model, and what interface it assumes, then choose the bet that matches the tradeoffs they are willing to make.
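The three-question evaluation method can be sketched as a small data model. This is a minimal illustration, not a tool from the video: the enum names, the `AgentProfile` type, and the `matching` helper are hypothetical, and the axis values assigned to each product are an editor's reading of the briefing. Perplexity Computer's orchestration is labeled multimodel routing on the assumption that "Perplexity controls orchestration and model selection" means routing across models; any axis the briefing does not state is left as `None`.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Runtime(Enum):
    """Axis 1: where the agent runs."""
    LOCAL = "local"
    CLOUD = "cloud"
    HYBRID = "hybrid"


class Orchestration(Enum):
    """Axis 2: who orchestrates the intelligence."""
    SINGLE_MODEL = "single-model"
    MULTIMODEL_ROUTING = "multimodel routing"
    PLUGIN_MODELS = "model-agnostic plug-ins"


class Interface(Enum):
    """Axis 3: the interface contract the product assumes."""
    MESSAGING = "messaging app"
    DESKTOP = "desktop app"
    PHONE = "phone-based control"


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical record of one product's position on the three axes.

    None means the briefing does not state a value for that axis.
    """
    name: str
    runtime: Optional[Runtime]
    orchestration: Optional[Orchestration]
    interface: Optional[Interface]


# Illustrative profiles: an editor's reading of the briefing, not vendor specs.
PROFILES = [
    AgentProfile("OpenClaw", Runtime.LOCAL, Orchestration.PLUGIN_MODELS, Interface.MESSAGING),
    AgentProfile("Perplexity Computer", Runtime.CLOUD, Orchestration.MULTIMODEL_ROUTING, None),
    AgentProfile("Dispatch", None, Orchestration.SINGLE_MODEL, Interface.PHONE),
]


def matching(
    profiles: list[AgentProfile],
    runtime: Optional[Runtime] = None,
    orchestration: Optional[Orchestration] = None,
    interface: Optional[Interface] = None,
) -> list[str]:
    """Return the names of products whose axes satisfy every stated constraint.

    A constraint of None accepts any value. A product whose axis is unknown
    (None) never satisfies an explicit constraint on that axis.
    """

    def ok(value, wanted) -> bool:
        return wanted is None or value == wanted

    return [
        p.name
        for p in profiles
        if ok(p.runtime, runtime)
        and ok(p.orchestration, orchestration)
        and ok(p.interface, interface)
    ]


if __name__ == "__main__":
    # A power user who insists on local execution and their own model choice.
    print(matching(PROFILES, runtime=Runtime.LOCAL, orchestration=Orchestration.PLUGIN_MODELS))
    # → ['OpenClaw']
    # A non-technical user who wants phone-based control.
    print(matching(PROFILES, interface=Interface.PHONE))
    # → ['Dispatch']
```

Refusing to match an unknown axis keeps the sketch from inventing facts about a product: a buyer who requires a specific runtime only sees products whose runtime is actually known.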

Cornell Notes

OpenClaw’s success split the agent market into distinct strategies rather than producing a single “best” product. The transcript proposes three evaluation axes: where the agent runs (local, cloud, or hybrid), who orchestrates intelligence (single model, multimodel routing, or plug-in model choice), and the interface contract (messaging app, desktop app, or phone-based control). OpenClaw exemplifies a sovereignty bet: it runs locally with users’ data and supports modular swapping of LLMs and integrations, but it also brings a larger security burden. Perplexity Computer flips to delegation by running in the cloud and handling orchestration, trading user control for managed safety and convenience. Anthropic’s Dispatch and Meta’s Manus illustrate two other bets, safety/brand reinforcement and distribution inside the Meta ecosystem respectively, showing how different companies optimize for different user needs.

Why does the OpenClaw ecosystem feel confusing, and what framework helps cut through it?

What tradeoff does OpenClaw make by running locally, and what security concerns come with that?

How does Perplexity Computer’s “delegation” strategy differ from OpenClaw’s sovereignty approach?

What does Meta’s Manus represent in the sovereignty/delegation landscape, and why does distribution matter?

How does Anthropic’s Dispatch use its positioning to sell safety, and what limitation does that choice introduce?

Why does Lovable’s shift toward “agent-first” execution matter for the broader market?

Review Questions

- If an agent product runs locally versus in the cloud, which of the three axes changes most directly, and what user risk or benefit follows from that change?

- How would you compare two agent systems that both claim “control” but differ in model orchestration (plug-in model choice vs multimodel routing vs vendor-chosen models)?

- Which interface contract would you expect to be easiest for non-technical users, and what assumptions about user behavior does that interface likely require?

Key Points

1. Evaluate agent products using three axes: where the agent runs, who orchestrates intelligence, and how users interact with it.
2. OpenClaw’s local, modular design maximizes sovereignty and interoperability but increases the user’s security and operational burden.
3. Perplexity Computer’s cloud delegation reduces the user’s security workload, but it requires an ongoing subscription and trust in Perplexity’s orchestration and model choices.
4. Meta’s Manus is positioned less as a sovereignty tool and more as a distribution strategy to keep agent-driven activity inside the Meta ecosystem.
5. Anthropic’s Dispatch leans on safety and brand reinforcement by making phone-to-desktop control simple, but it offers less flexibility for advanced multimodel orchestration.
6. The market is compressing toward conversational agent interfaces; products that are neither uniquely deep nor broadly delegating face a higher risk of becoming obsolete.
7. In 2026, which bet wins will depend on which group of users values its specific tradeoff (delegation, safety, sovereignty, or distribution) most strongly.