I Wrote Research Papers Faster Using This 4-Step System (Anyone Can Do It)

Based on Andy Stapleton's video on YouTube. If you like this content, support the original creator by watching, liking, and subscribing.

Briefing

A strong research paper starts with a research question that is clear, concise, open-ended, and—crucially—answerable within a specific time window. The process begins by shaping that question into its “purest” form: narrow enough to stay focused, broad enough to allow meaningful exploration, and supported by the existence of credible, accessible sources. Questions that can be answered with a single fact, that invite yes/no responses, or that are impossible to tackle within a semester (or too small to sustain a multi-year project) are treated as common failure points. The goal is to land in a “Goldilocks zone” where there’s enough literature to investigate a gap, but not so much scope that the work becomes unmanageable.

From there, research splits into two practical tracks. In many undergraduate assignments, the work becomes a structured synthesis (essentially a meta-analysis of existing studies), where the “new” contribution is the framing and interpretation built from the literature. Projects aiming at novel results also generate new information through field-specific methods such as lab experiments, sample creation, testing, and data reporting.

Regardless of track, the workflow follows a familiar pattern: find literature, filter it, read a targeted subset (about 20–25% of the most relevant items), and map concepts and gaps. To speed this up, the system relies on a citation manager (Zotero) and discovery tools (including Google Scholar, Google Scholar Labs, SciSpace, Research Rabbit, Connected Papers, and others). It also uses AI-assisted filtering and sense-making tools such as NotebookLM. The research question is queried against a body of papers, and a mind map is then generated from a curated set (up to 50 papers in the described setup, or more with a paid tier).
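The “read a targeted subset” step can be illustrated with a short Python sketch. It is not part of the original system. It assumes every paper in the library already carries a relevance score (the hypothetical `relevance` field, which might come from manual screening or from an AI tool's ranking), and it keeps roughly the top 25%. The `Paper` class and the `select_reading_list` function are hypothetical names chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Paper:
    title: str
    relevance: float  # hypothetical score (0 to 1) assigned during screening


def select_reading_list(papers: list[Paper], fraction: float = 0.25) -> list[Paper]:
    """Return the most relevant `fraction` of papers, rounded up (at least one if any exist)."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not papers:
        return []
    k = max(1, math.ceil(len(papers) * fraction))
    ranked = sorted(papers, key=lambda p: p.relevance, reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    # Placeholder library: 40 papers with evenly spaced scores.
    library = [Paper(f"Paper {i}", relevance=i / 40) for i in range(40)]
    shortlist = select_reading_list(library, fraction=0.25)
    print(len(shortlist))      # 10
    print(shortlist[0].title)  # Paper 39
```

Rounding up with `math.ceil` means that even a small library yields at least one paper to read. Setting `fraction=0.2` gives the lower end of the 20–25% range.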

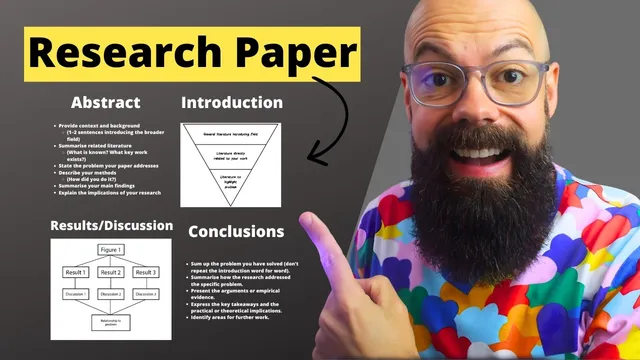

Once the question and evidence are locked in, writing and citing follow a structured approach: the results and discussion are drafted first, and the abstract is left until last. The core technique is to build the paper claim by claim (or figure by figure). Each figure or claim is treated as a “unit” that is explained, has its implications spelled out, and is then tied directly back to the research question. Repeating this loop (figure/claim → meaning → implications → relationship to the problem) creates a coherent narrative without losing sight of the central aim.

The introduction then uses an “inverse triangle” structure. It starts broad with general background, narrows to the literature closest to the specific question, and ends at the pointy end, where the gap and problem become explicit. Conclusions and abstracts come after. The conclusion is framed as a sentence-level checklist: state the problem that was solved, summarize how it was addressed, present the evidence and key takeaways as specifically as possible, articulate the implications, and close with limitations and directions for further work. The abstract is built from a sentence template as well: context, what’s known, the gap, how the work was done, and the main findings and implications.
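The abstract template works like a fill-in-the-blanks checklist. The sketch below is a hypothetical Python helper, not a tool from the original video. It assembles an abstract in template order and refuses to proceed if any slot is empty. The slot names are assumptions, each mapped to one of the five parts of the template described above.

```python
# Hypothetical helper: slot names are assumptions that mirror the abstract
# template (context, what's known, gap, method, findings and implications).
ABSTRACT_SLOTS = ("context", "known", "gap", "method", "findings")


def build_abstract(parts: dict[str, str]) -> str:
    """Join the abstract sentences in template order; fail if any slot is empty."""
    missing = [slot for slot in ABSTRACT_SLOTS if not parts.get(slot, "").strip()]
    if missing:
        raise ValueError(f"abstract is missing: {', '.join(missing)}")
    return " ".join(parts[slot].strip() for slot in ABSTRACT_SLOTS)


if __name__ == "__main__":
    # Bracketed placeholders stand in for real sentences.
    draft = {
        "context": "[Why the field matters.]",
        "known": "[What existing studies have shown.]",
        "gap": "[What remains unanswered.]",
        "method": "[How this work addressed the gap.]",
        "findings": "[Main results and their implications.]",
    }
    print(build_abstract(draft))
```

The same pattern could be applied to the conclusion checklist by swapping in its five elements.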

Finally, the system insists on review as a separate step: an AI-assisted review pass (examples include Thesify, ChatGPT, Claude, Perplexity, Gemini, and Paper Wizard) to flag missing citations and unsupported claims, followed by manual review for logic and flow, and—when possible—peer review to catch blind spots that close reading misses. The four-step system is straightforward: research question → research → write and cite → review, with AI tools used to reduce friction while keeping the argument grounded in evidence.

Cornell Notes

The four-step writing system centers on a research question that is clear, concise, open-ended, and realistically answerable within the project’s time limits. Research then proceeds in two modes: synthesizing existing literature (common in undergraduate work) or generating new results through experiments and data collection (common in novel projects). Writing and citing are organized around “units” of evidence—figures or claims—explained and interpreted, then repeatedly tied back to the research question; the introduction follows an inverse-triangle structure from broad background to a specific gap. Conclusions and abstracts use structured sentence checklists, and the final quality pass combines AI review (for missing evidence/citations) with manual and peer review (for logic, flow, and overlooked errors).

What makes a research question “good” in this system, and why does scope matter so much?

How does the research stage differ between undergraduate papers and projects aiming for novel results?

Which tools are used to speed up literature discovery and filtering, and what do they do?

What’s the recommended structure for writing the results/discussion sections?

How should the introduction narrow from general background to the specific gap?

What does a strong review workflow look like here?

Review Questions

- What characteristics should a research question have to avoid being unanswerable, unmanageable, or trivial?

- How does the “figure/claim → implications → tie back to the research question” loop improve coherence in results and discussion?

- Why combine AI review with manual and peer review rather than relying on one method alone?

Key Points

1. Start by crafting a research question that is clear, concise, open-ended, and narrow enough to fit the project timeline while still being broad enough to sustain meaningful research.

2. Use the research stage to either synthesize existing literature (often for undergraduate meta-analyses) or generate new results through experiments, testing, and data reporting (for novel projects).

3. Speed up literature discovery and organization with tools like Zotero for citations and platforms such as Google Scholar, SciSpace, Research Rabbit, and Connected Papers for finding relevant work.

4. Filter and interpret sources by querying the research question in tools like NotebookLM, then map concepts and gaps using mind maps generated from a curated set of papers.

5. Write results and discussion by building the paper unit by unit: each figure or claim gets explained, its implications stated, and its relevance tied back to the research question.

6. Structure the introduction as an inverse triangle: broad field background, then narrower literature directly related to the question, ending with the specific gap or problem.

7. Treat review as a separate step: run AI checks for missing evidence and citations, then do a manual review of logic and flow, and seek peer feedback when possible.